TL;DR —

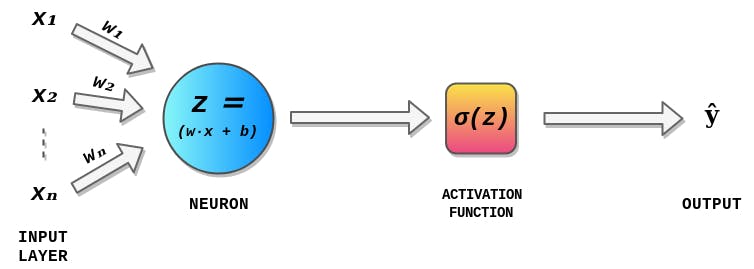

The math behind neural networks and deep learning is still a mystery to some of us. Understanding it can help us see what’s happening inside a neural network. I decided to start from scratch and derive the methodology and math. Backpropagation, short for backward propagation of errors, is the algorithm for computing the gradient of the loss function with respect to the weights. To find the best weights and bias for our perceptron, we need to know how the cost function changes as they change. This is done with the help of gradients, which measure how one quantity changes in relation to another.
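To make the idea of a gradient concrete, here is a minimal sketch for a single perceptron. The sigmoid activation, the squared-error loss, and the sample numbers are all assumptions chosen for illustration, not details taken from the story. The code computes the gradient of the loss with respect to each weight and the bias using the chain rule, then cross-checks one of the results against a finite-difference estimate.

```python
import math

def sigmoid(z):
    # Assumed activation function for this sketch.
    return 1.0 / (1.0 + math.exp(-z))

def forward(w, b, x):
    # Weighted sum of the inputs plus bias, passed through the activation.
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return sigmoid(z)

def loss(w, b, x, y):
    # Assumed cost: half the squared error for one training example.
    return 0.5 * (forward(w, b, x) - y) ** 2

def gradients(w, b, x, y):
    # Chain rule: dL/dw_i = dL/da * da/dz * dz/dw_i
    a = forward(w, b, x)
    delta = (a - y) * a * (1.0 - a)      # dL/dz
    grad_w = [delta * xi for xi in x]    # dz/dw_i = x_i
    grad_b = delta                       # dz/db = 1
    return grad_w, grad_b

def numerical_grad_w(w, b, x, y, i, eps=1e-6):
    # Central finite difference, used only to sanity-check the chain rule.
    w_plus = list(w)
    w_plus[i] += eps
    w_minus = list(w)
    w_minus[i] -= eps
    return (loss(w_plus, b, x, y) - loss(w_minus, b, x, y)) / (2 * eps)

# Hypothetical starting weights, bias, input, and target.
w, b = [0.5, -0.3], 0.1
x, y = [1.0, 2.0], 1.0

grad_w, grad_b = gradients(w, b, x, y)

# One gradient-descent step with an assumed learning rate.
lr = 0.5
w_new = [wi - lr * gi for wi, gi in zip(w, grad_w)]
b_new = b - lr * grad_b

print("analytic gradient:", grad_w, grad_b)
print("numerical check  :", numerical_grad_w(w, b, x, y, 0))
print("loss before/after:", loss(w, b, x, y), loss(w_new, b_new, x, y))
```

Moving the weights and bias a small step against the gradient should lower the loss, which is the basic update that backpropagation makes possible in larger networks.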


Written by

@dasaradhsk

ML Enthusiast | Mechatronics Engineering Student

