There was no outage.

No alerts.

No failed pipelines.

Everything “worked.”

But our BI environment was slowing down, getting more expensive, and becoming impossible to budget for.

Not because the warehouse was failing.

Because the design was wrong.

AI will expose that at scale.

The Architecture That Punished Curiosity.

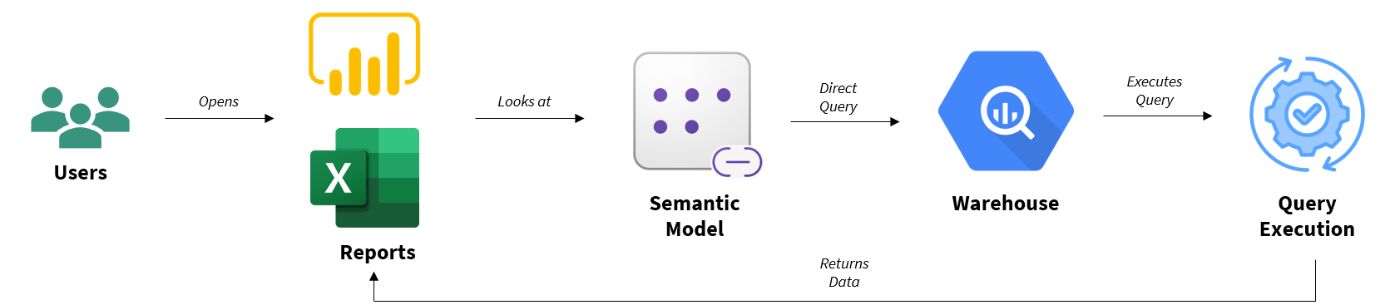

For years, the default in modern BI orgs was “DirectQuery”.

Users → Reports → Semantic Model → Warehouse → Execution → Results.

Every click triggered compute.

It felt good. It felt “real time.”

But it tied warehouse cost directly to user behavior.

An analyst expanding a matrix hierarchy triggered dozens of executions.

Ten analysts at once? Compute storms.

The more the dashboards were used, the more expensive they became.
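A back-of-the-envelope sketch makes the coupling concrete. The numbers below (interactions per analyst, queries per interaction) are illustrative assumptions, not measurements from our environment. The point is only that under DirectQuery every term multiplies.

```python
def directquery_queries(analysts: int, interactions_per_analyst: int,
                        queries_per_interaction: int) -> int:
    """Warehouse queries under DirectQuery: every interaction hits the warehouse.

    All inputs are hypothetical; real counts depend on report and model design.
    """
    return analysts * interactions_per_analyst * queries_per_interaction


# One analyst expanding a matrix hierarchy: assume 20 expansions, 3 queries each.
one_analyst = directquery_queries(1, 20, 3)
# Ten analysts doing the same thing at the same time.
ten_analysts = directquery_queries(10, 20, 3)
print(one_analyst, ten_analysts)  # 60 600
```

Double the users or the interactions and the warehouse load doubles with them.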

This is not scale.

This is fragility masquerading as flexibility.

As Gartner has repeatedly noted in cloud cost studies, the least stable analytics environments are those where backend compute cost scales with ungoverned user behavior.

“DirectQuery” scales with curiosity.

This was manageable when the users were human.

Now enter AI.

AI Doesn’t Click. It Probes.

Here’s the thing most teams get wrong: AI does not behave like a human analyst.

| A human | An AI |
|---|---|
| Asks a question | Creates variations |

If “DirectQuery” punishes human curiosity, it’s fatal for machine curiosity.

You don’t get five queries per question. You get forty or four hundred.
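To see where forty or four hundred comes from, picture an assistant that answers one question by trying variations across a few axes. The axes and values below are hypothetical, and real assistants do not always enumerate exhaustively; this is the worst case. The query count is simply the product of the options on each axis.

```python
from itertools import product

# Hypothetical axes an AI assistant might vary while exploring one question.
measures = ["revenue", "margin"]
dimensions = ["region", "product", "channel", "customer_segment"]
time_grains = ["day", "week", "month", "quarter", "year"]
filters = ["all", "top_10", "bottom_10", "new", "returning",
           "last_30_days", "last_90_days", "ytd", "promo", "non_promo"]


def ai_variations(*axes):
    """Enumerate every combination an exhaustive probe would query."""
    return list(product(*axes))


human_queries = 5  # a human asks, checks a few views, and stops
ai_queries = len(ai_variations(measures, dimensions, time_grains))
ai_queries_with_filters = len(ai_variations(measures, dimensions, time_grains, filters))
print(human_queries, ai_queries, ai_queries_with_filters)  # 5 40 400
```

Under DirectQuery, each of those combinations is a separate warehouse execution.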

AI multiplies whatever architectural behavior already exists.

Research published in MIT Sloan Management Review has noted that AI systems amplify existing organizational structures. Strong governance thrives. Weak architecture crashes.

AI is not the risk. Your execution model is.

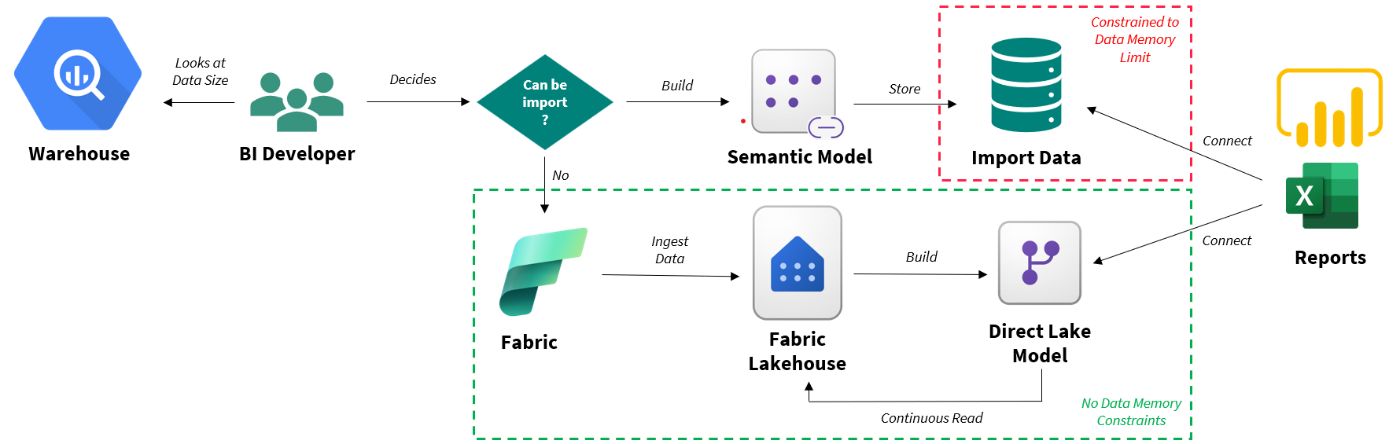

The First Discipline: Import Mode.

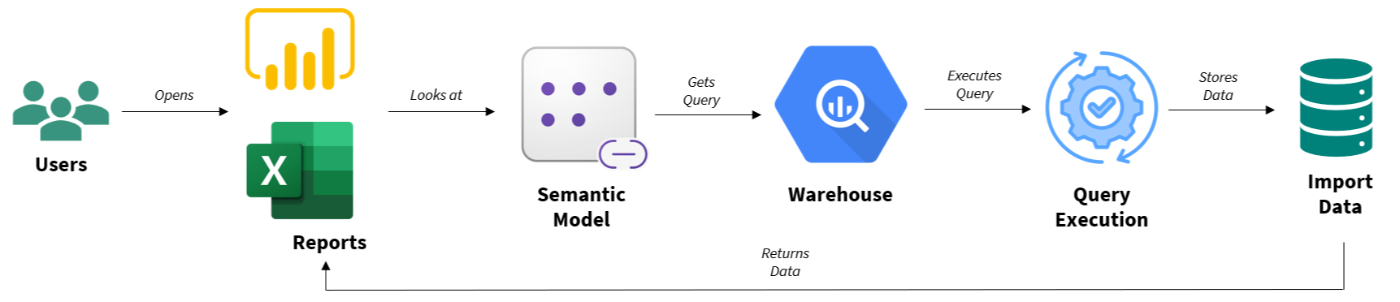

Import mode wasn’t about speed.

It was about control.

Data comes in. It’s stored in memory. It’s refreshed on purpose. It’s queried locally.

Warehouse compute happens per refresh.

Not per click.
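The billing relationship can be sketched in a few lines. This is a toy model with hypothetical class names, not how the Power BI engine stores data; it only shows where compute is spent: the warehouse is touched when the model refreshes, and every click after that is answered from the in-memory copy.

```python
class Warehouse:
    """Stand-in for a cloud warehouse that bills per executed query (hypothetical)."""

    def __init__(self, rows):
        self._rows = rows
        self.executions = 0

    def execute(self):
        self.executions += 1
        return list(self._rows)


class ImportModel:
    """Sketch of import-mode semantics: copy on refresh, answer from memory."""

    def __init__(self, warehouse):
        self._warehouse = warehouse
        self._snapshot = []

    def refresh(self):
        # The only place warehouse compute is spent.
        self._snapshot = self._warehouse.execute()

    def total(self, key):
        # Served from the in-memory snapshot; no warehouse call.
        return sum(row[key] for row in self._snapshot)


wh = Warehouse([{"sales": 10}, {"sales": 32}])
model = ImportModel(wh)
model.refresh()
for _ in range(1000):  # a thousand clicks
    model.total("sales")
print(model.total("sales"), wh.executions)  # 42 1
```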

This is when a principle started to surface:

Decision latency, not technical capability, should inform the architecture.

If a decision is made once per week, you don’t need to execute per click.

If a reviewer does their work once a day, the architecture does not need to be live.
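The principle can be written down as a rule of thumb. The thresholds and labels below are hypothetical policy choices for illustration, not guidance from any vendor: the slower the decision, the less live the data needs to be.

```python
from datetime import timedelta


def refresh_policy(decision_latency: timedelta) -> str:
    """Map how often a decision is made to how fresh its data must be.

    The thresholds are illustrative assumptions; tune them to your own decisions.
    """
    if decision_latency < timedelta(minutes=15):
        return "live"  # rare: justify the per-query cost explicitly
    if decision_latency < timedelta(days=1):
        return "hourly"
    if decision_latency < timedelta(weeks=1):
        return "daily"
    return "weekly"


print(refresh_policy(timedelta(weeks=1)))  # weekly decision: "weekly"
print(refresh_policy(timedelta(days=1)))   # daily review: "daily"
```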

- Import mode stabilized cost.

- Import mode stabilized performance.

- Import mode restored predictability.

AI fits much better here.

Because AI now explores governed, bounded, intentional data.

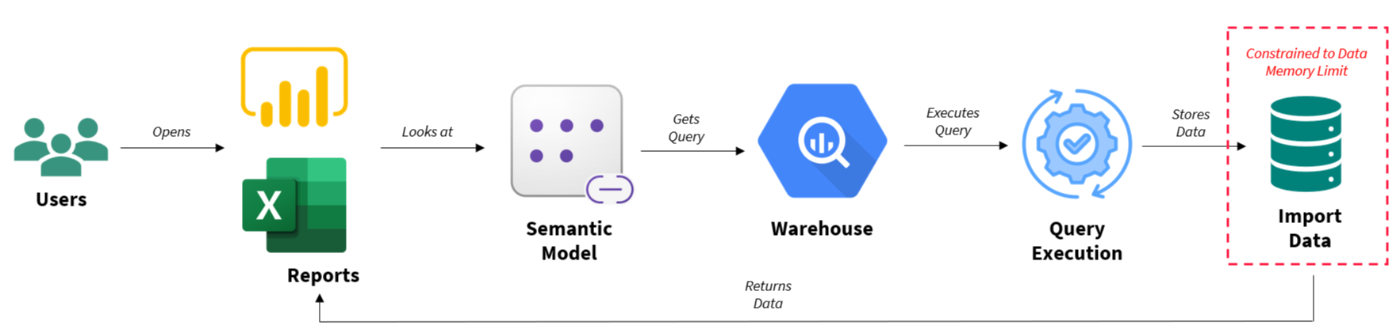

Then scale hit.

The Import Data Size Wall.

Every organization will reach this point.

- Memory limits

- Longer refreshes

- Transaction-rate demands

Leadership insists:

“We want transaction-level access. We want to layer AI on top.”

This is the fork in the road.

Most teams flail and revert to “DirectQuery”.

They give up discipline.

They stop decoupling cost from behavior.

Now they pass that volatility on to AI.

This is not transformation.

This is architectural regression.

The Substrate Shift: Direct Lake.

With Microsoft Fabric, Direct Lake switched the execution substrate.

Instead of running a warehouse query per click, or copying the entire dataset into the model on every refresh, Direct Lake reads Delta/Parquet files directly from the lakehouse and loads only the columns a query needs into memory.

No warehouse query per click.

No import-style data size wall (though capacity guardrails still apply).

No loss of semantic governance.

The architecture looks like this:

Warehouse → Controlled pipeline → Lakehouse → Direct Lake model → Reports (AI included)

See what changed? The execution mechanics.

See what didn’t change? The governance.
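The change in execution mechanics can be sketched as a toy model. The class and method names are hypothetical, and this is not how the Fabric engine is implemented: a column is read from the files the pipeline wrote only the first time a query needs it, unused columns are never loaded, and new data is picked up by dropping the cached columns (loosely analogous to what Fabric calls framing).

```python
class LakeTable:
    """Stand-in for a lakehouse table whose columns the pipeline persists as files."""

    def __init__(self, columns):
        self.columns = columns  # column name -> values (hypothetical storage)
        self.column_reads = 0   # how many times a column was read from "files"

    def read_column(self, name):
        self.column_reads += 1
        return list(self.columns[name])  # a copy, like loading from storage


class OnDemandModel:
    """Toy model: a column is loaded into memory the first time a query needs it."""

    def __init__(self, table):
        self._table = table
        self._cache = {}

    def column(self, name):
        if name not in self._cache:
            self._cache[name] = self._table.read_column(name)
        return self._cache[name]

    def total(self, name):
        return sum(self.column(name))

    def reframe(self):
        # Hypothetical: after new data lands, drop cached columns so the next
        # query reloads them from the latest files.
        self._cache.clear()


table = LakeTable({"sales": [10, 32], "region": ["EU", "US"]})
model = OnDemandModel(table)
for _ in range(1000):  # a thousand clicks, no warehouse query involved
    model.total("sales")
print(model.total("sales"), table.column_reads)  # 42 1 ("region" never loaded)

table.columns["sales"].append(8)  # the ingestion pipeline writes new data
model.reframe()
print(model.total("sales"), table.column_reads)  # 50 2
```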

As Harvard Business Review argued in its study of enterprise AI transformations, sustainable competitive advantage does not come from layering intelligence on top of legacy systems. It comes from re-architecting the structural foundation of the firm.

Direct Lake enables scale.

It does not eliminate discipline.

This Isn’t About Fabric.

This applies everywhere:

- Snowflake

- BigQuery

- Databricks

- Redshift

- Fabric

| Model | Cost Scales With | AI Risk |
|---|---|---|
| DirectQuery | User + AI behavior | Explosive |
| Import | Refresh cadence | Stable |
| Direct Lake | Ingestion pipelines | Scalable |
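The table can also be read as three cost functions. The sketch below uses hypothetical unit costs (one unit per warehouse query, per refresh, per pipeline run) and ignores the capacity the semantic model itself consumes; only the shape matters, namely which input drives each model's warehouse cost.

```python
def warehouse_cost(mode: str, interactive_queries: int,
                   refreshes: int, pipeline_runs: int) -> int:
    """Warehouse executions per period, in arbitrary units (illustrative only)."""
    if mode == "DirectQuery":
        return interactive_queries  # every human or AI query hits the warehouse
    if mode == "Import":
        return refreshes  # compute is spent when the model is refreshed
    if mode == "Direct Lake":
        return pipeline_runs  # compute is spent by the ingestion pipelines
    raise ValueError(f"unknown mode: {mode}")


# Humans only vs. humans plus AI agents: DirectQuery grows from 1000 to 100000,
# while Import and Direct Lake stay at 30.
for queries in (1_000, 100_000):
    print({m: warehouse_cost(m, queries, refreshes=30, pipeline_runs=30)
           for m in ("DirectQuery", "Import", "Direct Lake")})
```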

DirectQuery + AI = volatility.

Governed ingestion + AI = leverage.

AI multiplies system behavior.

That is the rule.

The Manifesto.

Here is the uncomfortable truth.

BI systems were built for human consumption.

We are introducing machine consumption.

If you do not get execution right before bringing AI onto the scene, AI will find your weaknesses long before your CFO does.

So here is the manifesto.

- Stop defaulting to live query.

- Align architecture with decision latency.

- Govern semantic scope. Don’t let it explode.

- Build a centralized ingestion model.

- Bring AI only after execution discipline is established.

- AI should ride on top of a stable system, not one in free fall.
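As one illustration of governing semantic scope before AI arrives, here is a minimal sketch of a gate that admits only AI-proposed queries inside an approved field set and caps the fan-out per question. The field names, the budget, and the representation of a query as a set of fields are all hypothetical simplifications.

```python
GOVERNED_FIELDS = {"revenue", "margin", "region", "month"}  # hypothetical scope
MAX_QUERIES_PER_QUESTION = 20  # hypothetical per-question budget


def admit_ai_queries(proposed):
    """Keep only AI-proposed queries inside the governed scope, then cap them.

    Each query is represented as a set of field names (a simplification).
    """
    in_scope = [q for q in proposed if q <= GOVERNED_FIELDS]
    return in_scope[:MAX_QUERIES_PER_QUESTION]


proposed = [{"revenue", "region"}, {"revenue", "customer_email"}]
proposed += [{"margin", "month"}] * 30  # an assistant fanning out on one question
admitted = admit_ai_queries(proposed)
print(len(admitted))  # 20
```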

AI does not create chaos. It exposes it.

Final Question.

Before you deploy another AI assistant on your dashboards, ask:

Does our architecture scale with decisions or with curiosity?

The future of analytics is not real-time.

It is not AI-first.

It is decision-aligned.

And the teams who understand that will not just survive the AI era.

They will define it.
