Science fiction understood the structure of this transition long before Silicon Valley did

"The winners will be those who behave most like humans."

- Packy McCormick, Not Boring, January 2025

Science fiction did not predict AI. That is the wrong compliment.

What it predicted, with unnerving consistency, was what happens when a machine enters an unequal society: who gets to own it, who gets reorganized around it, who gets told to adapt, and who gets to describe that adaptation as progress.

That is why so much old sci-fi now feels more current than the discourse built around the technology itself. The writers missed the hardware. They missed the interface. They missed the timeline. They kept hitting the structure dead on.

The machine always arrives inside an existing order. And it always serves that order first.

There are two ways to read the sci-fi canon. One is as a product roadmap, a shelf of prototypes waiting for compute and cost curves to cooperate. The other is as a diagnostic archive, a hundred years of stories about labor, hierarchy, dependence, disposability, and the tricks power uses to make upheaval sound inevitable.

The roadmap reading is fun.

The diagnostic reading is what matters now.

Because the central question of the AI transition is not whether the technology works well enough to matter. It clearly does. Maybe not to the extent its owners pretend it does (yet), but it’s far more than just a gadget at this point.

The real question is simpler and more dangerous: who decided the terms of this transition before most people even understood they were inside one?

Among the people trying to answer what AI means for work, Packy McCormick has written one of the most useful essays in the cycle. Most Human Wins became influential for a reason. It gives ambitious people a way to think clearly. Skills commoditize. Value moves up the stack. The safest place to be is in the part of the process that still feels irreducibly human. As advice, it is excellent. As an analysis, it is incomplete.

Because a navigation system is not a terrain map.

It can tell you how to move. It cannot tell you who poured the concrete, who owns the roads, who installed the tollbooths, or why everyone is suddenly insisting you start driving faster.

That missing context matters because the current AI rollout does not feel like the internet or the smartphone. Those were pull technologies. People wanted them. The adoption was messy, the harms were real, but the demand was unmistakable. AI, especially in working life, feels different: less like something broadly demanded and more like something being installed.

That difference changes everything.

The Canon Saw the Structure First

“Between the mind that plans and the hands that build there must be a Mediator, and this must be the heart.”

-Thea von Harbou, Metropolis

The sci-fi that aged best is not the sci-fi that got the gadgets right. It’s the sci-fi that got the hierarchy right.

Metropolis isn’t really about robots; it’s about verticality. Workers below, owners above, bodies synchronized to the machine. The famous robot is the least important thing in the film. The real subject is the arrangement. Its closing intertitle makes the class structure explicit, which is one reason the film still feels so legible today.

In Do Androids Dream of Electric Sheep?, Philip K. Dick saw something even sharper. The android matters because it forces the human world to produce an explanation for exploitation. Why does this not count? What quality has been withheld from it so that use becomes permissible? Dick understood that whenever a system needs labor without guilt, it first needs a story about why the subject of that labor does not fully qualify for moral concern.

Kazuo Ishiguro updates the same logic for a softer, more managerial age in Klara and the Sun. It’s not really about an AI companion but rather about a society that has become so comfortable sorting human beings by future utility that it barely notices itself doing it. Even the publisher’s description frames the novel around a changing world, an artificial narrator, and the fundamental question of what it means to love.

These works do not agree on what the machine is.

They agree on what power does with it.

The Stack Is Real. So Is the Hand That Built It.

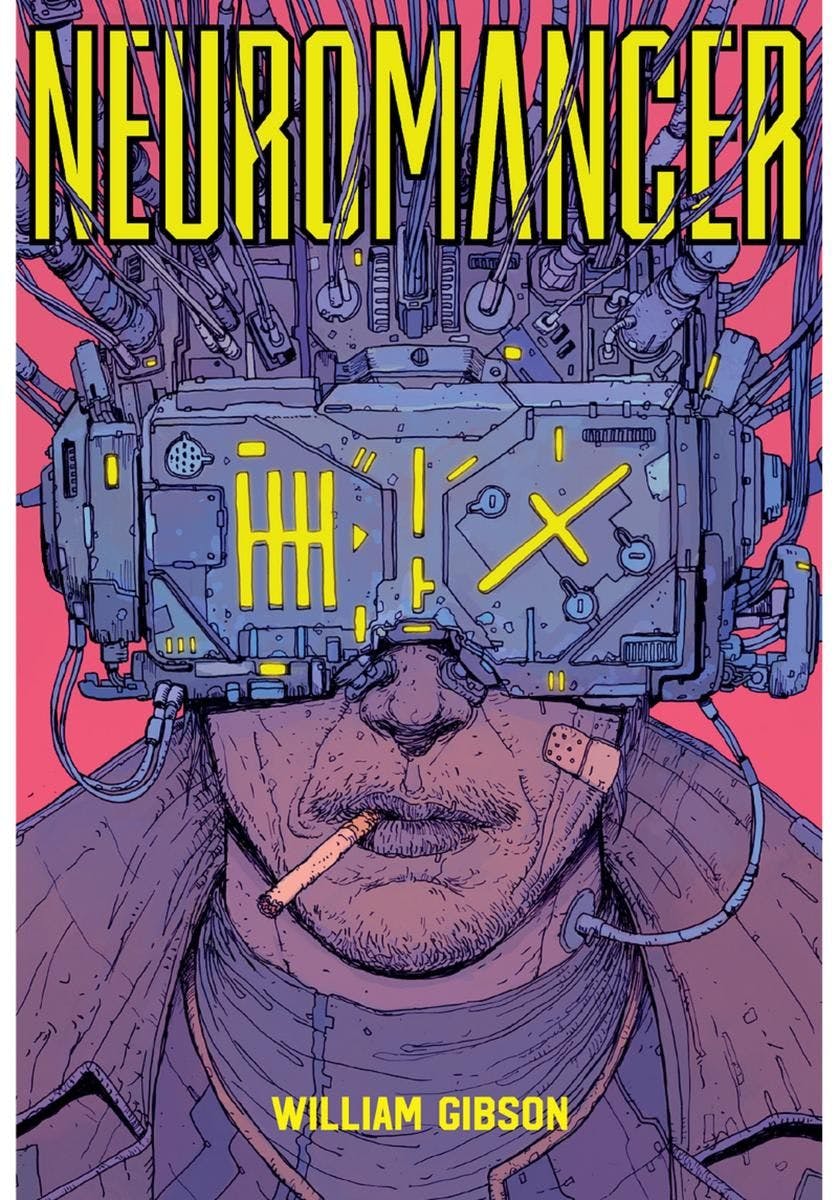

“Cyberspace. A consensual hallucination experienced daily by billions of legitimate operators…”

-William Gibson, Neuromancer

Packy’s right that technology commoditizes skills and pushes value upward.

That has happened repeatedly: agriculture industrialized, manufacturing mechanized, and information got indexed. Entire categories of competence got cheaper, faster, and more abundant. The stack is real.

But stacks are not natural formations. They’re built environments.

Someone owns the infrastructure.

Someone sets the rules of movement.

Someone decides who gets upward mobility and who gets told to call their exclusion a growth opportunity.

That’s the part the optimistic frame leaves undiscussed.

Isaac Asimov saw the stack clearly across his Robot stories and especially in The Caves of Steel. Robots take over labor, humans move higher. But his worlds carry a side effect the framework tends to underplay: the humans who adapt become more insulated, more managed, more cut off from the world they supposedly mastered. The city is efficient, but the price of that efficiency is enclosure.

Then there is the present.

The Stargate Project and the wider wave of hyperscaler infrastructure spending are not just context. They are pressure. OpenAI said Stargate intends to invest $500 billion over four years in AI infrastructure in the United States. Once commitments reach that scale, adoption stops looking like a neutral market event and starts looking more like a requirement of the thesis itself. The people making those bets cannot afford a broad shrug from the market. Uptake must happen.

This is why the current discourse feels so strange: we are being told a story about voluntary transformation while standing inside an installation project.

William Gibson would have recognized the shape instantly. In Neuromancer, AI is not the destroyer of hierarchy. It’s hierarchy perfected: the system is privatized, fragmented, contractual, too sprawling for most people to perceive, and too concentrated for most people to influence. The machine does not break the order; it scales it. Gibson’s “consensual hallucination” line is still useful not only because it anticipated the internet but also because it captured the feeling of infrastructure becoming environment.

Moving up the stack is still good advice.

It’s just not the whole board.

The Coercion Arrives Dressed as Opportunity

“Ending is better than mending.”

-Aldous Huxley, Brave New World

This is the part the polite discourse keeps softening.

Workers are not uniformly enthusiastic about more AI in working life. A 2025 Pew Research survey found U.S. workers more worried than hopeful about future AI use in the workplace, while an earlier Pew survey on AI in hiring and evaluation found broad discomfort with these uses of AI.

Yet here it is. Not because mass demand made it unavoidable, but because capital at this scale does not know how to pause. It only knows how to deploy.

And so the coercion takes a very modern form: nobody forces you…exactly.

Nobody forces you to rewrite your résumé for machine parsing. You just disappear earlier if you do not.

Nobody forces you to use AI tools. You just become easier to outcompete if you refuse.

Nobody forces you to accept the new standard. You just pay the price for acting like there is still a choice.

That is not freedom. It is architecture.

Aldous Huxley understood the elegance of systems that no longer need visible force in Brave New World. “Ending is better than mending” is not just a slogan about consumption; it’s a compressed theory of how systems teach people to internalize replacement, speed, and managed desire as common sense. That’s why it still works here, with one difference: the present version is not soma, a pleasure everyone unmistakably wants. It’s the cafeteria serving one meal. Technically, you can decline. Practically, you still need lunch.

It’s what makes the current moment so slippery: it presents itself as empowerment, but it arrives as a mandate. It markets itself as optionality while removing the conditions under which refusal would remain affordable.

The coercion works because it does not feel like coercion.

It feels like being realistic.

The First Costs Land Where They Always Land

“It is the basic condition of life, to be required to violate your own identity.”

-Philip K. Dick, Do Androids Dream of Electric Sheep?

There’s a familiar rhythm to technological transition: the upside gets narrated from the top, the downside arrives first at the bottom.

The argument for scraping creative and informational work to train AI models follows a script that should sound ancient by now. The material was public. The individual contribution is negligible. The future gains are large. Compensation is impractical. History will vindicate the seizure.

This logic isn’t new. It’s what ‘systems’ say when they want access to value without negotiation.

That’s one reason Ed Zitron has become such a useful counterweight in the current debate. His writing keeps returning to the gap between the grandness of the narrative and the messier economic reality underneath it. He’s valuable not because he’s always maximally right, but because he keeps dragging the conversation back to incentives, costs, and who’s being asked to absorb them first.

In this regard, Philip K. Dick still bites very hard: the android is a mirror. Not because it’s secretly human, but because the explanation for why it may be used always resembles the explanation for why some humans may be used first.

He did not predict the ‘dataset’; he predicted the justification.

The Useful Forecast

“The robot cannot go back until the question of ‘what do people need?’ is answered.”

-Becky Chambers, A Psalm for the Wild-Built

None of this makes the optimists wrong, though. Human judgment, presence, taste, courage, craft, and moral imagination may become more valuable as AI slop floods the web.

But optimism without structure becomes self-help for people standing in someone else’s system.

The sci-fi canon’s most useful forecast isn’t that machines get smarter. It’s that benefits move upward first, costs move downward first, and institutions arrive late enough to call the outcome unfortunate instead of chosen.

That is the terrain.

The human story is not over. But it won’t be decided by individual adaptation alone. It’ll be decided by who gets leverage over the terms of transition, who gets protected during the installation, and whether the rest of us notice in time that what’s being presented as inevitability is, in large part, a set of decisions made by interested parties at extraordinary speed.

The Becky Chambers future glimpsed in A Psalm for the Wild-Built is still possible: a world in which people rediscover craft, slowness, and the question of what they actually want once efficiency stops being the only god. But it’s only one path, not the default.

The machine is here. The real question was never whether it would change things.

It was always who got to decide how.

This is the first in a six-part series using science fiction as a lens for understanding AI, work, and power in 2026 and beyond.

Next: who the AI actually works for, and what Gibson got exactly right about the answer.