The rapid advancement of artificial intelligence (AI) has created an unprecedented surge in the demand for energy. Training and running large-scale models require massive computational resources housed in power-intensive data centers. Today, hyperscale AI facilities demand gigawatts of power, equivalent to the needs of an entire city. This demand strains grids, increases emissions where that power comes from fossil fuels, and exacerbates water scarcity because of cooling needs. While hardware and algorithms have become more efficient, the exponential scaling of AI models means infrastructure on Earth faces real limits in energy availability, land, regulation, environmental impact, and cooling capacity. This is where space comes into the picture.

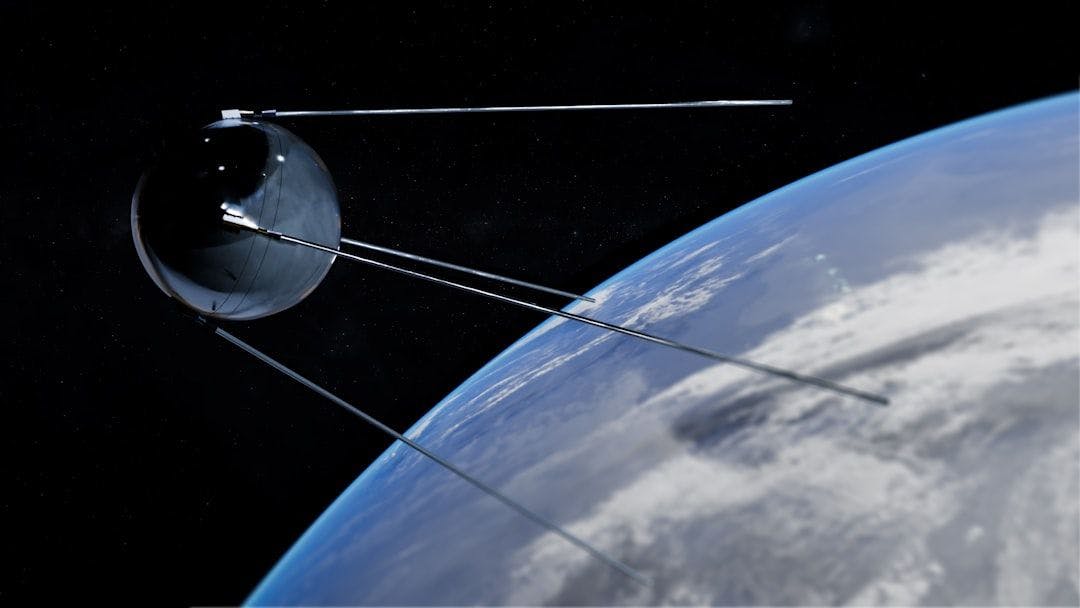

In the very near future, AI servers and data centers may be launched into space, which is increasingly seen as a viable location for such sizeable and energy-hungry technologies. In a suitable orbit, solar panels receive near-constant sunlight, supplying energy without drawing on Earth's electricity grids or pushing them past their limits. The result is near-limitless, clean energy capture. Equally important, hardware in orbit can shed heat by radiating it directly into space, without the massive air- and water-based systems required on Earth, where cooling can itself consume large amounts of energy.
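To give a rough sense of what radiative cooling involves, the sketch below estimates the radiator area needed to reject a given heat load using the Stefan-Boltzmann law. It is a back-of-the-envelope illustration, not a thermal design: the function name, the 1 MW heat load, the 300 K radiator temperature, and the 0.9 emissivity are all assumptions chosen for the example, and the calculation ignores sunlight and Earth's infrared radiation absorbed by the radiator.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_load_w, radiator_temp_k, emissivity=0.9,
                     sink_temp_k=3.0, sides=1):
    """Estimate the radiator area needed to reject heat_load_w by radiation.

    Hypothetical helper for illustration. Assumes a flat radiator at a
    uniform temperature radiating to a cold sink, with no absorbed sunlight
    or Earth infrared (both would increase the required area).
    """
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be warmer than the sink")
    # Net radiated flux per square metre of radiating surface.
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k ** 4 - sink_temp_k ** 4
    )
    return heat_load_w / (flux_w_per_m2 * sides)


if __name__ == "__main__":
    # Assumed example: 1 MW of server heat, radiator held at 300 K.
    one_sided = radiator_area_m2(1e6, 300.0)
    two_sided = radiator_area_m2(1e6, 300.0, sides=2)
    print(f"one-sided radiator: {one_sided:,.0f} m^2")
    print(f"two-sided radiator: {two_sided:,.0f} m^2")
```

Under these assumptions, a megawatt of heat calls for a radiator on the order of a few thousand square metres, and the area scales linearly with the heat load. Running the radiator hotter shrinks it quickly, since radiated power grows with the fourth power of temperature.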

SpaceX and Google have already announced plans for trial deployments of orbital servers. At the recent Davos conference, Elon Musk said that space-based computing could become the cheapest option for AI within the next two or three years. “The lowest cost place to put AI will be space, and that'll be true within two years, maybe three at the latest,” Musk said.

The advantages of space-based AI servers are compelling. They offer abundant, near-continuous solar power that can support gigawatt-scale operations sustainably, eliminate land and water issues, reduce emissions, and enable modular constellations for massive scalability. Radiating heat into space removes the need for heavy, water-intensive cooling infrastructure, while an orbital location shields the hardware from Earth-based disasters and regulations, providing operational stability and resilience.

However, while the dream of space-based AI data centers is likely to become a reality mainly due to necessity, there are significant hurdles that must first be overcome. Launch costs may be dropping with reusable rockets like Starship, but launch capacity is still limited and prices are still too high to sustain frequent deployments. Hardware will need to be hardened against intense radiation that degrades its components, and heat management will require careful design to avoid overheating in the sunlight. Data transmission to Earth, while relatively fast from low-Earth orbit, still adds latency compared to terrestrial systems.
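The latency point can be made concrete with a small calculation of the signal's travel time between a ground station and a satellite. This is a minimal sketch under stated assumptions: the function names are hypothetical, the 550 km altitude and 25-degree elevation angle are illustrative values, and the result covers only propagation at the speed of light in vacuum. Real links add processing, queuing, and routing time on top.

```python
import math

SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum
EARTH_RADIUS_KM = 6371.0           # mean Earth radius


def slant_range_km(altitude_km, elevation_deg=90.0):
    """Distance from a ground station to a satellite at a given elevation.

    Hypothetical helper assuming a spherical Earth and a circular orbit.
    An elevation of 90 degrees means the satellite is directly overhead.
    """
    elev = math.radians(elevation_deg)
    r = EARTH_RADIUS_KM
    return (math.sqrt((r + altitude_km) ** 2 - (r * math.cos(elev)) ** 2)
            - r * math.sin(elev))


def round_trip_ms(altitude_km, elevation_deg=90.0):
    """Round-trip propagation delay in milliseconds (signal travel only)."""
    distance_km = slant_range_km(altitude_km, elevation_deg)
    return 2.0 * distance_km / SPEED_OF_LIGHT_KM_S * 1000.0


if __name__ == "__main__":
    # Assumed example: a satellite at 550 km, overhead and near the horizon.
    print(f"overhead:   {round_trip_ms(550.0):.2f} ms")
    print(f"25 degrees: {round_trip_ms(550.0, 25.0):.2f} ms")
```

With these numbers the propagation delay alone is a few milliseconds per round trip, and it grows as the satellite moves away from directly overhead. That is small in absolute terms, but it comes on top of the delays a terrestrial data center already has.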

Maintenance will be close to impossible without advanced autonomous robotics, as it would be difficult and costly to send astronauts into space every time there is a server issue. The overall economics of such an endeavor are unknown, so it remains a long-term solution for now and not an immediate fix. In the near term, we will need to rely on Earth-based systems to meet the energy and cooling needs of AI data centers. Once the technical and economic hurdles are overcome, orbital data centers, with their many advantages, could well become a reality.
