Authors:

(1) Yuwei Guo, The Chinese University of Hong Kong;

(2) Ceyuan Yang, Shanghai Artificial Intelligence Laboratory (Corresponding Author);

(3) Anyi Rao, Stanford University;

(4) Zhengyang Liang, Shanghai Artificial Intelligence Laboratory;

(5) Yaohui Wang, Shanghai Artificial Intelligence Laboratory;

(6) Yu Qiao, Shanghai Artificial Intelligence Laboratory;

(7) Maneesh Agrawala, Stanford University;

(8) Dahua Lin, Shanghai Artificial Intelligence Laboratory;

(9) Bo Dai, The Chinese University of Hong Kong.

Table of Links

- AnimateDiff

4.1 Alleviate Negative Effects from Training Data with Domain Adapter

4.2 Learn Motion Priors with Motion Module

4.3 Adapt to New Motion Patterns with MotionLoRA

5 Experiments and 5.1 Qualitative Results

8 Reproducibility Statement, Acknowledgement and References

5.4 Controllable Generation

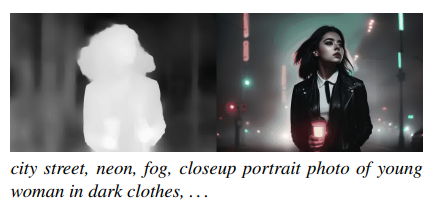

The separate learning of visual content and motion priors in AnimateDiff allows existing content control approaches to be applied directly for controllable generation. To demonstrate this capability, we combine AnimateDiff with ControlNet (Zhang et al., 2023) to control the generation with an extracted depth map sequence. In contrast to recent video editing techniques (Ceylan et al., 2023; Wang et al., 2023a) that employ DDIM (Song et al., 2020) inversion to obtain smoothed latent sequences, we generate animations from randomly sampled noise. As illustrated in Figure 8, our results exhibit fine-grained motion details (such as hair and facial expressions) and high visual quality.
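The data flow described above can be illustrated with a small NumPy sketch. This is a toy under stated assumptions, not the AnimateDiff or ControlNet implementation: the function names (`sample_initial_latents`, `toy_denoise_step`, `generate`), the `guidance` parameter, and the blending rule are all hypothetical, and the depth maps are assumed to share the latent resolution for simplicity. It only shows the two points made in the paragraph: every frame starts from randomly sampled noise rather than from latents inverted from a source video, and each frame receives its own depth map as a control signal at every step.

```python
import numpy as np


def sample_initial_latents(num_frames, channels, height, width, seed=0):
    """Draw one Gaussian-noise latent per frame (no inversion of a source video)."""
    rng = np.random.default_rng(seed)
    return rng.standard_normal((num_frames, channels, height, width))


def toy_denoise_step(latents, depth_maps, guidance=0.1):
    """Hypothetical stand-in for one depth-conditioned denoising step.

    Each frame's latent is nudged toward its own depth map to mimic
    per-frame control. A real system would instead run the image model
    with ControlNet features and the motion module at this point.
    """
    control = depth_maps[:, None, :, :]  # add a channel axis for broadcasting
    return latents + guidance * (control - latents)


def generate(depth_maps, channels=4, steps=20, seed=0):
    """Run the toy loop: random noise in, depth-guided latents out."""
    num_frames, height, width = depth_maps.shape
    latents = sample_initial_latents(num_frames, channels, height, width, seed)
    for _ in range(steps):
        latents = toy_denoise_step(latents, depth_maps)
    return latents


if __name__ == "__main__":
    # Assumed input: 8 frames of 16x16 depth values in [0, 1].
    depth = np.linspace(0.0, 1.0, 8 * 16 * 16).reshape(8, 16, 16)
    frames = generate(depth)
    print(frames.shape)
```

The control signal is indexed per frame, so the depth sequence fixes the layout of each frame, while the starting point remains plain Gaussian noise with a fixed seed for reproducibility.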

This paper is available on arXiv under the CC BY 4.0 DEED license.
