There’s a lot of noise right now around Anthropic and defense integration. The framing is dramatic. Ethical AI company. Pentagon contracts. Public friction. Possible exclusion. Strategic risk to the United States.

If I had to take a guess, this was not originally a breakdown. It was a positioning dynamic. However, recent reporting that Anthropic has modified elements of its core safety policy in response to Pentagon pressure indicates that the escalation phase has already begun to produce concrete policy changes. This suggests the conflict functioned not as separation, but as leverage to renegotiate the operational boundaries under which deployment would occur.

And from a purely strategic standpoint, it may actually be a win-win for both Anthropic and the Pentagon. When you look at what has actually been deployed inside federal environments so far, the picture is far less sensational than the headlines suggest.

What Is Actually Being Deployed

If you strip away the rhetoric, what we’re seeing is essentially hardened, isolated LLM deployments inside DHS and federal networks.

Think air-gapped or enclave-based systems. Containerized. Logged. Controlled.

They are not deploying autonomous weapons controlled by Claude. They are deploying language models for summarization, document analysis, contextual reasoning, and internal workflow acceleration. The core mechanics are the same capabilities that existed when LLMs were first broadly released in 2022 and 2023. System prompts. Context injection. Retrieval. Persona definition.

The difference is not raw capability. The difference is security posture and deployment environment.

These systems are secured for federal networks, governed by compliance requirements, and embedded into existing infrastructure.

The Public Tension

We are in a strange cultural moment.

On one hand, the public has been primed for pro-AI sentiment for over a century through science fiction. AI as co-pilot. AI as assistant. AI as amplification of human capability.

On the other hand, there are immediate anxieties. Job displacement. Automation shocks. Integration into military and intelligence systems. The anti-AI crowd, or neo-Luddite wave, is very real.

Anthropic’s brand is built on ethical AI, alignment, and safety research. That brand equity matters enormously in the current climate. So publicly, they have to thread the needle carefully.

They cannot appear reckless.

At the same time, defense and intelligence agencies are not selecting vendors based purely on philosophical alignment statements. They are selecting based on security compliance, reliability, capability, and long-term viability.

Those two pressures collide in public, especially in a landscape where publicity itself has strategic value, even when operational realities remain unchanged.

The Pentagon’s Perspective

From the DoD’s standpoint, the key question is not “Is this company ethical?”

It’s “Can this system operate inside mission constraints?”

If multiple vendors offer similar core capabilities, then differentiation comes down to guardrails, policy enforcement, auditability, and configurability. Not whether the base model can generate text.

And importantly, the Pentagon rarely relies on a single vendor in emerging technology domains. They build parallel ecosystems. Redundancy is part of risk mitigation.

If one vendor declines a particular use case, the workload does not stop; it is redistributed across parallel systems. So a dramatic blacklisting of Anthropic over positioning disagreements is unlikely. More probable is selective integration under specific constraints.

The Guardrail Question

There is speculation that Anthropic might “drop guardrails” in defense contexts.

That framing misunderstands how enterprise deployments work.

Guardrails are not binary. They are configurable. Policy enforcement layers differ depending on operational context, data classification, and user role. The version of a model deployed in a consumer web interface is not necessarily identical to the version deployed in a secured federal enclave.

That does not mean “no guardrails,” despite how it is often framed. It means different policy environments.
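To make the "configurable, not binary" point concrete, here is a minimal Python sketch of a layered policy resolver. Everything in it is hypothetical: the `DeploymentContext` fields, the rule names, and the `resolve_policy` function are illustrative assumptions, not Anthropic's or any agency's actual configuration. It shows a shared baseline that applies everywhere, with additional layers switched on by environment, data classification, and user role.

```python
from dataclasses import dataclass

# Hypothetical deployment context; field names and values are illustrative only.
@dataclass(frozen=True)
class DeploymentContext:
    environment: str     # e.g. "consumer_web" or "federal_enclave"
    classification: str  # e.g. "public" or "controlled"
    user_role: str       # e.g. "public_user" or "cleared_analyst"

# Baseline rules applied in every environment (illustrative, not a real policy).
BASE_POLICY = {
    "refuse_weapons_design": True,
    "audit_logging": False,
    "max_document_classification": "public",
}

# Context-specific layers: (context field, matching value, rules to merge in).
LAYERS = [
    ("environment", "federal_enclave", {"audit_logging": True}),
    ("classification", "controlled", {"max_document_classification": "controlled"}),
    ("user_role", "cleared_analyst", {"allow_bulk_summarization": True}),
]

def resolve_policy(ctx: DeploymentContext) -> dict:
    """Return the baseline policy plus every layer that matches the context."""
    policy = dict(BASE_POLICY)  # copy so the baseline is never mutated
    for field, value, overrides in LAYERS:
        if getattr(ctx, field) == value:
            policy.update(overrides)
    return policy

consumer = resolve_policy(DeploymentContext("consumer_web", "public", "public_user"))
enclave = resolve_policy(DeploymentContext("federal_enclave", "controlled", "cleared_analyst"))
print(consumer)
print(enclave)
```

In this sketch no layer overrides the baseline `refuse_weapons_design` rule, so the enclave policy differs from the consumer one without dropping it. Whether real deployments are structured this way is an assumption; the sketch only illustrates that a different policy environment is not the same thing as no guardrails.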

However, recent safety policy revisions indicate that guardrails themselves can become subject to institutional negotiation under sufficient strategic pressure. This demonstrates that guardrail architecture is not purely technical; it is partially determined by political, economic, and national security constraints.

If you do not see meaningful exclusion in procurement listings, SAM (System for Award Management) registrations, or federal contract activity, then it is reasonable to assume integration is continuing, regardless of public rhetoric.

Why Anthropic Likely Won’t Actually Be Dropped

If I had to guess, even if there is public friction or signaling pressure, Anthropic will not actually get dropped.

Recent reporting indicates Anthropic has modified elements of its safety policy in response to defense pressure. This does not necessarily mean unrestricted deployment, but it does demonstrate that guardrail policy itself can become a negotiable variable. The public conflict phase may have functioned to establish leverage, define red lines, and shape the terms under which eventual integration would occur, rather than prevent integration entirely.

The DoD rarely relies on single vendors, especially in emerging technology domains. Removing a technically viable supplier entirely introduces its own operational and strategic risk. Vendor diversity is part of how federal systems maintain resilience.

The fact that policy changes occurred without Anthropic exiting federal integration reinforces that the conflict was not about whether deployment would continue, but about defining the acceptable operational scope. In game-theoretic terms, this reflects a transition from signaling to negotiated adjustment rather than structural disengagement.

From Anthropic’s perspective, publicly reinforcing their ethical positioning protects their broader commercial credibility. It reassures enterprise customers, regulators, and the public that they are not abandoning their alignment principles. That positioning has real long-term value.

At the same time, remaining technically and operationally viable within federal environments ensures they do not remove themselves from one of the most important infrastructure transitions currently underway.

This creates a situation where public positioning and operational integration do not necessarily contradict each other.

If we do not actually see concrete exclusion in SAM listings, procurement pipelines, or federal contract activity, then it is reasonable to assume integration continues regardless of public posture. Procurement systems leave traces. Vendor relationships do not simply disappear without structural indicators.

Guardrails in these environments are also not static. They are configurable layers aligned to operational requirements, classification levels, and deployment contexts. The constraints applied to consumer-facing systems are not identical to those applied inside secured federal infrastructure.

From a strategic standpoint, both sides benefit from maintaining optionality.

The China Angle

The broader concern raised in pieces like the Forbes article is strategic asymmetry.

China does not have a clean separation between private AI companies and state objectives. Integration into military and intelligence infrastructure is direct. It should be expected that other nations, seeking to remain competitive in the 21st century, will adopt and emulate these practices, much as Ukraine’s use of drone warfare rapidly became a new norm in defense.

The U.S. model is different. It relies on private companies navigating public opinion, employee sentiment, investor pressure, and regulatory ambiguity. And that definitely creates friction, but it also creates a system where companies that can integrate responsibly while maintaining public trust become disproportionately valuable.

Refusing integration entirely does not slow global AI militarization. It simply shifts which actors shape it. And what is discussed publicly rarely reflects how decisions are actually made behind the scenes.

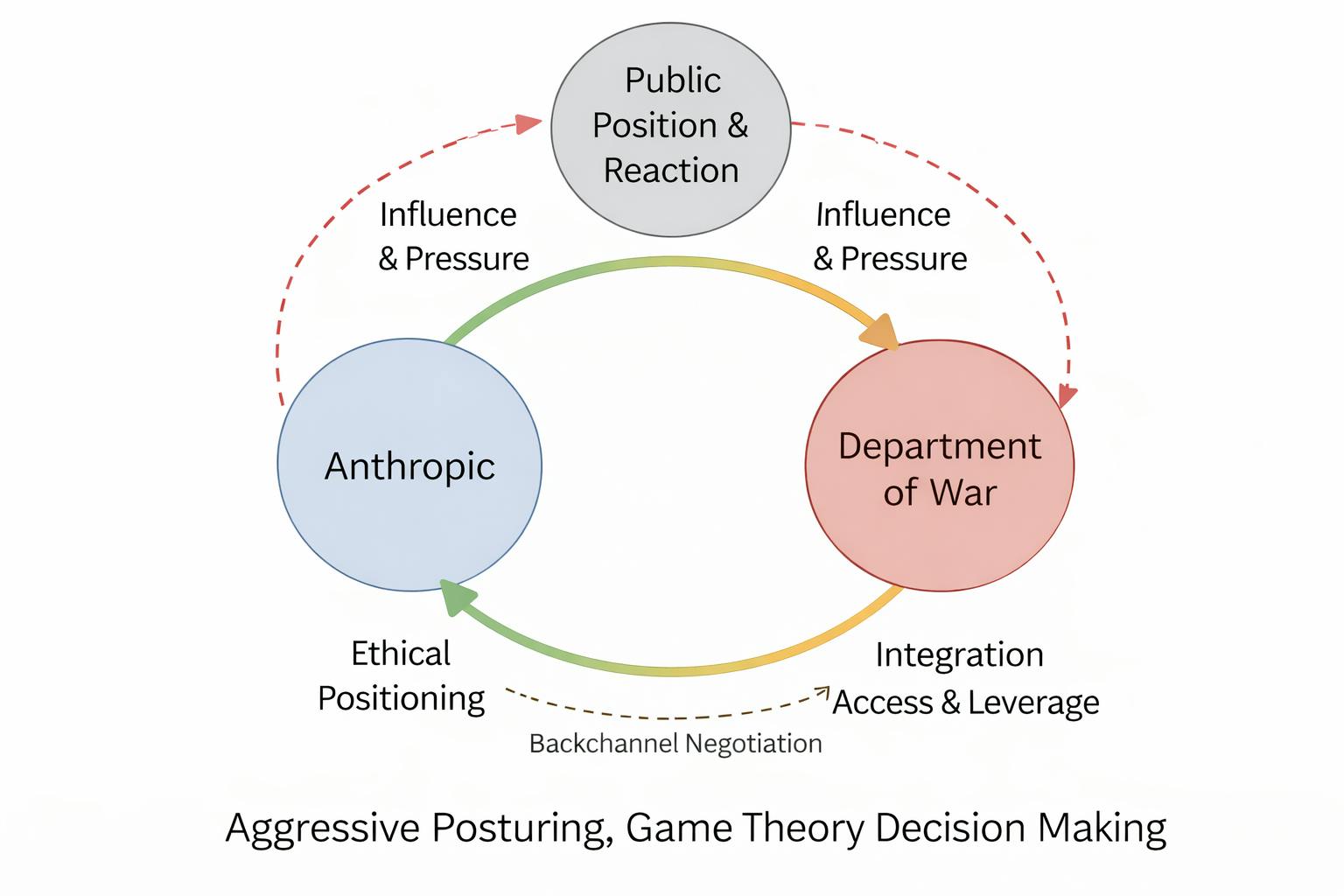

The Game Theory

There is likely a lot of game theory going on here, including real short-term pressure, public signaling, and private negotiation happening at the same time.

Anthropic’s incentives are not purely technical; they are also reputational and commercial. Their alignment and safety positioning is a core part of their brand and what differentiates them from other AI vendors. Publicly reinforcing ethical boundaries reassures enterprise customers, regulators, and the broader public that they are not abandoning those principles in pursuit of defense contracts.

At the same time, fully removing themselves from federal integration would mean voluntarily excluding themselves from one of the largest infrastructure transitions currently underway. Once procurement pipelines, deployment standards, and vendor ecosystems solidify, it becomes much harder to enter later. So even under public pressure, maintaining technical and contractual viability remains critical.

The Pentagon operates under its own strategic incentives. In the short term, applying pressure signals seriousness, establishes leverage, and communicates expectations to vendors and competitors. It also reassures internal stakeholders and policymakers that alignment and compliance requirements are being enforced.

But in practice, defense procurement and integration occur over long timelines, often through pilots, subcontractors, enclave deployments, and classified programs that are not fully visible publicly. Vendor relationships evolve gradually, and operational continuity does not necessarily stop because of public friction.

Public positioning and operational reality are often intentionally separated. Confidentiality, classification, and restricted procurement channels exist specifically to allow negotiation, evaluation, and deployment without full public visibility. What appears externally as conflict can internally be part of leverage, negotiation, or controlled signaling.

In this way, both sides initially preserved leverage and optionality. However, the subsequent modification of Anthropic’s safety policy indicates that the escalation phase produced concrete negotiation outcomes. Public resistance established credibility with enterprise customers, regulators, and employees, while eventual policy adaptation preserved Anthropic’s position inside federal deployment pipelines. This pattern is consistent with brinkmanship dynamics, where escalation establishes negotiating boundaries before operational compromise occurs.

Anthropic reinforces its ethical positioning while remaining technically viable. The DoD applies pressure while maintaining access to capable vendors. Public perception is managed, while operational decisions continue on longer timelines.

This is not necessarily a contradiction. It is the predictable outcome of strategic negotiation inside classified and infrastructure-level technology transitions.

And this pattern is not new. Similar dynamics played out with Google during Project Maven, where public backlash and internal employee protests led Google to announce it would not renew its Maven contract, while other defense-related AI and cloud integrations across the industry continued to expand. Microsoft faced internal employee pressure over military contracts, yet won the JEDI cloud award (later canceled) and became one of the providers under its successor program, JWCC. Palantir openly positioned itself as a defense partner and became deeply embedded across U.S. and allied intelligence systems. In each case, public positioning, internal pressure, and operational deployment did not always move in lockstep. Infrastructure integration tends to continue through parallel channels even when public narratives suggest hesitation or conflict.

At the same time, escalation in public rhetoric does not automatically translate into structural disengagement. Deadlines, pressure, and supply chain risk language can function as negotiation leverage as much as finalized procurement outcomes. Defense integration occurs through layered contracts, subcontractors, enclave deployments, and classified channels that do not unwind overnight. If a vendor were truly being removed, it would become visible through procurement exclusion, contract termination, or replacement integration timelines, not solely public statements. Until those structural indicators appear, it remains entirely possible that what is being presented publicly as confrontation is part of the negotiation process shaping long-term deployment terms. In defense procurement, rhetoric can move faster than infrastructure, and escalation alone does not confirm separation unless it is followed by concrete procurement or deployment changes.

Escalation Outcome

Recent safety policy changes confirm that the confrontation phase produced real institutional effects. This does not necessarily indicate unrestricted deployment, but it demonstrates that guardrail policy itself can become part of strategic negotiation. The conflict phase may have functioned to establish leverage, signal internal resistance, and shape the terms under which eventual integration would continue.

Thesis

The real question is not whether AI integrates into national security infrastructure. That is already happening.

The real question is which companies are present when standards, deployment models, and guardrail architectures are defined. Defense infrastructure is built over years, and the vendors present during early integration phases often remain embedded for decades.

If you are not in the room, you do not shape the rules.

From that perspective, what appears publicly as conflict may be part of a positioning cycle rather than a true separation. And for Anthropic, this illustrates how ethical positioning, public escalation, and eventual policy adaptation can coexist within the same strategic cycle. The public conflict established negotiating leverage and clarified institutional boundaries, while policy adjustments preserved Anthropic’s strategic viability during the formation of national security AI infrastructure. Effectively a win-win for both parties.