You can feel it. You open a newsletter or a post from a founder you follow. The hook is punchy, and it pulls you into the first paragraph.

Every line is clean, polite, and pleasing to the ear. I know; I used to write like that too, spending whole nights polishing sentences that didn't make sense when I read them out loud.

Sugar-coating your thoughts in writing just to sound like everyone else.

AI has been around for decades, but widespread use took off around 2022. I remember because it was seven or eight months after I started writing content on Facebook. Writing was hard back then: doing the research, staying up whole nights to scratch out the next post, using your own brain to connect words you weren't sure would make sense to anyone else. When the AI tools arrived, all of that suddenly became easy.

You could produce smooth content with almost no effort. The hype was everywhere, and gurus were teaching, left, right, and center, things they didn't understand themselves.

You know what I mean. The founder who shares this kind of content knows it, and a small fraction of readers see right through it. Everyone else has settled for the polished, commodity version: people who never wrote anything in their lives can now write using these tools. What is there to say? This is just how things are written now.

Except here's the thing: the people you are trying to impress (the experienced operators, the investors who have seen a thousand decks, the senior engineer reading your technical blog) feel it too. They don't tell you. They form an opinion instead.

"Fluency is not the same as knowing what you are talking about. It never was. AI just made it easier to forget that fact."

This is the hidden cost that nobody puts in the press release. It isn't the subscription fee, and it isn't the time spent on prompt engineering. It is the cost of quietly publishing something that sounds like it came from the same source as the last post you scrolled past in your feed. It's all a commodity, all painted the same color, and hard to question because it is written for everyone yet comes from no one's mind in particular.

The Novice and the Expert Are Not Using the Same Tool

Here's what the AI demo does not show you. It shows a beginner typing a question and receiving a confident, structured, well-formatted answer. What it doesn't show is what happens next, when that answer meets the real world, a real expert, or a real decision with real consequences.

The expert using the same tool has something the beginner does not. They have failed and learned, and with that comes expertise and a calibrated sense of when something is wrong.

They don't read output the way a beginner does. They know within a few sentences whether an output is directionally correct or subtly broken. They know which parts to trust and which parts to interrogate. Over years of work, they have built an internal model of the domain that lets them use AI as a lever rather than a crutch.

The amateur using AI has no such model. They receive confident output with no idea how it reached its conclusion, and no reliable way to evaluate it. The risk, then, is not that they will get wrong answers; it is that they will get answers that are wrong in ways they cannot detect. That distinction is everything, and it plays out differently in every field.

Marketing: The Algorithm Optimized for Clicks. You Need to Optimize for Trust.

AI-generated marketing copy sounds professional. It hits the emotional triggers, structures the argument, and lands a call to action. A founder reads it and thinks: done.

An experienced marketer reads it and starts counting problems. The voice is generic in a way that slowly erodes brand distinctiveness. Some claims will make the legal team nervous. Audience segmentation is implied but not real; the copy speaks to everyone and therefore moves no one. Urgency is manufactured in a way that seasoned buyers recognize and dismiss.

None of this is obvious if you have never watched a campaign fail. But experienced marketers have watched campaigns fail in exactly these ways, dozens of times, with real budgets and real consequences. That library of failure is their edge. AI has not earned it. You have not earned it yet if you are using AI to skip the years it takes to build it.

Coding: The Code Runs. That Is Not the Same as the Code Being Right.

The most seductive thing AI does in software is make working code feel like good code. A junior engineer gets a function that passes the tests and ships it. The senior engineer reviewing the code sees the race condition, the SQL injection surface, and the N+1 query that will cripple database performance at scale. Not because they are smarter, but because they have watched systems fail in exactly those ways at three in the morning.

AI does not know your architecture, load patterns, deployment environment, or the specific way your stack will misbehave under pressure. The experienced engineer provides that context. More importantly, they know which parts of the AI's output to trust and which parts to throw away. Amateurs accept output. Experts interrogate it. That is not a workflow difference. That is the whole game.
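To make "the code runs, but it isn't right" concrete, here is a minimal sketch of one item from that review list, the SQL injection surface, using Python's standard-library sqlite3 module. The users table and both function names are hypothetical, invented for illustration. Both functions return the same rows for ordinary input, so a happy-path test passes for either; only the version that builds SQL by string formatting lets crafted input rewrite the query.

```python
import sqlite3


def make_db():
    # Hypothetical in-memory table, for illustration only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, name):
    # Builds SQL by string formatting: passes a simple test,
    # but the input becomes part of the query text itself.
    query = f"SELECT id, name FROM users WHERE name = '{name}' ORDER BY id"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the driver passes `name` as data, never as SQL.
    query = "SELECT id, name FROM users WHERE name = ? ORDER BY id"
    return conn.execute(query, (name,)).fetchall()


if __name__ == "__main__":
    conn = make_db()
    # On ordinary input the two versions agree.
    print(find_user_unsafe(conn, "alice"))  # [(1, 'alice')]
    print(find_user_safe(conn, "alice"))    # [(1, 'alice')]
    # A crafted input makes the unsafe WHERE clause always true.
    payload = "x' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # [(1, 'alice'), (2, 'bob')]
    print(find_user_safe(conn, payload))    # []
```

The fix itself is not clever: pass values as parameters and let the driver keep them out of the SQL. Knowing to look for the problem in code that already "works" is the part that comes from experience.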

Network Engineering: The Disaster You Do Not See Until It Is Everywhere

Network problems don't come with warnings. They just show up: traffic crawls late at night, or packets randomly fail to get through. It can take hours to find the cause, and sometimes it turns out to be something small, like a wrong setting that AI generated and someone deployed because it looked right.

Experienced network engineers carry something that cannot be prompted into existence: a mental map of how systems fail. Every outage they have lived through adds a layer to that map. AI can generate a firewall configuration. It cannot tell you about the specific interaction between that configuration and the legacy BGP setup two hops down the chain, the one your team inherited from an acquisition three years ago and that nobody fully understands.

You need someone who has been there when things go badly. No tool substitutes for that.

Writing: The Piece That Moves Nobody

Real arguments are not perfectly smooth. There are parts where you can feel the writer struggling, making a tough choice or rethinking what they were saying. That slight tension isn't a mistake. It shows that the writer is actually thinking.

AI prose has no friction. It flows because it has never had to decide anything. The sentences are all the right length. Transitions always land. The conclusion wraps everything up. By the end, you’ve read 800 words, and nothing has actually shifted in you.

Experienced writers and editors feel this before they can name it. A kind of frictionlessness that should not be there. Writing that is technically correct but somehow does not make contact. Word choices are fine. None is surprising. The argument is structured to sound like an argument rather than to actually be one.

That is what you are publishing when you publish unrevised AI prose. Not bad writing, but writing that does not land. Writing that signals, to anyone who reads carefully, that the person behind it has not yet done the work of actually thinking it through.

Human Judgment: The Part That Cannot Be Outsourced

Judgment is not intelligence. It is not having the right information, either. It is the capacity to make a call in conditions of genuine ambiguity, where the data is incomplete, values conflict, and no algorithm resolves it cleanly. It is knowing when the technically correct answer is the wrong answer for this specific situation, this specific person, this specific moment.

AI does not make judgments. It produces outputs. Those outputs can be extraordinarily useful. They can also be confidently, smoothly, and fluently wrong. The only thing standing between that wrongness and a real consequence is a human who has developed enough expertise to catch it.

Accountability Is Not a Feature You Can Turn Off

There is a reason we still require licensed engineers to stamp structural drawings. A reason doctors still sign off on AI-assisted diagnosis. A reason juries still deliberate. It is not nostalgia; it is that accountability requires personhood.

When something goes wrong (and in consequential domains, things do go wrong), someone has to answer for it. That person cannot be the model. It has to be a human who understands the domain well enough to take responsibility. Remove that layer, and you do not have a more efficient system; you have an ungovernable one.

If you are a founder, your investors are not just evaluating your product. They are evaluating your judgment, your ability to make good calls under uncertainty. That is the one thing that cannot be delegated to a tool. It is what they are watching for when they read your writing, listen to your pitch, and see how you handle a hard question.

Context Is the Thing That Does Not Transfer

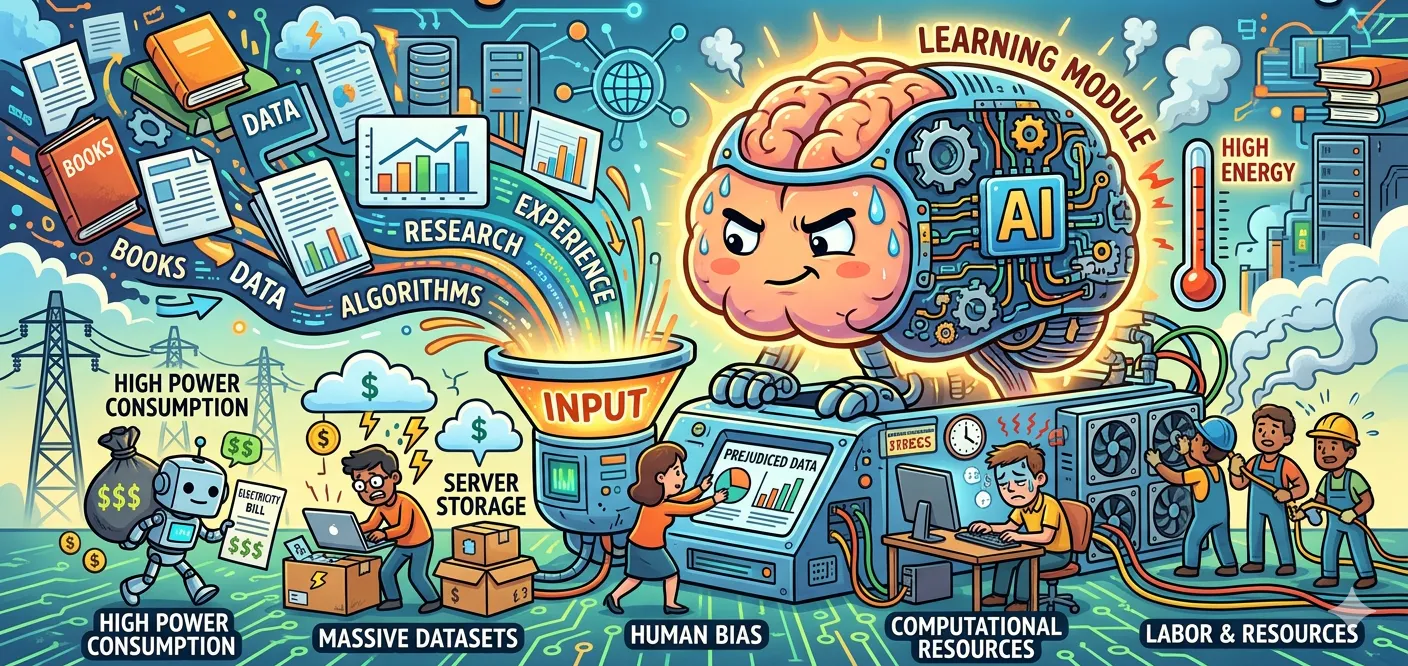

AI models are trained on data, but data has edges. It reflects the world that produced it: specific cultures, assumptions, and time periods. The world keeps moving. The training data does not.

An experienced professional knows when a technically sound recommendation fails because context has shifted. They know when the output reflects bias baked into the dataset. They know when the standard approach will land wrong with this particular audience at this particular moment. That situational awareness is earned through years of noticing when things didn't go as expected.

It is invisible until the moment it prevents a disaster. And it is exactly what you give up when you skip the years of experience and go straight to AI.

The Mechanic Who Has Never Opened a Hood

You need to make a high-performance engine better, not just fix it. Two people are available for the job:

- The first has spent 15 years working on engines. They’ve taken them apart and put them back together so many times that their hands just know what to do. They understand what can be pushed and what will break under pressure. They’ve made costly mistakes and learned from them.

- The second person has never opened a hood. But they have AI. They describe the problem and get a confident answer that references real performance techniques. On the surface, it looks right.

The experienced mechanic can look at those same suggestions and instantly see what works, what’s risky, and what would destroy the engine in under an hour. They use AI to move faster where they already understand things, and trust their judgment where it matters most.

The second person follows the output. Some of it will be fine. Some will not. And they will not know which is which until it is too late.

AI accelerates expertise. It does not create it. And everyone in the room with real expertise can tell the difference.

That is the hidden cost. Not the subscription fee. Not the hours spent on prompts. The cost is what you reveal about yourself when you use a tool to project knowledge you have not yet built, in front of people who have built it and will know.

Conclusion

Founders who understand this use AI to go faster on things they already know. They invest in building genuine expertise. They write with friction, because friction is evidence of a mind actually working. The opportunity AI offers is real. The shortcut it appears to offer is not. Build expertise. Accumulate failures that teach you what success actually looks like. Develop judgment that no tool can replicate. Then use AI to go faster. In that order. Not the other way around.