TL;DR —

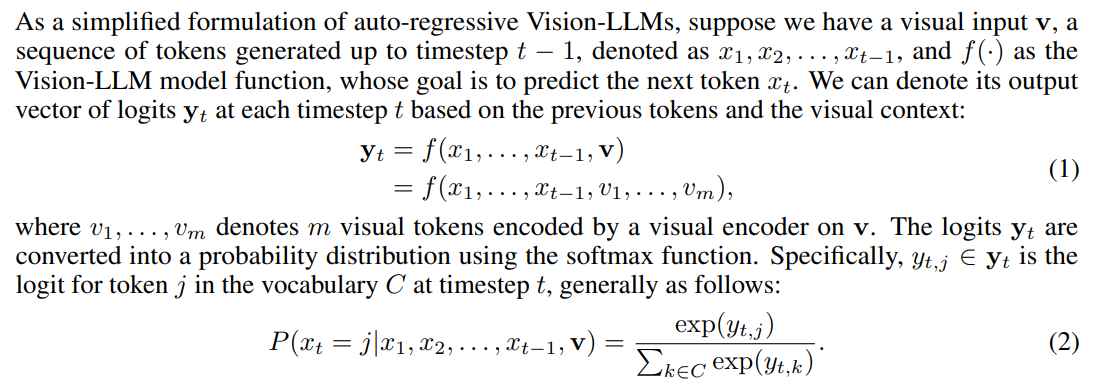

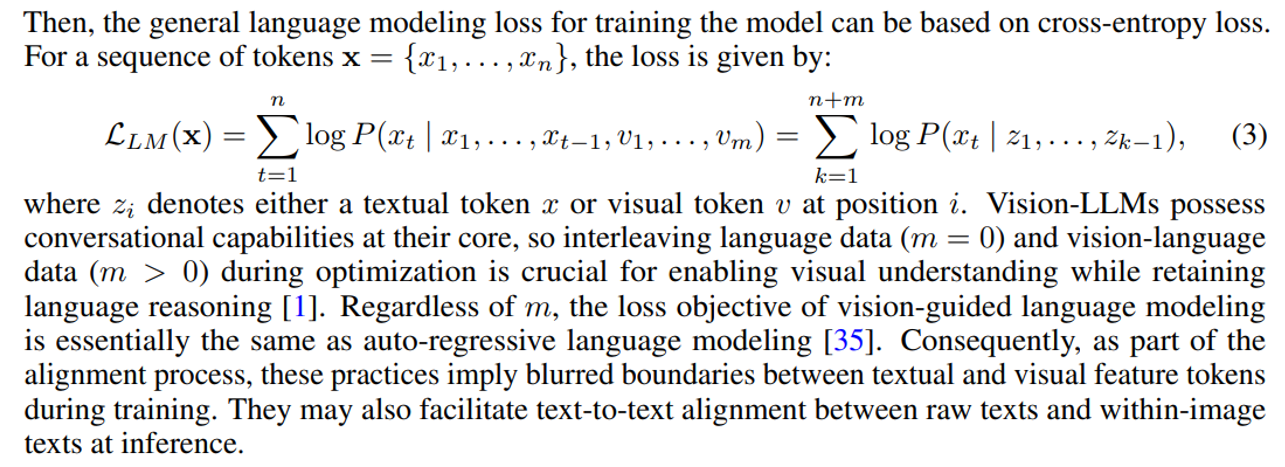

Explaining the role of logits and the softmax function in converting the output vector into a final probability distribution for the next token.
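To make the logits-to-probabilities step concrete, here is a minimal sketch. It assumes the common setup in which a linear output head maps the model's final hidden vector to one logit per vocabulary token, and softmax then normalizes those logits into next-token probabilities. The helper `project_to_logits`, the toy weight matrix `W`, the four-token `vocab`, the `temperature` parameter, and greedy argmax decoding are all hypothetical choices for illustration, not the authors' exact formulation.

```python
import math
from typing import Sequence


def project_to_logits(hidden: Sequence[float], weight: Sequence[Sequence[float]]) -> list[float]:
    """Hypothetical output head: one logit per vocabulary row, as a dot product with the hidden vector."""
    return [sum(w * h for w, h in zip(row, hidden)) for row in weight]


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list[float]:
    """Convert raw logits into a probability distribution over the vocabulary."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    # Subtracting the max logit avoids overflow in exp() and leaves the result unchanged.
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_greedy(probs: Sequence[float]) -> int:
    """Pick the index of the most probable token (greedy decoding, an assumed strategy)."""
    return max(range(len(probs)), key=probs.__getitem__)


# Toy example with a hypothetical 3-dim hidden state and a 4-token vocabulary.
vocab = ["a", "cat", "dog", "<eos>"]
hidden = [0.5, -1.0, 2.0]
W = [
    [1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0],
]

logits = project_to_logits(hidden, W)  # [0.5, 2.0, -1.0, 0.0]
probs = softmax(logits)
next_id = next_token_greedy(probs)
print(vocab[next_id])  # prints cat
```

Because softmax is monotonic, the token with the largest logit always receives the largest probability; in an auto-regressive model the chosen token would be appended to the input and the step repeated.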

Table of Links

- Related Work
- Preliminaries
- Methodology
- 4.1 Auto-Generation of Typographic Attack

3 Preliminaries

3.1 Revisiting Auto-Regressive Vision-LLMs

Authors:

(1) Nhat Chung, CFAR and IHPC, A*STAR, Singapore and VNU-HCM, Vietnam;

(2) Sensen Gao, CFAR and IHPC, A*STAR, Singapore and Nankai University, China;

(3) Tuan-Anh Vu, CFAR and IHPC, A*STAR, Singapore and HKUST, HKSAR;

(4) Jie Zhang, Nanyang Technological University, Singapore;

(5) Aishan Liu, Beihang University, China;

(6) Yun Lin, Shanghai Jiao Tong University, China;

(7) Jin Song Dong, National University of Singapore, Singapore;

(8) Qing Guo, CFAR and IHPC, A*STAR, Singapore and National University of Singapore, Singapore.

This paper is


Written by

@textgeneration

Text Generation

