"Don't use regular expressions, otherwise you'll have 2 problems instead of 1" - that's what the experts say. And what is left for the naughty ones who want to efficiently search through a huge number of templates?

Sure, there are cool solutions like Ragel or re2c for this specific problem. However, for my project it seemed impractical to master these fine technologies for the time being.

In this article, we will look at alternatives to the standard regexp library in Go and benchmark them for speed and memory consumption. We will also consider the differences between them from a practical point of view.

Analogues

At the moment, I've found the following working alternatives to the default regexp that can be used to find patterns in Go (the versions used in the benchmarks are given in brackets):

- go-re2 (1.3.0) - as simple as possible replaces the default regexp. Uses C++ re2 to improve performance when dealing with large inputs or complex expressions;

- regexp2 (1.10.0) - a feature-rich regexp engine for Go. It does not have runtime guarantees like the built-in regexp package, but is compatible with Perl5 and .NET;

- go-pcre (1.0.0) - provides support for Perl compatible regular expressions using libpcre or libpcre++. JIT compilation is available, making this fork much faster than its counterparts. On the downside, you'll need the libpcre3-dev dependency;

- rure-go (regex 1.9.3) - uses the Rust regex engine with CGo bindings. The downside is a Rust library dependency that needs to be compiled;

- gohs (1.2.2 + hs5.4.1) - regex engine designed for high performance. It is implemented as a library that provides a simple C-API. It also requires compilation and linking of third-party dependencies;

- go-yara - A tool for identifying and classifying malware samples. Although YARA has functionality for templating and regular expressions, it is very limited, so I will not include this library in the upcoming tests.

Existing benchmarks

Before we start comparing the aforementioned solutions, it is worth to show how bad things are with the standard regex library in Go. I found the project where the author compares the performance of standard regex engines of various languages. The point of this benchmark is to repeatedly run 3 regular expressions over a predefined text. Go came in 3rd place in this benchmark! From the end...

|

Language |

Email(ms) |

URI(ms) |

IP(ms) |

Total(ms) |

|---|---|---|---|---|

|

Nim Regex |

1.32 |

26.92 |

7.84 |

36.09 |

|

Nim |

22.70 |

21.49 |

6.75 |

50.94 |

|

Rust |

26.66 |

25.70 |

5.28 |

57.63 |

|

... |

... |

... |

... |

... |

|

Python PyPy3 |

258.78 |

221.89 |

257.35 |

738.03 |

|

Python 3 |

273.86 |

190.79 |

319.13 |

783.78 |

|

Go |

248.14 |

241.28 |

360.90 |

850.32 |

|

C++ STL |

433.09 |

344.74 |

245.66 |

1023.49 |

|

C# Mono |

2859.05 |

2431.87 |

145.82 |

5436.75 |

As far as I can tell, benchmarking regex engines is not the easiest topic, because you need to know implementation and algorithm details for a correct comparison. From other benchmarks I can highlight the following:

-

Benchmarks Game - comparison of engines with optimized versions for each language. For example, Go is no longer at the bottom, but it is still far from ideal there... And of course it does not use a native library, but a wrapper over PCRE - go-pcre, which was specified in analogues.

-

Performance comparison of regular expression engines - Comparison of different regex engines (PCRE, PCRE-DFA, TRE, Oniguruma, RE2, PCRE-JIT).

-

A comparison of regex engines - here the author tries to compare engines using different regular expressions, which can complicate things depending on the engine implementation. In this benchmark, the author's top three engines are: Hyperscan, PCRE (with JIT compilation) and Rust regex (rure uses it):

Benchmark #1

Now let's try to compare the analogues with default regex engine libraries of other languages. And also see how much faster they are compared to the default Go regex. To do this, I updated the above project by adding new libraries code. Here is what I got after running it on my machine:

|

Language |

Email(ms) |

URI(ms) |

IP(ms) |

Total(ms) |

|---|---|---|---|---|

|

Go Rure |

2.61 |

2.11 |

3.33 |

8.05 |

|

C++ SRELL |

1.74 |

3.03 |

10.67 |

15.45 |

|

Rust |

11.66 |

1.70 |

5.13 |

18.48 |

|

Nim |

13.98 |

14.35 |

3.13 |

31.46 |

|

PHP |

14.43 |

14.63 |

4.87 |

33.93 |

|

Go PCRE |

14.18 |

14.98 |

6.21 |

35.37 |

|

C# .Net Core |

10.83 |

5.10 |

26.71 |

42.64 |

|

Javascript |

42.78 |

30.17 |

0.92 |

73.87 |

|

Go Re2 |

35.81 |

37.86 |

33.79 |

107.46 |

|

Go Hyperscan |

90.17 |

31.64 |

8.68 |

130.49 |

|

Perl |

92.51 |

66.42 |

22.51 |

181.44 |

|

Dart |

73.48 |

63.60 |

80.52 |

217.59 |

|

C PCRE2 |

110.87 |

100.57 |

10.50 |

221.94 |

|

Kotlin |

120.31 |

163.53 |

293.69 |

577.53 |

|

Java |

205.14 |

201.55 |

295.68 |

702.36 |

|

Python 3 |

246.26 |

176.01 |

303.08 |

725.34 |

|

C++ STL |

328.87 |

273.43 |

230.35 |

832.64 |

|

Go |

270.19 |

275.73 |

504.79 |

1050.71 |

|

Go Regexp2 |

1703.51 |

1482.60 |

64.46 |

3250.57 |

|

C# Mono |

2543.82 |

2139.44 |

110.37 |

4793.64 |

Ps. Shortened the table a bit, so please check the project fork to see all the results.. Pss. In this case, virtualization might have affected the results (the tests were performed in a Docker environment on Windows 11).

Still, you can see how much faster regexps can be with some libraries! We even outperformed Rust with a Go library that uses the Rust library 🥴🙆🏼♂️. Perhaps this is what the author of this solution is trying to explain to us in his repository.

As a result, almost all alternative solutions give us a speedup of 8-130 times! Except for Regexp2, which turns out to be slower than the standard library.

Benchmark #2

Questions

While studying the existing benchmarks and the results of Benchmark#1, I was lacking the answers to the following questions:

- How fast do the above libraries process large files?

- How much faster is the processing when grouping regular expressions?

- How fast will regular expressions with no matches in the text be processed?

- How much memory do the different libraries use?

- How many regexps will I be able to compile using grouping?

Benchmarker differences

To answer these questions, I wrote a small benchmarking program that can be used to compare different regex engines in terms of speed and memory usage. If you want to test it yourself or evaluate the correctness of the used methods, here is the code.

The following features of this benchmark are worth mentioning:

- In the tests below, I used 5 different regular expressions:

allRegexps["email"] = `(?P<name>[-\w\d\.]+?)(?:\s+at\s+|\s*@\s*|\s*(?:[\[\]@]){3}\s*)(?P<host>[-\w\d\.]*?)\s*(?:dot|\.|(?:[\[\]dot\.]){3,5})\s*(?P<domain>\w+)`

allRegexps["bitcoin"] = `\b([13][a-km-zA-HJ-NP-Z1-9]{25,34}|bc1[ac-hj-np-zAC-HJ-NP-Z02-9]{11,71})`

allRegexps["ssn"] = `\d{3}-\d{2}-\d{4}`

allRegexps["uri"] = `[\w]+://[^/\s?#]+[^\s?#]+(?:\?[^\s#]*)?(?:#[^\s]*)?`

allRegexps["tel"] = `\+\d{1,4}?[-.\s]?\(?\d{1,3}?\)?[-.\s]?\d{1,4}[-.\s]?\d{1,4}[-.\s]?\d{1,9}`

In a good way, I should have used tricky regular expressions, like other benchmark authors do - to check the "weaknesses" of the algorithms. But I don't have much insight into the under-the-hood details of engines, so I used general-purpose regexps. That's why I think it should be possible to evaluate different parameters of libraries from a practical point of view.

- Instead of a static file, we will use a string containing our matches, repeated many times in memory to simulate files of different sizes:

var data = bytes.Repeat([]byte("123@mail.co nümbr=+71112223334 SSN:123-45-6789 http://1.1.1.1 3FZbgi29cpjq2GjdwV8eyHuJJnkLtktZc5 Й"), config.repeatScanTimes)

- In addition to running the regexps sequentially over the data, there will be a separate run with named grouping of regular expressions, where they will take the following form:

`(?P<name_1>regexp_1)|(?P<name_2>regexp_2)|...|(?P<name_N>regexp_N)`

By the way, Hyperscan has a special functionality where we can build a database of regexps and use it against data. In the benchmarks I will use this method.

- Unlike in Benchmark#1, for each regexp I will measure the time to find the result, without taking into account the compilation time;

- In the end, we will get the results for each library and each regexp in the following form:

Generate data...

Test data size: 100.00MB

Run RURE:

[bitcoin] count=1000000, mem=16007.26KB, time=2.11075s

[ssn] count=1000000, mem=16007.26KB, time=62.074ms

[uri] count=1000000, mem=16007.26KB, time=69.186ms

[tel] count=1000000, mem=16007.26KB, time=83.101ms

[email] count=1000000, mem=16007.26KB, time=172.915ms

Total. Counted: 5000000, Memory: 80.04MB, Duration: 2.498027s

...

1-2. Processing large files and grouping regular expressions

By "large file" I mean the amount of data generated from our predefined string in memory equal to 100MB. Of course it's hardly a big file, but I didn't want to wait hours for results in some cases 😅.

Also, it makes sense to try grouping all regular expressions into one and see how much it affects performance. The hypothesis is that a sequential run of regexps over the data should take longer than a single run with a grouped regexp.

Well, let's run the benchmark and see how fast each library processes the data. You can do this with my tool as follows:

# Bench all libs, repeat the line 1000000 times (100MB)

regexcmp 1000000

# Bench individual lib

regexcmp 1000000 rure

# See all options

regexcmp -h

As a result, we have the following data:

|

Lib |

|

Tel |

URI |

SSN |

Bitcoin |

Total |

Total-group |

|---|---|---|---|---|---|---|---|

|

Rure |

0.173s |

0.083s |

0.069s |

0.062s |

2.11s |

2.49s |

10.13s |

|

PCRE |

49.5s |

0.367s |

2.92s |

2.07s |

2.19s |

57.07s |

9.94s |

|

Re2 |

0.954s |

0.63s |

0.956s |

0.945s |

1.05s |

4.54s |

3.24s |

|

Hyperscan |

0.469s |

1.09s |

0.669s |

0.174s |

1.97s |

4.38s |

4.97s |

|

Regexp2 |

126.4s |

1.02s |

21.51s |

2.63s |

3.38s |

154.9s |

20.65s |

|

Go regexp |

11.22s |

1.52s |

3.62s |

2.66s |

3.32s |

22.36s |

26.49s |

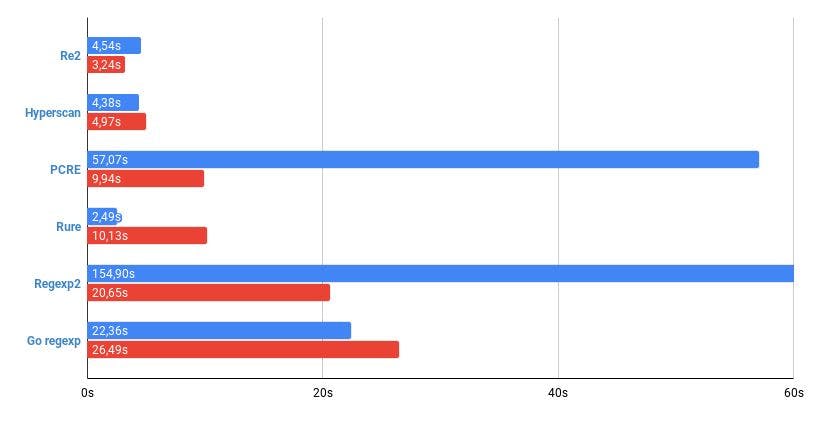

The plot below shows the processing time of 100MB of data by all regexps in sequential mode and using grouping:

Conclusions:

- Grouping can indeed significantly improve execution speed, but in some cases it can make it worse :);

- The fastest in sequential processing turned out to be - Rure, with grouping - Re2;

- Certain regexps may cause problems with some libraries (time to find

emailin Regexp2 and PCRE); - Now it is hard to say that some solutions are 180 times faster than the standard library, the maximum gain is x8-9.

3. Non-matching regexps

In the previous case, we simulated the ideal situation where there are always matches in the data. But what if there are no hits for regexp in the text, how much will that affect performance?

In this test, I additionally added 5 modified regexps for SSN that do not match the data. In this case, SSN means \d{3}-\d{2}-\d{4} regexp, and Non-matching - \d{3}-\d{2}-\d{4}1. Not a big difference? But let's see how it affects the time it takes to find all the matches:

|

Lib |

SSN |

Non-matching |

Total |

Total-group |

|---|---|---|---|---|

|

Rure |

0.06s |

0.083s |

2.93s |

15.68s |

|

PCRE |

2.06s |

4.21s |

81.02s |

13.52s |

|

Re2 |

0.960s |

0.449s |

6.75s |

3.26s |

|

Hyperscan |

0.175s |

0.043s |

4.61s |

4.94s |

|

Regexp2 |

2.73s |

3.09s |

171.1s |

15.72s |

|

Go regexp |

2.66s |

2.57s |

35.13s |

37.66s |

The plot below shows the time taken to process all 10 regexps, sorted by Non-matching processing time:

Conclusions:

- This time is the same: the fastest in sequential processing was - Rure, with grouped expressions - Re2;

- PCRE is once again different, with 2x time to process

non-matchingregexps in sequential mode; - Some algorithms are much faster when there are no matches (Re2, Hyperscan);

4. Memory consumption

Now let's see how much memory the different solutions consume when processing a 100MB file. Below, I have provided the results for each individual regexp and the total amount of memory consumed:

|

Lib |

Email, MB |

Tel, MB |

URI, MB |

SSN, MB |

Bitcoin, MB |

Non-matching, KB |

Total, GB |

Total-group, GB |

|---|---|---|---|---|---|---|---|---|

|

Rure |

16 |

16 |

16 |

16 |

16 |

0.0001 |

0.08 |

0.08 |

|

PCRE |

186 |

186 |

186 |

186 |

186 |

0.38 |

0.93 |

0.9 |

|

Re2 |

254 |

254 |

254 |

255 |

254 |

100473 |

1.77 |

0.97 |

|

Hyperscan |

314 |

314 |

314 |

314 |

314 |

0.68 |

1.57 |

1.7 |

|

Regexp2 |

997 |

854 |

854 |

854 |

902 |

396006 |

6.44 |

5.23 |

|

Go regex |

191 |

144 |

144 |

160 |

208 |

3.62 |

0.78 |

1.8 |

The graph below shows the memory used by the libraries to process 10 regexps (as in the last test), sorted by the time of `non-mathcing':

Conclusions:

- Rure surprises with its practically zero memory consumption;

- Regexp2 is resource hungry, consuming significantly more memory than its competitors;

- Re2, despite its speed, is not the most memory-efficient solution;

- Go regex is on the good side, with relatively low costs in sequential mode.

5. Maximum number of regexps

The main questions seem to be answered. Now let's see the maximum number of regexps we can compile with different solutions. In this case we will take a single regexp and repeat it many times in groups.

For this purpose I will use the URI regexp:

`[\w]+://[^/\s?#]+[^\s?#]+(?:\?[^\s#]*)?(?:#[^\s]*)?`

Next, I've listed the results of compiling the regexps, along with the memory they use. The numbers in the first line are the number of URI expressions in the group:

|

Lib |

100 |

250 |

500 |

1000 |

5000 |

10000 |

|---|---|---|---|---|---|---|

|

Rure |

0.38MB |

❌ |

❌ |

❌ |

❌ |

❌ |

|

PCRE |

0.40MB |

2.37MB |

❌ |

❌ |

❌ |

❌ |

|

Re2 |

7.10MB |

15.51MB |

38.35MB |

92.79MB |

1181MB |

❌ |

|

Hyperscan |

0.03MB |

0.06MB |

0.12MB |

0.25MB |

1.21MB |

2.52MB |

|

Regexp2 |

1.06MB |

3.96MB |

12.56MB |

43.93MB |

926MB |

3604MB |

|

Go regex |

1.29MB |

4.52MB |

14.02MB |

47.18MB |

942MB |

3636MB |

Conclusions:

- As we can see, some solutions have limitations on the size of compiled regexps;

- Hyperscan not only allows to use a large number of regexps, but also uses minimal memory for compiled regexps;

- Regexp2 and Go Regex have comparable memory consumption and also allow to compile a large number of regexps;

- Re2 consumes the most memory at compile time.

Conclusion

I hope it was useful for you to learn about alternative solutions for regexps in Go, and based on the data I presented, everyone will be able to draw some conclusions for themselves, which will allow you to choose the most suitable regex solution for your situation.

We can compare these libraries, the algorithms they use, their best/worst sides in details and for a long time. I just wanted to show the difference between them in the most general cases.

Thus, I would advise you to look at rure-go for maximum speedup of regexps, but if you need the easiest library installation without dependencies, that is go-re2. And in the case of handling a large number of regexps hyperscan would be a good choice. Also, don't forget the cost of using CGo in some libraries.

As for me, I will definitely use these "findings" in my future project :).