TL;DR —

A simplified guide to understanding Theorem 5’s proof in RL, breaking down complex concepts for beginners.

Authors:

(1) Jongmin Lee, Department of Mathematical Science, Seoul National University;

(2) Ernest K. Ryu, Department of Mathematical Science, Seoul National University and Interdisciplinary Program in Artificial Intelligence, Seoul National University.

1.1 Notations and preliminaries

2.1 Accelerated rate for Bellman consistency operator

2.2 Accelerated rate for Bellman optimality opera

5 Approximate Anchored Value Iteration

6 Gauss–Seidel Anchored Value Iteration

7 Conclusion, Acknowledgments and Disclosure of Funding and References

D Omitted proofs in Section 4

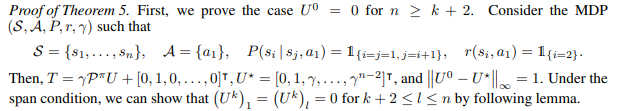

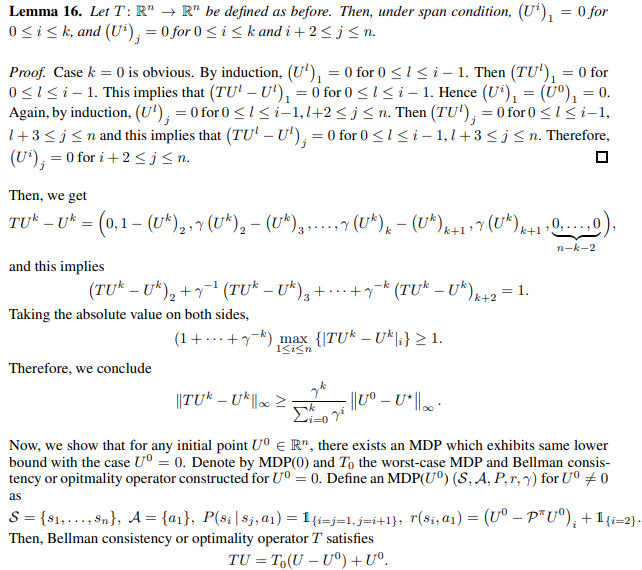

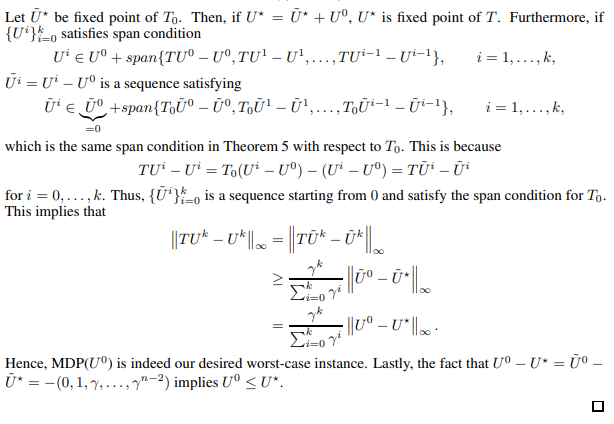

We present the proof of Theorem 5.

This paper is available on arxiv under CC BY 4.0 DEED license.

[story continues]

Written by

@anchoring

Anchoring provides a steady start, grounding decisions and perspectives in clarity and confidence.

Topics and

tags

tags

reinforcement-learning|dynamic-programming|nesterov-acceleration|machine-learning-optimization|value-iteration|value-iteration-convergence|bellman-error|reinforcement-learning-proofs

This story on HackerNoon has a decentralized backup on Sia.

Transaction ID: mieMXzBF0xhi5IEGU8dzXxbcK0yYrwr63kkwi9N0Z7k