Navigating the Nuances of Median Statistics in Sliding Windows

Following the whirlwind success of our previous exploration into the algorithmic enigmas of coding challenges, it's time to delve into another intriguing puzzle that has garnered attention in coding interviews and beyond. Today, we're shifting our focus from the tangible geometry of cube surfaces to the abstract realms of statistics, specifically, the calculation of medians within a sliding window. But what exactly is a median, and why does it hold more significance than the average in certain scenarios?

A median represents the middle value in a dataset when arranged in ascending order. Unlike the mean, which sums all values and divides by their count, the median is less susceptible to outliers—a property that renders it invaluable in datasets with high variance and a low signal-to-noise ratio, such as those found in financial markets. The formula for the median in a sorted list of numbers depends on the count being odd or even: for an odd count, it's the middle number; for an even count, it's the average of the two middle numbers.

Let's be real: when it comes to slicing and dicing data over time, window-based stats are pretty much the superhero of the story. Whether in high-frequency trading (HFT), machine learning, time series analysis, or monitoring systems, understanding the dynamic median of a dataset over a specified window offers insights that static analysis could miss.

The Problem: Median in a Sliding Window

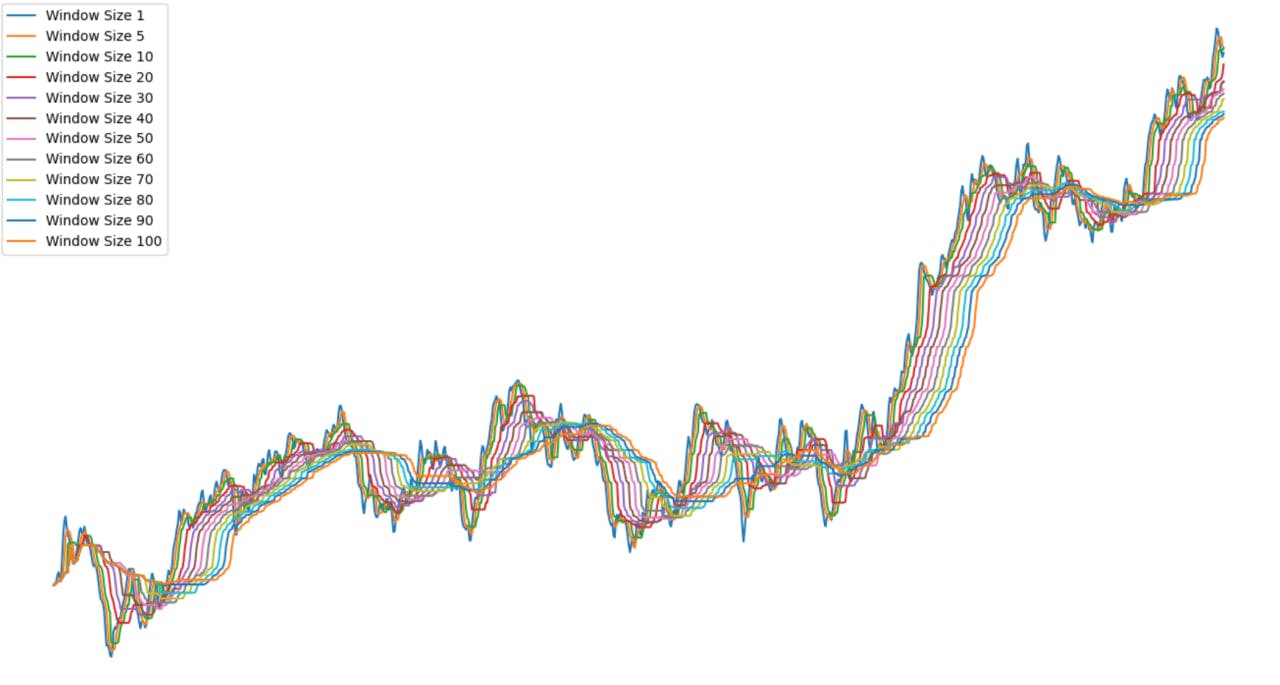

Consider a sliding window moving across a series of numerical data points. Within this window, we aim to calculate the median of the data points at each step, providing a continuous view of the dataset's central tendency as the window progresses. This task is not only a staple of coding interviews but also finds practical application in fields as diverse as HFT, machine learning, and real-time data analysis.

Example:

Given the input series [ -7, -7, -6, 1, -6, -5, -10, 4, 6, 8], with a window size of 3, the medians are [-7.0, -6.0, -6.0, -5.0, -6.0, -5.0, 4.0, 6.0]

This task's relevance extends far beyond the confines of coding challenges, impacting various domains such as HFT, where accurate and timely data analysis can yield significant financial outcomes, machine learning models that depend on robust feature extraction from noisy datasets, and the critical evaluation of trends in time series data, vital for forecasting and anomaly detection. The application of such an algorithm in real-world scenarios underscores the overarching importance of median statistics in sliding windows, offering a more reliable measure of central tendency amidst data fluctuations.

As we proceed, this article will unravel the intricacies of calculating medians in sliding windows, a testament to the ongoing need for adept problem-solving and algorithmic prowess in the ever-evolving field of software development and data analysis. Stay tuned as we dissect the problem, outline a solution, and delve into the practical applications of this fascinating statistical challenge.

Essential Data Structures: Binary Heaps, Queues, and Lazy Deletion Dictionaries

To address the median in a sliding window challenge, we deploy an arsenal of sophisticated data structures, each serving a unique role in the algorithm's design and functionality. Here's a closer look at these components:

- Binary Heaps: At the core of our solution are two types of binary heaps: the min-heap and the max-heap. A binary heap is a complete binary tree where each node is smaller (min-heap) or larger (max-heap) than its children, ensuring the smallest or largest element is always at the root. Picture a binary heap as a family tree where each parent is either the runt (in a min-heap) or the giant (in a max-heap) compared to their kids. This property is key to efficiently managing the data within the sliding window, allowing quick access to the median. The max-heap is used to store the lower half of the numbers in the window, ensuring quick access to the smaller values, while the min-heap holds the upper half, managing the larger values. This division enables us to efficiently locate the median value by looking at the roots of these heaps.

- Queue: This data structure operates on a first-in, first-out (FIFO) principle, making it perfect for tracking the order of elements as they enter and exit the sliding window. As new data points arrive, they're enqueued (added to the rear), and as the window moves, the oldest data points are dequeued (removed from the front). This mechanism ensures we always know which element to remove from our binary heaps when it exits the window.

- Dictionary for Lazy Deletions: Lazy deletion is an optimization technique used to handle the removal of elements from our heaps. Instead of immediately removing an element from a heap when it exits the window, we mark it for deletion in a dictionary. This approach allows us to defer the actual removal until necessary (i.e., when the element reaches the root of the heap), reducing the overhead associated with heap operations. The dictionary tracks the count of how many times each element is marked for deletion, enabling efficient management of duplicates and ensuring that our heaps always reflect the current state of the sliding window.

By integrating these data structures, we construct a robust framework capable of dynamically calculating medians in a sliding window, balancing efficiency with accuracy. This strategic assembly not only facilitates the median calculation but also exemplifies the power of data structure synergy in solving complex algorithmic problems.

The crux of the median calculation lies in maintaining a balanced view of the data as it changes dynamically. By keeping half of the elements in a max-heap and the other half in a min-heap, we can efficiently calculate the median by examining the tops of these heaps. This approach adapts well to sliding window scenarios, where data points continuously enter and exit the window, necessitating real-time updates to the median calculation.

Algorithmic Approach: Building a Composite Data Structure

Our solution architecture combines the aforementioned data structures to form a composite structure capable of efficiently solving our median calculation problem. This setup involves:

- Adding Elements: When a new element arrives, we determine which heap to add it to based on its value relative to the current median. This step might ruffle some feathers or, well, disturb the heap harmony for a sec.

- Rebalancing Heaps: To ensure the heaps remain balanced (i.e., have the same number of elements or differ by at most one), we may need to move elements between them after adding a new element or removing an old one.

- Removing Old Elements: As the window slides, the oldest element (the one that exits the window) must be removed. We use the lazy deletion strategy here, marking elements for deletion in our dictionary and physically removing them from the heaps only when they reach the top, thereby avoiding unnecessary reheapifications.

- Lazy Cleaning: This step involves removing the elements marked for deletion from the heaps, ensuring that our median calculation remains accurate and our data structure does not grow unnecessarily large.

Working with the Composite Structure

Implementing this composite structure involves careful management of each component:

-

Initialization: Setting up the min-heap, max-heap, queue, and lazy deletion dictionary.

An important aspect of our approach that readers should note is the use of a simple yet effective trick for converting between max heaps and min heaps. We achieve this by applying a unary minus to the elements before adding them to the heap. This allows us to use Python's standard min-heap provided by

heapqas a max heap, by adding and removing elements with their sign inverted. This approach significantly simplifies managing the two heaps to maintain the median in a sliding window, facilitating the process of adding new elements, balancing the heaps, and adjusting the median as elements are added or removed. -

Warm-up: Populating our data structure with an initial set of elements to start the median calculation process.

-

Calculating the Median: Efficiently determining the median based on the current state of the min-heap and max-heap.

-

Rebalancing: Adjusting the heaps to maintain balance after adding or removing elements.

-

Element Addition and Removal: Adding new elements to the appropriate heap based on their value relative to the median, and removing elements from the queue (and marking them for lazy deletion) as they exit the window.

-

Lazy Cleanup: Periodically executing lazy deletion to remove marked elements from the heaps, ensuring the efficiency and accuracy of the median calculation.

Through this comprehensive approach, we address the challenges of calculating the median in a dynamic, sliding window environment, showcasing the power of combining simple data structures to solve complex algorithmic problems. As we continue, we will delve deeper into the specifics of each component within the MedianWindow class, providing a detailed guide to its implementation and usage.

The Code: MedianWindow Class Implementation

Having explored the theoretical underpinnings and the essential data structures that make our solution viable, it's time to present the implementation of the MedianWindow class. This class is the cornerstone of our approach, embodying the algorithmic strategy we've outlined to dynamically calculate medians within a sliding window of data points.

import heapq

class MedianWindow:

def __init__(self, win_size):

self.win_size = win_size

self.min_heap = []

self.max_heap = []

self.queue = deque([])

self.heap_dict = defaultdict(int)

self.balance = 0

def warmup(self, batch):

for x in batch:

self.queue.append(x)

heapq.heappush(

self.max_heap,

-x

)

heapq.heappush(

self.min_heap,

-heapq.heappop(self.max_heap)

)

if len(self.min_heap) > len(self.max_heap):

heapq.heappush(

self.max_heap,

-heapq.heappop(self.min_heap)

)

@property

def median(self):

if self.win_size % 2 == 1:

return -self.max_heap[0]

else:

return (-self.max_heap[0] + self.min_heap[0]) / 2

def rebalance(self):

if self.balance < 0:

heapq.heappush(

self.max_heap,

-heapq.heappop(self.min_heap)

)

elif self.balance > 0:

heapq.heappush(

self.min_heap,

-heapq.heappop(self.max_heap)

)

def push(self, x):

self.queue.append(x)

oldest = self.queue[0]

self.balance = -1 if oldest <= self.median else 1

if x <= self.median:

self.balance += 1

heapq.heappush(

self.max_heap,

-x

)

else:

self.balance -= 1

heapq.heappush(

self.min_heap,

x

)

self.rebalance()

def should_remove_max_heap_top(self):

h = self.max_heap

return h and self.heap_dict[-self.max_heap[0]] > 0

def should_remove_min_heap_top(self):

h = self.min_heap

return h and self.heap_dict[self.min_heap[0]] > 0

def lazy_clean(self):

while self.should_remove_max_heap_top():

self.heap_dict[-self.max_heap[0]] -= 1

heapq.heappop(self.max_heap)

while self.should_remove_min_heap_top():

self.heap_dict[self.min_heap[0]] -= 1

heapq.heappop(self.min_heap)

def pop(self):

prev_num = self.queue.popleft()

self.heap_dict[prev_num] += 1

self.lazy_clean()

return prev_num

Example of usage:

w = MedianWindow(k)

w.warmup(data[:k])

result = [w.median]

for i in range(k, len(data)):

w.push(data[i])

old_elem = w.pop()

result.append(w.median)

This implementation encapsulates the complexity of managing a sliding window of data points and efficiently calculating the median. The class utilizes binary heaps to maintain a running median, a queue to track the sliding window, and a dictionary for lazy deletions, illustrating a practical application of these data structures in solving a non-trivial algorithmic challenge.

Analyzing Time Complexity

Understanding the time complexity of our solution is crucial for assessing its efficiency and suitability for real-world applications. The MedianWindow class's operations can be analyzed as follows:

-

Initialization (

__init__): The constructor has a constant time complexity, O(1), as it merely sets up the initial state without processing any data. -

Warm-up (

warmup): This method has a time complexity of O(k log k), where k is the size of the sliding window. Each insertion into a heap is O(log k), and we perform k such insertions. -

Median Calculation (

median): Retrieving the median has a constant time complexity, O(1), since it involves returning the top element from one or both heaps, operations that do not depend on the size of the window. -

Rebalancing (

rebalance): The rebalance operation, invoked after adding or removing elements, maintains the heaps in a balanced state. This operation has a logarithmic time complexity, O(log k), due to the heap insertions and deletions. -

Adding Elements (

push): Adding a new element involves inserting it into one of the heaps and possibly rebalancing. The overall time complexity is O(log k) due to these heap operations. -

Removing Elements (

pop): The removal process has a logarithmic time complexity, O(log k), for similar reasons to the addition process. However, because of lazy deletion, the actual removal from the heap is deferred, potentially reducing the frequency of heap reorganizations. -

Lazy Cleanup (

lazy_clean): This operation's time complexity can vary. In the worst case, if many elements are marked for deletion, cleaning them out when they reach the top of the heap can take O(log k) per element. However, this cost is amortized over the operations that caused the elements to be marked, mitigating the impact on performance.

The efficiency of the MedianWindow class hinges on the logarithmic time complexity of heap operations, making it well-suited for handling sliding windows of data where the window size is significantly smaller than the total number of data points. This property is particularly beneficial in applications like high-frequency trading and real-time data analysis, where performance and timely data processing are paramount.

In the next section, we'll delve into the practical challenges one might encounter when implementing this algorithm and suggest potential optimizations and real-world applications, underscoring the practical relevance of mastering data structures and algorithms through the lens of the sliding window median problem.

Factors Affecting Performance

The efficiency of the MedianWindow algorithm hinges on several critical factors:

- Heap Operations: The core of our performance lies in the heap operations, primarily insertions and deletions, each with a logarithmic time complexity of O(log k), where k is the window size. Efficient management of these operations is key to maintaining the algorithm's overall performance.

- Lazy Deletion Strategy: Our approach to lazy deletion significantly impacts performance by deferring the removal of elements from the heaps until absolutely necessary. While this strategy reduces immediate computational overhead, its effectiveness is contingent on the frequency and distribution of elements marked for deletion.

- Window Size and Data Distribution: The size of the sliding window and the distribution of input data can affect performance. Larger windows increase the complexity of heap operations, and skewed data distributions may lead to unbalanced heaps, necessitating more frequent rebalancing operations.

Practical Challenges

To solidify understanding and encourage hands-on experimentation, here are some practical challenges for readers:

- Percentile Calculation: Enrich the algorithm's capabilities by enabling the calculation of any percentile, not just the median. This enhancement involves introducing a new parameter to adjust the algorithm accordingly. To tackle this challenge, consider how the current structure, based on min and max heaps, could be generalized or modified to provide efficient access to arbitrary percentiles. This extension not only broadens the algorithm's applicability but also challenges the reader to think about data structures in a more flexible and adaptable way.

- Memory Efficiency: Modify the MedianWindow class to improve memory efficiency when dealing with large datasets. Explore strategies to minimize the memory footprint of heap structures.

- Diverse Data Types: Extend the algorithm to support median calculations on datasets with non-numeric types, such as timestamps or custom objects, ensuring type consistency and appropriate comparison mechanisms.

Algorithmic Improvements and Optimization

While the MedianWindow algorithm demonstrates robust capabilities, there's always room for optimization:

- Heuristic Rebalancing: Implement a heuristic approach to rebalancing the heaps based on anticipated data patterns or historical distributions. This could reduce the number of rebalancing operations needed, enhancing performance.

- Batch Processing: For datasets where real-time processing is not critical, modify the algorithm to process data in batches. This can significantly reduce the computational overhead associated with heap operations.

- Parallel Processing: Explore the feasibility of parallelizing heap operations or the processing of incoming data points. While the inherently sequential nature of median calculation may limit parallelism, certain preprocessing tasks or the lazy cleanup operation might be parallelized to improve throughput.

- Alternative Data Structures: Investigate the use of alternative data structures, such as Fibonacci heaps or soft heaps, which might offer better amortized time complexities for certain operations, albeit at the cost of increased implementation complexity or specific trade-offs.

In concluding our exploration of the Dynamic Median Calculation within the Sliding Windows algorithm, we've not just tackled a complex problem but have also highlighted the critical thinking skills vital in modern data analysis. This endeavor goes beyond mere computation; it is an exercise in enhancing our algorithmic agility and intellectual flexibility.

Stay engaged as we continue to explore the vast frontiers of algorithmic development, each step bringing us closer to mastering the art of problem-solving in an ever-evolving digital world.