The vibe coding hype is winding down, because there are huge limitations to what large language models can achieve. Despite this, coding agents are getting pretty good. They can spin up new frontend pages, wire up APIs, and even build CI/CD configs. But anyone who has used them knows how inconsistent they are. The same prompt can work one time and break the next. They forget parts of your codebase, mix in deprecated SDKs, or just make stuff up.

The issue isn’t that the model is bad; it’s that it doesn’t know your context. Here’s the proof: https://cursed-lang.org/. Someone wrote a whole programming language using nothing but a coding agent. If that was possible, anything is.

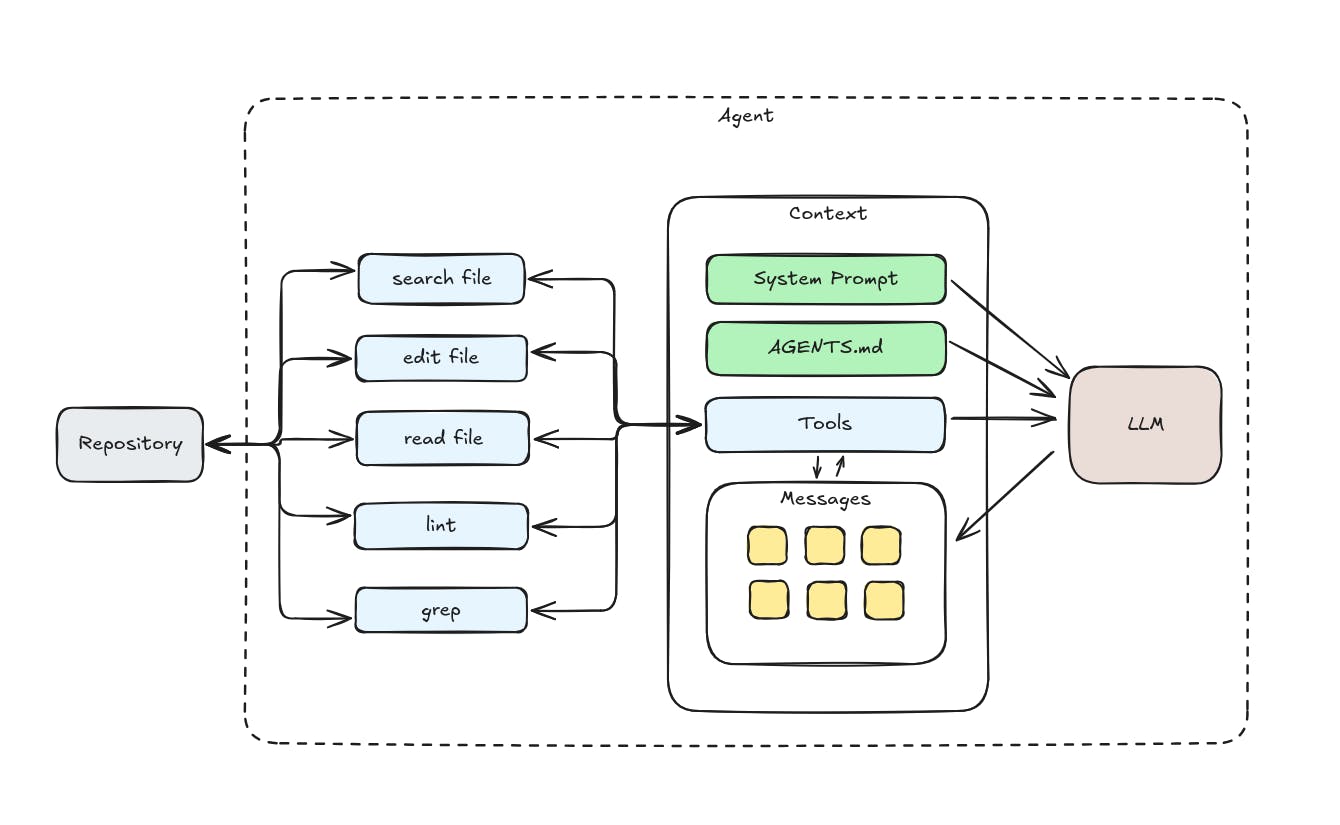

To get reliable output, you can’t just prompt better. You need to engineer the environment the agent works in — shape its inputs, give it structure, and set the right boundaries. That’s what’s called context engineering.

1. LLMs Don’t Think Like Engineers

LLMs don’t “understand” code the way we do. They predict tokens based on patterns in the input they’ve seen so far, not on how your app is actually built or structured. So even small changes in the prompt or surrounding files can change the output. That’s why one run passes tests and another breaks imports or forgets a semicolon.

Without structure, they’re like interns guessing your stack from memory.

You can’t fix this by yelling at the model (I can tell, I tried). You fix it by giving it the right scaffolding: the constraints and environment that guide it toward valid code every time.

2. Constraints Make Code Predictable

If you want the agent to behave consistently, constraints are your friend. They narrow the space of possible outputs and force the model to stay aligned with your setup.

Some useful constraints:

- Types: If you’re using TypeScript or JSON schemas, the agent can see exactly what shapes it needs to follow.

- Lint + format rules: Prettier, ESLint, or codegen rules make the output consistent without extra prompting.

- Smaller tasks: Instead of “build me a backend,” ask “add this route to `src/api/user.ts`.” Local scope = fewer surprises.

- Configs and templates: Tools like Typeconf and Varlock can predefine environment variables, SDKs, or any configuration patterns the agent must follow.

The trick is to make sure that you’re always writing code with strong types. You don’t lose flexibility — you just catch mistakes earlier and make behavior repeatable.
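As a minimal sketch of what strong types buy you, assume a hypothetical `CreateUserRequest` shape for a route in `src/api/user.ts`. The interface, its fields, and the `parseCreateUserRequest` helper are all made up for illustration. The point is that the agent and the compiler both see the exact shape, and malformed input is rejected at the boundary.

```typescript
// Hypothetical request shape for a route in src/api/user.ts (illustrative only).
interface CreateUserRequest {
  email: string;
  role: "admin" | "member";
}

type ParseResult =
  | { ok: true; value: CreateUserRequest }
  | { ok: false; error: string };

// Narrow unknown input to the typed shape, reporting the first problem found.
function parseCreateUserRequest(input: unknown): ParseResult {
  if (typeof input !== "object" || input === null) {
    return { ok: false, error: "body must be an object" };
  }
  const body = input as Record<string, unknown>;
  const email = body.email;
  const role = body.role;
  if (typeof email !== "string" || !email.includes("@")) {
    return { ok: false, error: "email must be a string containing '@'" };
  }
  if (role === "admin" || role === "member") {
    return { ok: true, value: { email, role } };
  }
  return { ok: false, error: "role must be 'admin' or 'member'" };
}
```

With a contract like this in the repo, an agent that invents a `role: "owner"` value gets a type error or a rejected request right away instead of a subtle bug later.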

3. Build Systems as Ground Truth

Even when the code looks fine, it often breaks at build time because the agent guessed wrong about how things are wired. Worse, the agent often doesn’t know how to build your code in the first place.

For example:

- You ask it to run tests, and it writes `npm test`, but your repo uses `pnpm` or `nx`.

- It tries to build a Dockerfile that doesn’t even exist in your actual environment.

- It imports a package that isn’t even installed.

The fix is to abstract the build system — give the agent a clear picture of how code is built, tested, and deployed. Think of it as an additional agent tool for your project’s environment.

Once the agent knows what “build” means in your world, it can use that knowledge instead of guessing.
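One way to sketch this is to derive the project’s commands from what is actually in the repo instead of letting the agent guess. The example below assumes the repo root file names are passed in as a list, and that `package.json` defines `test` and `build` scripts. The function names are hypothetical, and the npm fallback is a choice made for this sketch.

```typescript
type PackageManager = "npm" | "pnpm" | "yarn";

// Lockfile names each package manager writes to the repo root.
const LOCKFILES: Record<string, PackageManager> = {
  "pnpm-lock.yaml": "pnpm",
  "yarn.lock": "yarn",
  "package-lock.json": "npm",
};

// Pick the package manager from the files present in the repo root.
// Falls back to npm when no known lockfile is found (an assumption of this sketch).
function detectPackageManager(rootFiles: string[]): PackageManager {
  for (const [lockfile, pm] of Object.entries(LOCKFILES)) {
    if (rootFiles.includes(lockfile)) return pm;
  }
  return "npm";
}

// The commands the agent is told to use, instead of guessing.
interface BuildCommands {
  install: string;
  test: string;
  build: string;
}

// Assumes package.json defines "test" and "build" scripts.
function buildCommandsFor(pm: PackageManager): BuildCommands {
  return {
    install: `${pm} install`,
    test: `${pm} run test`,
    build: `${pm} run build`,
  };
}
```

The resulting `BuildCommands` object can be written into the agent’s instructions or exposed as a tool, so “run the tests” always resolves to the same command.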

Bazel, Buck, Nx, or even a well-structured package.json are already good foundations. The more of this information you surface, the fewer hallucinations you’ll deal with. If you want to go further, you can write your own tool hooks that stop the agent from calling the wrong build system. See the Claude Code hooks guide: https://docs.claude.com/en/docs/claude-code/hooks-guide.
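The decision logic inside such a hook could look roughly like the sketch below. It only covers the check itself. How the hook receives the command and reports its decision depends on the agent, so follow the guide for that part. `checkCommand`, `Decision`, and the list of managers are hypothetical names for illustration.

```typescript
type Decision = { allow: true } | { allow: false; reason: string };

// Package manager CLIs this sketch knows how to recognize.
const KNOWN_MANAGERS = ["npm", "pnpm", "yarn"];

// Reject shell commands that invoke a package manager other than the project's.
function checkCommand(command: string, projectManager: string): Decision {
  // Split on common shell separators so "cd app && npm test" is also checked.
  const segments = command.split(/&&|\|\||;|\|/);
  for (const segment of segments) {
    const executable = segment.trim().split(/\s+/)[0];
    if (KNOWN_MANAGERS.includes(executable) && executable !== projectManager) {
      return {
        allow: false,
        reason: `This repo uses ${projectManager}; do not run ${executable}.`,
      };
    }
  }
  return { allow: true };
}
```

Feeding the `reason` back to the agent matters as much as blocking the call, because it tells the model which command to try instead. Wrappers such as `npx` are not covered by this sketch.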

4. The Problem with Outdated Training

Most coding agents are trained on data that’s a year or two out of date. They’ll happily use APIs that no longer exist, or code patterns that everyone abandoned ages ago.

You’ve probably seen stuff like:

- Old React lifecycle methods

- Non-existent library functions

- Deprecated Next.js APIs

- npm commands for libraries that have since moved to new versions

You can’t rely on training alone. You need to bring your own context — real docs, real code, real configs.

Some ways to close the gap:

- Feed in the library docs, for example via MCP servers like Context7.

- Let the agent read actual code files and dependencies. Point it at node_modules; you’d be surprised how often that helps it get the API right.

- Add custom instructions to AGENTS.md telling the model to avoid a problematic pattern it keeps reaching for.

When the model knows what’s actually there, it stops hallucinating.
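A small example of bringing your own context: turn the dependency versions declared in `package.json` into a snippet you can paste into AGENTS.md or the prompt. This is a sketch that assumes the parsed `package.json` is already in memory. `describeDependencies` is a hypothetical helper, and the header wording is arbitrary.

```typescript
// Minimal slice of package.json this sketch reads.
interface PackageJson {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

// Render the declared dependency versions as a short block of text
// that can be pasted into the agent's instructions.
function describeDependencies(pkg: PackageJson): string {
  // Runtime dependencies win if a name appears in both maps.
  const all: Record<string, string> = {
    ...pkg.devDependencies,
    ...pkg.dependencies,
  };
  const lines = Object.keys(all)
    .sort()
    .map((name) => `- ${name}@${all[name]}`);
  return ["Declared dependencies (use the APIs of these versions):", ...lines].join("\n");
}
```

These are the declared ranges, not necessarily the exact installed versions. For exact versions you would read the lockfile or the packages in node_modules instead.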

5. Layers of Context

A good coding agent doesn’t just read your prompt — it reads the whole situation.

You can think of context in three layers:

Static context

Project structure, file layout, types, configs, build commands, dependencies.

Dynamic context

The current task, open file, error messages, test results, runtime logs.

External context

Docs, SDK references, changelogs, or snippets from the web when needed.

Combine all three, and the agent starts acting like someone who’s actually onboarded to your codebase — not a random freelancer guessing from memory.
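To make the three layers concrete, here is a minimal sketch that models them as a type and joins them into one labeled block of text. The `AgentContext` shape and the `assembleContext` function are hypothetical. Real agents assemble context in their own ways, so treat this as an illustration of the idea only.

```typescript
interface AgentContext {
  staticContext: string[]; // project structure, types, configs, build commands
  dynamicContext: string[]; // current task, errors, test results, logs
  externalContext: string[]; // docs, SDK references, changelogs
}

// Join the three layers into one labeled prompt, skipping empty layers.
function assembleContext(ctx: AgentContext): string {
  const sections: Array<[string, string[]]> = [
    ["Static context", ctx.staticContext],
    ["Dynamic context", ctx.dynamicContext],
    ["External context", ctx.externalContext],
  ];
  return sections
    .filter(([, items]) => items.length > 0)
    .map(([title, items]) => `## ${title}\n${items.join("\n")}`)
    .join("\n\n");
}
```

Keeping the layers separate makes it easy to refresh only the dynamic part (errors, test output) on each turn while the static part stays stable.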

6. Examples from the Real World

I’m currently building SourceWizard, a coding agent for automating integrations. When I started automating the WorkOS AuthKit integration, here’s a non-exhaustive list of the problems the model generated for me:

- Started using the deprecated `withAuth` and `getUser` APIs;

- Mixed frontend and backend logic (like using `useState` in server-side components);

- Generated incorrect environment variables;

- Installed the wrong package.

It goes without saying that the model also shuffled through all of the package managers: sometimes npm, sometimes pnpm, whatever was most interesting to the model at the time.

After I added constraints, directly specified what the agent should use, and supplied it with the latest API, the model started generating the integration code consistently.
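One kind of constraint that fits the problems above is a simple check over generated code before it is accepted. The sketch below is not how SourceWizard works; it is a hypothetical illustration. The regexes are rough, and the server-component check assumes the Next.js App Router convention of marking client components with a `"use client"` directive at the top of the file.

```typescript
interface BannedPattern {
  pattern: RegExp;
  message: string;
}

// Rules based on the problems listed above; the exact regexes are illustrative.
const BANNED: BannedPattern[] = [
  { pattern: /\bwithAuth\s*\(/, message: "withAuth is deprecated" },
  { pattern: /\bgetUser\s*\(/, message: "getUser is deprecated" },
];

// Return one message per rule the generated code violates.
function reviewGeneratedCode(source: string): string[] {
  const problems = BANNED.filter((rule) => rule.pattern.test(source)).map(
    (rule) => rule.message,
  );
  // Assumption: client components start with a "use client" directive.
  const isClientComponent = /^\s*["']use client["']/.test(source);
  if (/\buseState\s*\(/.test(source) && !isClientComponent) {
    problems.push("useState used in a server component");
  }
  return problems;
}
```

The returned messages can be fed straight back to the agent as dynamic context, which tends to work better than silently rejecting the output.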

The difference isn’t in the model — it’s in what it sees.

7. Context Is the New Interface

Most people still think about coding agents like chatbots: give a prompt, get an answer. But for real engineering work, the prompt is just one piece.

The real magic happens in the context — the files, types, commands, and feedback loops the agent can access. That’s what makes it useful.

In the future, we won’t just “talk” to coding agents — we’ll wire them into our build systems. They’ll understand our repos, know our tools, and follow the same rules as every other part of the stack.

Conclusion

Coding agents fail not because they’re dumb, but because they’re working blind.

If you want them to be reliable teammates, give them structure:

- Constraints that define how they should code;

- Build abstractions that show how your project actually runs;

- Up-to-date context so they stop using old patterns.

The better the context, the better the code.

You don’t just drop a new engineer into your repo and say “figure it out.” You onboard them. Coding agents are the same.