In 2026, the problem is no longer how to make more ads. It is how to stop making the wrong ones.

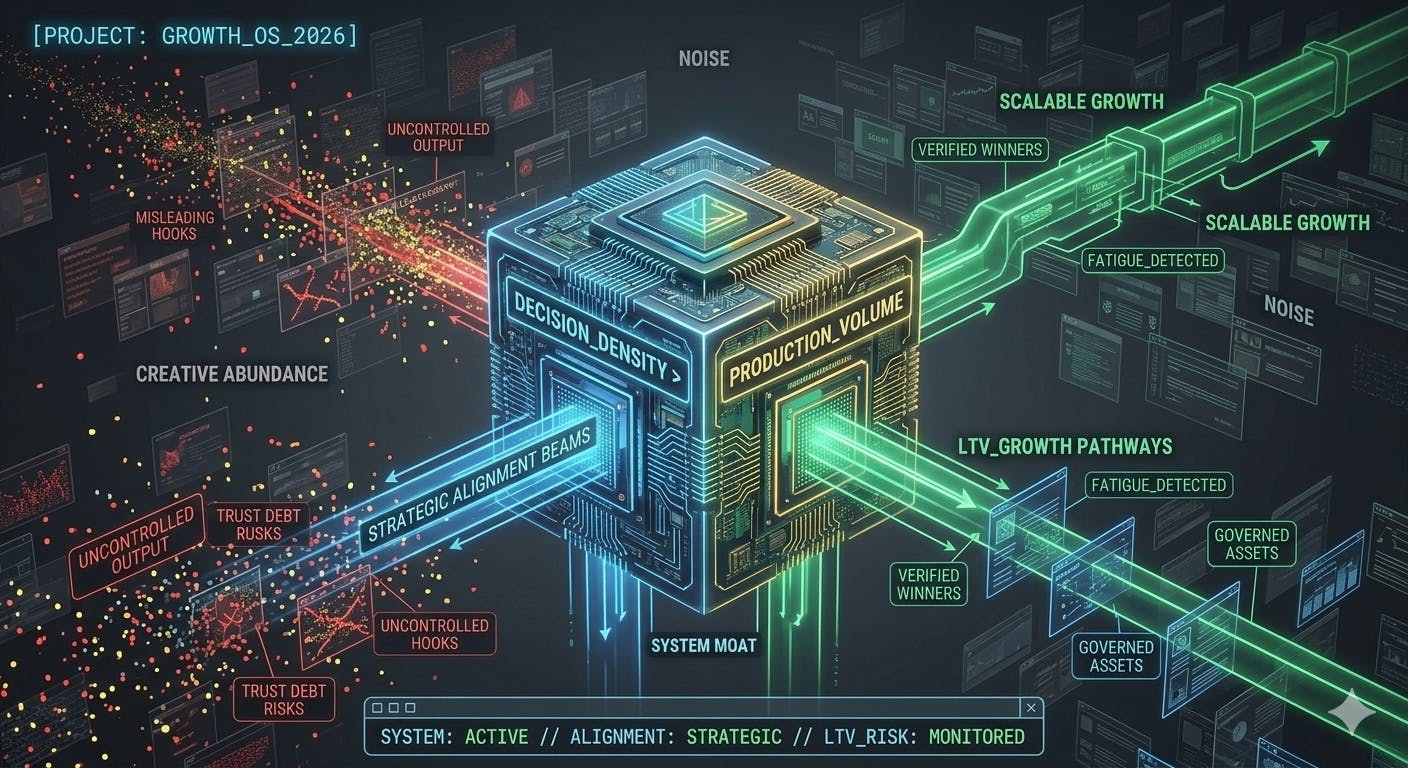

Why the competitive edge in AI-native performance marketing is shifting from production volume to decision quality, creative selection, and controllable scaling.

⚡ Quick Take:

- The Shift: Competitive advantage has moved from production volume (now cheap and abundant due to AI) to decision quality and selection logic.

- The Core Problem: Most teams are trapped in "micro-iterations" or "taste-driven illusions," wasting budget on ads that fatigue faster than they can be replaced.

- The Solution: Move to a new operating model that treats ads as managed, versioned assets within an engineered lifecycle.

- The New Moat: In 2026, growth belongs to the smallest, clearest systems that can convert creative abundance into disciplined economic decisions.

I. The New Decision-Making Problem

Most consumer teams can now generate more ads, more variants, more hooks, more edits, more formats, and more localised versions than they can meaningfully evaluate. Generative systems have pushed the marginal cost of production down so aggressively that the old operating logic of performance marketing has started to break. The question is no longer, “How do we make enough creatives to feed Meta, TikTok, Google, and the rest of the machine?” It is now far more operationally difficult: what deserves budget, what deserves iteration, what deserves to become a reference, and what should be killed before it quietly burns spend?

Recent research in advertising systems, creative evaluation, and AI-assisted generation increasingly points in the same direction: competitive advantage is shifting away from raw production volume and towards structured selection, fatigue detection, and controllable scaling.

This shift is exposing two common failures across the industry.

- The first is the micro-iteration trap. Some companies spend most of their budget recycling old winners, reworking proven formats, and adapting competitor ads, while allocating very little room for genuinely new strategic exploration. The result is a market full of creatives that look increasingly similar, move in circles, and fatigue faster than teams expect.

- The second is the taste-driven illusion. Other companies continue to make creative decisions through taste, instinct, or executive preference. They back ads because someone says, “I like this”, “it feels premium”, or “it fits the brand vision”, then wonder why beautifully made campaigns produce weak or negative returns.

Both models are increasingly fragile and unreliable.

In AI-native acquisition systems, creative is no longer just a campaign asset. It behaves more like a versioned, testable, degradable asset moving through an engineered lifecycle. Its value is temporal, comparative, and highly sensitive to context. Even modest improvements in selection, relevance, or persuasive fit can create meaningful economic advantage at scale.

That is why the question is no longer whether AI should be used in performance marketing. That debate is already stale. The real question is what operating model is required once content generation becomes abundant and cheap.

I would call that model Creative Performance Engineering.

II. What Creative Performance Engineering actually means

Creative Performance Engineering is the emerging discipline of treating performance ads as managed systems rather than a stream of isolated outputs. It sits at the intersection of growth, creative strategy, experimentation, design, and production operations. Its purpose is to govern the full creative lifecycle: how ideas are generated, how variants are constrained, how they are tested, how fatigue is detected, and how winners are scaled without damaging trust, clarity, or efficiency.

In practical terms, the model is simple:

“Strategy is slow, production is fast”

- Analyze (Historical data & signal quality)

- Hypothesize (Strategic tension vs. product truth)

- Make (High-velocity, structured execution)

- Test (Comparative offline/online filters)

- Kill/Scale (The economic decision point)

That may sound obvious, but most teams still operate through memory, taste, and inherited habit. They overproduce without constraints, test without comparative logic, keep weak creatives alive too long, and scale winners without a framework for decay. Recent research and practitioners' experience give us a more disciplined way to think about each stage.
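One way to make the five stages concrete is to model each creative as a record that can only move along permitted transitions. The sketch below is illustrative rather than a description of any existing tool: the stage names follow the list above, while `Creative`, `ALLOWED`, and `advance` are hypothetical names, and the rule that a scaled creative can return to testing is an assumption.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    ANALYZE = "analyze"
    HYPOTHESIZE = "hypothesize"
    MAKE = "make"
    TEST = "test"
    KILL = "kill"
    SCALE = "scale"


# Permitted moves. Assumption: a scaled creative can be re-tested or killed
# later because winners decay; a killed creative is terminal.
ALLOWED = {
    Stage.ANALYZE: {Stage.HYPOTHESIZE},
    Stage.HYPOTHESIZE: {Stage.MAKE},
    Stage.MAKE: {Stage.TEST},
    Stage.TEST: {Stage.KILL, Stage.SCALE},
    Stage.SCALE: {Stage.TEST, Stage.KILL},
    Stage.KILL: set(),
}


@dataclass
class Creative:
    creative_id: str
    hypothesis: str
    stage: Stage = Stage.ANALYZE
    history: list = field(default_factory=list)

    def advance(self, new_stage: Stage, reason: str) -> None:
        """Move to new_stage and record why; reject skipped or reversed stages."""
        if new_stage not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {new_stage.value} is not allowed")
        self.history.append((self.stage, new_stage, reason))
        self.stage = new_stage
```

The point of the `history` list is that every kill or scale decision carries a recorded reason, which is what separates a lifecycle from a folder of exports.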

Creative abundance without decision discipline is not a growth system. It is just a faster way to waste budget.

III. Architecture of an effective creative team

Before a creative can be tested, it has to be produced inside a system that does not collapse under its own volume.

This is where many organisations still lag. They speak fluently about CTR, ROAS, volume, and speed, but far less clearly about the operating model behind creative production. In practice, Creative Performance Engineering begins well before Ads Manager. It begins in team design, handoff logic, and the constraints that shape what gets made in the first place.

The strongest consumer teams increasingly operate through three linked layers: strategic interpretation, production execution, and performance judgement.

Strategic Interpretation

The first layer is owned by creative strategists, performance marketers, or creative leads. Their role is not to write every line or approve every frame. Their role is to define the system: which audience tensions are worth pursuing, which emotional angles are being tested, which proof points are credible, which message territories are exhausted, and which directions deserve volume.

Production Execution

The second layer belongs to producers, editors, motion designers, creative services leads, and AI-native builders. This is the execution layer. It turns hypotheses into assets repeatedly, under time pressure, across placements, geographies, and refresh cycles. In weaker teams, production behaves like a service desk. In stronger ones, it behaves like a modular factory with taste.

Performance Judgement

The third layer is performance judgement. This is where the system decides which creatives are merely well-made and which are commercially useful. That distinction matters. Many ads are polished. Far fewer deserve budget.

This is also why the best-performing creative is rarely the most visually impressive. More often, it is the creative where data signals and creative features align. Research in advertising optimisation increasingly supports this logic: performance improves when systems combine behavioural data with interpretable creative features rather than treating creative as an aesthetic black box.

Top-performing creative is usually built from a small set of repeatable ingredients:

a recognisable user tension, a legible product promise, one dominant emotional frame, fast cognitive processing in the first seconds, a proof mechanism that reduces disbelief, and a format native enough not to look like an advert before it has earned attention.

That sounds straightforward, yet many teams still complicate the wrong things. They add more people, more reviews, more references, and more aesthetic debate. What they often need instead is a smaller, sharper engine.

In my own work, I have seen compact teams outperform far larger creative organisations when the system was clear. A small unit of one UA manager, one creative producer, and one editor, all working on a single product, generated significantly more useful volume than a larger cross-product team serving multiple products. That was not because the individuals were inherently stronger. It was because the pipeline was cleaner, context-switching was reduced, the hand-offs were shorter, and decision-making was tighter.

Most startups and consumer companies do not need a large creative department to build a serious performance content machine. They need a high-clarity system. In many cases, three core functions are enough: one person to define hypotheses and message architecture, one person to turn those hypotheses into assets, and one person to interpret signal quality and feed decisions back into the system.

AI makes this small-team logic even more relevant. It does not make human teams obsolete. It simply means that production volume is no longer the moat. Judgement and strategic coherence are. Research comparing AI- and human-generated advertising has already reported that AI-generated ads can outperform human-created alternatives in persuasion preference tests, with one study reporting a 59.1% preference for AI-generated ads versus 40.9% for human-generated ones.

So the question is no longer, “How do we build a bigger team?”

It is, “How do we build a smaller system with better decision density?”

IV. Strategy as the Source of the Scalable Winner

Before a creative becomes a winner, it begins as a hypothesis.

Teams often discuss testing as if the main challenge were media execution or creative volume. They ask why a competitor remake did not work, or why a viral TikTok reference failed to convert. But the deeper problem sits upstream: “Where do valuable ideas come from, and why do some become scalable winners while others never move?”

A scalable winner is rarely just a high-performing ad. More often, it is the visible expression of a stronger underlying strategic pattern.

Weak teams generate tests by asking, “What else can we make?”

Stronger teams generate tests by asking, “What else can we learn?”

The purpose of strategy is not simply to produce campaign themes. It is to generate a renewable supply of hypotheses that can be tested, decomposed, recombined, and iterated into increasingly scalable forms.

In practice, winning tests tend to emerge from four sources.

Audience tension.

The best performance ideas rarely begin with format. They begin with tension: an unmet desire, a recurring frustration, a hesitation, a fear, a vanity trigger, a contradiction, or a feeling the user does not yet know how to articulate. In consumer apps, strong ads often work because the user recognises themselves in the logic of the message before they scroll away.

Product truth.

Weak systems drift too far into hooks and too far away from the product. They generate clicks, but not credible promises. That usually creates short-term response and weak downstream quality. Stronger teams understand the product, the onboarding, the funnel, the monetisation logic, and the experience itself. The scalable winner tends to be anchored in a product truth that can survive repetition.

Pattern inversion.

Some winners come not from following category norms, but from deliberately breaking them. In crowded categories, teams often end up copying the same hooks, the same testimonial pacing, the same pseudo-UGC grammar, and the same visual signals. That may work briefly, but it compresses the market. Strong strategy teams map category patterns, then look for strategic contrast: more proof where competitors rely on aspiration, more sincerity where others rely on irony, more restraint where others shout.

Winner decomposition.

Some of the best new tests come from taking an existing winner apart and asking what actually caused the response. Was it the opening tension, the framing of the promise, the proof device, the tonal register, the first three seconds, or the audience-product match? Mature teams treat winners not as finished artefacts, but as diagnostic objects.

A winner should not only be scaled. It should be dissected.

That dissection creates second-generation tests, and second-generation tests are often more valuable than the original winner because they reveal whether the underlying advantage is transferable.

New tests should therefore not be random. They should be directional.

A stronger system defines each iteration as a deliberate move against a previous learning: same tension, new proof; same proof, new emotional frame; same winner, new audience; same structure, lower-friction opening; same concept, stronger first-second signal.
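The "one deliberate move per iteration" idea can be checked mechanically. The sketch below assumes a creative can be described by five fields taken from the moves listed above. `CreativeSpec`, the field names, and the exactly-one-change rule are illustrative assumptions, not an industry standard.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class CreativeSpec:
    tension: str
    proof: str
    emotional_frame: str
    audience: str
    opening: str


def changed_dimensions(parent: CreativeSpec, child: CreativeSpec) -> list[str]:
    """Return the names of the fields where child differs from parent."""
    return [
        f.name
        for f in fields(CreativeSpec)
        if getattr(parent, f.name) != getattr(child, f.name)
    ]


def is_directional(parent: CreativeSpec, child: CreativeSpec) -> bool:
    """Assumed rule: a legible iteration changes exactly one dimension."""
    return len(changed_dimensions(parent, child)) == 1


# Placeholder labels, not real ad copy.
winner = CreativeSpec("tension_a", "proof_a", "frame_a", "audience_a", "opening_a")
same_tension_new_proof = replace(winner, proof="proof_b")
print(changed_dimensions(winner, same_tension_new_proof))  # ['proof']
```

A variant that changes two or more fields at once can still be shipped, but its result cannot be attributed to a single learning, which is the distinction the text draws between ten new ads and ten legible iterations.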

That is why iteration speed matters less than iteration quality. Shipping ten new ads is easy. Shipping ten strategically legible iterations is much harder, and much more useful.

In one consumer subscription product I worked on, the breakthrough did not come from inventing a completely new format. It came from decomposing an existing winner into its opening tension, proof structure, and emotional register, then rebuilding it across adjacent audience hypotheses. The result was not one more remake, but a more transferable creative system.

Another long-term winner emerged only after we stopped looking at the category and started looking at the product itself. The user journey, the onboarding logic, and the order in which features were revealed were materially different from what competitors were teaching the market to expect. Once the concept was rebuilt around the feature users encountered first, the creative stopped imitating the category and began reflecting the actual product logic. That was the point at which performance improved.

V. Make: Speed is cheap, strategic coherence is not

The first misconception of AI-era marketing is that more output automatically creates more opportunity. In reality, uncontrolled output creates noise.

Research suggests that structured ideation often outperforms looser forms of ideation, especially when the goal is originality without losing strategic alignment. Ideation templates can improve creative performance while preserving coherence, which is highly relevant for AI-native teams operating at volume.

That implies something very practical: if your team is producing dozens or hundreds of variations each week, the real leverage does not come from prompting harder. It comes from building a constraint layer that scales. That means structured briefs, message hierarchies, proof libraries, pattern banks, brand exclusions, compliance rules, and explicit definitions of what counts as a valid product promise.

This failure mode is familiar in real production environments. Once output velocity becomes a KPI in itself, teams start producing visual variation without informational variation. Different edits, different faces, different hooks, yet the same underlying message repeated in decorative wrappers. In practice, this is often where spending begins to leak.

In practice, many teams still do not set explicit strategic ratios for creative output. They ask for volume, but not for structure: how much should be allocated to reworks, competitor adaptations, localisation, audience expansion, or genuinely new concept development. Without that allocation logic, volume is not under control. Up to 80% of the teams I have met did not set specific targets or limits for their creative strategy. Others use a split of roughly 60% reworks, 20-25% competitor adaptations, and 15-20% new ideas. A split that makes return on ad spend more predictable would be 70-80% exploitation and 20-30% exploration. From a creative engine perspective, it makes sense to set a clear framework, test it, and then adjust it when conditions change: a new product, a new geo, creative fatigue, a new channel, and so on.
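A ratio only becomes a constraint once it is turned into a count of production slots. The sketch below does that arithmetic with integer percentages and largest-remainder rounding, so the counts always add up to the weekly total. The function name `allocate_slots`, the bucket labels, and the figure of 40 slots per week are assumptions for illustration; the 60/20/20 split is one point inside the ranges quoted above.

```python
def allocate_slots(total_slots: int, percent_by_bucket: dict[str, int]) -> dict[str, int]:
    """Split total_slots across buckets in proportion to integer percentages.

    Uses largest-remainder rounding so the counts sum exactly to total_slots.
    """
    if sum(percent_by_bucket.values()) != 100:
        raise ValueError("percentages must sum to 100")
    counts = {b: total_slots * p // 100 for b, p in percent_by_bucket.items()}
    remainders = {b: total_slots * p % 100 for b, p in percent_by_bucket.items()}
    leftover = total_slots - sum(counts.values())
    # Hand any remaining slots to the buckets with the largest remainders.
    for bucket in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        counts[bucket] += 1
    return counts


weekly_plan = allocate_slots(
    40, {"rework": 60, "competitor_adaptation": 20, "new_concept": 20}
)
print(weekly_plan)  # {'rework': 24, 'competitor_adaptation': 8, 'new_concept': 8}
```

Changing the split for a new product or geo is then a one-line edit to the percentages rather than a renegotiation of the brief.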

Research on multimodal co-creation systems reinforces this point. Creative systems become more usable and more effective when free-form prompting is replaced with structured input layers around clear volume, branding, goals, audience, and inspiration. The lesson is wonderfully unglamorous: ambiguity upstream creates waste downstream.

In other words, the future is not promptcraft as performance theatre. It is specification design.

“The future isn't a finished MP4 file; it’s a library of atomic assets (hooks, bodies, CTAs) that the system can recombine.”
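As a rough illustration of what recombining atomic assets could look like, the sketch below enumerates hook, body, and CTA combinations and drops pairings a strategist has excluded. The asset IDs, the `recombine` function, and the `banned_pairs` rule are hypothetical; a real system would also need rendering and review steps that are not shown here.

```python
from itertools import product

# Placeholder asset IDs, not real ad copy.
hooks = ["hook_tension_a", "hook_tension_b"]
bodies = ["body_demo", "body_testimonial"]
ctas = ["cta_trial", "cta_install"]

# Assumption: some hook/body pairings are excluded upfront, for example
# because the proof in the body does not answer the tension in the hook.
banned_pairs = {("hook_tension_b", "body_demo")}


def recombine(hooks, bodies, ctas, banned_pairs=frozenset()):
    """Yield every hook/body/CTA combination, skipping banned hook-body pairs."""
    for hook, body, cta in product(hooks, bodies, ctas):
        if (hook, body) in banned_pairs:
            continue
        yield {"hook": hook, "body": body, "cta": cta}


variants = list(recombine(hooks, bodies, ctas, banned_pairs))
print(len(variants))  # 6 of the 8 possible combinations remain
```

The combinatorics grow quickly (three lists of ten assets give 1,000 variants), which is why the exclusion rules matter more than the generator.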

In practice, once production velocity rises, four failure modes appear almost immediately. Teams start optimising for output instead of decision quality. Variation becomes fake variation: different visuals, same message. Angles drift away from product truth. And fatigue accelerates because the system is recycling one emotional idea in decorative wrappers.

None of these problems is solved by producing more. They are solved by building a better structure.

VI. Test: Creative should be measured comparatively, not ceremonially

The second misconception is that creative testing is mostly about launching enough A/B tests. It is not. It is about the logic by which creatives are compared.

Recent work in creative selection frames advertising as a comparative reasoning problem rather than a simple scoring problem. That is far closer to reality. Ads do not compete against an abstract quality threshold. They compete against alternatives in a feed, in an auction, or within a narrow attention window.
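To show the difference between scoring and comparing, the sketch below ranks creatives by head-to-head win rate rather than by an absolute score. It is a deliberately simple stand-in, not the method used in the research mentioned above. The `win_rates` function and the ad IDs are hypothetical, and the judgements could come from reviewers, a model, or split tests.

```python
from collections import defaultdict


def win_rates(comparisons: list[tuple[str, str]]) -> dict[str, float]:
    """Compute each creative's share of wins across its pairwise comparisons.

    comparisons holds (winner_id, loser_id) pairs from head-to-head judgements.
    """
    wins = defaultdict(int)
    appearances = defaultdict(int)
    for winner, loser in comparisons:
        wins[winner] += 1
        appearances[winner] += 1
        appearances[loser] += 1
    return {ad: wins[ad] / appearances[ad] for ad in appearances}


judgements = [("ad_a", "ad_b"), ("ad_a", "ad_c"), ("ad_b", "ad_c")]
rates = win_rates(judgements)
ranking = sorted(rates, key=rates.get, reverse=True)
print(ranking)  # ['ad_a', 'ad_b', 'ad_c']
```

With few comparisons per creative the win rates are noisy, so a ranking like this is a triage aid rather than a verdict.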

Related benchmarking work also shows why a single quality score is too crude. Creative quality can be assessed across multiple properties such as acceptability, consistency, performance estimation, recognition, and similarity. “Quality” is not one thing. It is a bundle of operationally useful properties.

For performance teams, the implication is straightforward. Creative testing needs at least two layers:

- The first is an offline pre-filter. This removes what should never reach budget: weak relevance, funnel mismatch, policy risk, off-brand tone, broken logic, poor clarity, or redundant patterning.

- The second is online confirmation. This determines whether a creative can actually win under real distribution conditions.

That split matters because production abundance without pre-filtering turns paid media into an expensive quality-control system.
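A minimal sketch of the offline pre-filter might look like the following. It assumes each candidate already carries review fields produced upstream by people or models. The field names, thresholds, and the `prefilter` function are all hypothetical, and only four of the rejection reasons listed above are shown.

```python
from typing import Callable

Candidate = dict  # hypothetical record with pre-computed review fields

# Each check returns True when the candidate FAILS it. Field names and
# thresholds are assumptions for illustration only.
CHECKS: dict[str, Callable[[Candidate], bool]] = {
    "weak_relevance": lambda c: c["relevance_score"] < 0.5,
    "policy_risk": lambda c: c["policy_flags"] > 0,
    "off_brand_tone": lambda c: not c["brand_approved"],
    "redundant_pattern": lambda c: c["similarity_to_live_ads"] > 0.9,
}


def prefilter(candidate: Candidate) -> list[str]:
    """Return the names of failed checks; an empty list means eligible for spend."""
    return [name for name, failed in CHECKS.items() if failed(candidate)]


candidate = {
    "relevance_score": 0.3,
    "policy_flags": 0,
    "brand_approved": True,
    "similarity_to_live_ads": 0.95,
}
print(prefilter(candidate))  # ['weak_relevance', 'redundant_pattern']
```

Returning the reasons, rather than a bare pass or fail, lets rejected creatives feed back into the brief instead of silently disappearing.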

VII. Kill: Creative fatigue is an economic event

Many teams still identify creative fatigue through instinct. Someone says the ad feels tired, someone else says it is still spending, and the discussion drags on until efficiency drops badly enough to force a decision.

That is operationally lazy. In 2026, we must reframe fatigue as a measurable economic event, and more importantly, as a source of Trust Debt.

Trust Debt is the hidden tax on your future growth. It occurs when a creative remains "mathematically" viable (positive ROAS) but becomes "psychologically" toxic. If an ad is "cringe," overly repetitive, or uses aggressive "hook-bait" that the product doesn't deliver on, it might still convert in the short term. However, it is quietly poisoning your Lifetime Value (LTV).

Every time a user rolls their eyes at a repetitive or misleading creative, your CAC (Customer Acquisition Cost) for their next interaction goes up. You are effectively borrowing conversions from the future at a high interest rate of brand erosion.

A modern kill policy should therefore not rely on a single metric. It should distinguish between:

- Performance Fatigue: Visible in declining CTR and rising CPA.

- Trust Fatigue: Visible when a message starts generating scepticism, negative comments, or low-quality downstream engagement long before the dashboard fully collapses.

The real question is no longer whether a creative is technically still alive, but whether the return from continuing to fund it still outweighs the Trust Debt it creates.
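The two kinds of fatigue can be separated in a kill policy along the following lines. This is a sketch under stated assumptions: the `Snapshot` fields, the use of day-7 retention as a proxy for downstream quality, and every threshold are hypothetical choices that a team would need to calibrate against its own data.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    ctr: float                    # click-through rate
    cpa: float                    # cost per acquisition
    negative_comment_rate: float  # share of comments flagged negative
    day7_retention: float         # assumed proxy for downstream quality


def fatigue_signals(
    baseline: Snapshot,
    current: Snapshot,
    perf_tolerance: float = 0.20,
    retention_tolerance: float = 0.20,
    negative_comment_tolerance: float = 0.05,
) -> set[str]:
    """Compare current metrics with a baseline and name the fatigue types seen."""
    signals = set()

    # Performance fatigue: CTR has fallen AND CPA has risen beyond tolerance.
    ctr_drop = (baseline.ctr - current.ctr) / baseline.ctr
    cpa_rise = (current.cpa - baseline.cpa) / baseline.cpa
    if ctr_drop > perf_tolerance and cpa_rise > perf_tolerance:
        signals.add("performance_fatigue")

    # Trust fatigue: negative comments OR downstream quality have worsened.
    negative_rise = current.negative_comment_rate - baseline.negative_comment_rate
    retention_drop = (
        baseline.day7_retention - current.day7_retention
    ) / baseline.day7_retention
    if negative_rise > negative_comment_tolerance or retention_drop > retention_tolerance:
        signals.add("trust_fatigue")

    return signals
```

A creative that returns only `trust_fatigue` is the case the dashboard misses: headline efficiency still looks acceptable while the downstream signals are already deteriorating.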

VIII. Scale: In 2026, you scale governance

Scaling used to mean taking a winner and pushing more budget behind it. In AI-native systems, that definition is no longer sufficient.

The more interesting question is how a system scales once generation, evaluation, and iteration are partially automated. The latest research increasingly points towards architectures that combine generation with verification and feedback rather than generation alone.

This matters because AI-generated advertising is now good enough to create a new operational risk. If generation scales without a corresponding verification layer, teams optimise for short-term response while quietly accumulating trust debt, brand incoherence, and message risk.

There is also a highly practical point here: editable output matters. Systems that produce assets in editable formats, rather than locked final exports, create a throughput advantage. Editability lowers the cost of iteration, localisation, repurposing, and governance. That is not a tooling detail. It is an operating advantage.

The teams that win will not merely generate more ads. They will generate more controllable ads.

IX. What this means for startups, tech companies, and consumer apps

The conclusion is almost perversely encouraging. You do not need a giant marketing department to compete in AI-era performance marketing. You need a tight operating system.

A small team can move remarkably fast when the work is divided cleanly across three functions: creative strategy, creative production, and performance analysis. One defines the opportunity space, one turns hypotheses into assets, and one validates, ranks, kills, and scales.

That is enough to build a serious content engine, provided the team shares one system rather than three opinions.

For consumer apps in particular, performance marketing is becoming less like campaign management and more like creative systems engineering. Growth no longer belongs to the team that makes the prettiest adverts, hires the largest agency roster, or produces the loudest volume of variants. It belongs to the team that can repeatedly convert messy creative abundance into disciplined economic decisions.

Final thought

The industry loves to say that AI is changing advertising. That is true, but still too vague to be useful. A more precise statement would be this:

AI has made production abundant. Advantage is therefore shifting into selection, stopping logic, and controlled scaling.

That is why Creative Performance Engineering is a useful lens for early 2026. It names the thing many teams are already feeling but have not yet fully articulated: the future of performance marketing is not more creativity in the abstract. It is better systems for making creative measurable, governable, and economically legible.

Or put less politely:

If your team still treats ads as one-off outputs rather than assets moving through an engineered lifecycle, you are probably buying media with a 2023 operating model in a 2026 market.

The production moat is gone. The system moat is just beginning. Let’s build it!


Selected research and further reading

- Tevi, Parker, Koslow, Ang. Creative Performance in Professional Advertising Development: The Role of Ideation Templates, Consumer Insight, and Intrinsic Motivation. Journal of the Academy of Marketing Science, 2025.

- Lin et al. Creative4U: MLLMs-based Advertising Creative Image Selector with Comparative Reasoning. Preprint, 2025.

- Shaw. A Path Signature Framework for Detecting Creative Fatigue in Digital Advertising. Preprint, 2025.

- Meguellati et al. LLM-Generated Ads: From Personalization Parity to Persuasion Superiority. Preprint, 2025.

- Zhang et al. AdTEC: A Unified Benchmark for Evaluating Text Quality in Search Engine Advertising. NAACL, 2025.

- Gan et al. HCMRM: A High-Consistency Multimodal Relevance Model for Search Ads. WWW Industry Track, 2025.

- Karnatak et al. Expanding the Generative AI Design Space through Structured Prompting and Multimodal Interfaces. CHI Workshop preprint, 2025.

- Wang, Shimose, Takamatsu. BannerAgency: Advertising Banner Design with Multimodal LLM Agents. EMNLP, 2025.

- NextAds. Towards Next-generation Personalised Video Advertising. Preprint, 2026.

Additional reading

- Kong. Deep Learning Model Optimization in Creative Generation for New Media Animated Ads. Discover Artificial Intelligence, 2025.

- Deckker, Sumanasekara. The Rise of AI in Digital Advertising: Trends, Challenges, and Future Directions. 2025.

- Hassan. AI-Driven Visual Element Optimization and Performance Prediction in Digital Advertising: An Empirical Analysis of Multi-Platform Campaign Effectiveness. 2025.