Table of Links

-

Convex Relaxation Techniques for Hyperbolic SVMs

B. Solution Extraction in Relaxed Formulation

C. On Moment Sum-of-Squares Relaxation Hierarchy

E. Detailed Experimental Results

F. Robust Hyperbolic Support Vector Machine

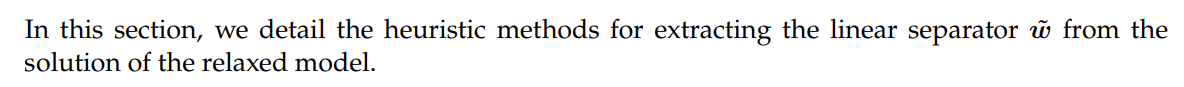

B Solution Extraction in Relaxed Formulation

B.1 Semidefinite Relaxation

Typically the top eigendirection is selected as the best candidate.

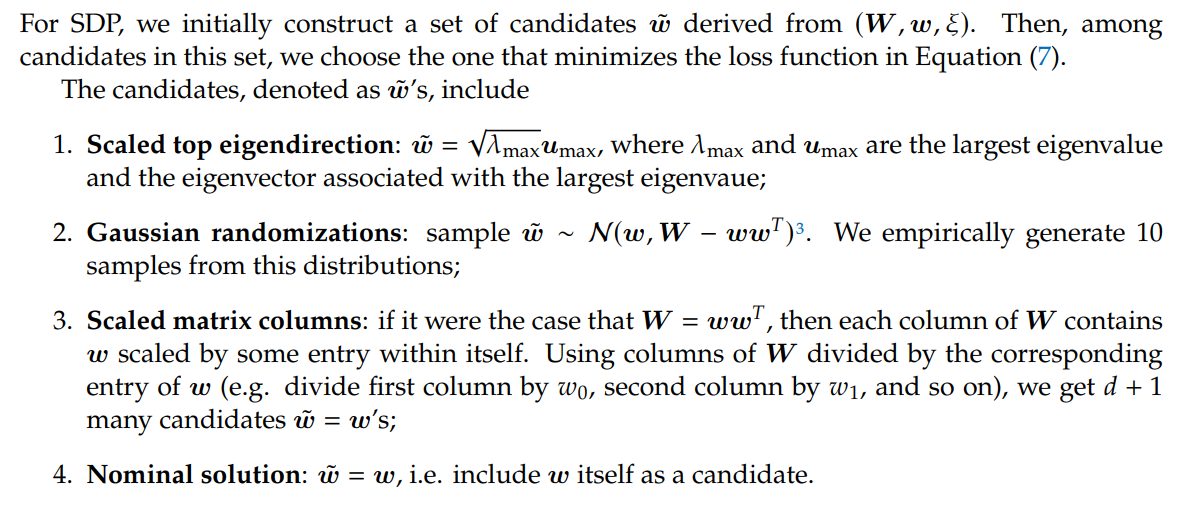

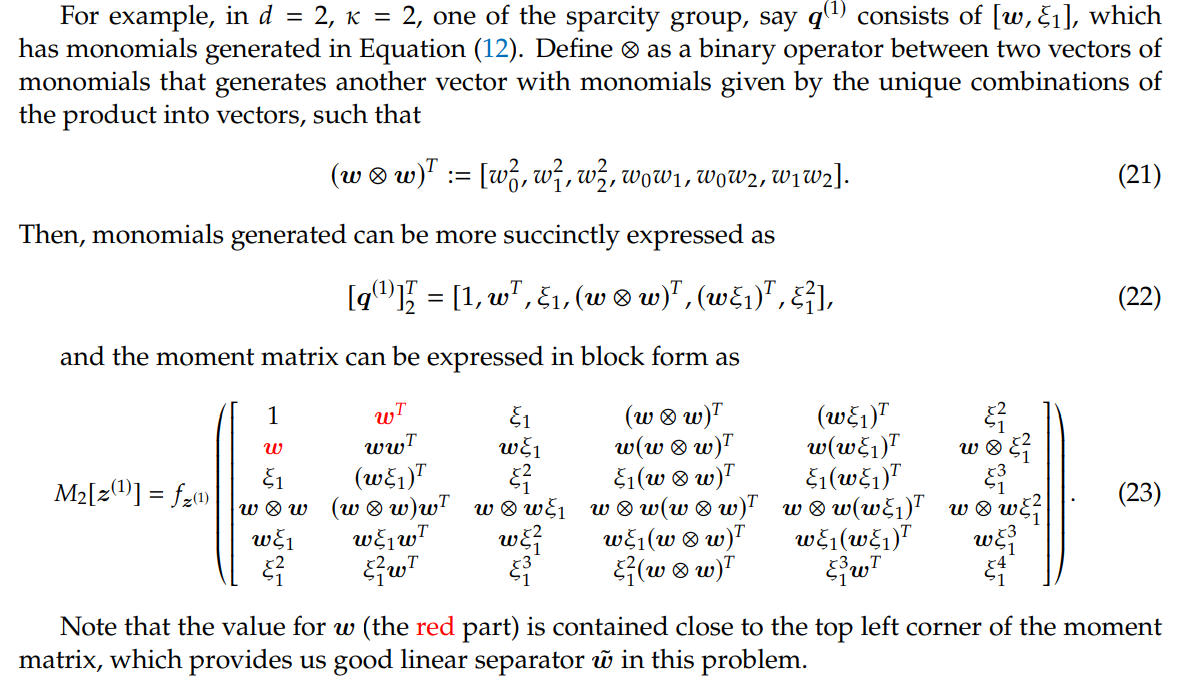

B.2 Moment-Sum-of-Squares Relaxation

In moment-sum-of-squares relaxation, the decision variable is the truncated multi-sequence z, but we could decode the solution from the moment matrix 𝑀𝜅[z] it generates. We are able to extract the part in TMS that corresponds to w = [𝑤0, 𝑤1, ..., 𝑤𝑑], by reading off these entries from the moment matrix, which is already a good enough solution.

Authors:

(1) Sheng Yang, John A. Paulson School of Engineering and Applied Sciences, Harvard University, Cambridge, MA (shengyang@g.harvard.edu);

(2) Peihan Liu, John A. Paulson School of Engineering and Applied Sciences, Harvard University, Cambridge, MA (peihanliu@fas.harvard.edu);

(3) Cengiz Pehlevan, John A. Paulson School of Engineering and Applied Sciences, Harvard University, Cambridge, MA, Center for Brain Science, Harvard University, Cambridge, MA, and Kempner Institute for the Study of Natural and Artificial Intelligence, Harvard University, Cambridge, MA (cpehlevan@seas.harvard.edu).

This paper is

[3] a method mentioned in slide 14 of https://web.stanford.edu/class/ee364b/lectures/sdp-relax_slides.pdf

[story continues]

tags