When dealing with highly imbalanced datasets, even well-trained classification models often perform poorly on the minority class. This is more than a modeling inconvenience; it means the very signals you care about most are at risk of being drowned out. In scenarios like churn prediction or A/B testing with low conversion rates, understanding which features truly separate the minority from the majority class becomes indispensable, especially when sample sizes are limited.

A common way to approach this is through model-based feature selection: train a classifier, extract its important features, and use them to guide further analysis. But when the minority class is severely underrepresented, this approach can become unstable, overfitting the dominant class and missing the patterns that matter most. This is why we’ll look at a lightweight, model-free alternative, borrowed from decades of research in medical statistics, that is fast, transparent, and effective. No complex modeling, no hyperparameter tuning: just straightforward, statistically sound comparisons that highlight the features that truly differentiate your groups.

In this article, I'll walk you through how this underutilized technique works, why it can outperform model-based feature selection on imbalanced datasets, and how you can apply it to common tasks like churn analysis, fraud detection, and A/B test validation. What’s more, it is not just intuitive but comes with provable guarantees. It even works in "case-control" setups, where each group is sampled separately, so the joint distribution of features and labels (including the true class proportions) is never observed. That is a setting where most machine learning methods silently fail or require unrealistic assumptions.

Let’s start with the problem. When most of your samples belong to the majority class, models tend to learn patterns that simply reinforce that dominance. Feature importances from wrapper and embedded methods become noisy or misleading. You get beautiful-looking metrics, such as high overall accuracy, that hide the fact that your model doesn’t really understand the minority class, the one you actually care about.

What’s worse, these importances also lack transparency. Why is this feature important? What does it actually do? The answers are often buried in complex interactions and nonlinearities.

Statistics Behind the Scenes: Pearson Distance and KL Divergence

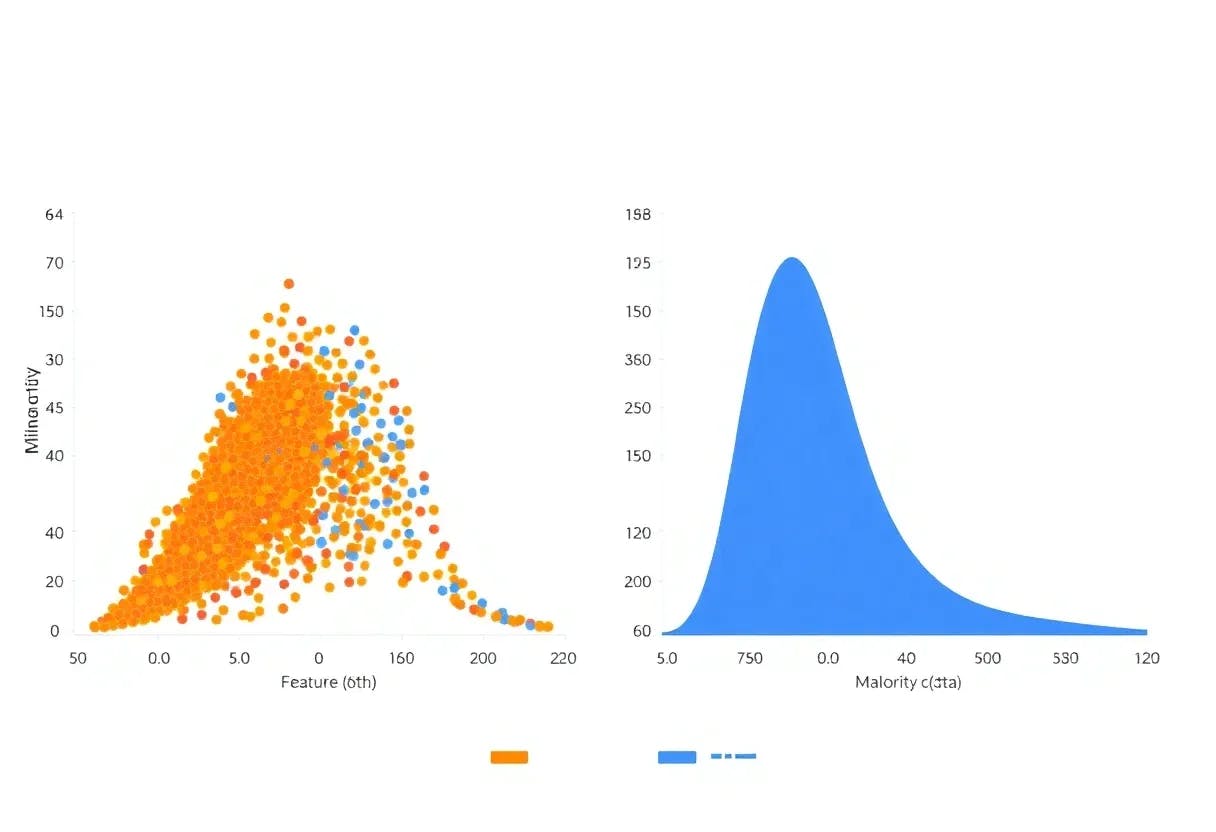

Here’s a simpler alternative: instead of building a model, directly compare how a given feature is distributed in each group — say, churned vs. retained users.

This idea comes from “case-control” studies in medicine and genetics. Suppose you want to find genetic markers associated with a rare disease. The population is inherently imbalanced — most people are healthy. So you create two groups — patients and healthy controls — and then look at the distribution of each feature (e.g., gene variant) in both.

The same idea applies to churn, fraud, or conversion: what separates those who converted from those who didn’t? To do this, we compute a statistical distance between the conditional distributions of each feature in the two groups. Two measures work especially well:

- Pearson chi-squared distance, which is intuitive and works particularly well for categorical features.

- KL divergence, which provides a more general way to quantify how much one distribution diverges from another, and is especially useful when features take on many possible values.
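Both measures are short enough to write out directly. The sketch below uses the standard textbook definitions, the Pearson chi-squared divergence $\sum_k (p_k - q_k)^2 / q_k$ and the KL divergence $\sum_k p_k \log(p_k / q_k)$, applied to two probability vectors over the same categories. The function names and the device-type numbers are hypothetical, and the research this approach comes from may use a slightly different variant (for example, a different reference group or normalization).

```python
import numpy as np


def pearson_chi2_distance(p, q):
    """Pearson chi-squared divergence: sum_k (p_k - q_k)^2 / q_k.

    p and q are probability vectors over the same ordered categories.
    q is the reference distribution and must be strictly positive.
    """
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    return float(np.sum((p - q) ** 2 / q))


def kl_divergence(p, q):
    """Kullback-Leibler divergence KL(P || Q) = sum_k p_k * log(p_k / q_k), in nats.

    Categories with p_k == 0 contribute nothing; q_k must be > 0 wherever p_k > 0.
    """
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    support = p > 0
    return float(np.sum(p[support] * np.log(p[support] / q[support])))


# Hypothetical example: share of users per device type (desktop, mobile, tablet)
churned = [0.5, 0.3, 0.2]
retained = [0.4, 0.4, 0.2]
print(pearson_chi2_distance(churned, retained))  # about 0.05
print(kl_divergence(churned, retained))
```

Both quantities are asymmetric: swapping `p` and `q` generally changes the value, so it is worth picking one group (for example, the majority group) as the reference and using it consistently across features.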

Let’s break down how this method actually works. Imagine you have a feature — for example, "user clicked on email" — and you want to know whether it helps distinguish between two groups, like churned vs. retained users.

Instead of training a model, we simply look at how differently this feature is distributed in each group. For example: maybe only 20% of churned users clicked the email, compared to 80% of retained users. That gap is a strong signal — it suggests that email engagement might be a key factor in predicting churn.

To turn that idea into a number, we calculate a distance between the two distributions — one for each group. The bigger the distance, the more the feature “separates” the groups, and the more useful it is.
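In practice the two distributions are estimated from raw data: count how often each category appears within each group, then normalize. A minimal sketch, assuming a categorical feature and a binary label coded 1 for the minority (case) group and 0 for the majority (control) group. The helper names and the optional smoothing pseudo-count `alpha` are illustrative additions, not part of the original method.

```python
import numpy as np


def group_distributions(values, labels, alpha=0.0):
    """Estimate P(X = k | Y = 1) and P(X = k | Y = 0) over the categories seen in the data.

    alpha is an optional additive-smoothing pseudo-count (an assumption of this
    sketch). With alpha = 0, a category that never occurs among controls makes the
    score below infinite or undefined, so pass alpha > 0 in that case.
    """
    values = np.asarray(values)
    labels = np.asarray(labels)
    categories = np.unique(values)  # shared support for both groups
    dists = []
    for group in (1, 0):
        in_group = values[labels == group]
        counts = np.array([np.sum(in_group == c) for c in categories], dtype=float)
        counts += alpha
        dists.append(counts / counts.sum())
    return categories, dists[0], dists[1]


def pearson_score(values, labels, alpha=0.0):
    """Pearson chi-squared distance between the case and control distributions,
    using the control (majority) group as the reference."""
    _, p_case, p_control = group_distributions(values, labels, alpha)
    return float(np.sum((p_case - p_control) ** 2 / p_control))


# The email example: 20 of 100 churned users clicked (1), vs 80 of 100 retained users
clicked = [1] * 20 + [0] * 80 + [1] * 80 + [0] * 20
churned = [1] * 100 + [0] * 100
print(pearson_score(clicked, churned))  # about 2.25
```

Because only within-group proportions enter the score, it does not depend on how many cases and controls were sampled: repeating every control row five times leaves the score unchanged. That property is what makes the approach usable on case-control data, where the class ratio is set by the study design rather than by the population.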

The specific formula comes from the Pearson chi-squared distance, which is commonly used in statistics to measure how much two probability distributions differ. In this context, it tells us: "How different is this feature's behavior between Group A and Group B?"
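Written out for a feature $X$ with categories $k = 1, \dots, K$, let $p_{1k} = \Pr(X = k \mid \text{churned})$ and $p_{0k} = \Pr(X = k \mid \text{retained})$. One common form of the distance, taking the retained group as the reference (the underlying research may weight or symmetrize it differently), is the first line below; the second line plugs in the email example, with categories ordered (clicked, did not click), so $p_1 = (0.2, 0.8)$ and $p_0 = (0.8, 0.2)$:

$$
\chi^2(P_1 \,\|\, P_0) = \sum_{k=1}^{K} \frac{(p_{1k} - p_{0k})^2}{p_{0k}}
$$

$$
\chi^2 = \frac{(0.2 - 0.8)^2}{0.8} + \frac{(0.8 - 0.2)^2}{0.2} = 0.45 + 1.8 = 2.25
$$

The second term dominates because "did not click" is rare among retained users: dividing by the reference probability amplifies differences in categories that are uncommon in the reference group.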

Importantly, this method:

- Works great with categorical (discrete) features

- Doesn't assume a parametric model for how the data is generated

- Produces a score for each feature that’s easy to interpret

If the distributions are nearly identical in both groups, the score will be close to zero — meaning the feature doesn’t help us. If they differ, the score goes up — showing us the feature matters.
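With finite samples, "close to zero" is meant literally: a feature unrelated to the label still receives a small positive score from sampling noise. A quick simulated check, assuming binary features drawn at random with a fixed seed; every number here is synthetic and chosen only for illustration.

```python
import numpy as np


def pearson_score(x, y):
    """Pearson chi-squared distance between P(X | Y=1) and P(X | Y=0) for a 0/1 feature x,
    with the Y=0 group as the reference."""
    x, y = np.asarray(x), np.asarray(y)
    p1 = np.array([np.mean(x[y == 1] == 0), np.mean(x[y == 1] == 1)])
    p0 = np.array([np.mean(x[y == 0] == 0), np.mean(x[y == 0] == 1)])
    return float(np.sum((p1 - p0) ** 2 / p0))


rng = np.random.default_rng(0)
n = 1000
y = np.repeat([1, 0], n)  # n cases followed by n controls

# Noise feature: 50% prevalence in both groups
noise = (rng.random(2 * n) < 0.5).astype(int)
# Informative feature: 20% prevalence among cases, 80% among controls
signal = np.where(y == 1, rng.random(2 * n) < 0.2, rng.random(2 * n) < 0.8).astype(int)

print(f"noise:  {pearson_score(noise, y):.4f}")   # small, but rarely exactly 0
print(f"signal: {pearson_score(signal, y):.4f}")  # far from 0 (population value is 2.25)
```

The gap between the two scores, not the raw value of the noise score, is what matters when ranking features.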

It’s simple, transparent, and grounded in statistical theory — no black-box modeling needed.

This isn’t just a neat heuristic.

Under mild conditions, it has been mathematically proven that this procedure has the sure screening property: as your sample size grows, the probability that the selected set contains every truly relevant feature tends to one. (The guarantee is about not missing relevant features; the selected set may still include some irrelevant ones.)
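Sure screening concerns what happens when the score is used as a filter: rank every feature and keep the top k. The toy simulation below is an illustration under assumed settings, not a proof. The number of features, the effect sizes, the "relevant" indices, and the cutoff k are all arbitrary choices made for this sketch; in practice k is a tuning decision.

```python
import numpy as np


def pearson_scores(X, y):
    """Pearson chi-squared distance (control group as reference) for every 0/1 column of X."""
    X, y = np.asarray(X), np.asarray(y)
    p1 = X[y == 1].mean(axis=0)  # P(X_j = 1 | case) for each feature j
    p0 = X[y == 0].mean(axis=0)  # P(X_j = 1 | control)
    # Two categories per feature: the term for value 1 plus the term for value 0
    return (p1 - p0) ** 2 / p0 + (p1 - p0) ** 2 / (1 - p0)


def screen(X, y, k):
    """Return the indices of the k highest-scoring features, best first."""
    scores = pearson_scores(X, y)
    return np.argsort(scores)[::-1][:k]


rng = np.random.default_rng(42)
n_cases, n_controls, n_features = 200, 2000, 50
relevant = [3, 17, 41]  # hypothetical ground truth

y = np.r_[np.ones(n_cases, dtype=int), np.zeros(n_controls, dtype=int)]
prevalence = np.full((y.size, n_features), 0.5)  # irrelevant features: 50% in both groups
prevalence[np.ix_(y == 1, relevant)] = 0.2       # relevant features: rarer among cases
X = (rng.random(prevalence.shape) < prevalence).astype(int)

print(sorted(screen(X, y, k=5).tolist()))  # should contain 3, 17 and 41
```

Shrinking `n_cases` spreads out the noise scores, and relevant features start to occasionally fall out of the top k; growing it does the reverse, which is the behavior the sure screening result formalizes.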

The Power of Simplicity

One of the most compelling aspects of this approach is its lightweight implementation. You don't need specialized libraries, complex model training, or extensive computational resources. The core logic can be implemented in a few dozen lines of code and runs quickly even on large datasets.
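As a rough illustration of how little code is involved, here is a pandas version that scores every categorical column of a DataFrame against a binary target. It assumes a 0/1 target column and uses `pd.crosstab` to count categories per group; the function name, the column names, the toy data, and the smoothing pseudo-count `alpha` are illustrative assumptions.

```python
import pandas as pd


def rank_features(df, target, alpha=0.5):
    """Score each non-target column by the Pearson chi-squared distance between its
    distribution among cases (target == 1) and controls (target == 0).

    alpha is an additive-smoothing pseudo-count (an assumption of this sketch) so
    that categories missing from the control group do not produce infinite scores.
    """
    scores = {}
    for col in df.columns.drop(target):
        counts = pd.crosstab(df[col], df[target]) + alpha  # rows: categories, columns: 0/1
        dist = counts / counts.sum()                        # normalize within each group
        scores[col] = (((dist[1] - dist[0]) ** 2) / dist[0]).sum()
    return pd.Series(scores).sort_values(ascending=False)


# Hypothetical churn data
df = pd.DataFrame({
    "clicked_email": ["yes", "no", "no", "no", "yes", "yes", "yes", "no"],
    "device": ["ios", "web", "ios", "web", "ios", "web", "ios", "web"],
    "churned": [1, 1, 1, 1, 0, 0, 0, 0],
})
print(rank_features(df, "churned"))
```

Here `device` is split identically in both groups and scores 0, while `clicked_email` ranks first. Each column needs a single cross-tabulation, so the cost grows roughly linearly with the number of rows and columns.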

This simplicity brings several advantages. First, it's easy to integrate into existing data pipelines without major infrastructure changes. Second, the results are immediately interpretable – no need to decode complex model coefficients or feature importance scores. Third, it sidesteps many of the assumptions that can trip up more sophisticated methods.

Why It Works in Practice

Traditional A/B testing often stops at comparing group averages, but this technique encourages deeper investigation. Instead of just asking whether Variant A outperformed Variant B overall, we measure how strongly individual user features (such as device type, prior purchase history, or traffic source) are associated with the conversion outcome within each variant. This helps you validate whether your experiment actually influenced behavior across the board, or whether the observed lift was driven primarily by a specific user segment.
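One way to put this into practice is to run the same feature scoring separately inside each experiment arm and compare the results. A sketch, assuming a DataFrame with a `variant` column, a 0/1 `converted` column, and categorical user features; all column names, the toy data, and the helper `score_by_variant` are hypothetical, and converters play the role of the "case" group.

```python
import pandas as pd


def pearson_distance(feature, outcome, alpha=0.5):
    """Pearson chi-squared distance between P(feature | outcome=1) and P(feature | outcome=0),
    with additive smoothing alpha (an assumption of this sketch)."""
    counts = pd.crosstab(feature, outcome) + alpha
    dist = counts / counts.sum()
    return float((((dist[1] - dist[0]) ** 2) / dist[0]).sum())


def score_by_variant(df, features, variant="variant", outcome="converted"):
    """Rows: variants; columns: features; values: association with the outcome."""
    rows = {
        name: {f: pearson_distance(group[f], group[outcome]) for f in features}
        for name, group in df.groupby(variant)
    }
    return pd.DataFrame(rows).T


# Hypothetical experiment log: 6 users per arm
df = pd.DataFrame({
    "variant": ["A"] * 6 + ["B"] * 6,
    "device": ["ios", "ios", "web", "web", "ios", "web"] * 2,
    "source": ["ads", "organic", "ads", "organic", "ads", "organic"] * 2,
    "converted": [1, 1, 0, 0, 1, 0] + [1, 0, 1, 0, 1, 0],
})
print(score_by_variant(df, ["device", "source"]))
```

In this toy data, device type separates converters from non-converters in arm A, while traffic source does in arm B. With real data (and far larger samples, since scores from a handful of users are very noisy), a pattern like that would be worth investigating before taking the overall lift at face value.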

This kind of validation is crucial for building sustainable product improvements. It's the difference between stumbling onto a temporary win and understanding the underlying mechanisms that drive success.

In an era where explainable AI is increasingly important, this method delivers transparency by design. When you present results to product managers or executives, you can provide clear explanations of why certain features matter.

This transparency also makes it easier to spot potential issues. If the method flags features that don't make business sense, you'll notice immediately. If there are concerning patterns related to protected characteristics, they'll be visible rather than hidden in model weights.

The practical benefits extend to implementation as well. Since this is a model-free approach, you don't need to worry about model drift, retraining schedules, or complex deployment pipelines. The method is stateless – you can run it on demand, integrate it into exploratory analysis, or embed it in automated reporting.

This makes it perfect for rapid prototyping and iterative analysis. Data scientists can quickly test hypotheses, validate findings, and communicate results without getting bogged down in model management overhead.

Despite its effectiveness, this approach remains surprisingly underutilized in the tech industry. Most data science teams are familiar with the latest deep learning architectures but haven't explored the rich toolkit of statistical methods developed in other fields. This represents a missed opportunity – sometimes the simplest tools are the most powerful.

The medical research community has refined these techniques over decades, dealing with small sample sizes, rare outcomes, case-control studies, and the need for interpretable results. These constraints have produced methods that are both statistically rigorous and practically useful.