“Hope is not a strategy.” - Traditional DevOps Wisdom

At 2:47 AM, payment gateways across multiple African markets went dark. Transactions failed. Businesses couldn’t process payments. Every minute of downtime translated to real money lost by people who couldn’t afford it.

In consumer social apps, downtime is annoying. In financial infrastructure, it’s catastrophic. This is the reality of building fintech systems across markets where reliability isn’t optional.

The Principle: Design for Failure, Not Success

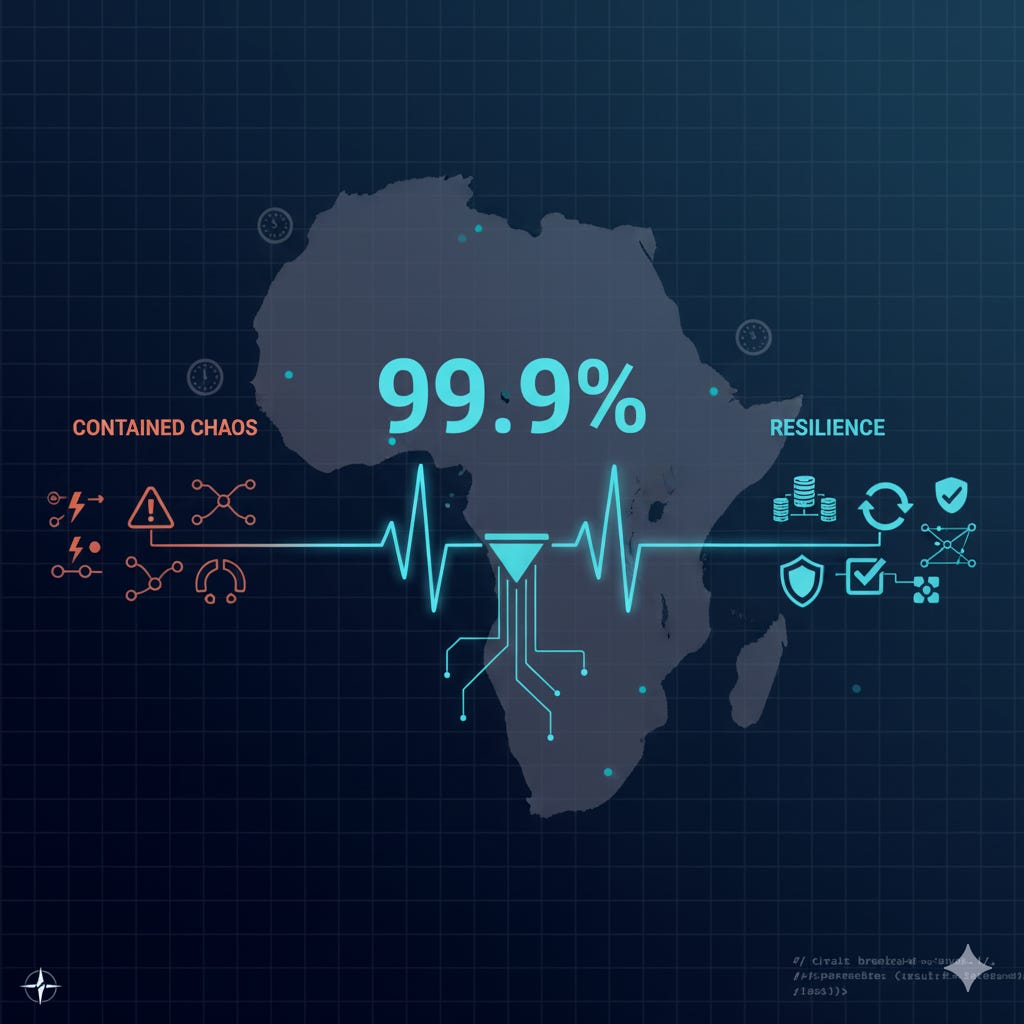

99.9% uptime sounds impressive until you calculate what it means: roughly 43 minutes of permitted downtime in a 30-day month. In financial systems processing thousands of transactions hourly, that’s unacceptable.
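The arithmetic behind that figure is easy to check. The sketch below assumes a 30-day month; the function name `downtime_budget_minutes` is made up for illustration.

```python
def downtime_budget_minutes(availability_pct: float, days: float = 30) -> float:
    """Minutes of downtime permitted over `days` at the given availability."""
    total_minutes = days * 24 * 60          # 43,200 minutes in a 30-day month
    return total_minutes * (1 - availability_pct / 100)


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99):
        budget = downtime_budget_minutes(target)
        print(f"{target}% availability allows {budget:.1f} minutes of downtime per 30 days")
```

Each extra nine cuts the budget by a factor of ten, which is why the gap between 99.9% and 99.99% matters so much for on-call and deployment practices.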

The shift from mid-level to senior engineering thinking happens when you stop asking “will this work?” and start asking “how will this fail?” Every component will fail. Your job is ensuring the system survives anyway.

The African Infrastructure Reality

Building high-availability systems in mature markets with reliable infrastructure is challenging. Building them across African countries with variable infrastructure requires different thinking entirely.

Power infrastructure varies dramatically between markets. Internet connectivity ranges from fiber in major cities to spotty 3G in rural areas. Third-party integrations with local banks and mobile money providers each have different reliability profiles. Your system needs to handle all of this gracefully.

Scale happens in unpredictable bursts. Payroll days, holiday shopping, and government disbursements can spike traffic 10x in minutes. Traditional capacity planning doesn’t work when usage patterns are this dynamic.

Architecture Patterns That Actually Work

Redundancy at every critical layer. Single points of failure are not acceptable. That means multiple availability zones, database replication with automatic failover, and load balancers in an active-active configuration. When one component fails, traffic routes to healthy instances automatically.

For payment processing specifically, this means multiple payment rail integrations. If one mobile money provider is experiencing issues, the system fails over to alternatives without user intervention.
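A minimal sketch of rail failover might look like the following. Everything here is hypothetical: `RailUnavailable`, `pay_with_failover`, and the shape of a "rail" as a plain callable are assumptions for illustration, not any provider’s real API.

```python
from typing import Callable, Sequence, Tuple


class RailUnavailable(Exception):
    """Raised by a rail when it could not accept the payment at all."""


# A rail takes (payment_id, amount_in_minor_units) and returns a provider reference.
Rail = Callable[[str, int], str]


def pay_with_failover(
    rails: Sequence[Tuple[str, Rail]], payment_id: str, amount: int
) -> Tuple[str, str]:
    """Try each rail in priority order; return (rail_name, provider_reference)."""
    errors = []
    for name, rail in rails:
        try:
            return name, rail(payment_id, amount)
        except RailUnavailable as exc:
            # Only fail over on errors that mean the payment was NOT accepted.
            errors.append(f"{name}: {exc}")
    raise RailUnavailable("all rails failed: " + "; ".join(errors))
```

The important design choice is what counts as `RailUnavailable`. Failing over after an ambiguous timeout, where the first provider may have accepted the payment, risks a double charge, so ambiguous outcomes are better routed to reconciliation than to the next rail.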

Circuit breakers on every external dependency. When a downstream service struggles, don’t keep hammering it. Open the circuit, return cached data or degraded functionality, attempt recovery after a cooldown period.

One fintech platform implemented this pattern across all bank API integrations. When a major bank’s API started timing out during an outage, the circuit opened automatically, the platform returned cached balance data with clear staleness indicators, and alerted operations. The system stayed operational while the bank recovered.
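The pattern can be sketched in a few lines. This is a simplified illustration, not the platform’s actual implementation; the class name, thresholds, and the injectable `clock` parameter are all assumptions.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Opens after repeated failures, then allows a trial call after a cooldown."""

    def __init__(
        self,
        failure_threshold: int = 3,
        cooldown_seconds: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.cooldown_seconds = cooldown_seconds
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def is_open(self) -> bool:
        if self.opened_at is None:
            return False
        # Once the cooldown has elapsed, the next call is let through as a trial.
        return self.clock() - self.opened_at < self.cooldown_seconds

    def call(self, operation: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.is_open():
            return fallback()              # do not hammer the struggling dependency
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()   # open (or re-open) the circuit
            return fallback()
        self.failures = 0                  # success closes the circuit
        self.opened_at = None
        return result
```

In use, `operation` would wrap the bank API call and `fallback` would return the cached balance along with a staleness marker, for example `breaker.call(fetch_live_balance, fetch_cached_balance)` where both function names are placeholders.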

Asynchronous processing as default. Synchronous operations create fragile chains where every dependency must succeed. Instead, acknowledge requests immediately with a “pending” status, process asynchronously via queues, and notify users when complete.

This pattern handles temporary failures naturally. If processing fails, retry with exponential backoff. If it keeps failing, escalate to human review. Users never experience timeout errors.
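As a rough sketch, a queue worker’s handling of a single job could look like this. The function name, the `escalate` callback, and the use of random jitter on top of the exponential delay are assumptions added for illustration.

```python
import random
import time
from typing import Callable, Optional


def process_with_retry(
    job: Callable[[], object],
    escalate: Callable[[Exception], None],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Run `job`, retrying with exponential backoff; escalate if it keeps failing."""
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        try:
            job()
            return "completed"
        except Exception as exc:
            last_error = exc
            if attempt < max_attempts - 1:
                # Delay ceiling doubles each attempt: 0.5s, 1s, 2s, ... capped at max_delay.
                ceiling = min(max_delay, base_delay * 2 ** attempt)
                # Jitter (an assumption here) spreads retries out so that
                # many workers do not retry in lockstep.
                sleep(random.uniform(0, ceiling))
    assert last_error is not None
    escalate(last_error)                   # hand off to human review
    return "needs_review"
```

The user-facing request has already returned “pending” by the time this runs, so the retries cost latency on completion, not a timeout on the request.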

Graceful degradation for non-critical features. Not all features are equally important. Under stress, critical paths like transaction processing and authentication must work. Features like transaction history, analytics dashboards, and non-essential notifications can degrade to cached or stale data.

Use feature flags to disable struggling components quickly without full deployments.
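A toy version of that idea is shown below. The in-memory `FeatureFlags` class and the flag name `live_transaction_history` are made up for illustration; a real system would typically read flags from a shared store so every instance sees a change at once.

```python
from typing import Callable, Dict, List


class FeatureFlags:
    """In-memory stand-in for a shared flag store (an assumption for this sketch)."""

    def __init__(self, **flags: bool) -> None:
        self._flags: Dict[str, bool] = dict(flags)

    def is_enabled(self, name: str) -> bool:
        return self._flags.get(name, False)   # unknown flags default to off

    def disable(self, name: str) -> None:
        self._flags[name] = False


def transaction_history(
    user_id: str,
    flags: FeatureFlags,
    load_live: Callable[[str], List[dict]],
    load_cached: Callable[[str], List[dict]],
) -> dict:
    """Serve live history when the flag is on, otherwise cached data marked stale."""
    if flags.is_enabled("live_transaction_history"):
        return {"data": load_live(user_id), "stale": False}
    return {"data": load_cached(user_id), "stale": True}
```

Flipping the flag off sheds load from the struggling history service while payments and authentication keep their capacity.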

Idempotency everywhere. In distributed systems with retries, the same request can arrive more than once. Every state-changing operation must check an idempotency key. If you’ve seen this key before, return the stored result instead of executing the operation again. This prevents duplicate transfers when clients retry failed requests.
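A minimal in-memory sketch of the check is below. The `TransferService` class and its account names are hypothetical, and the dictionary stands in for what would need to be a durable store with a uniqueness guarantee on the key.

```python
import threading
from typing import Dict


class TransferService:
    """Toy transfer service that deduplicates requests by idempotency key."""

    def __init__(self) -> None:
        self.balances: Dict[str, int] = {"alice": 1000, "bob": 0}
        self._results: Dict[str, dict] = {}   # idempotency key -> stored result
        self._lock = threading.Lock()

    def transfer(self, idempotency_key: str, src: str, dst: str, amount: int) -> dict:
        # The lock makes "check key, apply change, store result" one atomic step.
        with self._lock:
            if idempotency_key in self._results:
                return self._results[idempotency_key]   # replay, no side effects
            if self.balances[src] < amount:
                result = {"status": "rejected", "reason": "insufficient_funds"}
            else:
                self.balances[src] -= amount
                self.balances[dst] += amount
                result = {"status": "completed", "amount": amount}
            self._results[idempotency_key] = result
            return result
```

Calling `transfer("key-1", "alice", "bob", 300)` twice moves the money once and returns the same result both times. The client generates the key once per logical operation and reuses it on every retry.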

The Monitoring That Predicts Failure

You can’t fix what you can’t see. Comprehensive observability means structured logging with correlation IDs that flow through all services, metrics on request rates, error rates, and latency percentiles, distributed tracing to follow requests across services, and real-time alerting on anomalies.

More importantly, monitor leading indicators that predict failure, not just lagging indicators that confirm it:

Leading indicators: error rates trending upward, latency increasing at p95 and p99, queue depths growing, database connection pool saturation, memory or CPU climbing toward limits.

Lagging indicators: zero traffic indicating complete outage, 100% error rate, customer complaints.

Alert on leading indicators. Fix problems before customers notice them.
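One simple way to alert on a trend, sketched below, is to compare the most recent window of samples against the window before it. The function name, window size, and 1.5x ratio are illustrative assumptions, not recommended values; most monitoring stacks can express the same rule natively.

```python
from statistics import mean
from typing import Sequence


def is_trending_up(samples: Sequence[float], window: int = 5, ratio: float = 1.5) -> bool:
    """True when the latest `window` samples average `ratio` times the previous window."""
    if len(samples) < 2 * window:
        return False                        # not enough history to judge a trend
    baseline = mean(samples[-2 * window:-window])
    recent = mean(samples[-window:])
    if baseline == 0:
        return recent > 0                   # any errors after a clean baseline count
    return recent >= baseline * ratio


if __name__ == "__main__":
    p95_latency_ms = [100, 102, 98, 101, 99, 140, 155, 160, 170, 180]
    if is_trending_up(p95_latency_ms):
        print("warning: p95 latency is climbing, investigate before it becomes an outage")
```

The same check works for queue depth or connection pool usage; the point is to page someone while the system is still serving traffic.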

When Everything Breaks at Once

One payment platform experienced a cascade failure from a seemingly minor configuration change. Increasing the database connection pool sizes led to connection exhaustion under load. API servers timed out. Health checks failed. All servers were marked unhealthy simultaneously. The result was a complete outage lasting 47 minutes.

What saved them: automated rollback when health checks failed system-wide, database connection limits that prevented total collapse, queued transactions that reprocessed after recovery, detailed logs showing exactly what happened.

What changed afterward: staged rollouts where changes deploy to 5% of traffic, then 25%, then 50%, then 100% over hours, not minutes. Connection pool limits with circuit breakers. Better load testing that simulates production traffic patterns. Automated canary deployments that roll back on error rate spikes.
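The staged rollout with automatic rollback can be sketched as a small control loop. The callbacks `set_traffic_percent`, `error_rate`, and `soak`, and the 1% error threshold, are placeholders for whatever the deployment tooling and metrics system actually provide.

```python
from typing import Callable, Sequence


def staged_rollout(
    set_traffic_percent: Callable[[int], None],
    error_rate: Callable[[], float],
    stages: Sequence[int] = (5, 25, 50, 100),
    max_error_rate: float = 0.01,
    soak: Callable[[], None] = lambda: None,
) -> bool:
    """Shift traffic to the new version stage by stage; roll back on an error spike."""
    for percent in stages:
        set_traffic_percent(percent)
        soak()                              # wait long enough to observe real traffic
        if error_rate() > max_error_rate:
            set_traffic_percent(0)          # automated rollback to the old version
            return False
    return True
```

With a long enough `soak` at each stage, a bad change like the connection pool setting would hit 5% of traffic and roll back instead of taking every server down at once.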

Testing Failure Scenarios

Hope is not a reliability strategy. Test your resilience by deliberately breaking things in staging environments. Kill random services. Inject network latency. Simulate database failovers. Max out CPU and memory.

Weekly chaos experiments reveal weaknesses before they surface in production. Every failure discovered in staging is one that never happens with real money at stake.

The Cost-Reliability Tradeoff

High availability is expensive. It takes multiple data centers, over-provisioned infrastructure, complex architecture, a 24/7 on-call rotation, and extensive testing. As a rough rule of thumb, each additional nine of availability can double infrastructure costs.

For a side project, 95% uptime is fine. For financial infrastructure, 99.9% is the bare minimum, and often not enough. For critical health systems, 99.99% might be necessary. Know what you’re optimizing for and why.

Questions for Your System

What happens if this service is down? If the answer is “nothing works,” you have a single point of failure.

Can you deploy without downtime? If not, you’re choosing between uptime and iteration speed.

Do you know when things are breaking? If you learn about problems from customer complaints, monitoring isn’t good enough.

Can the system recover automatically? Manual intervention should be rare. Self-healing should be normal.

Have you tested failure scenarios? Deliberately break things. Validate that redundancy actually works.

When downtime isn’t an option, you design differently. You spend more upfront. You move more carefully. You test more thoroughly. But you sleep better knowing that when components fail, the system survives.

What are your single points of failure? What happens if your primary database goes down at 3 AM? When did you last test your disaster recovery plan?