Table of Links

Discussion and Broader Impact, Acknowledgements, and References

D. Differences with Glaze Finetuning

H. Existing Style Mimicry Protections

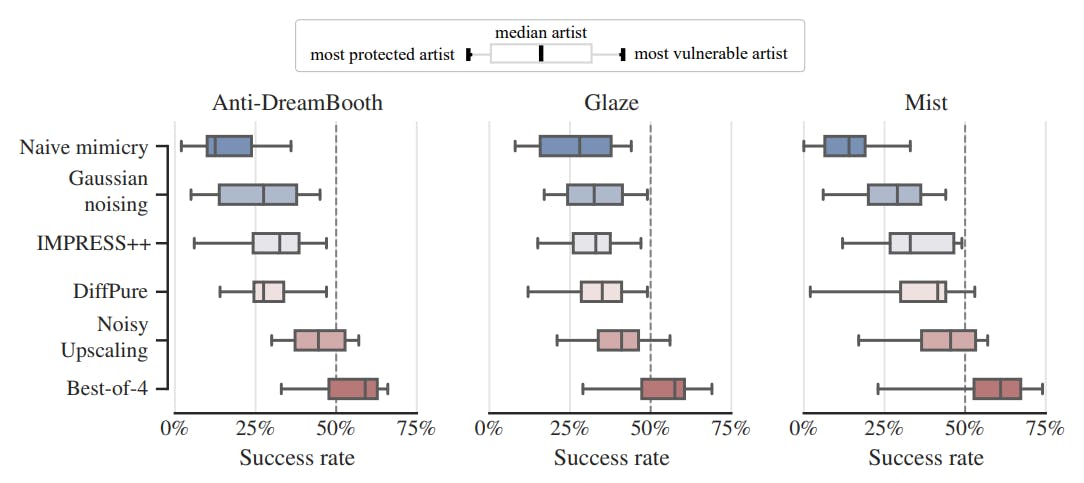

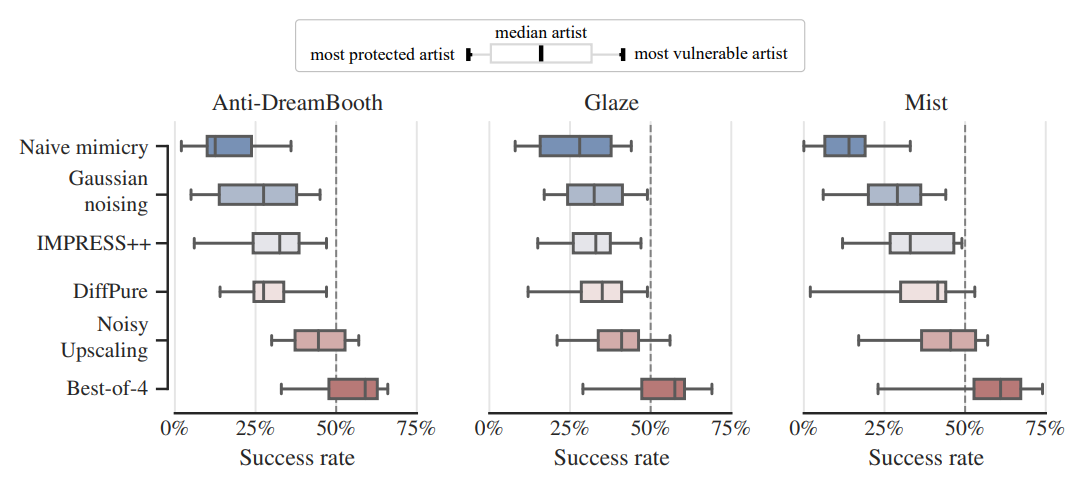

6 Results

In Figure 4, we report the distribution of success rates per artist (N=10) for each scenario. To simplify the analysis, we averaged the quality and stylistic transfer success rates (detailed results can be found in Appendix C). Because a forger can try multiple mimicry methods for each prompt and then keep whichever worked best, we also evaluate a “best-of-4” method that picks the most successful mimicry method for each generation, as judged by human evaluators.
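The aggregation described above can be illustrated with a minimal sketch. It assumes a hypothetical data layout that the text does not specify: for one artist, each mimicry method has one `(quality, style)` score pair per prompt, each in the range 0 to 1. The method names, the scores, and the choice to combine scores per prompt before taking the maximum are all illustrative assumptions, not the authors' evaluation code.

```python
from statistics import mean

def combined_rate(quality, style):
    """Average a quality score and a style score into a single number."""
    return (quality + style) / 2

def per_method_rates(scores):
    """Mean combined score per method.

    scores: {method_name: [(quality, style), ...]}, one tuple per prompt.
    """
    return {
        method: mean(combined_rate(q, s) for q, s in rows)
        for method, rows in scores.items()
    }

def best_of_k_rate(scores):
    """For each prompt, keep the best combined score across all methods,
    then average over prompts (an assumed reading of "best-of-4")."""
    per_prompt = zip(*scores.values())  # groups the k methods' rows by prompt
    return mean(
        max(combined_rate(q, s) for q, s in prompt_rows)
        for prompt_rows in per_prompt
    )

# Hypothetical scores for one artist, two methods, two prompts.
scores = {
    "method_a": [(1.0, 0.5), (0.0, 0.0)],
    "method_b": [(0.5, 0.5), (0.5, 1.0)],
}

print(per_method_rates(scores))
print(best_of_k_rate(scores))
```

Because the maximum is taken per prompt, the best-of-k value can never fall below the best single method's average, which is why it models a forger who picks the best output after the fact.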

Authors:

(1) Robert Hönig, ETH Zurich (robert.hoenig@inf.ethz.ch);

(2) Javier Rando, ETH Zurich (javier.rando@inf.ethz.ch);

(3) Nicholas Carlini, Google DeepMind;

(4) Florian Tramèr, ETH Zurich (florian.tramer@inf.ethz.ch).
