Social media platforms generate massive streams of conversational data. For researchers and data scientists, this data provides a way to study how language shapes online interaction at scale.

I built a Python pipeline to collect and analyze 16,695 Arabic tweets from X (formerly Twitter) to examine whether linguistic signals like uncertainty influence how people respond to posts.

This article walks through the technical process behind the project: data collection, preprocessing, linguistic classification, and statistical analysis.

Tech Stack

This project used a simple Python data science stack:

- Apify – tweet scraping

- Python 3.11 – analysis environment

- pandas – dataset processing

- numpy – numerical operations

- statsmodels – regression modeling

Collecting Tweets From X

The first step was building a dataset of tweets related to Lebanon.

Tweets were collected using Apify, an automation platform that provides a tweet-scraper actor capable of retrieving publicly accessible tweets through X’s web interface.

The search query used was:

(لبنان OR بيروت) lang:ar

This query retrieves Arabic-language tweets mentioning Lebanon or Beirut.

The collection window covered 35 consecutive days, from December 15, 2025 to January 18, 2026, a period with active political and economic discussions.

The initial scrape produced:

17,343 tweets

Cleaning and Preparing the Dataset

Before analysis, the dataset required several preprocessing steps.

Duplicate tweets were removed based on tweet identifiers. This eliminated 648 duplicate entries, leaving:

16,695 tweets in the final dataset

Other preprocessing steps included:

- Filtering out retweets

- Retaining replies and quote tweets

- Normalizing engagement metrics
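The deduplication and retweet filtering steps can be sketched in pandas roughly as follows. The column names (`tweet_id`, `is_retweet`, `is_reply`) and the toy rows are assumptions for illustration; the real field names depend on the scraper's output.

```python
import pandas as pd

# Toy rows standing in for scraper output; column names are hypothetical.
raw = pd.DataFrame({
    "tweet_id":   ["1", "2", "2", "3", "4"],
    "text":       ["a", "b", "b", "c", "d"],
    "is_retweet": [False, False, False, True, False],
    "is_reply":   [False, True, True, False, False],
})


def prepare(df: pd.DataFrame) -> pd.DataFrame:
    """Drop duplicate tweet IDs, then drop retweets; replies and quotes stay."""
    deduped = df.drop_duplicates(subset="tweet_id", keep="first")
    return deduped[~deduped["is_retweet"]].reset_index(drop=True)


clean = prepare(raw)
print(len(raw) - raw["tweet_id"].nunique(), "duplicate rows removed")
print(len(clean), "tweets kept")
```

Deduplicating on the identifier rather than on the text keeps distinct tweets that happen to share the same wording.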

Replies represented a substantial share of the dataset:

6,872 tweets (41.2%)

This turned out to be useful because replies provide insight into conversational interaction, not just passive engagement.

Measuring Engagement

To measure audience response, engagement was defined as the sum of:

- likes

- retweets

- replies

This composite metric captures overall interaction with a tweet.

However, social media engagement distributions are extremely skewed. A small number of posts receive very high engagement while most receive very little.

To stabilize the distribution, the regression models used the transformation:

log(1 + Total Engagement)

This is a common approach in computational social science when modeling engagement data.
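As a small illustration, the composite metric and its log transform might be computed like this. The column names and counts are made up for the example.

```python
import numpy as np
import pandas as pd

# Hypothetical column names and toy counts, for illustration only.
tweets = pd.DataFrame({
    "likes":    [0, 3, 120, 4500],
    "retweets": [0, 1, 40, 900],
    "replies":  [0, 0, 15, 300],
})

# Composite engagement: likes + retweets + replies.
tweets["engagement"] = tweets[["likes", "retweets", "replies"]].sum(axis=1)

# log(1 + x) compresses the long right tail and is defined at zero.
tweets["log_engagement"] = np.log1p(tweets["engagement"])

print(tweets[["engagement", "log_engagement"]])
```

Adding 1 before taking the log matters because many tweets have zero engagement, and log(0) is undefined.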

Detecting Linguistic Uncertainty in Arabic Tweets

The next step was identifying tweets that express linguistic uncertainty.

Instead of training a machine learning model, I built a rule-based classifier using an Arabic uncertainty lexicon.

The classifier contains 60 uncertainty markers, grouped into six linguistic categories.

Examples include:

Modal expressions

- يمكن (may)

- ربما (perhaps)

- قد (might)

Hedging expressions

- أظن (I think)

- يبدو (it seems)

- على ما يبدو (apparently)

Question markers

- هل

- لماذا

- كيف

- ؟

Explicit uncertainty

- غير متأكد (not sure)

- ما بعرف (I don’t know)

Rumor indicators

- يقال (it is said)

- إشاعة (rumor)

- مصادر (sources)

Tweets containing at least one uncertainty marker were classified as uncertain.

Using this approach:

4,997 tweets (29.9%) were classified as uncertain.
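A minimal sketch of the "at least one marker" rule, using only the example markers listed above rather than the full 60-entry lexicon. The function name and the whole-word matching strategy are assumptions, not the project's actual implementation.

```python
import re

# A subset of the markers listed above; the full lexicon has 60 entries.
WORD_MARKERS = [
    "يمكن", "ربما", "قد", "أظن", "يبدو", "على ما يبدو",
    "هل", "لماذا", "كيف", "غير متأكد", "ما بعرف",
    "يقال", "إشاعة", "مصادر",
]
SYMBOL_MARKERS = ["؟"]

# Longest markers first, matched only as whole words so that a short
# marker such as "قد" does not fire inside a longer word such as "قدم".
_alternatives = "|".join(
    re.escape(m) for m in sorted(WORD_MARKERS, key=len, reverse=True)
)
_pattern = re.compile(r"(?<!\w)(?:" + _alternatives + r")(?!\w)")


def is_uncertain(text: str) -> bool:
    """Return True if the text contains at least one uncertainty marker."""
    if any(symbol in text for symbol in SYMBOL_MARKERS):
        return True
    return _pattern.search(text) is not None


print(is_uncertain("ربما يتحسن الوضع في بيروت"))  # contains the marker ربما
print(is_uncertain("قدم الوزير استقالته"))        # قد appears only inside قدم
```

Whole-word matching is a simplification: Arabic attaches prefixes such as و directly to words, so a form like وربما would be missed by this sketch unless prefixes are stripped first.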

Handling Context in Arabic Text

One challenge when working with Arabic text is ambiguity in common words.

For example, the word:

من

can mean “who” or “from.”

To reduce false positives, the classifier included context-sensitive rules. For instance, the word was counted only when it appeared in interrogative contexts rather than as a preposition.

These rules improved classification quality without requiring a full machine learning model.
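The article does not spell out the exact rules, so the following is only one hypothetical way to gate an ambiguous word on its context: count من as interrogative only when the clause containing it ends with a question mark. The function name and the heuristic itself are assumptions.

```python
import re

# Hypothetical heuristic, NOT the project's actual rule: treat من as "who"
# only when the clause that contains it ends with a question mark.
_CLAUSE_BREAK = re.compile(r"(?<=[.!؟?\n])")   # split right after punctuation
_MIN_TOKEN = re.compile(r"(?<!\w)من(?!\w)")    # من as a standalone word


def has_interrogative_min(text: str) -> bool:
    """Return True if من appears in a clause that ends with a question mark."""
    for clause in _CLAUSE_BREAK.split(text):
        clause = clause.strip()
        if clause.endswith(("؟", "?")) and _MIN_TOKEN.search(clause):
            return True
    return False


print(has_interrogative_min("من المسؤول؟ جئت من بيروت."))  # question clause has من
print(has_interrogative_min("جئت من بيروت."))              # من used as "from"
```

A rule like this trades recall for precision: it ignores questions written without punctuation, which are common in informal tweets.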

Validating the Classifier

To evaluate the classifier, I conducted a manual validation step.

A stratified sample of 200 tweets was annotated by a native Lebanese Arabic speaker.

The classifier achieved:

- Accuracy: 73.5%

- Recall: 1.00

- Precision: 0.47

- F1-score: 0.639

- Cohen’s κ: 0.470
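To make these figures concrete, the sketch below recomputes them from a confusion matrix. The counts are reconstructed from the reported metrics and the sample size of 200; they are an inference, not numbers taken from the paper. A recall of 1.00 implies no false negatives, and 73.5% accuracy on 200 tweets implies 53 errors, all of them false positives.

```python
# Confusion-matrix counts reconstructed from the reported metrics
# (an inference for illustration, not figures published by the author).
tp, fp, fn, tn = 47, 53, 0, 100
n = tp + fp + fn + tn

accuracy = (tp + tn) / n
precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)

# Cohen's kappa: observed agreement corrected for agreement expected by chance.
p_observed = accuracy
p_chance = ((tp + fp) * (tp + fn) + (fn + tn) * (fp + tn)) / n**2
kappa = (p_observed - p_chance) / (1 - p_chance)

print(f"accuracy={accuracy:.3f} precision={precision:.2f} recall={recall:.2f} "
      f"f1={f1:.3f} kappa={kappa:.3f}")
```

These counts reproduce all five reported values, which suggests the sample was split evenly between tweets the classifier flagged and tweets it did not.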

The classifier tended to over-predict uncertainty: with a precision of 0.47, roughly half of the tweets it flagged were false positives. However, this type of error generally biases results toward smaller effects rather than artificially inflating them.

For large-scale observational analysis, the classifier performed adequately.

Modeling Engagement With Regression

To test whether linguistic uncertainty was associated with engagement, I estimated a regression model.

The model predicts log-transformed engagement using:

- linguistic uncertainty

- tweet length

- presence of a URL

- account verification status

The regression specification was:

log(1 + Engagement) = β0 + β1(Uncertainty) + β2(Tweet Length) + β3(Has Link) + β4(Verified) + ε

Because multiple tweets were posted by the same accounts, standard errors were clustered at the author level.

The dataset contained 7,593 unique accounts, averaging 2.2 tweets per account.
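A specification like this one could be fitted in statsmodels roughly as follows. The data here is synthetic and every column name is hypothetical; the sketch only shows the shape of the call, with standard errors clustered by author.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

# Synthetic stand-in data; all column names and values are hypothetical.
rng = np.random.default_rng(0)
n = 500
df = pd.DataFrame({
    "author_id": rng.integers(0, 200, size=n),
    "uncertain": rng.integers(0, 2, size=n),
    "length": rng.integers(20, 280, size=n),
    "has_link": rng.integers(0, 2, size=n),
    "verified": rng.integers(0, 2, size=n),
})
rate = np.exp(1.0 + 0.2 * df["uncertain"].to_numpy())
df["engagement"] = rng.poisson(rate)
df["log_engagement"] = np.log1p(df["engagement"])

model = smf.ols(
    "log_engagement ~ uncertain + length + has_link + verified", data=df
)
# Cluster-robust standard errors, grouped by author.
result = model.fit(cov_type="cluster", cov_kwds={"groups": df["author_id"]})

print(result.params)
print(result.bse)
```

Clustering leaves the coefficient estimates unchanged and only adjusts the standard errors, to account for correlated tweets from the same account.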

All analyses were conducted using:

- Python 3.11

- pandas

- numpy

- scipy

- statsmodels

Checking Robustness With Negative Binomial Models

Social media engagement data often exhibits overdispersion, meaning the variance exceeds the mean.

To ensure results were not dependent on the regression specification, I also estimated negative binomial models predicting raw engagement counts.

These models produced consistent results, suggesting the findings were robust to the choice of specification.

What the Analysis Revealed

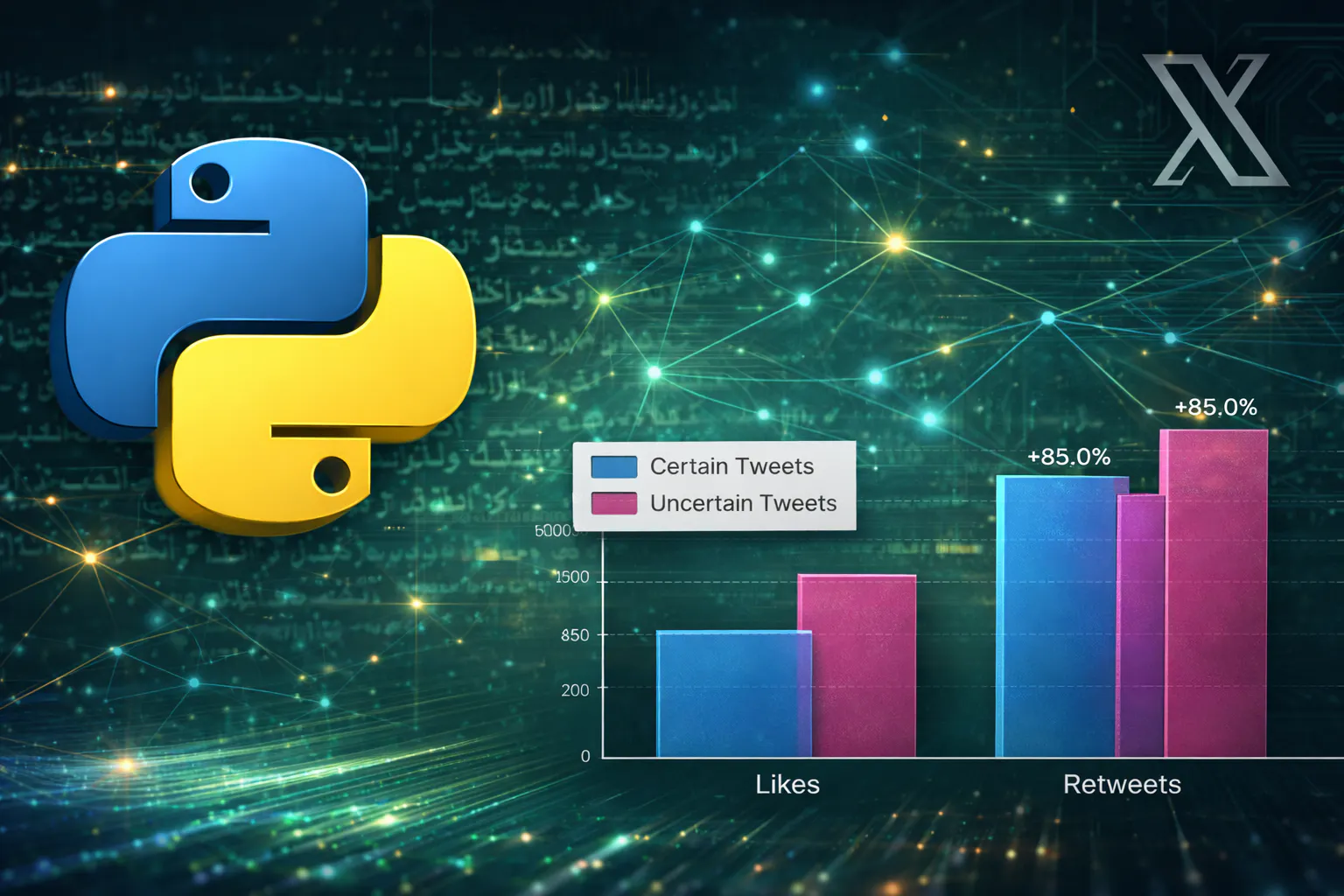

Once the pipeline was complete, the dataset revealed a clear pattern.

Tweets containing uncertainty markers showed substantially higher engagement.

On average:

- 47% more likes

- 65% more retweets

- 81% more replies

Overall engagement was 51.5% higher for uncertain tweets.
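Raw percentage gaps like these come from comparing group means. A toy version with made-up numbers and hypothetical column names:

```python
import pandas as pd

# Toy data with hypothetical column names; values are made up.
df = pd.DataFrame({
    "uncertain": [0, 0, 0, 1, 1, 1],
    "likes":     [10, 20, 30, 20, 30, 40],
    "retweets":  [2, 4, 6, 4, 6, 8],
    "replies":   [1, 2, 3, 2, 4, 6],
})

means = df.groupby("uncertain")[["likes", "retweets", "replies"]].mean()

# Percent difference of the uncertain group (1) relative to the rest (0).
pct_diff = (means.loc[1] / means.loc[0] - 1.0) * 100
print(pct_diff.round(1))
```

Because a handful of viral tweets can dominate a mean, these raw gaps are best read alongside the regression estimates.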

After controlling for tweet length, links, and verification status, uncertainty remained associated with roughly 25% higher expected engagement.
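A percentage like this is typically obtained by exponentiating the coefficient from the log-scale model. The sketch below shows the conversion; the coefficient value is illustrative, chosen as ln(1.25) so that it maps to exactly +25%, and is not a figure reported in the article.

```python
import math


def pct_change_from_log_coef(beta: float) -> float:
    """Approximate percent change implied by a coefficient on a logged outcome."""
    return 100.0 * math.expm1(beta)


# Illustrative value only: ln(1.25), the coefficient that maps to +25%.
beta_example = math.log(1.25)
print(round(beta_example, 3), round(pct_change_from_log_coef(beta_example), 1))
```

Since the outcome is log(1 + engagement), the percentage strictly applies to 1 + engagement, so it is an approximation for tweets with low counts.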

Interestingly, the strongest difference appeared in replies, indicating that uncertainty may encourage more conversational interaction.

Lessons From the Experiment

This project highlights a few useful takeaways for analyzing social media data.

Linguistic signals can be modeled computationally

Qualitative language features like hedging or speculation can be operationalized using lexicon-based methods.

Engagement types matter

Likes, retweets, and replies represent different forms of interaction. Treating engagement as a single aggregated metric may hide important patterns.

Lexicon methods remain useful

While machine learning models dominate modern NLP, rule-based approaches can still perform well for targeted tasks with interpretable linguistic categories.

Social media engagement is noisy

Even with statistically significant predictors, engagement remains highly unpredictable due to factors like network effects, timing, and algorithmic exposure.

Reproducing the Analysis

The workflow used in this project can be reproduced using a relatively simple data analysis pipeline.

The process consists of four main stages:

1. Data Collection: Tweets were collected from X using the Apify tweet-scraper with Arabic-language queries related to Lebanon.

2. Dataset Preparation: Duplicate tweets were removed and engagement metrics (likes, retweets, and replies) were extracted to construct the analysis dataset.

3. Uncertainty Detection: Tweets were classified using a rule-based Arabic uncertainty lexicon containing 60 markers across six categories, including hedging expressions, modal expressions, question markers, and rumor indicators.

4. Statistical Modeling: Engagement patterns were analyzed using regression models implemented in Python with standard data science libraries such as pandas, numpy, and statsmodels.

This workflow can be adapted to study other linguistic signals or topics in large-scale social media datasets.

Final Thoughts

Large-scale social media datasets provide an opportunity to study how linguistic signals shape interaction patterns.

By combining data scraping, rule-based NLP techniques, and statistical modeling, qualitative features of language can be transformed into measurable variables for large-scale analysis.

This experiment illustrates how a relatively simple computational pipeline can uncover patterns in digital conversation dynamics.

The full research paper, including the complete methodology and statistical results, is available on arXiv.