Six months ago, I had a thesis: most marketing teams are doing work that AI agents could do better, faster, and 24/7.

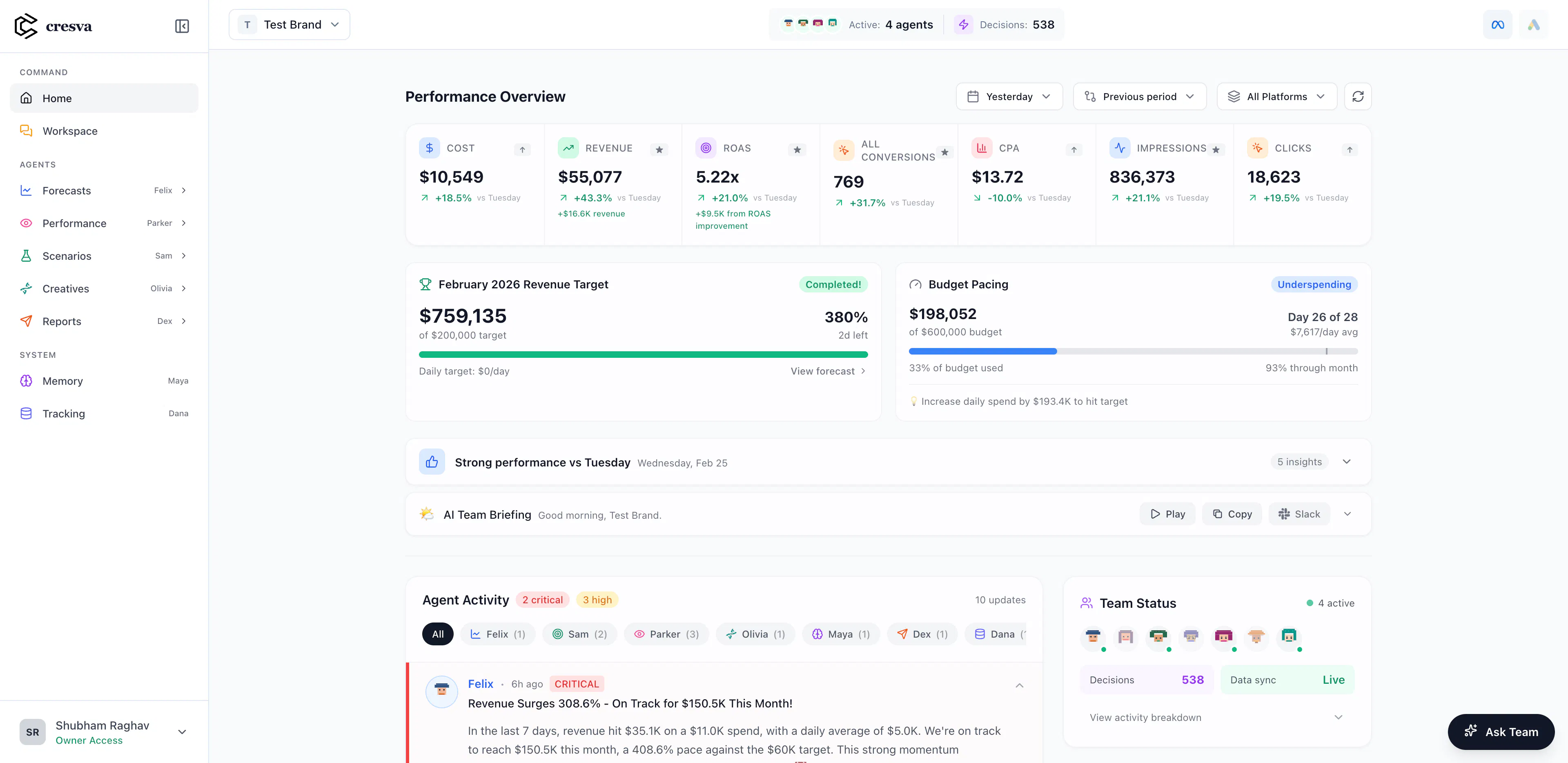

Today I have a live platform with 7 specialized AI agents, 20 free marketing tools, and brands already onboarded. No co-founder. No team. Just me, Claude, and an unhealthy amount of caffeine.

This isn't a pitch. This is the full technical breakdown of how I built it: what worked, what broke, and what I'd do differently.

Why Marketing Teams Are the Perfect Agent Use Case

I spent 6 years in marketing strategy, data analytics, and growth. Managed $10M+ in ad spend across Meta, Google, and TikTok for 100+ DTC brands. And I noticed something: 80% of what marketing teams do every day is repeatable.

Pull data. Analyze trends. Spot anomalies. Forecast performance. Allocate budget. Refresh creatives. Report to stakeholders.

Every morning, a media buyer opens Ads Manager, exports data to a spreadsheet, stares at it for 30 minutes, and makes the same three decisions they made yesterday. Multiply that across a team of 5-10 people, and you have hundreds of hours per month of intelligent-but-repetitive work.

That's not a chatbot problem. That's an agent problem.

A chatbot answers questions. An agent takes a goal, breaks it into steps, executes them across systems, and delivers results, with memory of what worked last time.

The Architecture: 7 Agents, 1 Orchestrator

I didn't build one monolithic AI. I built a team. Each agent has a specific role, specific tools, and a specific personality that reflects how real marketing specialists actually think.

Here's the cast:

Maya, Account Manager: Remembers everything. Never asks twice. She's built a living history of your business across 800+ conversations. Ask her about a decision from 6 months ago and she'll recall the context, the constraints, and what happened next.

Felix, Performance Analyst: Predicts revenue 90 days out at 91% accuracy. Learns from every prediction, the right ones AND the wrong ones. He sees trends forming weeks before they're obvious and gets more accurate the longer he works with you.

Sam, Media Strategist: Tests 50+ scenarios before you spend a dollar. You ask about a 70/30 Meta/Google split? He'll also test 65/35, 60/40, AND what happens if you add TikTok at 15%. His job is preventing expensive mistakes.

Dana, Data Engineer: One truth across platforms. No conflicts. When Meta says 847 conversions but Shopify shows 1,243, Dana reconciles the two before you ever see the discrepancy. Every number is traceable, verifiable, and matched across systems.

Parker, Attribution Analyst: Shows which ads actually drove sales vs. which ones just took credit. Meta claiming conversions that would've happened anyway? Google inflating ROAS? Parker delivers the cold, hard truth about incremental impact.

Dex, Marketing Operations: Auto-delivers reports, alerts, and anomaly notifications to Slack and email. Told him once that you prefer Sheets? He never asks again. Just delivers Sheets. Forever. His job is making sure nobody wastes time on manual work.

Olivia, Creative Strategist: Finds winning patterns across 10,000+ ads. Analyzes creatives using computer vision, predicts fatigue, scores Creative DNA (uniqueness, scroll-stop power, emotional resonance), and predicts creative performance before you spend a dollar.
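Parker's distinction between ads that drove sales and ads that just took credit is commonly estimated with a holdout (conversion-lift) test: ads are withheld from a random control group, and only the difference in conversion rate is credited to the ads. The sketch below shows that arithmetic under stated assumptions. The `incrementality` helper, its field names, and all numbers are hypothetical and illustrative, not Parker's actual method or client data.

```typescript
// Hypothetical summary of a holdout test: ads shown to the treatment group,
// withheld from a randomly chosen control group.
interface HoldoutResult {
  treatmentUsers: number;
  treatmentConversions: number;
  controlUsers: number;
  controlConversions: number;
  platformReportedConversions: number; // what the ad platform attributes to itself
}

interface IncrementalityReport {
  incrementalConversions: number; // conversions the ads actually caused
  liftPct: number;                // relative lift of treatment over control, in %
  inflationFactor: number;        // platform-reported / incremental
}

function incrementality(r: HoldoutResult): IncrementalityReport {
  const treatmentRate = r.treatmentConversions / r.treatmentUsers;
  const controlRate = r.controlConversions / r.controlUsers;
  // Conversions the treatment group would have produced anyway (at the control rate).
  const baseline = controlRate * r.treatmentUsers;
  const incrementalConversions = r.treatmentConversions - baseline;
  return {
    incrementalConversions,
    liftPct: ((treatmentRate - controlRate) / controlRate) * 100,
    // With no measurable lift, every claimed conversion counts as inflation.
    inflationFactor:
      incrementalConversions > 0
        ? r.platformReportedConversions / incrementalConversions
        : Infinity,
  };
}

// Illustrative numbers only.
const report = incrementality({
  treatmentUsers: 100_000,
  treatmentConversions: 1_200,
  controlUsers: 100_000,
  controlConversions: 900,
  platformReportedConversions: 1_100,
});
console.log(report);
```

With these made-up numbers the platform claims 1,100 conversions while only about 300 are incremental, an over-credit of roughly 3.7 times. A real audit would also report a confidence interval, since a small control group makes the lift estimate noisy.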

How They Work Together

This is where it gets interesting. The agents don't work in isolation. An orchestrator coordinates them.

When a brand asks "What happened to our performance this week?", here's what actually runs:

Orchestrator receives goal

→ Dana: Pull last 7 days vs prior 7 days (Meta + Google + Shopify)

→ Felix: Run performance analysis and forecast trends

→ Olivia: Check creative fatigue scores on active ads

→ Parker: Cross-reference platform-reported vs actual conversions

→ Sam: Synthesize findings into strategic recommendations

→ Dex: Format and deliver via Slack + weekly digest

Each agent passes typed data to the next. Every step logs provenance (where the data came from), freshness (how old it is), and confidence (how much to trust it).

The key technical decisions:

Typed contracts between agents. Every agent has a strict input/output schema. Dana outputs { table: Row[], schema, freshness, source }. Felix expects exactly that format. No ambiguity, and no hallucinated fields leaking from one step into the next.
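A minimal sketch of such a contract, assuming hypothetical internals for `schema` and `freshness` (the four top-level field names come from the text; everything inside them is an assumption). Because TypeScript types disappear at runtime, the sketch pairs the static type with a runtime guard, `isDanaOutput` (a hypothetical name), that Felix could call before trusting anything produced by an API or an LLM.

```typescript
type Row = Record<string, string | number | null>;

// Assumed column description; the real schema format is not specified.
interface ColumnSpec {
  name: string;
  type: "string" | "number";
}

// Dana's output contract: { table: Row[], schema, freshness, source }.
interface DanaOutput {
  table: Row[];
  schema: ColumnSpec[];
  freshness: { pulledAt: string; maxAgeMinutes: number };
  source: "meta" | "google" | "shopify";
}

// Runtime guard: rejects anything that does not match the contract exactly.
function isDanaOutput(value: unknown): value is DanaOutput {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  if (!Array.isArray(v.table) || !Array.isArray(v.schema)) return false;
  if (!["meta", "google", "shopify"].includes(v.source as string)) return false;

  const f = v.freshness as Record<string, unknown> | undefined;
  if (typeof f?.pulledAt !== "string" || typeof f?.maxAgeMinutes !== "number") return false;

  // Every schema entry must be a well-formed column description.
  const schema = v.schema as unknown[];
  const schemaOk = schema.every((c) => {
    const col = c as Partial<ColumnSpec> | null;
    return typeof col?.name === "string" && (col.type === "string" || col.type === "number");
  });
  if (!schemaOk) return false;
  const cols = schema as ColumnSpec[];

  // Every row must contain exactly the declared columns, with matching types
  // (null is allowed for missing values).
  return (v.table as unknown[]).every((row) => {
    if (typeof row !== "object" || row === null) return false;
    const r = row as Record<string, unknown>;
    if (Object.keys(r).length !== cols.length) return false;
    return cols.every((col) => r[col.name] === null || typeof r[col.name] === col.type);
  });
}
```

Felix would call `isDanaOutput(payload)` and refuse to run if it returns false. A schema library such as Zod can derive the same check from a single declaration; the hand-written guard just keeps the example dependency-free.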

Provenance on everything. Every number in every report has a trail: "This ROAS figure came from Meta API, pulled 3 minutes ago, computed as revenue/spend from the Metrics Catalog." When a client questions a number, the system can explain exactly where it came from.
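One way to attach that trail is to return every metric together with its provenance instead of as a bare number. The `TracedMetric` shape and the `computeRoas` and `explain` helpers below are hypothetical illustrations of that idea, not the platform's actual code.

```typescript
// Hypothetical provenance record carried alongside every computed metric.
interface Provenance {
  source: string;                 // e.g. "Meta API"
  pulledAt: Date;                 // when the inputs were fetched
  formula: string;                // definition taken from the metrics catalog
  inputs: Record<string, number>; // raw values the metric was computed from
}

interface TracedMetric {
  name: string;
  value: number;
  provenance: Provenance;
}

function computeRoas(revenue: number, spend: number, source: string, pulledAt: Date): TracedMetric {
  if (spend <= 0) throw new Error("ROAS is undefined for non-positive spend");
  return {
    name: "ROAS",
    value: revenue / spend,
    provenance: { source, pulledAt, formula: "revenue / spend", inputs: { revenue, spend } },
  };
}

// Renders the explanation a client sees when they question a number.
function explain(m: TracedMetric, now: Date): string {
  const ageMin = Math.round((now.getTime() - m.provenance.pulledAt.getTime()) / 60_000);
  return `This ${m.name} figure (${m.value.toFixed(2)}) came from ${m.provenance.source}, ` +
    `pulled ${ageMin} minutes ago, computed as ${m.provenance.formula}.`;
}

const pulledAt = new Date("2024-05-01T08:00:00Z");
const roas = computeRoas(5_000, 1_250, "Meta API", pulledAt);
console.log(explain(roas, new Date("2024-05-01T08:03:00Z")));
// This ROAS figure (4.00) came from Meta API, pulled 3 minutes ago, computed as revenue / spend.
```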

Memory across runs. The agents remember what they did last time. If Felix flagged an anomaly last Tuesday, and the same pattern appears this Tuesday, the system says "this is recurring" instead of treating it as new. This is the compound learning effect: the agents get smarter about each brand over time.
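The recurring-anomaly check can be sketched as a lookup against stored memory. This assumes a hypothetical `AnomalyMemory` record (standing in for an AgentMemory row) and a deliberately simple rule: same brand, metric, direction, and weekday within a 28-day lookback. Both the record shape and the rule are assumptions chosen to mirror the Tuesday example.

```typescript
// Hypothetical memory entry written after each run.
interface AnomalyMemory {
  brandId: string;
  metric: string; // e.g. "cpa"
  direction: "up" | "down";
  detectedAt: Date;
}

const DAY_MS = 24 * 60 * 60 * 1000;

// "recurring" if the same brand/metric/direction was flagged earlier on the
// same UTC weekday within the lookback window; otherwise "new".
function classifyAnomaly(
  memory: AnomalyMemory[],
  current: AnomalyMemory,
  lookbackDays = 28,
): "recurring" | "new" {
  const isRecurring = memory.some((m) => {
    const ageMs = current.detectedAt.getTime() - m.detectedAt.getTime();
    return (
      m.brandId === current.brandId &&
      m.metric === current.metric &&
      m.direction === current.direction &&
      m.detectedAt.getUTCDay() === current.detectedAt.getUTCDay() &&
      ageMs > 0 &&
      ageMs <= lookbackDays * DAY_MS
    );
  });
  return isRecurring ? "recurring" : "new";
}

const memory: AnomalyMemory[] = [
  // Flagged on Tuesday, 23 April 2024.
  { brandId: "acme", metric: "cpa", direction: "up", detectedAt: new Date("2024-04-23T09:00:00Z") },
];
// Same pattern the following Tuesday.
console.log(classifyAnomaly(memory, {
  brandId: "acme", metric: "cpa", direction: "up", detectedAt: new Date("2024-04-30T09:00:00Z"),
})); // "recurring"
```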

The 20 Free Tools: Distribution As Architecture

Here's something most AI startups get wrong: they build one product and then try to market it. I inverted it.

Before the platform, I built 20 standalone tools, each solving one specific marketing problem in under 30 seconds:

- Creative Fatigue Predictor: upload an ad, get a fatigue prediction using GPT-4 Vision

- Ad Account Audit: finds wasted spend in 30 seconds

- AI Visibility Tracker: shows if ChatGPT and Perplexity recommend you

- Competitor Ad Intel: reverse-engineers competitor strategy

- Incrementality Audit: exposes platform inflation

- Landing Page Analyzer: finds conversion killers

- Revenue Forecaster: predicts next 90 days with confidence bands

- SKU Profitability: exposes which products lose money despite good ROAS

- And 12 more.
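The SKU Profitability idea comes down to one formula. Profit after ads is revenue × margin rate − ad spend, which is positive only when ROAS (revenue / spend) exceeds the break-even ROAS of 1 / margin rate. A minimal sketch with hypothetical SKUs and margins; the numbers are illustrative, and the simplified margin definition ignores returns and overhead.

```typescript
// Hypothetical per-SKU inputs. marginRate is contribution margin (after COGS,
// shipping, and fees) as a fraction of revenue; definitions vary by business.
interface SkuStats {
  sku: string;
  adSpend: number;
  revenue: number;
  marginRate: number;
}

interface SkuVerdict {
  sku: string;
  roas: number;
  breakEvenRoas: number;  // ROAS at which margin exactly covers ad spend
  profitAfterAds: number; // contribution left after paying for the ads
}

function skuProfitability(s: SkuStats): SkuVerdict {
  return {
    sku: s.sku,
    roas: s.revenue / s.adSpend,
    breakEvenRoas: 1 / s.marginRate,
    profitAfterAds: s.revenue * s.marginRate - s.adSpend,
  };
}

const verdicts = [
  { sku: "TEE-BLK", adSpend: 1_000, revenue: 3_000, marginRate: 0.25 },
  { sku: "HOODIE-GRY", adSpend: 1_000, revenue: 2_500, marginRate: 0.6 },
].map(skuProfitability);

// TEE-BLK: ROAS 3 looks healthy, but break-even ROAS is 4, so it loses 250.
// HOODIE-GRY: ROAS 2.5 looks weaker, but break-even is about 1.67, so it earns 500.
console.log(verdicts);
```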

Each tool is a standalone page that ranks on Google. Each one captures leads. Each one demonstrates the kind of analysis the full platform does autonomously.

The tools aren't separate from the platform. They're the visible surface of the agents underneath. The Creative Fatigue Predictor IS Olivia. The Ad Account Audit IS Parker + Dana. The Revenue Forecaster IS Felix running Prophet models.

This means every free tool user is experiencing the agent system without knowing it. And when they want it running 24/7 on their actual data, the upgrade path is obvious.

Technical Stack

For anyone who wants to build something similar, here's what's under the hood:

Framework: Next.js 14 (App Router) with TypeScript end-to-end. Type safety from database to UI isn't optional when you have 7 agents passing data to each other.

Database: PostgreSQL via Prisma. Schemas for AgentRun, AgentStep, AgentArtifact, and AgentMemory track every execution. Every agent call is a row in AgentStep with inputs, outputs, status, and timing.
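To make "every agent call is a row" concrete, here is an in-memory stand-in for the AgentStep table. The field names and the `StepLog` class are assumptions; with Prisma, each write would become a `prisma.agentStep.create` or `prisma.agentStep.update` call, assuming a model named AgentStep with matching fields.

```typescript
type StepStatus = "running" | "succeeded" | "failed";

// Hypothetical shape of one AgentStep row.
interface AgentStepRow {
  id: number;
  runId: string;
  agent: string;
  inputs: unknown;
  outputs: unknown;
  status: StepStatus;
  startedAt: Date;
  finishedAt: Date | null;
  error: string | null;
}

// In-memory stand-in for the table; timestamps are injectable for testing.
class StepLog {
  private rows: AgentStepRow[] = [];

  start(runId: string, agent: string, inputs: unknown, now = new Date()): AgentStepRow {
    const row: AgentStepRow = {
      id: this.rows.length + 1, runId, agent, inputs,
      outputs: null, status: "running", startedAt: now, finishedAt: null, error: null,
    };
    this.rows.push(row);
    return row;
  }

  succeed(row: AgentStepRow, outputs: unknown, now = new Date()): void {
    row.outputs = outputs;
    row.status = "succeeded";
    row.finishedAt = now;
  }

  fail(row: AgentStepRow, err: unknown, now = new Date()): void {
    row.status = "failed";
    row.error = err instanceof Error ? err.message : String(err);
    row.finishedAt = now;
  }

  // Step timing in milliseconds; null while the step is still running.
  durationMs(row: AgentStepRow): number | null {
    return row.finishedAt ? row.finishedAt.getTime() - row.startedAt.getTime() : null;
  }

  // All steps belonging to one AgentRun.
  forRun(runId: string): AgentStepRow[] {
    return this.rows.filter((r) => r.runId === runId);
  }
}
```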

LLM Layer: GPT-4o for vision analysis and complex reasoning. Claude for strategy synthesis and long-context work. Temperature kept low (0.1-0.3) for consistency. Forced JSON output everywhere because agents need structured data, not prose.

Caching: Redis with TTLs by data grain. Hourly-grain data is cached for 5 minutes; daily-grain data for 1-4 hours. The source planner decides whether to hit the API, the cache, or the warehouse based on each request's freshness requirements.
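The grain-based TTLs and the source planner can be sketched as two small functions. The TTL values come from the text; treating the 1-4 hour daily range as 1 hour for today's still-changing data and 4 hours for closed days is an assumption, as are the names `cacheTtlSeconds` and `planSource` and the cache-before-warehouse preference.

```typescript
type Grain = "hourly" | "daily";

// TTL policy by data grain (the today/closed-day split is an assumption).
function cacheTtlSeconds(grain: Grain, isToday: boolean): number {
  if (grain === "hourly") return 5 * 60;
  return isToday ? 60 * 60 : 4 * 60 * 60;
}

type SourceChoice = "cache" | "warehouse" | "api";

interface SourceState {
  cacheAgeSeconds: number | null;     // null = cache miss
  warehouseAgeSeconds: number | null; // null = not yet ingested
}

// Hypothetical planner: use the cheapest source that still satisfies the
// caller's freshness requirement, falling back to a live API call.
function planSource(maxAgeSeconds: number, s: SourceState): SourceChoice {
  if (s.cacheAgeSeconds !== null && s.cacheAgeSeconds <= maxAgeSeconds) return "cache";
  if (s.warehouseAgeSeconds !== null && s.warehouseAgeSeconds <= maxAgeSeconds) return "warehouse";
  return "api";
}
```

In Redis the TTL would typically be applied when writing the key, for example with `SET key value EX <seconds>`, so stale entries expire on their own.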

Integrations: Meta Marketing API, Google Ads API, GA4, Shopify, Slack. OAuth flows with encrypted token storage and rotation. Each integration has its own ingestion pipeline and health monitoring.
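For the encrypted token storage, a common approach in Node.js is authenticated encryption with AES-256-GCM from the built-in `node:crypto` module. This is a sketch, not the platform's implementation: key management (where the 32-byte key lives and how it is rotated) is assumed to be handled elsewhere, and `encryptToken` and `decryptToken` are hypothetical names.

```typescript
import { createCipheriv, createDecipheriv, randomBytes } from "node:crypto";

// What gets stored in the database instead of the raw OAuth token.
interface EncryptedToken {
  iv: string;   // base64, 12 random bytes, unique per encryption
  tag: string;  // base64 GCM authentication tag
  data: string; // base64 ciphertext
}

function encryptToken(plaintext: string, key: Buffer): EncryptedToken {
  const iv = randomBytes(12); // never reuse an IV with the same key
  const cipher = createCipheriv("aes-256-gcm", key, iv);
  const data = Buffer.concat([cipher.update(plaintext, "utf8"), cipher.final()]);
  return {
    iv: iv.toString("base64"),
    tag: cipher.getAuthTag().toString("base64"),
    data: data.toString("base64"),
  };
}

function decryptToken(enc: EncryptedToken, key: Buffer): string {
  const decipher = createDecipheriv("aes-256-gcm", key, Buffer.from(enc.iv, "base64"));
  decipher.setAuthTag(Buffer.from(enc.tag, "base64"));
  // final() throws if the ciphertext or tag has been tampered with.
  return Buffer.concat([
    decipher.update(Buffer.from(enc.data, "base64")),
    decipher.final(),
  ]).toString("utf8");
}
```

GCM requires a fresh IV for every encryption under the same key, which is why the IV is generated per call and stored next to the ciphertext; the auth tag lets decryption detect any modification of the stored record.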

Agent Orchestration: Currently single-process (all agents run as modules within the Next.js API). Task graph with topological sort for execution order. Parallel execution for independent data pulls. Retry logic with jitter per step.

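```typescript
// Run each agent in dependency order: topoSort yields a node only after all
// of its dependencies. Helpers (topoSort, callAgent, etc.) are not shown here;
// the persistence and delivery helpers are assumed to be async, so they are awaited.
for (const node of topoSort(taskGraph)) {
  try {
    await recordStepStart(node); // write the AgentStep row (status: running)

    const outputs = await callAgent(node.agent, {
      runId,
      ...node.inputs,
    });

    await saveStepOutputs(node, outputs); // persist before downstream steps read them
    criticCheck(node, outputs);           // validate against the Metrics Catalog
  } catch (e) {
    await handleFail(node, e);  // record the failure on the step
    if (!node.canSkip) throw e; // required steps abort the whole run
  }
}

await composeAndDeliver(runId);
```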
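The loop above relies on a `topoSort` helper, and the stack description mentions parallel execution for independent pulls and retries with jitter per step; none of that is shown. A minimal sketch of both under stated assumptions: `TaskNode`, `topoLevels`, and `withRetry` are hypothetical names, `topoLevels` is Kahn's algorithm grouped into levels (nodes in the same level are mutually independent and could run concurrently), and the retry uses "full jitter" exponential backoff, one common scheme, not necessarily the one the platform uses.

```typescript
// Hypothetical task-graph node: an agent step plus the ids it depends on.
interface TaskNode {
  id: string;
  dependsOn: string[];
}

// Kahn's algorithm, grouped into levels. Every node's dependencies sit in
// earlier levels. Throws on cycles or unknown dependencies.
function topoLevels(nodes: TaskNode[]): string[][] {
  const indegree = new Map<string, number>();
  const dependents = new Map<string, string[]>();
  for (const n of nodes) {
    indegree.set(n.id, n.dependsOn.length);
    dependents.set(n.id, []);
  }
  for (const n of nodes) {
    for (const dep of n.dependsOn) {
      const list = dependents.get(dep);
      if (!list) throw new Error(`unknown dependency ${dep} of ${n.id}`);
      list.push(n.id);
    }
  }

  const levels: string[][] = [];
  let frontier = nodes.filter((n) => n.dependsOn.length === 0).map((n) => n.id);
  let seen = 0;
  while (frontier.length > 0) {
    levels.push(frontier);
    seen += frontier.length;
    const next: string[] = [];
    for (const id of frontier) {
      for (const child of dependents.get(id)!) {
        const remaining = indegree.get(child)! - 1;
        indegree.set(child, remaining);
        if (remaining === 0) next.push(child); // all dependencies done
      }
    }
    frontier = next;
  }
  if (seen !== nodes.length) throw new Error("task graph has a cycle");
  return levels;
}

// Retry with full-jitter exponential backoff: before retry n (n = 0, 1, ...),
// wait a random time in [0, baseMs * 2^n). `random` is injectable for tests.
async function withRetry<T>(
  fn: () => Promise<T>,
  maxAttempts = 3,
  baseMs = 200,
  random: () => number = Math.random,
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await fn();
    } catch (e) {
      if (attempt + 1 >= maxAttempts) throw e;
      const delay = random() * baseMs * 2 ** attempt;
      await new Promise((resolve) => setTimeout(resolve, delay));
    }
  }
}
```

A level could then run as `await Promise.all(level.map((id) => withRetry(() => runStep(id))))`, with `runStep` standing in for a hypothetical per-node executor. Jitter spreads retries out so that several steps failing against the same rate-limited API don't all retry at the same instant.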

Critic Layer: Before any output reaches the user, a Critic agent validates it against the Metrics Catalog. Is CTR above 100%? That's impossible. Is spend negative? Block it. This catches hallucinations and computation errors before they erode trust.
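A rule check of that kind can be sketched as range validation against a catalog. The catalog entries, the ranges, and the policy of also blocking metrics the catalog doesn't know are assumptions for illustration, and `critic` is a hypothetical name.

```typescript
// Hypothetical metrics-catalog rules: each metric has a valid range.
interface MetricRule {
  min: number;
  max: number;
}

const METRICS_CATALOG: Record<string, MetricRule> = {
  ctr: { min: 0, max: 1 },          // click-through rate as a fraction; above 100% is impossible
  spend: { min: 0, max: Infinity }, // negative spend is impossible
  roas: { min: 0, max: Infinity },
};

interface Violation {
  metric: string;
  value: number;
  reason: string;
}

// Returns every violation; an empty array means the output may be delivered.
function critic(metrics: Record<string, number>): Violation[] {
  const violations: Violation[] = [];
  for (const [metric, value] of Object.entries(metrics)) {
    const rule = METRICS_CATALOG[metric];
    if (!rule) {
      violations.push({ metric, value, reason: "metric not in catalog" });
    } else if (!Number.isFinite(value)) {
      violations.push({ metric, value, reason: "not a finite number" });
    } else if (value < rule.min || value > rule.max) {
      violations.push({ metric, value, reason: `outside [${rule.min}, ${rule.max}]` });
    }
  }
  return violations;
}
```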

What I Got Wrong

Building this solo in 6 months means making fast decisions. Some were wrong:

Over-investing in the UI before validating the agent logic. I spent days on beautiful dashboards when I should have shipped ugly-but-correct agent outputs to Slack first. The insight: agents don't need a pretty UI to prove value. A Slack message with the right insight at the right time is worth more than a dashboard nobody logs into.

Trying to support every platform from day one. Meta alone has enough complexity for months of work. I should have gone deep on Meta first, proven the agents work flawlessly for one platform, then expanded. Instead, I wired up Google and TikTok skeletons that distracted from core quality.

Underestimating entity resolution. When a user says "how's the Nike campaign doing?", mapping that to a specific campaign ID in a specific ad account is surprisingly hard. Fuzzy matching, disambiguation, active vs. paused entities. I should have invested more time here earlier.
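A simplified version of that resolution step might look like the following. It uses plain token overlap as a stand-in for fuzzier matching (edit distance, embeddings), prefers active campaigns over paused ones, and returns an explicit `ambiguous` result instead of guessing. The `Campaign` shape, the stop-word list, the threshold, and the function names are hypothetical.

```typescript
// Hypothetical campaign record as returned by an ad platform.
interface Campaign {
  id: string;
  name: string;
  status: "ACTIVE" | "PAUSED";
}

type Resolution =
  | { kind: "match"; campaign: Campaign }
  | { kind: "ambiguous"; candidates: Campaign[] }
  | { kind: "none" };

const tokens = (s: string): Set<string> =>
  new Set(s.toLowerCase().split(/[^a-z0-9]+/).filter(Boolean));

// Share of query tokens found in the campaign name (1 = all found).
function score(query: string, name: string): number {
  const q = tokens(query);
  const n = tokens(name);
  if (q.size === 0) return 0;
  let hits = 0;
  q.forEach((t) => { if (n.has(t)) hits++; });
  return hits / q.size;
}

// Resolves a mention like "the Nike campaign" to one campaign, preferring
// active campaigns and asking the user to disambiguate on ties.
function resolveCampaign(mention: string, campaigns: Campaign[], threshold = 0.5): Resolution {
  const stop = new Set(["the", "campaign", "campaigns", "how", "is", "doing", "s"]);
  const query = Array.from(tokens(mention)).filter((t) => !stop.has(t)).join(" ");
  const scored = campaigns
    .map((c) => ({ c, s: score(query, c.name) }))
    .filter((x) => x.s >= threshold);
  if (scored.length === 0) return { kind: "none" };

  const best = Math.max(...scored.map((x) => x.s));
  let top = scored.filter((x) => x.s === best).map((x) => x.c);
  const active = top.filter((c) => c.status === "ACTIVE");
  if (active.length > 0) top = active; // paused campaigns only if nothing is active
  return top.length === 1 ? { kind: "match", campaign: top[0] } : { kind: "ambiguous", candidates: top };
}

const campaigns: Campaign[] = [
  { id: "1", name: "Nike - Spring Sale 2024", status: "PAUSED" },
  { id: "2", name: "Nike | Summer Prospecting", status: "ACTIVE" },
  { id: "3", name: "Adidas Retargeting", status: "ACTIVE" },
];
// Both Nike campaigns match equally; the active one (id "2") wins.
console.log(resolveCampaign("how's the Nike campaign doing?", campaigns));
```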

What I Got Right

Starting with free tools. The tools gave me distribution before I had a product. By the time the platform launched, I had traffic, leads, and users who already trusted the analysis quality.

Agent memory from day one. Most AI products treat every interaction as stateless. By storing agent runs, step outputs, and brand-specific memory, the system compounds its understanding of each brand. The 10th analysis is meaningfully better than the 1st.

Provenance and transparency. Marketing people are naturally skeptical of AI-generated insights. By showing exactly where every number comes from, how fresh it is, and what methodology was used, trust builds faster than with a black-box approach.

What's Next

The platform is live. Brands are onboarded. The agents are running.

The next milestone is simple: get to 10 brands that would be upset if Cresva disappeared. Not 100. Not 1,000. Ten brands that rely on these agents daily.

Everything else (more agents, more platforms, more features) follows from that.

If you're a CMO, ecommerce strategist, or growth leader spending $500K+ on ads and want to see what AI agents running your marketing operations actually looks like, the free tools are live at cresva.ai.

And if you're a founder building AI agents for a vertical, here's my biggest advice: build the tools first, then the platform. Distribution before infrastructure. Always.