Natural Language Querying (NLQ) over enterprise data warehouses is moving from experimental to production. Teams are deploying NLQ interfaces that let business users ask questions in plain English and get results without writing SQL. In practice, accuracy in production rarely matches what teams see during evaluation. The common assumption is that the model isn't reliable enough, or that the tooling simply isn't mature yet. In most cases, neither is true — the model is working as intended, but it's operating against a schema it cannot meaningfully interpret. This article examines why contextual metadata is the missing layer, and demonstrates what enriching it actually changes.

The Wrong Diagnosis

Most teams building NLQ interfaces over enterprise warehouses hit the same wall. Results come back wrong, or the agent gets stuck — looping through tables and schemas, issuing query after query trying to figure out which one is even relevant. The natural response is to blame the model, swap providers, and tighten the prompts until something improves. Accuracy might improve slightly, but the same problems resurface a week later. Eventually the assumption hardens: the model isn't reliable enough, or NLQ over a warehouse this complex just isn't production-ready. Neither is correct.

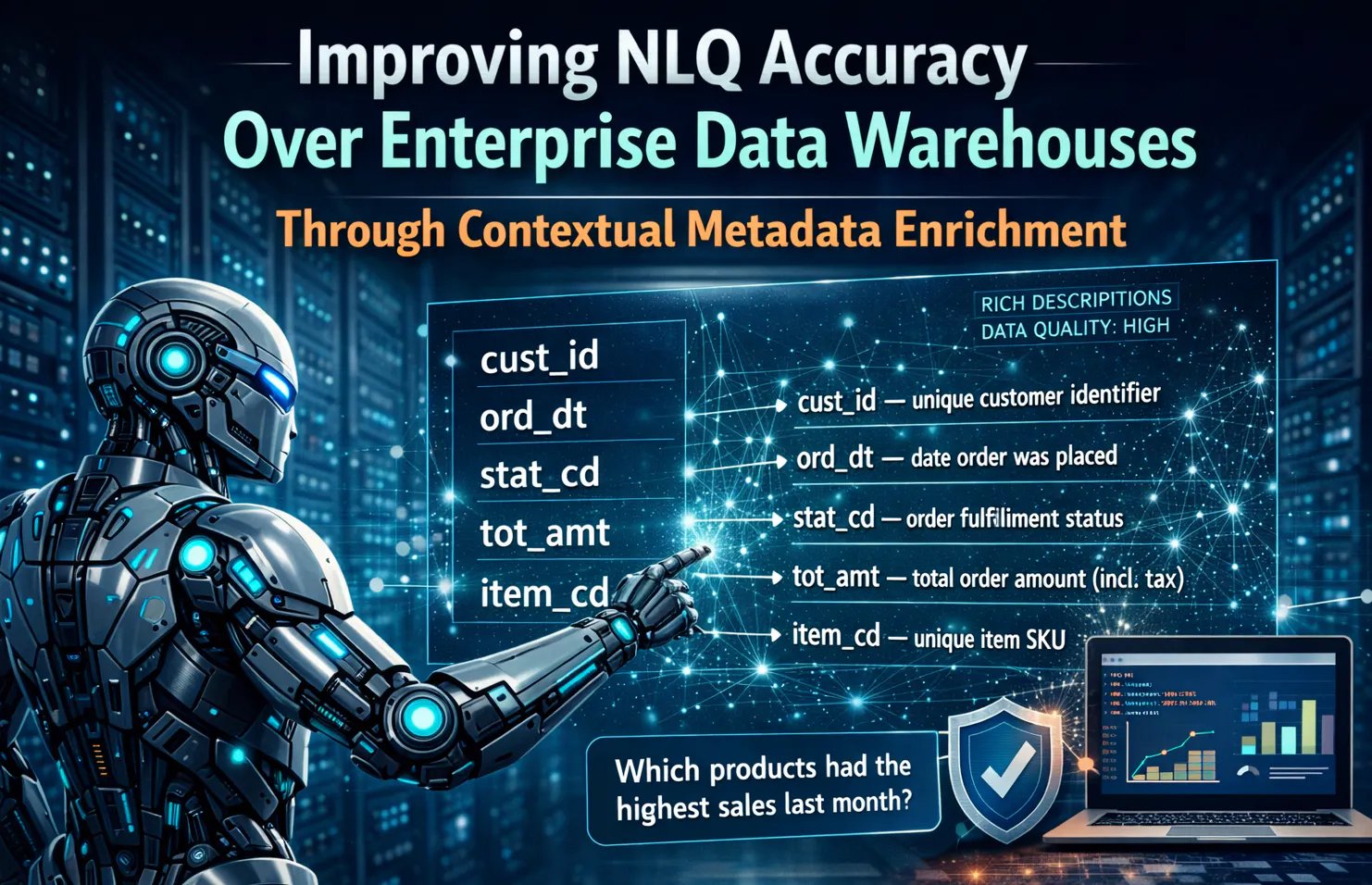

The model is doing exactly what it was designed to do: interpret a question, reason over the context it was given, and generate SQL to execute against the warehouse. The problem is that the context it receives is insufficient. Take stat_cd — a four-character VARCHAR with no description, no documented values, nothing that indicates whether it tracks fulfillment state, payment state, or something else entirely. The model guesses, and sometimes gets it right — but when the ambiguity can't be resolved at all, it explores, querying multiple schemas and tables trying to determine which one is right. The queries come out wrong, or the agent stalls.

This is not a model capability problem. It is a metadata problem, and that distinction matters because the two failure modes have completely different fixes. Swapping models when your metadata is the issue is the equivalent of upgrading your query engine when your data is dirty. The infrastructure gets more expensive, the results stay unreliable.

What the Model Is Actually Working With

When an NLQ agent connects to a warehouse through an MCP-integrated tool surface, it starts with no knowledge of your schema at all. It discovers the warehouse through tool calls — listing schemas, describing tables, inspecting column definitions, and what it builds up from that exploration is a bare structural representation: table names, column names, and data types, and in most production warehouses, that is about as far as it gets. The model has no way to know which table is the authoritative source for a given business concept. When a user asks about sales performance, it has to guess which of three tables with "sales" somewhere in the name actually holds the right data, and which fields within that table represent the metrics the business actually tracks. It is making probabilistic bets on schema intent, not reading documented meaning.

The gap this creates is not about model capability. Even the most capable models receiving an ambiguous schema will do what any reasonable system does when context is missing: make assumptions, infer meaning from naming conventions and data types, and explore further when inference fails. That exploration — repeated schema introspection, trial queries, multi-table scans is not a bug. It is the model trying to compensate for a context problem it cannot solve on its own.

What the model actually needs is not a better architecture or a larger context window. It is metadata — the contextual layer that explains what columns mean, what values they hold, how tables relate, and what the business logic behind the numbers actually is. Without it, the model can see the structure of your warehouse but has no idea what any of it actually means.

What Production Schemas Look Like

To make this concrete, consider a simple e-commerce data warehouse on Amazon Redshift — three tables covering customers, orders, and a daily sales summary:

customers — customer_id, email, region_code, acq_src, tier_flg, created_at

orders — order_id, customer_id, order_date, stat_cd, tot_amt, disc_pct, fulfil_ts

sales_summary — report_dt, report_qtr, order_cnt, total_revenue, rtn_amt

This is a realistic schema, not deliberately broken rather simply built the way most warehouses get built. Some columns are self-explanatory, others carry meaning that was obvious to whoever created the table and unclear to everyone since.

The most consequential failure mode here is source-of-truth ambiguity. Take two common questions: what were total sales last month, and how many orders were cancelled in Q3. For the first, the model sees both orders.tot_amt and sales_summary.total_revenue as plausible answers. For the second, it has no way to determine from the schema alone whether stat_cd in orders tracks cancellations, what value represents that state, or whether cancelled orders should be excluded from revenue calculations entirely. One table is transactional, one is aggregated — but nothing in the schema documents which table is the authoritative source for sales reporting, at what grain each operates, or when one should be preferred over the other. The model also has no way to know whether rtn_amt represents refunds that should be netted out of revenue, or something else entirely. Without metadata, every query involving revenue or order status is built on assumptions the model cannot verify.

There is nothing wrong with this schema for its original purpose. It just was never built with a reasoning layer in mind — and the metadata that would make it interpretable exists somewhere, in someone's head, may be a Confluence page, or in a Slack thread from two years ago, but just not anywhere the model can reach.

Testing the Metadata Gap

To demonstrate how metadata changes what the model can do, we ran a three-stage test using Kiro-CLI with the Amazon Redshift MCP server against a more complete e-commerce schema — customers, products, and promotions as dimensions, with orders and order line items as the transactional facts:

The test that follows uses the above schema loaded into an Amazon Redshift instance, with the Redshift MCP server handling all agent-to-warehouse communication through Kiro-CLI. If you want to replicate the setup, the AWS Big Data Blog has a detailed walkthrough of the Amazon Redshift MCP server configuration.

We ran two questions against the schema at each stage:

- What was the total revenue from orders placed last month?

- Which product categories generated the most revenue from promoted orders in Q3?

The first seems simple but requires the model to identify which table and fields represent authoritative revenue figures. The second is more demanding as it involves joining across four tables and correctly interpreting category codes, order status values, and promotion data.

Stage 1 — No Context

With no metadata provided, the agent starts exploring autonomously, working through the available schemas, table structures, and column definitions before attempting either query. For this schema it made eleven tool calls before attempting either query, spending most of that exploration trying to determine which table was the right source for revenue data. It identified both orders and order_items as candidates for Q1 and had no way to resolve the ambiguity.

Query produced for Q1:

SELECT SUM(total_amount) AS total_revenue

FROM ecomm_dw.orders

WHERE order_date >= DATE_TRUNC('month', CURRENT_DATE - INTERVAL '1 month')

AND order_date < DATE_TRUNC('month', CURRENT_DATE);

Q1 is partially correct — it queries the right table and the right field, but does not exclude cancelled orders because the agent has no knowledge of stat_cd values or their business meaning.

Query produced for Q2:

SELECT p.category_cd,

SUM(oi.quantity * oi.unit_price) AS total_revenue

FROM ecomm_dw.order_items oi

JOIN ecomm_dw.products p ON oi.product_id = p.product_id

WHERE oi.promo_id IS NOT NULL

GROUP BY p.category_cd

ORDER BY total_revenue DESC;

Q2 is structurally incomplete — it filters on promo_id IS NOT NULL as a proxy for promoted orders, missing the Q3 date filter entirely, and returns raw category_cd codes the business cannot interpret.

Stage 2 — Partial Context

At this stage the agent received table-level descriptions and basic column descriptions — enough to understand what each table represents and what the primary fields track, but without value semantics or business rule documentation. The exploration dropped to four tool calls. The agent correctly identified orders.total_amount as the revenue source for Q1 and understood the relationship between order_items, products, and promotions for Q2.

Query produced for Q1:

SELECT SUM(total_amount) AS total_revenue

FROM ecomm_dw.orders

WHERE order_date >= DATE_TRUNC('month', CURRENT_DATE - INTERVAL '1 month')

AND order_date < DATE_TRUNC('month', CURRENT_DATE)

AND stat_cd != 'CNCL';

Q1 now excludes cancelled orders — the column description for stat_cd was enough for the agent to infer the cancellation code.

Query produced for Q2:

SELECT p.category_cd,

SUM(oi.quantity * oi.unit_price) AS total_revenue

FROM ecomm_dw.order_items oi

JOIN ecomm_dw.products p ON oi.product_id = p.product_id

JOIN ecomm_dw.promotions pr ON oi.promo_id = pr.promo_id

JOIN ecomm_dw.orders o ON oi.order_id = o.order_id

WHERE EXTRACT(QUARTER FROM o.order_date) = 3

AND EXTRACT(YEAR FROM o.order_date) = 2024

GROUP BY p.category_cd

ORDER BY total_revenue DESC;

Q2 correctly joins all four tables and applies the Q3 filter. However, category_cd is still returned as a raw code rather than a readable category name, because the value semantics for that column were not provided. The revenue figure uses line-level calculation rather than orders.total_amount, which will produce different results when discounts are applied at the order level.

Stage 3 — Full Context

At this stage the agent received complete semantic metadata — table-level descriptions with grain documentation, column descriptions, enumerated value semantics for stat_cd and category_cd, relationship intent for all foreign keys, and derived field documentation for total_amount and line_discount. The exploration required two tool calls. Both queries were produced on the first attempt with no reformulation.

Query produced for Q1:

SELECT SUM(total_amount) AS total_revenue

FROM ecomm_dw.orders

WHERE order_date >= DATE_TRUNC('month', CURRENT_DATE - INTERVAL '1 month')

AND order_date < DATE_TRUNC('month', CURRENT_DATE)

AND stat_cd NOT IN ('CNCL', 'REFD');

Q1 now excludes both cancelled and refunded orders — the enumerated value semantics documented both CNCL and REFD as exclusions from revenue calculations.

Query produced for Q2:

SELECT

CASE p.category_cd

WHEN 'ELEC' THEN 'Electronics'

WHEN 'APRL' THEN 'Apparel'

WHEN 'HOME' THEN 'Home & Garden'

WHEN 'SPRT' THEN 'Sports'

ELSE p.category_cd

END AS category_name,

SUM(o.total_amount) AS total_revenue

FROM ecomm_dw.orders o

JOIN ecomm_dw.order_items oi ON o.order_id = oi.order_id

JOIN ecomm_dw.products p ON oi.product_id = p.product_id

JOIN ecomm_dw.promotions pr ON oi.promo_id = pr.promo_id

WHERE EXTRACT(QUARTER FROM o.order_date) = 3

AND EXTRACT(YEAR FROM o.order_date) = 2024

AND o.stat_cd NOT IN ('CNCL', 'REFD')

AND p.is_active = TRUE

GROUP BY p.category_cd

ORDER BY total_revenue DESC;

Q2 resolves category_cd to readable names, uses orders.total_amount as the authoritative revenue field, filters out inactive products, and excludes cancelled and refunded orders — everything the previous stages missed. Unlike the previous stages, neither query required any reformulation or additional exploration.

The Metadata That Actually Matters

The difference between Stage 1 and Stage 3 in the test is not a different model or a more carefully worded prompt. It is the presence or absence of specific metadata attributes that the model needs to reason accurately over this schema.

These fall into two kinds: Structure metadata and Content metadata.

Structure metadata tells the model what each table represents and how tables relate to each other. A table-level description is what resolves source-of-truth ambiguity — without it, the model has no documented basis to choose between summing order_items line values or using orders.total_amount for a revenue question. Relationship intent goes further than foreign key constraints: the model needs to know that promo_id in order_items links to a promotion applied at the line level, not the order level, because that distinction changes how promotion-based queries are constructed.

Content metadata tells the model what individual fields actually mean. Column descriptions provide the plain-language definition the name alone cannot convey, for example acq_channel means the marketing channel through which a customer was acquired, not a communication or sales channel. Enumerated value semantics document what constrained values represent, for example without knowing that stat_cd uses CNCL for cancelled orders and that cancelled orders should be excluded from revenue calculations, any status-based filter is a guess. Derived field semantics clarify what calculated columns include, such as whether total_amount is pre or post discount, whether it matches the sum of line items in order_items, and which field the model should use when both are available.

Without these two layers, the model is not reasoning about your data, instead it is making educated guesses about fields it cannot meaningfully interpret.

Where Teams Go Wrong

The metadata gap doesn’t exist because teams don't care about accuracy. It exists because of how NLQ projects get built, often in a rush to ship with the assumption that metadata can be filled in later although in practice it rarely gets done. The most common pattern is treating enrichment as a one-time setup task. Teams populate table and column descriptions during the initial deployment, the interface works well enough in demo conditions, and the metadata layer gets no further attention. Business logic evolves, calculation definitions change, and new status codes get added without anyone updating the descriptions that depended on them. The metadata doesn't keep pace, and accuracy degrades quietly, with no error thrown and results that may go unnoticed for weeks.

A related mistake is conflating schema documentation with NLQ-sufficient metadata. Documentation written for engineers onboarding to a code base serves a different purpose than metadata written for an LLM powered reasoning layer constructing SQL. An engineer reads "stores the order date" and fills in the rest from context, but the model has no such luxury. NLQ-sufficient metadata is more specific: it documents grain, value semantics, business rules, and field relationships in a form the model can act on directly rather than infer from.

The third pattern is using prompt engineering as a substitute. When accuracy doesn't improve, teams add system instructions that hardcode business logic, such as "always exclude cancelled orders" or "use orders.total_amount for revenue figures." It works until the schema changes, a new edge case appears, or a question arrives that the prompt didn't anticipate. The prompt becomes a fragile workaround for a metadata problem that was never fixed.

How to Fix This

The starting point is not a metadata platform or a cataloging initiative. It is an audit of the tables your NLQ interface is most likely to query first. For each table, document what it represents, what grain it operates at, and which questions it is the authoritative source for. That alone resolves the most common source-of-truth failures before any query gets run. From there, prioritize columns that carry the highest ambiguity risk: status codes with undocumented values, numeric fields where the unit or calculation basis is unclear, and foreign keys where the join direction has business logic attached. These are the columns that cause silent failures — technically valid queries that return wrong results without any indication that something went wrong.

Treat metadata coverage as an ongoing property of your NLQ interface, not just a pre-launch checklist. When a new column gets added, when a status code changes, when a business rule shifts, the metadata surface needs to reflect it. The teams that get NLQ working reliably in production are not the ones with the best models, but the ones who treated the metadata layer with the same operational attention as the data itself.

NLQ accuracy over enterprise warehouses is not a model problem, but a metadata problem. The models are sufficiently capable, so what production deployments are actually missing is a metadata layer that was built and maintained with a reasoning layer in mind.

If you're interested in the security and governance layer for NLQ over enterprise warehouses, I covered that architecture in a companion piece: A Secure Architecture for AI-Powered Natural Language Analytics Over Enterprise Data Warehouses.

Disclaimer: The author is employed by Amazon Web Services, a subsidiary of Amazon. The use of Amazon Redshift and Kiro-CLI in this article reflects personal technical experimentation and does not represent an endorsement or official recommendation by Amazon or its affiliates.

[story continues]

tags