Table of Links

2. Background

2.1 Effective Tutoring Practice

2.2 Feedback for Tutor Training

2.3 Sequence Labeling for Feedback Generation

2.4 Large Language Models in Education

3. Method

3.1 Dataset and 3.2 Sequence Labeling

3.3 GPT Facilitated Sequence Labeling

4. Results

6. Limitation and Future Works

APPENDIX

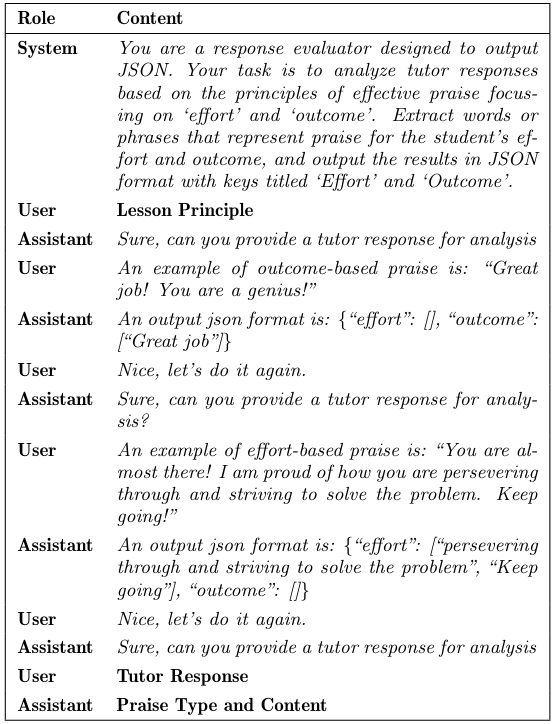

B. Input for Fine-Tuning GPT-3.5

C. Scatter Matrix of the Correlation on the Outcome-based Praise

D. Detailed Results of Fine-Tuned GPT-3.5 Model's Performance

B. INPUT FOR FINE-TUNING GPT-3.5

Note (Praise Type and Content): This part simulates an interactive environment in which the model plays the role of a response evaluator. The conversation flow is designed to mimic a real-world interaction: the system and user roles alternate to supply the context, the instruction, and the input (the tutor response) to be processed.
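As a rough illustration of the role-based structure described in the note, the sketch below builds one training record in the chat-style JSONL layout (a `messages` list of `role`/`content` pairs) that OpenAI's fine-tuning endpoint accepts for GPT-3.5. The prompt wording, the `build_record` and `to_jsonl_line` helpers, the sample tutor response, and the shape of the assistant's labeled output are all hypothetical placeholders and are not the authors' actual input. The assistant turn in particular is an assumption about how the target label might be supplied.

```python
import json

# Hypothetical system prompt: the wording is an assumption, not the paper's text.
SYSTEM_PROMPT = (
    "You are a response evaluator. Identify the praise type and the "
    "praise content in the tutor response."
)


def build_record(tutor_response: str, labeled_output: str) -> dict:
    """Build one chat-format training record.

    The system turn gives context and instruction, the user turn carries
    the tutor response, and the assistant turn (an assumption here) holds
    the expected label.
    """
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": f"Tutor response: {tutor_response}"},
            {"role": "assistant", "content": labeled_output},
        ]
    }


def to_jsonl_line(record: dict) -> str:
    """Serialize a record as a single JSONL line (one JSON object per line)."""
    return json.dumps(record, ensure_ascii=False)


# Hypothetical example; the label schema below is invented for illustration.
example = build_record(
    tutor_response="Great job getting the right answer!",
    labeled_output=json.dumps(
        {"praise_type": "outcome-based", "praise_content": "getting the right answer"}
    ),
)
line = to_jsonl_line(example)
```

Writing one such line per annotated tutor response produces a file in the layout the fine-tuning service expects. The real label format may differ from the JSON object assumed here.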

This paper is available on arXiv under the CC BY 4.0 DEED license.

Authors:

(1) Jionghao Lin, Carnegie Mellon University (jionghal@cs.cmu.edu);

(2) Eason Chen, Carnegie Mellon University (easonc13@cmu.edu);

(3) Zeifei Han, University of Toronto (feifei.han@mail.utoronto.ca);

(4) Ashish Gurung, Carnegie Mellon University (agurung@andrew.cmu.edu);

(5) Danielle R. Thomas, Carnegie Mellon University (drthomas@cmu.edu);

(6) Wei Tan, Monash University (wei.tan2@monash.edu);

(7) Ngoc Dang Nguyen, Monash University (dan.nguyen2@monash.edu);

(8) Kenneth R. Koedinger, Carnegie Mellon University (koedinger@cmu.edu).
