Summary

In this article, you’ll learn the anatomy of Kubernetes pods and walk through a NodePort Service example with containers running in pods.

Introduction to Pods

Kubernetes revolves around pods, so you need to know what they are. A pod is the runtime environment in which we deploy applications, and it is the atomic unit of scheduling in Kubernetes. One or more containers deployed together on a single host are called a pod. We will see how pods are deployed and scaled inside a Kubernetes cluster. I will then explain Kubernetes pods and the NodePort Service by running containers in pods and connecting to those running containers.

Cluster Setup

The Kubernetes cluster that I am using for this demo consists of one master node and 3 worker nodes. All 4 are VMs running CentOS on Google Cloud.

We will connect to these VMs using Cloud Shell. Let’s check the status of the nodes by running the kubectl get nodes command.

As you can see from the command output, we have 1 master and 3 worker nodes. All 4 nodes are ready and running successfully.

Now we will review the pod manifest file for an nginx container. Go to the pod working directory where the manifest file is kept. I have created one manifest file for the demo.

Like most Kubernetes object manifests, the pod manifest file has 4 required top-level fields: apiVersion, kind, metadata, and spec. So, we should have them in our YAML config file.

- apiVersion: This defines the API version that the Kubernetes object belongs to. In our case, the API version of a pod is v1.

- Kind: The kind of object that we are going to create. In our case, this is a Pod.

- Metadata: This section consists of two fields: the name and the labels. Here the name of the pod is nginx-pod.

- Labels: This is an optional field. Labels are used to logically group related pods together for displaying and managing them. The tier label specifies the environment, which in our case is dev. Labels also help with filtering. Suppose there are 1000 pods running inside our Kubernetes cluster and we want to see only the nginx pods; labels come in very handy in this type of situation.

- Spec: In this nginx example, I am deploying an nginx container from Docker Hub. The name of the container we define here is “nginx-container”, and the image is nginx. Since I am not giving a specific version, Kubernetes pulls the image tagged latest. You can also set environment variables, port numbers, and volumes for this nginx container.
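The manifest file itself is not reproduced in this text. A minimal sketch that is consistent with the fields described above might look like the following. The pod name, container name, image, and dev tier come from the description; the app label and its value are assumptions added for illustration.

```shell
# Write a minimal pod manifest to nginx-pod.yaml.
# This is a sketch reconstructed from the description, not the author's exact file.
cat > nginx-pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: nginx-pod
  labels:
    app: nginx        # assumed label, not given in the text
    tier: dev
spec:
  containers:
    - name: nginx-container
      image: nginx    # no tag given, so the image tagged latest is pulled
EOF
```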

Pod Config Creation:

We will deploy the pod using the kubectl create command. Let’s create the nginx pod. First, check whether any pods are already running inside the Kubernetes cluster.

As you can see, there are no pods running. Now we will create the pod using the kubectl create command followed by the name of the manifest file mentioned above.
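The two steps described above would look roughly like this, assuming the manifest is saved as nginx-pod.yaml in the current directory and that kubectl already points at the demo cluster:

```shell
# List pods in the current namespace; an empty cluster reports that none were found.
kubectl get pods

# Create the pod from the manifest file.
kubectl create -f nginx-pod.yaml
```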

Here nginx-pod.yaml is our manifest file, and the pod was created after issuing the above command. Now we will check again whether the pod was created by issuing the get pods command:

Here you can see that the nginx pod is running successfully. Since there are 4 nodes inside the Kubernetes cluster, how can we know which node this nginx pod is running on? We can get that information by running the get pods command with the -o wide option.

In the output, you can see the IP address of the pod and that it is running on worker node 3.

Suppose we have lost or deleted our manifest file while the nginx pod is still running inside our Kubernetes cluster. In that case, we can print the details of the running nginx pod in YAML format.
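As a sketch, the two lookups just described could be run as follows. The pod name nginx-pod is taken from the text; both output options are standard kubectl output formats.

```shell
# Show the pod together with its IP address and the node it was scheduled on.
kubectl get pods -o wide

# Print the live pod object as YAML, which can stand in for a lost manifest.
kubectl get pod nginx-pod -o yaml
```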

The output shows the nginx pod configuration in YAML format. This is useful when we want to display every minor detail of a running object.

To see the complete details of the pod, issue the command “kubectl describe pod nginx-pod | more”. Then you can see all the details about the pod:

The output shows detailed information about the pod: it is running fine on the worker node, along with its IP address and events. We can also expose this pod using a NodePort service. We will discuss Kubernetes services such as NodePort, LoadBalancer, and ClusterIP in more detail in our other Kubernetes services blog.

Expose the pods using NodePort service:

Once the app is exposed to the outside world on the internet, we will access a sample HTML webpage and also the default nginx webpage from any web browser using the node IP and node port.

Node IP: This is the external IP address of the master node or of any worker node inside the Kubernetes cluster. Besides accessing the webpage from the internet, we will also access the sample HTML webpage internally, from the nodes of the Kubernetes cluster, using the pod IP.

To create the test.html page inside the nginx root directory of nginx-pod, we need to get inside the pod first.
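Getting inside the pod is usually done with kubectl exec. The following is a minimal sketch; the choice of /bin/sh as the shell is an assumption.

```shell
# Open an interactive shell in the pod's only container.
kubectl exec -it nginx-pod -- /bin/sh

# Once inside, running `hostname` prints the pod name (nginx-pod).
```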

Here hostname is the pod name I have given i.e. nginx-pod. Now let’s create a sample “test.html” page inside the nginx root directory.

test.html:
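The file contents are not reproduced in this text. A minimal page that prints a single header might be created as shown here; the heading text is a placeholder, and the sketch assumes the current directory is the nginx document root (/usr/share/nginx/html in the official image).

```shell
# Run inside the pod, from the nginx document root.
# The heading text below is a placeholder, not the author's original.
cat > test.html <<'EOF'
<!DOCTYPE html>
<html>
  <body>
    <h1>Hello from nginx-pod</h1>
  </body>
</html>
EOF
```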

This is a very simple HTML file that just prints a header. Now let’s expose nginx-pod through a NodePort Service by running the expose command.

We have now exposed the nginx pod to the outside world using a NodePort Service. Next, we need to know the node port number on which the nginx webpage is exposed. We can get that information by running the describe command.
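The expose and describe steps described above might look like the sketch below. It assumes the Service is left with its default name, which kubectl expose takes from the pod (nginx-pod), and that nginx listens on port 80.

```shell
# Create a NodePort Service in front of the pod; the pod's labels become the selector.
kubectl expose pod nginx-pod --type=NodePort --port=80

# Inspect the Service; the NodePort field shows the port opened on every node.
kubectl describe svc nginx-pod
```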

Here svc refers to the NodePort service created for nginx-pod. In the output, you can see that the node port is 30758. To access the webpage from a web browser, we need the node IP and the node port. The node IP is the external IP address of the Kubernetes master or of any worker node inside the Kubernetes cluster.

We have already seen that the nginx pod is running on worker node 3, so let’s take the IP of worker node 3.

Here the external IP is 35.196.57.193, and our node port is 30758. So, we have everything we need to access the nginx page in the browser.

Test the node port IP:

- Now go to the browser and open the URL made up of the node IP and node port, i.e. http://35.196.57.193:30758.

- As I mentioned earlier, the node IP is a publicly routable external IP of any worker node or of the Kubernetes master node. In our case, it is the external IP address of worker node 3. We got the node port by exposing the nginx pod using the NodePort service.

- Now let’s access the test.html webpage that we created inside the pod. Here we can see that we have successfully accessed the HTML webpage externally over the internet.

- Note: You can also access the webpage using the external IP address of the master node or of any other worker node, as long as you use the same node port.

- Now let’s access the same webpage internally from the Kubernetes cluster. For this, we need the pod IP and port number. We can get that information from the describe command:

- Here the endpoint you can see is the pod IP and port. Copy the IP and port and issue a curl command against them. We can see that we are successfully accessing the default nginx webpage.

- Now let’s try accessing the test.html webpage with the pod IP and port number:
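To make the two access paths concrete, the sketch below builds both URLs. The node IP and node port are the values from the text; the pod IP is a hypothetical placeholder, since the real value is whatever the Endpoints field of the describe output shows.

```shell
# External access: node IP plus node port.
NODE_IP="35.196.57.193"   # external IP of worker node 3, from the text
NODE_PORT="30758"         # node port reported by the describe command
EXTERNAL_URL="http://${NODE_IP}:${NODE_PORT}/test.html"
echo "External URL: ${EXTERNAL_URL}"

# Internal access: pod IP plus container port.
POD_IP="10.0.0.10"        # hypothetical placeholder for the pod IP
INTERNAL_URL="http://${POD_IP}:80/test.html"
echo "Internal URL: ${INTERNAL_URL}"

# curl "${EXTERNAL_URL}"   # from any machine that can reach the node
# curl "${INTERNAL_URL}"   # from a node inside the cluster
```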

Pod Delete Operation:

In this demo, I have created two objects: a pod and a NodePort service. Now let’s delete both with the delete command followed by the pod name and the service name:
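A sketch of the deletion, assuming the Service kept the default name nginx-pod that it inherited from the pod:

```shell
# Remove the pod and the NodePort Service that was created for it.
kubectl delete pod nginx-pod
kubectl delete svc nginx-pod
```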

We can now check whether they were deleted by running the kubectl get pods and kubectl get svc commands.

Here you can see that the two objects were deleted successfully.

Kubernetes Services Anatomy:

- Imagine that we have to deploy a web app. Typically, a web app consists of a UI part and a backend database. So how do we expose the frontend web app to the outside world? And how will the UI part connect to the backend database? Another issue is that when pods get recreated, their IP addresses change, so it is difficult to stay connected to pods whose IPs change dynamically.

We can address the above problems using Kubernetes Services.

- Suppose we have a frontend web app and a backend DB, and some users are trying to access the web app. As we know, every pod in Kubernetes has a unique IP address. Pods are short-lived, and there are various reasons why they die. When the controller recreates a pod, the new pod gets a new IP address. This creates a problem.

- Let’s say backend pods provide some functionality to frontend pods inside the Kubernetes cluster. How do the frontend pods keep track of the backend pods? Also, many apps are made up of microservices, each deployed to its own pods. For such an app to work properly, the individual microservices in those pods need to connect to and communicate with each other. The next question is how these apps are exposed to end users who are trying to access them through the internet.

Kubernetes Services come into the picture to solve all of the above problems.

Kubernetes Service

A Service is a way of grouping pods that are running on a cluster. Services are cheap, and we can have as many of them as we need within our cluster. Services provide important features that are standardized across the cluster, such as load balancing, service discovery between apps, and support for zero-downtime app deployment.

One of the service types that solves the above problems is the “NodePort Service”.

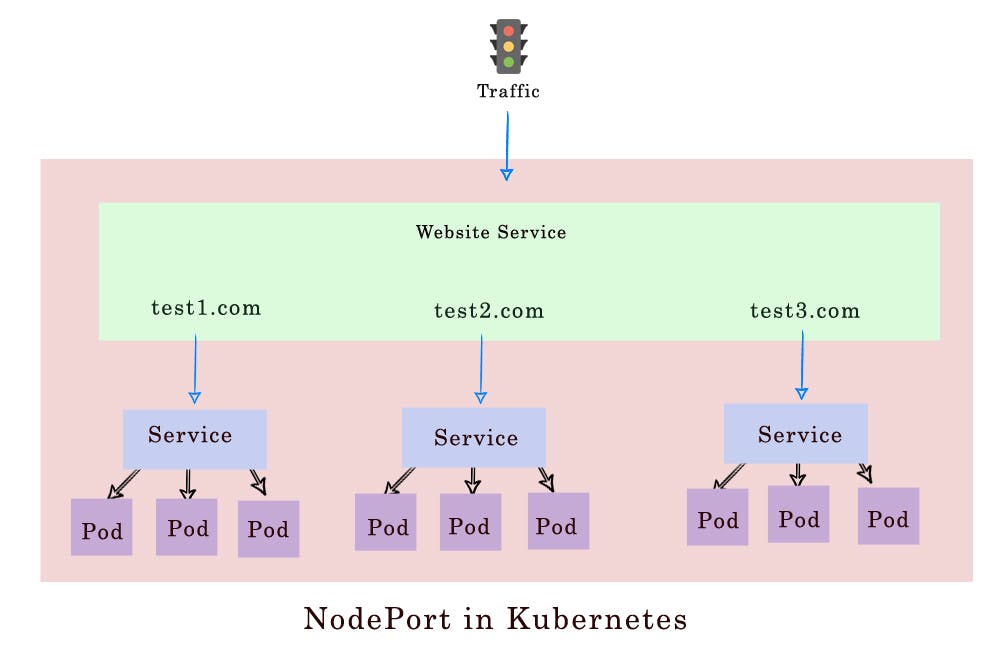

NodePort Service real-time example:

- We have a node in the above image, and on it a frontend web app pod is deployed with its own IP address. There are users who are trying to access this application over the internet. We have two challenges. First, as already discussed, we cannot rely on the pod IP because it changes when the pod is recreated. Second, there is no connectivity between the user and the pod. To address these two challenges, Kubernetes provides the “NodePort” Service.

- Using NodePort, we can expose the service on the node IP at a static port, which is called the node port. So we are talking about two things here: the node IP and the node port.

- As you can see above, there is currently a web app running on the frontend pod. To expose this app to the outside world on the internet, we need to create a service of type NodePort. Once the NodePort service is deployed, the application is exposed on the node IP, which here is 192.168.1.1, and on the node port that we specify in the manifest file, which here is 31000. There is a limitation here.

- If we define the node port manually inside a manifest file, it must be in the range 30000 to 32767, which is the default range. If we don’t specify it in the manifest file, Kubernetes dynamically assigns an unused node port within that range. (The node port is the port opened on each node through which the service is reached, and the node IP is the address used to access the app from the browser.)

- The second port you can see in the above image is “80”. This is the port on the service itself. The other port, the target port, is the port on the actual pod where the web app is running.

- Typically, the service port and the target port are the same. To remember these ports, look at them from the service side. Assume you are on the service: the port on the service is just referred to as the port, and requests are forwarded from it to the target port, which is on the pod.
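Because a manually chosen node port outside the 30000 to 32767 range is rejected, a quick sanity check before editing a manifest can save a round trip. The helper below is only an illustration; the function name is_valid_node_port is made up for this sketch, and it assumes the cluster uses the default range.

```shell
# Return success only if the argument is a whole number in the default NodePort range.
is_valid_node_port() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
  esac
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Check a few candidate values.
for p in 31000 31111 3111 80; do
  if is_valid_node_port "$p"; then
    echo "$p: inside the NodePort range"
  else
    echo "$p: outside the NodePort range"
  fi
done
```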

NodePort service example Demo

- Create 4 nodes in Google Cloud. (See our blog on how to install Kubernetes and create nodes on Google Cloud.)

- I will connect to these VMs using Cloud Shell. Check that the nodes are running successfully using the command kubectl get nodes. Then check that no pods, deployments, or services are running inside our Kubernetes cluster using kubectl get pods, kubectl get deploy, and kubectl get service, so that we start from a clean Kubernetes cluster setup.

- Create a NodePort working directory on the master node with the mkdir command, then go to that working directory. Here my working directory is “nodePort-svc”.

I have created two YAML manifest files inside the working directory.

nginx-deploy.yaml:

- This is a simple nginx deployment manifest file in which I deploy one replica of the nginx container, version 1.7.9. Please note that the pod label I have used here is “nginx-app”. We have to use this exact pod label inside the NodePort service manifest file.

This nginx-app pod runs on port 80.
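The deployment manifest is not reproduced in this text. The sketch below is one way to write it so that it matches the description: one replica, image nginx:1.7.9, container port 80, and the “nginx-app” label. The label key app, the Deployment name, the container name, and the use of the apps/v1 API version are assumptions.

```shell
# Write a minimal Deployment manifest to nginx-deploy.yaml (a reconstruction, not the original file).
cat > nginx-deploy.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment          # hypothetical name
  labels:
    app: nginx-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-app              # must match the pod template labels below
  template:
    metadata:
      labels:
        app: nginx-app            # label key "app" is assumed
    spec:
      containers:
        - name: nginx-container   # hypothetical name
          image: nginx:1.7.9
          ports:
            - containerPort: 80
EOF
```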

nginx-svc.yaml:

- The NodePort service file contains 4 top-level sections: “apiVersion”, “kind”, “metadata”, and “spec”. Service has been part of the API since the initial stable release, so the API version is “v1”.

- The kind of object I am creating here is “Service”. I have defined the name of the service as “my-service” under the metadata section. I have also provided labels for this service, but this is an optional field. For simplicity, I am using the same labels as the pod labels.

- The spec section is where all the core parts lie. It contains 3 important things: “selector”, “type”, and “ports”. The purpose of the selector is to define how this NodePort service selects the pods that it needs to manage. This is done using the pod labels, so I have included the exact pod label under the selector section.

- Type: This is the type of service we are going to create. Here the type of the service is “NodePort”.

- Ports: In this section, we define the ports. I have defined 3 ports here. The first one is “nodePort”. We can define the nodePort manually here, or else Kubernetes will assign one automatically while creating the NodePort service. Here I have set it to 31111. Kubernetes will expose the nginx application on this nodePort on the master node and on every worker node inside the Kubernetes cluster. So an external client can call the service by using the external IP of a node and the nodePort.

- Port: This is the port on the service itself. Here I have set the port to “80”.

- TargetPort: This is the port on the container where the application is running. Requests are forwarded to the selected pods on the port specified by targetPort, which is 80 in our manifest file. Generally, port and targetPort are the same number. If we don’t specify targetPort, it defaults to the value of the port field.
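Putting the fields described above together, a service manifest along these lines would match the description. The service name, type, and three port values come from the text; the label key app is an assumption and must match whatever key the deployment's pod template uses.

```shell
# Write a NodePort Service manifest to nginx-svc.yaml (a reconstruction, not the original file).
cat > nginx-svc.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: my-service
  labels:
    app: nginx-app       # optional; same as the pod label for simplicity
spec:
  type: NodePort
  selector:
    app: nginx-app       # selects the pods created by the deployment
  ports:
    - nodePort: 31111    # port opened on every node
      port: 80           # port on the service itself
      targetPort: 80     # port on the container
EOF
```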

Deployment

- Now we need to create the deployment using the deployment manifest file that I have already created inside the working directory. Issue the kubectl create command with nginx-deploy.yaml:

- The app is deployed successfully. Now I need to expose the application to the outside world, and I will do that with the help of the NodePort service. Create the NodePort service with the kubectl create command and our service file, i.e. “nginx-svc.yaml”:

- The service is now created successfully. Let’s display the objects we have created so far with the get command:

- As you can see, “my-service” of type NodePort was created successfully. You can also see that one pod was created by the deployment and that it is running successfully on worker node 1. Now let’s go into the pod and create a sample HTML webpage.
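The three steps in the list above can be sketched as follows, assuming both manifest files are in the current working directory:

```shell
# Create the deployment, then the NodePort service in front of it.
kubectl create -f nginx-deploy.yaml
kubectl create -f nginx-svc.yaml

# List the deployment, service, and pod in one call, including node and IP details.
kubectl get deploy,svc,pods -o wide
```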

Let’s get inside the pod with the below command:
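Pods created by a deployment get generated names, so one option is to look the name up by label first. This is a sketch; the label selector app=nginx-app and the /bin/sh shell are assumptions.

```shell
# Look up the name of the first pod carrying the assumed app=nginx-app label.
POD_NAME="$(kubectl get pods -l app=nginx-app -o jsonpath='{.items[0].metadata.name}')"

# Open an interactive shell inside that pod.
kubectl exec -it "${POD_NAME}" -- /bin/sh
```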

- Now let’s create a sample HTML page inside the pod.

test.html:

- Now I will try to access this HTML page internally using the pod IP and port. Let’s find our pod IP by issuing the below command:

- Now that we know the pod IP, let’s call it using the curl command:

- We can access the page successfully. Next, I will try to access the same page using the internal cluster IP of the NodePort service. To find the cluster IP, we need to run the below command:

- Using this cluster IP, we can access the same HTML webpage with the curl command:

- The main part is that we will access this same webpage externally from the internet using the node IP and node port. We know that the node port we have already configured is “31111”. Now let’s get the external IP of a node in our cluster from the Google Cloud dashboard. Let’s take the IP of the master node, which here is 34.73.122.126.

- Now we will access our HTML webpage externally from the browser using this node IP (34.73.122.126) and node port (31111).

- As you can see, we are able to access it successfully. You can also use the IP of any worker node with the same node port, and this will work fine.
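The three access paths in the list above can be sketched with kubectl and curl as follows. The label selector app=nginx-app is an assumption; the service name, node IP, and node port are the values from the text. The first two curl calls only work from a node inside the cluster.

```shell
# 1. Pod IP and container port.
POD_IP="$(kubectl get pods -l app=nginx-app -o jsonpath='{.items[0].status.podIP}')"
curl "http://${POD_IP}:80/test.html"

# 2. Cluster IP of the service and the service port.
CLUSTER_IP="$(kubectl get svc my-service -o jsonpath='{.spec.clusterIP}')"
curl "http://${CLUSTER_IP}:80/test.html"

# 3. External node IP and node port, reachable from outside the cluster.
curl "http://34.73.122.126:31111/test.html"
```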

Conclusion

Here, I have explained the most important features of NodePort Services. I have also demonstrated running containers in pods and connecting to running containers in Kubernetes.
