We were running a large production service with about 10 million monthly active users, and Redis acted as the main storage for user state. Every record in Redis was a JSON-serialized Pydantic model. It looked clean and convenient – until it started to hurt.

As we grew, our cluster scaled to five Redis nodes, yet memory pressure only kept getting worse. JSON objects were inflating far beyond the size of the actual data, and we were literally paying for air – in cloud invoices, wasted RAM, and degraded performance.

At some point I calculated the ratio of real payload to total storage, and the result made it obvious that we couldn’t continue like this:

14,000 bytes per user in JSON → 2,000 bytes in a binary format

A 7× difference. Just because of the serialization format.

That’s when I built what eventually became PyByntic, a compact binary encoder/decoder for Pydantic models. Below is the story of how I got there, what didn’t work, and why the final approach made Redis (and our wallets) a lot happier.

Why JSON Became a Problem

JSON is great as a universal exchange format. But inside a low-level cache, it turns into a memory-hungry monster:

- it stores field names in full

- it encodes every value as text: numbers become runs of digits, timestamps become ISO 8601 strings

- it duplicates structure over and over

- it’s not optimized for binary data

- it inflates RAM usage to 3–10× the size of the real payload
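To make the field-name point concrete, here is a small sketch that uses only Python’s standard `json` module. It encodes a batch of identical records as compact JSON and counts how many bytes go to the quoted field names alone. The sample record and the `key_overhead` helper are illustrative, not taken from our production code.

```python
import json


def key_overhead(records: list[dict]) -> tuple[int, int]:
    """Return (total compact-JSON bytes, bytes spent on quoted field names)."""
    encoded = json.dumps(records, separators=(",", ":")).encode("utf-8")
    # Every object repeats its own copy of each key, quotes included.
    key_bytes = sum(len(json.dumps(key)) for record in records for key in record)
    return len(encoded), key_bytes


sample = {"user_id": 123, "username": "alice", "is_active": True}
total, keys = key_overhead([sample] * 1000)
print(f"{keys / total:.0%} of {total} bytes are field names")
```

For this three-field record, 30 of each object’s 51 bytes are field names, and that share does not shrink as the number of records grows, because every object carries its own copy of the keys.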

When you’re holding tens of millions of objects in Redis, this isn’t some academic inefficiency anymore – it’s a real bill and an extra server in the cluster. At scale, JSON stops being a harmless convenience and becomes a silent tax on memory.

What Alternatives Exist (and Why They Didn’t Work)

I went through the obvious candidates:

| Format | Why it failed in our case |
|---|---|
| Protobuf | Too much ceremony: separate schemas, code generation, extra tooling, and a lot of friction for simple models |
| MessagePack | More compact than JSON, but still not enough, and integrating it cleanly with Pydantic was far from seamless |
| BSON | Smaller than JSON, but the Pydantic integration story was still clumsy and not worth the hassle |

All of these formats are good in general. But for the specific scenario of “Pydantic + Redis as a state store” they felt like using a sledgehammer to crack a nut: heavy and noisy to integrate, while still not shrinking memory as much as we needed.

I needed a solution that would:

- drop into the existing codebase with just a couple of lines

- deliver a radical reduction in memory usage

- avoid any extra DSLs, schemas, or code generation

- work directly with Pydantic models without breaking the ecosystem

What I Built

So I ended up writing a minimalist binary format with a lightweight encoder/decoder on top of annotated Pydantic models. That’s how PyByntic was born.

Its API is intentionally designed so that you can drop it in with almost no friction. In most cases, you just replace these calls:

```python
model.serialize()        # replaces model.model_dump_json()
Model.deserialize(data)  # replaces Model.model_validate_json(data)
```

Example usage:

```python
from typing import Annotated

from pybyntic import AnnotatedBaseModel
from pybyntic.types import Bool, String, UInt32


class User(AnnotatedBaseModel):
    # Each field pairs a Python type with the binary type used on the wire.
    user_id: Annotated[int, UInt32]
    username: Annotated[str, String]
    is_active: Annotated[bool, Bool]


user = User(user_id=123, username="alice", is_active=True)

raw = user.serialize()        # compact bytes, ready to store in Redis
obj = User.deserialize(raw)   # back to a User instance
```
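Since the target here is Redis, a minimal sketch of where the two calls sit at the storage boundary may help. It assumes the redis-py client (`redis.Redis.set` / `get`) created without `decode_responses=True`, so values come back as raw bytes. The helpers `save_state` and `load_state` and the key `user:123` are hypothetical names for illustration.

```python
from typing import Callable, Optional, Protocol, TypeVar

T = TypeVar("T")


class KeyValueStore(Protocol):
    """The two redis-py client methods used here (redis.Redis.set / .get)."""

    def set(self, name: str, value: bytes) -> object: ...

    def get(self, name: str) -> Optional[bytes]: ...


class BinarySerializable(Protocol):
    """Anything with a serialize() -> bytes method, e.g. the User model above."""

    def serialize(self) -> bytes: ...


def save_state(store: KeyValueStore, key: str, model: BinarySerializable) -> None:
    # Before: store.set(key, model.model_dump_json())
    store.set(key, model.serialize())


def load_state(store: KeyValueStore, key: str, decode: Callable[[bytes], T]) -> Optional[T]:
    # Before: decode was Model.model_validate_json; now it is Model.deserialize.
    raw = store.get(key)
    return None if raw is None else decode(raw)


# Usage with redis-py (hypothetical key):
#   r = redis.Redis()
#   save_state(r, "user:123", user)
#   restored = load_state(r, "user:123", User.deserialize)
```

Passing the decoder as a plain callable keeps the helpers independent of any one model class, so switching a model from JSON to binary touches only the call site.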

Optionally, you can also provide a custom compression function:

```python
import zlib

# `user` and `User` come from the example above.
serialized = user.serialize(encoder=zlib.compress)
deserialized_user = User.deserialize(serialized, decoder=zlib.decompress)
```
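The `encoder`/`decoder` arguments appear to accept any `bytes -> bytes` callable, as `zlib.compress` and `zlib.decompress` suggest; treat that as an assumption rather than documented behavior. Here is a minimal sketch of a custom pair using a higher zlib level, plus a hypothetical `check_codec` helper that confirms the pair round-trips and shows whether compression actually pays off for a given payload:

```python
import zlib
from functools import partial
from typing import Callable

# Assumption: a codec is any callable that maps bytes to bytes.
Codec = Callable[[bytes], bytes]

# Trade some CPU for size: level 9 instead of zlib's default level.
encode: Codec = partial(zlib.compress, level=9)
decode: Codec = zlib.decompress


def check_codec(payload: bytes, enc: Codec, dec: Codec) -> tuple[bool, int, int]:
    """Return (round-trip ok, raw size, encoded size) for a sample payload."""
    packed = enc(payload)
    return dec(packed) == payload, len(payload), len(packed)


# Usage with the model from the example above:
#   raw = user.serialize(encoder=encode)
#   restored = User.deserialize(raw, decoder=decode)
```

zlib wraps every payload in a 2-byte header and a 4-byte Adler-32 checksum, so it tends to help only on larger records (such as users with hundreds of permissions); on a tiny record it makes the stored value bigger.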

Comparison

For a fair comparison, I generated 2 million user records based on our real production models. Each user object contained a mix of field types: UInt16, UInt32, Int32, Int64, Bool, Float32, String, and DateTime32. On top of that, every user had nested objects such as roles and permissions, and some users had hundreds of permissions. In other words, this was not a toy example but a realistic dataset with deeply nested structures and a wide range of field types.

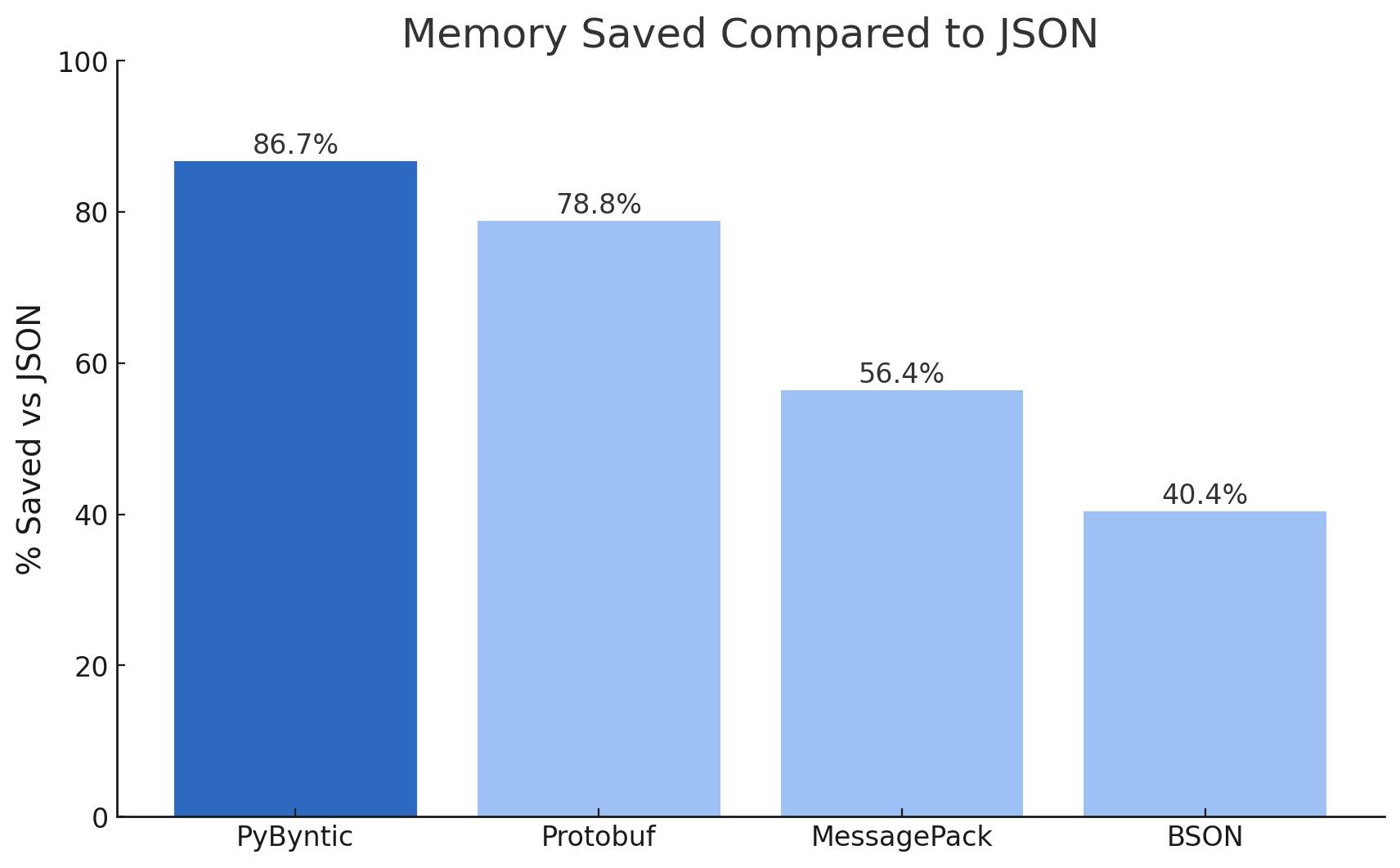

The chart shows how much memory Redis consumes when storing 2,000,000 user objects with each serialization format. JSON is the baseline at approximately 35.1 GB. PyByntic turned out to be the most compact option at just ~4.6 GB (13.3% of JSON), about 7.5× smaller. Protobuf and MessagePack also improve noticeably on JSON, but both still need substantially more memory than PyByntic.

Let's compare what this means for your cloud bill:

| Format | Monthly Redis cost on GCP |
|---|---|
| JSON | $876 |
| PyByntic | $118 |
| MessagePack | $380 |
| BSON | $522 |
| Protobuf | $187 |

This calculation is based on storing 2,000,000 user objects using Memorystore for Redis Cluster on Google Cloud Platform. The savings are significant – and they scale even further as your load grows.
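The table is easy to restate relative to the JSON baseline. This sketch only re-derives numbers already in the table; `relative_to_json` is an illustrative helper.

```python
# Monthly Memorystore for Redis Cluster prices from the table (2,000,000 users).
MONTHLY_COST_USD = {
    "JSON": 876,
    "BSON": 522,
    "MessagePack": 380,
    "Protobuf": 187,
    "PyByntic": 118,
}


def relative_to_json(costs: dict[str, int]) -> dict[str, tuple[float, int]]:
    """Map each format to (fraction of the JSON cost, monthly saving vs JSON)."""
    baseline = costs["JSON"]
    return {fmt: (cost / baseline, baseline - cost) for fmt, cost in costs.items()}


for fmt, (share, saving) in relative_to_json(MONTHLY_COST_USD).items():
    print(f"{fmt:<12} {share:6.1%} of JSON, saves ${saving}/month")
```

PyByntic comes out at about 13.5% of the JSON cost (a $758/month difference), close to the ~13.3% memory ratio from the chart; Protobuf lands at about 21%.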

Where Does the Space Savings Come From?

The huge memory savings come from two simple facts: binary data doesn’t need a text format, and it doesn’t repeat structure on every object. In JSON, a typical datetime is stored as a string like "1970-01-01T00:00:01.000000" – that’s 26 characters, and since each ASCII character is 1 byte = 8 bits, a single timestamp costs 208 bits. In binary, a DateTime32 takes just 32 bits, making it 6.5× smaller with zero formatting overhead.
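The datetime arithmetic can be checked with the standard library. This sketch assumes DateTime32 means an unsigned 32-bit count of whole seconds since the Unix epoch; that interpretation, the little-endian byte order, and the helper names are assumptions, not PyByntic’s documented format.

```python
import struct
from datetime import datetime, timezone

# Assumption: DateTime32 = unsigned 32-bit seconds since 1970-01-01 UTC.
# Little-endian here; PyByntic's actual byte order may differ.
_U32 = struct.Struct("<I")


def encode_datetime32(dt: datetime) -> bytes:
    """Pack an aware datetime into 4 bytes (sub-second precision is dropped)."""
    return _U32.pack(int(dt.timestamp()))


def decode_datetime32(raw: bytes) -> datetime:
    (seconds,) = _U32.unpack(raw)
    return datetime.fromtimestamp(seconds, tz=timezone.utc)


ts = datetime(1970, 1, 1, 0, 0, 1, tzinfo=timezone.utc)

# The text form from the paragraph above: 26 ASCII bytes (JSON adds 2 quotes).
as_text = ts.replace(tzinfo=None).isoformat(timespec="microseconds").encode("ascii")
as_binary = encode_datetime32(ts)

print(len(as_text), len(as_binary))
```

Under this assumption the field covers 1970 to early 2106 at one-second resolution, so whether the trade-off fits depends on the data being stored.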

The same applies to numbers. For example, 18446744073709551615 (2⁶⁴ − 1) takes 20 characters = 160 bits in JSON, while its binary representation is a fixed 64 bits. Finally, JSON repeats the field names in every single object, thousands or millions of times. A binary format doesn’t need that: the schema is known in advance, so there’s no structural tax on every record.
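The same kind of check works for the integer example and for the missing field names. The record layout below is hypothetical and only illustrates the principle of a schema known to both writer and reader; it is not PyByntic’s actual wire format.

```python
import json
import struct

# 2**64 - 1: 20 ASCII digits in JSON, a fixed 8 bytes as an unsigned 64-bit int.
MAX_U64 = 2**64 - 1
json_number = json.dumps(MAX_U64).encode("ascii")
binary_number = struct.pack("<Q", MAX_U64)

# Hypothetical fixed layout for the User model shown earlier. Both sides know the
# field order and types, so no field names are written:
#   user_id: uint32 | is_active: bool | username: uint16 length + UTF-8 bytes
USER_HEADER = struct.Struct("<I?H")  # 7 bytes, no padding with "<"


def pack_user(user_id: int, username: str, is_active: bool) -> bytes:
    name = username.encode("utf-8")
    return USER_HEADER.pack(user_id, is_active, len(name)) + name


def unpack_user(raw: bytes) -> tuple[int, str, bool]:
    user_id, is_active, name_len = USER_HEADER.unpack_from(raw)
    start = USER_HEADER.size
    return user_id, raw[start:start + name_len].decode("utf-8"), is_active


user_json = json.dumps(
    {"user_id": 123, "username": "alice", "is_active": True}, separators=(",", ":")
).encode("utf-8")
user_binary = pack_user(123, "alice", True)
```

For this three-field record, compact JSON takes 51 bytes while the packed layout takes 12 (a 7-byte fixed header plus the 5-byte name).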

Those three effects (no text encoding of values, no repeated field names, and no formatting overhead) are exactly where the size reduction comes from.

Conclusion

If you’re using Pydantic and storing state in Redis, then JSON is a luxury you pay a RAM tax for. A binary format that stays compatible with your existing models is simply a more rational choice.

For us, PyByntic became exactly that — a logical optimization that didn’t break anything, but eliminated an entire class of problems and unnecessary overhead.

GitHub repository: https://github.com/sijokun/PyByntic