In the fast-moving world of 2026, we are witnessing a strange technical tug of war. On one side, we have the massive AI labs trying to convince us that we need a brand new protocol just to let Large Language Models talk to our data and our tools. They call it MCP (Model Context Protocol). If you listen to the marketing, it is the "missing link" that will finally turn AI from a clever chatbot into a functional agent.

But if you actually spend your days inside a terminal, you have likely noticed something funny. While the industry is busy building new JSON layers and complex authorization schemes, the most effective developers are already solving the problem with a tool that has been around for fifty years.

I am talking about the Command Line Interface. The reality is that MCP is failing because it tries to replace a perfect abstraction with a clunky one.

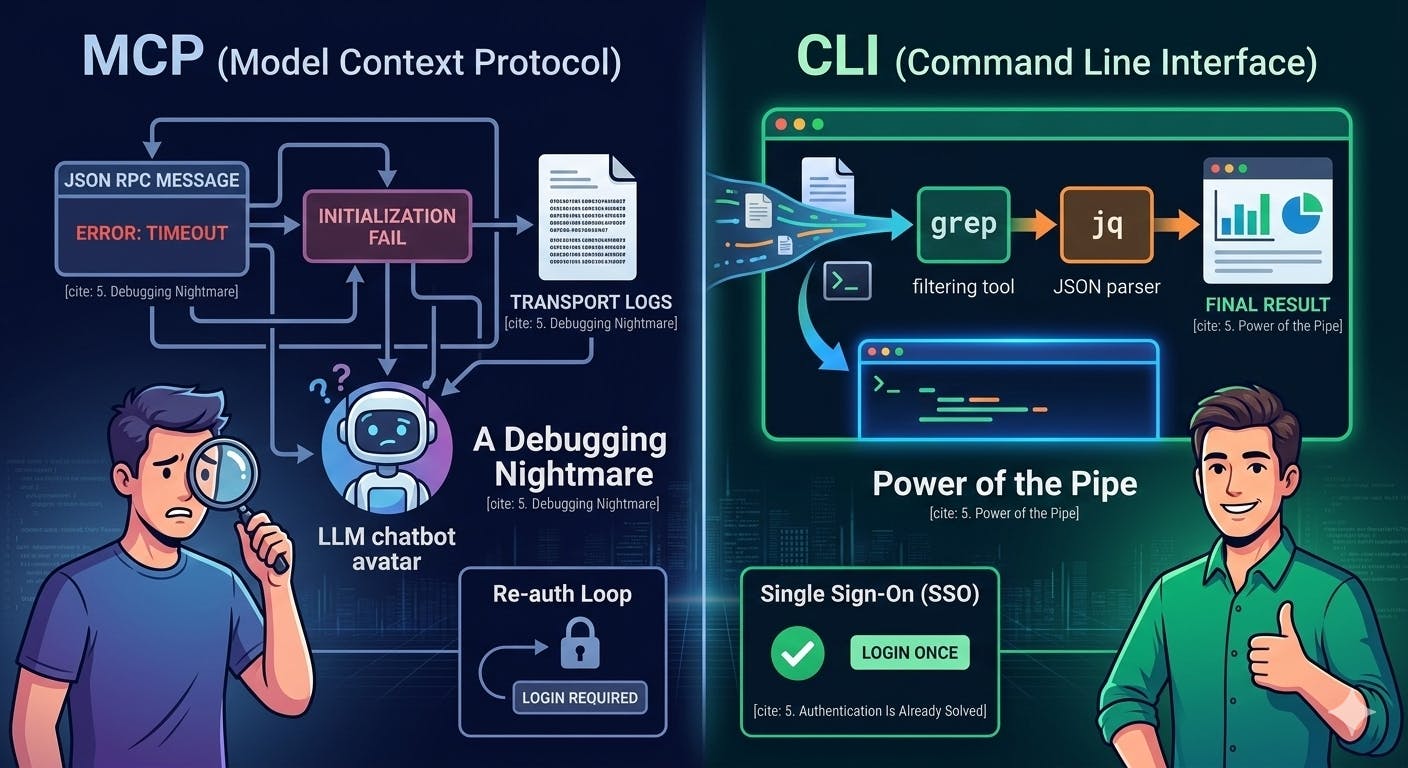

The Debugging Nightmare

One of the biggest issues with the rush toward MCP is the loss of transparency. In the classic Unix world, everything is text. If a tool does something weird, a human can run that exact same command in their own terminal and see exactly what happened. You have total visibility into the input and the output.

With MCP, that transparency vanishes into what I call the debugging nightmare. If an agent fails to fetch a file via an MCP server, you are suddenly digging through transport logs and decoding JSON-RPC messages. You are trying to figure out whether the server crashed, the initialization failed, or the protocol itself just choked.
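To make that concrete, here is a minimal Python sketch of the kind of helper you end up writing just to read those logs. It assumes the server logs one JSON-RPC 2.0 message per line. The function name `describe_rpc_message` and the sample line in the note below are made up for illustration; only the five error codes come from the JSON-RPC 2.0 specification.

```python
import json

# Standard error codes defined by the JSON-RPC 2.0 specification.
STANDARD_CODES = {
    -32700: "parse error",
    -32600: "invalid request",
    -32601: "method not found",
    -32602: "invalid params",
    -32603: "internal error",
}


def describe_rpc_message(raw_line):
    """Turn one logged JSON-RPC line into a short human-readable summary.

    Hypothetical helper: assumes one complete JSON message per log line.
    """
    try:
        msg = json.loads(raw_line)
    except json.JSONDecodeError:
        return "not valid JSON (transport noise or a truncated log line?)"
    if not isinstance(msg, dict):
        return "valid JSON, but not a single JSON-RPC message object"

    # Error responses carry an "error" object with a code and a message.
    err = msg.get("error")
    if isinstance(err, dict):
        code = err.get("code")
        label = STANDARD_CODES.get(code, "server-defined error")
        return f"request {msg.get('id')} failed: {label} ({code}): {err.get('message')}"

    # Requests and notifications carry a "method" name.
    if "method" in msg:
        return f"call to {msg['method']} (id {msg.get('id')})"

    # Anything else with a result is a successful response.
    return f"request {msg.get('id')} succeeded"
```

Fed a line like `{"jsonrpc": "2.0", "id": 7, "error": {"code": -32601, "message": "Method not found"}}`, it returns `request 7 failed: method not found (-32601): Method not found`. That is the sentence a CLI would have printed in the first place.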

Debugging should not require a special decoder ring. When a well-behaved CLI tool fails, it prints a readable error message and exits with a nonzero status. That is a feature, not a bug. By hiding the tool behind a protocol, we create a black box that makes it harder for the human pilot to keep control of the agent passenger.

The Power of the Pipe

If you have ever spent a late night wrangling a massive dataset, you know the magic of the Unix pipe. The ability to send data from one tool to another is the secret sauce of productivity.

Consider a complex task like analyzing a cloud infrastructure plan. In a CLI world, an agent can generate the plan, pipe it through jq to filter for specific changes, and grep the result for errors, all in a single line of bash. This is the power of the pipe.
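Here is a rough Python sketch of how an agent harness might drive that kind of pipeline. The helper `run_pipeline` is hypothetical, and it runs the stages one after another instead of streaming them concurrently the way a real shell pipe does, which keeps the example short.

```python
import subprocess


def run_pipeline(stages, input_text=""):
    """Chain commands like a shell pipe: each stage's stdout feeds the next stdin.

    `stages` is a list of argv lists, e.g. [["grep", "error"], ["sort"]].
    Simplification: stages run sequentially, not concurrently.
    """
    data = input_text.encode()
    for argv in stages:
        # check=False because filters such as grep exit nonzero on "no match",
        # which is a normal outcome in a pipeline rather than a crash.
        result = subprocess.run(argv, input=data, stdout=subprocess.PIPE, check=False)
        data = result.stdout
    return data.decode()
```

Something like `run_pipeline([["jq", "."], ["grep", "-i", "error"]], plan_json)` would mirror the jq-then-grep flow above, assuming both tools are installed and `plan_json` holds the plan output as a string. Only the filtered lines ever need to reach the model.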

In the MCP world, you have two bad choices:

- You dump the entire massive file into the context window and hope the model does not hallucinate.

- You build custom filtering logic directly into every single MCP server you write.

Why do more work to get a worse result? The CLI gives agents access to decades of optimized, composable tools that just work. LLMs are already very good at using these tools because man pages and shell scripts are all over their training data.

The Problem with Reinventing Auth

The creators of MCP spent a lot of time thinking about how to handle permissions. It is a noble goal, but it ignores the fact that we already have battle-tested authentication flows that work perfectly.

Tools like the AWS CLI or kubectl already handle complex SSO logins and credential rotation. When I am at my keyboard, I run a login command once and I am good for the day. My agent can inherit those same permissions without needing a separate, redundant authentication layer built into a new protocol.
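The inheritance part needs no extra machinery. As a small sketch, assuming the agent launches commands as child processes of my logged-in shell under my user account (a sandboxed or remote agent may not), Python's `subprocess.run` hands the parent environment to the child by default. The helper name `run_with_inherited_credentials` is mine, not part of any tool.

```python
import subprocess


def run_with_inherited_credentials(argv):
    """Run a CLI command as a child process that reuses the caller's session.

    Hypothetical helper for illustration. Leaving env=None (the default)
    gives the child a copy of this process's environment, so variables
    such as AWS_PROFILE or KUBECONFIG carry over. Because the child runs
    as the same user, it can also read the same cached credential files.
    """
    result = subprocess.run(argv, capture_output=True, text=True, env=None)
    return result.returncode, result.stdout, result.stderr
```

If I have already logged in for the day, a call like `run_with_inherited_credentials(["kubectl", "get", "pods"])` should behave exactly as it would if I typed it myself, and if the session has expired, the error comes back on stderr in the same words I would see at my own prompt.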

Using multiple MCP tools today feels like an endless loop of re-authentication. Using a CLI feels like a single door that stays open while you work. When auth breaks in a CLI, I fix it the same way I always have. I do not need to learn a whole new troubleshooting manual just for my AI tools.

Building for Humans and Agents

The best tools are the ones that work for both people and machines. When a company builds an MCP server instead of a good CLI, they are building a wall. They are creating a tool that only the AI can use easily, leaving the human developer in the dark.

But when you build a rock-solid CLI, you win twice. You give your human power users a tool they love, and you give the AI agents a standard interface they already know how to drive.

The terminal has been the heart of development for half a century for a reason. It is time we stop trying to replace it with shiny new protocols that add more friction than they remove. The agents are already at home in the terminal. We should be too.