Hello everyone, my name is Dmitrii Ivashchenko and I'm a software engineer at MY.GAMES. In this article, we'll discuss the differences between garbage collection in Unity and .NET.

One of the main advantages of the C# programming language is automatic memory management. It eliminates the need for developers to manually free memory from unused objects and significantly reduces development time. However, this can also lead to a large number of problems related to memory leaks and unexpected application crashes.

Aside from general recommendations for avoiding garbage collection issues in C#, it's important to highlight the specifics of memory management implementation in Unity, which result in additional constraints.

First, let's examine how memory management works in .NET.

Memory management in .NET

The Common Language Runtime (CLR) is the runtime environment for managed code in .NET. Any high-level .NET code written in languages like C#, F#, Visual Basic, and so on, is compiled into Intermediate Language (IL) code, which is then executed by the CLR. In addition to executing IL code, the CLR also provides several other necessary services, such as type safety, security boundaries, and automatic memory management.

Managed heap

When a new CLR process is initialized, it reserves a contiguous region of address space for that process; this space is referred to as the managed heap. The managed heap stores a pointer to the address where the next object will be allocated in the heap. Initially, this pointer is set to the base address of the managed heap. All instances of reference types are placed in the managed heap. Value types end up there too when they are boxed or when they are stored as fields of a reference type or as array elements.

When an application creates the first reference type, memory is allocated for the type at the base address of the managed heap. When creating the next object, the garbage collector allocates memory for it in the address space immediately following the first object. As long as address space is available, the garbage collector continues to allocate space for new objects in this way.

Allocating memory from the managed heap is faster than allocating unmanaged memory. For example, a general-purpose native allocator, such as the one behind `malloc` or `new` in C++, typically has to search for a suitable free block and may have to request more memory from the operating system. When using the CLR, the address space is already reserved from the OS when the application is launched.

Since the runtime allocates memory for an object by adding a value to the pointer, it happens almost as quickly as allocating memory from the stack. Additionally, because new objects that are allocated sequentially are also stored sequentially in the managed heap, an application can access objects very quickly.
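To make the "adding a value to the pointer" idea concrete, here is a minimal sketch of pointer-bump allocation. The `BumpAllocator` class is hypothetical and only models offsets inside a reserved region; it is not the CLR's actual allocator.

```csharp
using System;

// Toy model of pointer-bump allocation; not the CLR's actual allocator.
public sealed class BumpAllocator
{
    private readonly int _capacity;   // size of the reserved region, in bytes
    private int _next;                // offset where the next object will go

    public BumpAllocator(int capacity)
    {
        _capacity = capacity;
        _next = 0;                    // starts at the base of the region
    }

    // Returns the offset of the new object, or -1 if the region is full
    // (the point at which a real runtime would trigger a collection).
    public int Allocate(int size)
    {
        if (size <= 0) throw new ArgumentOutOfRangeException(nameof(size));
        if (size > _capacity - _next) return -1;

        int address = _next;
        _next += size;                // the whole allocation is one addition
        return address;
    }
}
```

With `new BumpAllocator(64)`, calling `Allocate(16)` and then `Allocate(24)` returns offsets 0 and 16, so the objects sit next to each other; a following `Allocate(32)` returns -1 because only 24 bytes remain.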

CLR GC algorithm

The garbage collection (GC) algorithm in CLR is based on several considerations:

- New objects have a shorter life span, while older objects have a longer one.

- It's faster to compact the memory for a small portion of the managed heap than for the entire managed heap at once.

- New objects are usually related to each other and are available to the application at approximately the same time.

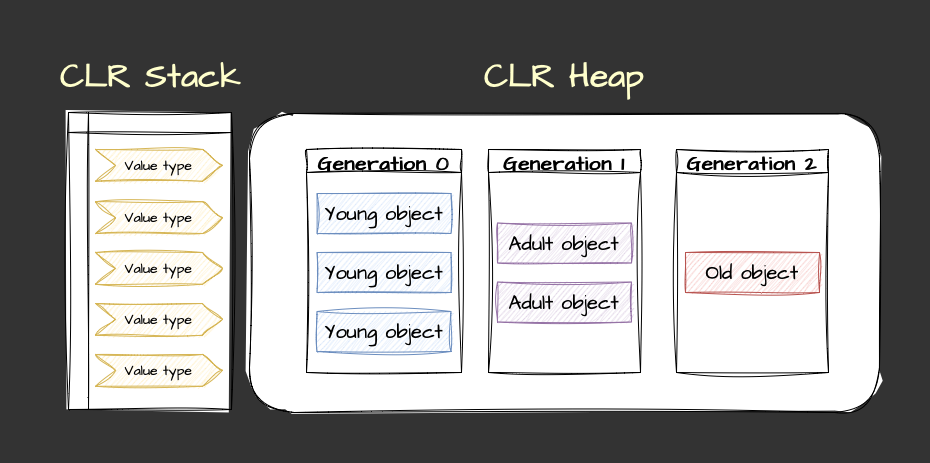

Based on these time-tested assumptions, the CLR garbage collector algorithm is built as follows. There are three generations of objects:

- Generation 0: all new objects go into this generation.

- Generation 1: objects from generation 0 that survive one garbage collection are moved into this generation.

- Generation 2: objects from generation 1 that survive a second garbage collection are moved into this generation.

Each generation has its own address space in memory and is processed independently of the others. When an application is launched, the garbage collector places all created objects in the generation 0 space. Once there is no longer enough space for another object, a garbage collection is triggered for generation 0.

So, how can we determine the objects no longer in use? To do this, the garbage collector uses the list of roots of the application. This list usually includes:

- Static fields of classes.

- Local variables and method parameters.

- References to objects stored in the thread stack.

- References to objects stored in processor registers.

- Objects awaiting finalization.

- Objects associated with event handlers may also be included in the list of roots.

The garbage collector analyzes the list of roots and builds a graph of accessible objects starting from the root objects. Objects that cannot be reached from the root objects are considered dead, and the garbage collector frees up the memory occupied by these objects.
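The reachability analysis described above can be sketched as a simple graph traversal. The `Node` and `Reachability` types below are hypothetical stand-ins for managed objects and the collector's marking step; the real collector works on raw memory and type metadata, not on a list of references like this.

```csharp
using System.Collections.Generic;

// Hypothetical stand-in for a managed object: a name plus outgoing references.
public sealed class Node
{
    public readonly string Name;
    public readonly List<Node> References = new List<Node>();
    public Node(string name) { Name = name; }
}

public static class Reachability
{
    // Returns every object reachable from the roots; anything else is "dead".
    public static HashSet<Node> Mark(IEnumerable<Node> roots)
    {
        var live = new HashSet<Node>();
        var pending = new Stack<Node>(roots);

        while (pending.Count > 0)
        {
            Node current = pending.Pop();
            if (!live.Add(current)) continue;   // already visited

            foreach (Node child in current.References)
                pending.Push(child);
        }
        return live;
    }
}
```

Note that two objects referencing each other in a cycle are still dead if no root leads to them, which is why a tracing collector handles cyclic references without special treatment.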

After dealing with any dead objects from generation 0, the garbage collector moves the remaining live objects to the address space of generation 1. Once they become part of generation 1, objects are considered less frequently by the garbage collector as candidates for removal.

Over time, if cleaning up generation 0 does not provide enough space for creating new objects, garbage collection for generation 1 is performed. The graph is constructed again, dead objects are deleted again, and the surviving objects from generation 1 are moved to generation 2.
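If you want to watch promotion happen, `GC.GetGeneration` reports the generation an object currently belongs to. The sketch below forces collections with `GC.Collect`, which is only for demonstration; the `GenerationDemo` class is a hypothetical helper, and the exact numbers depend on the runtime and its GC settings.

```csharp
using System;

public static class GenerationDemo
{
    // Returns the generation of one object before and after two collections.
    public static int[] Observe()
    {
        object survivor = new object();
        int atBirth = GC.GetGeneration(survivor);

        GC.Collect();   // survivor is still referenced, so it is kept
        int afterFirst = GC.GetGeneration(survivor);

        GC.Collect();
        int afterSecond = GC.GetGeneration(survivor);

        GC.KeepAlive(survivor);  // keep the reference live until this point
        return new[] { atBirth, afterFirst, afterSecond };
    }
}
```

On the desktop .NET runtime the three values typically climb from generation 0 towards generation 2. On a runtime without generations, the reported value is not expected to change.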

If constant memory leaks are occurring, the garbage collector will consume the available address space of all three generations and decide to request additional space from the operating system. If memory leaks persist, the OS will continue to allocate additional memory to the process up to a certain limit, but when its available memory runs out, it will be forced to stop the process.

Some details have intentionally been omitted in this text for ease of understanding, but nonetheless, this allows us to see the complexity and thoughtfulness of memory management organization in .NET. Now, equipped with this knowledge, let's take a look at how this is organized in Unity.

Memory management in Unity

Since the Unity game engine is written in C++, it obviously uses some amount of memory for its runtime which is not accessible to users (this is called native memory). It's also important to highlight a special type of memory called C# unmanaged memory, which is used when you use Unity Collections structures like NativeArray<T> or NativeList<T>. All other used memory space is managed memory and uses a garbage collector to allocate and free memory.

Due to the absence of CLR, memory management in Unity applications is handled by the scripting runtime (Mono or IL2CPP). However, it should be noted that these environments are not as efficient at memory management as .NET. One of the most frustrating consequences of this is fragmentation.

Memory fragmentation

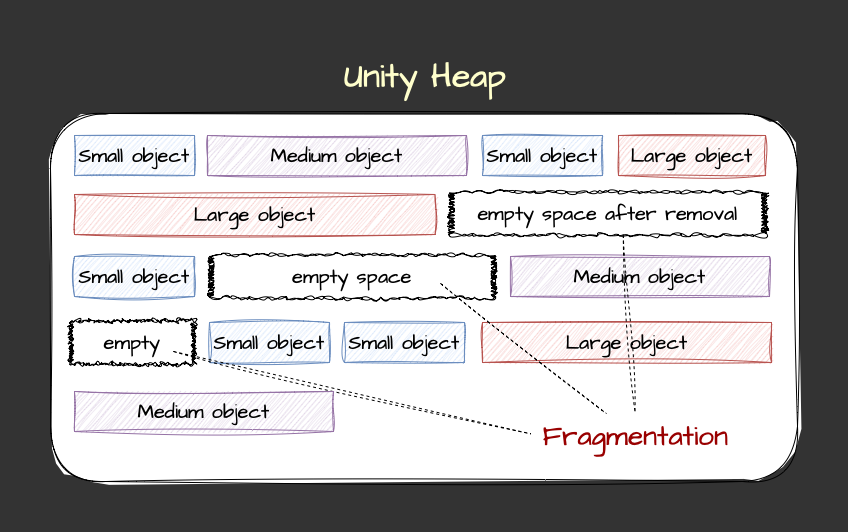

Memory fragmentation in Unity is the process where the available memory space is divided into scattered blocks. Fragmentation occurs when an application constantly allocates and deallocates memory, leading to the division of free space in memory into many non-contiguous blocks of different sizes. Memory fragmentation can be of two types:

- External fragmentation: this occurs when the free space in memory is divided into multiple non-contiguous blocks of different sizes distributed throughout the memory. As a result, there can be enough overall free memory available to accommodate a new object, but there might not be a suitable contiguous block of free space to accommodate it.

- Internal fragmentation: this occurs when allocated memory blocks have more space than required to store an object. This leaves unused memory space inside the allocated blocks, which can also lead to inefficient resource utilization.

Memory fragmentation in Unity is particularly relevant due to the use of a conservative garbage collector that does not support memory compaction. Without compaction, freed memory blocks remain scattered, which can cause performance issues, especially in the long term.
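External fragmentation is easy to reproduce in a toy model. The hypothetical `ToyHeap` below is a first-fit allocator that never moves live blocks (no compaction); it is a simplified illustration, not how Mono or IL2CPP lay out memory.

```csharp
using System.Collections.Generic;

// Toy non-compacting heap: an ordered list of blocks that are used or free.
public sealed class ToyHeap
{
    private sealed class Block
    {
        public int Start, Size;
        public bool Used;
    }

    private readonly List<Block> _blocks = new List<Block>();

    public ToyHeap(int capacity)
    {
        _blocks.Add(new Block { Start = 0, Size = capacity });
    }

    // First-fit allocation; returns the start offset, or -1 if no single
    // free block is large enough.
    public int Allocate(int size)
    {
        for (int i = 0; i < _blocks.Count; i++)
        {
            Block b = _blocks[i];
            if (b.Used || b.Size < size) continue;

            if (b.Size > size)  // split off the unused tail as a new free block
                _blocks.Insert(i + 1, new Block { Start = b.Start + size, Size = b.Size - size });

            b.Size = size;
            b.Used = true;
            return b.Start;
        }
        return -1;
    }

    // Frees a block and merges it with free neighbours. Live blocks are
    // never moved, so gaps between them stay where they are.
    public void Free(int start)
    {
        int i = _blocks.FindIndex(b => b.Used && b.Start == start);
        if (i < 0) return;
        _blocks[i].Used = false;

        if (i + 1 < _blocks.Count && !_blocks[i + 1].Used)
        {
            _blocks[i].Size += _blocks[i + 1].Size;
            _blocks.RemoveAt(i + 1);
        }
        if (i > 0 && !_blocks[i - 1].Used)
        {
            _blocks[i - 1].Size += _blocks[i].Size;
            _blocks.RemoveAt(i);
        }
    }

    public int TotalFree()
    {
        int total = 0;
        foreach (Block b in _blocks)
            if (!b.Used) total += b.Size;
        return total;
    }
}
```

Fill a 64-byte heap with four 16-byte objects, then free the first and the third. `TotalFree()` reports 32 bytes, yet `Allocate(32)` returns -1, because the free space consists of two separate 16-byte holes. A compacting collector would slide the live objects together and turn those holes into one usable block.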

The Boehm-Demers-Weiser garbage collector

Unity uses the conservative Boehm-Demers-Weiser (BDW) garbage collector, which stops the execution of your program and resumes normal execution only after completing its work. BDW's work algorithm can be described as follows:

- Stop the world: the garbage collector pauses program execution for garbage collection.

- Root scanning: it scans the root pointers, determining all live objects directly accessible from the program code.

- Object tracing: it follows references from root objects to determine all reachable objects, creating a graph of accessible objects.

- Marking: it marks every object reached during tracing as live. BDW is a mark-and-sweep collector, so it does not count references.

- Memory reclamation: the garbage collector frees memory occupied by objects that were not marked (dead objects).

- World resumption: after garbage collection is complete, program execution continues.

This is a conservative garbage collector, which means it does not require precise information about the location of all object pointers in memory. Instead, it assumes that any value in memory that can be a pointer to an object is a valid pointer. This allows the BDW garbage collector to work with programming languages that do not provide precise pointer information.
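The conservative assumption can be sketched in a few lines. In this hypothetical model, the stack is a sequence of raw words and the heap is a set of object start addresses; the `ConservativeScan` helper is an illustration only, and a real conservative collector also has to deal with details such as pointers into the middle of an object.

```csharp
using System.Collections.Generic;

public static class ConservativeScan
{
    // Treats every word that happens to equal the address of an allocated
    // object as a pointer to it, because type information is unavailable.
    public static HashSet<long> FindRoots(IEnumerable<long> stackWords,
                                          ISet<long> allocatedAddresses)
    {
        var roots = new HashSet<long>();
        foreach (long word in stackWords)
            if (allocatedAddresses.Contains(word))
                roots.Add(word);
        return roots;
    }
}
```

Suppose objects live at 0x1000, 0x2000, and 0x3000, and the stack holds a real pointer 0x1000, the number 42, and an unrelated integer whose value happens to be 0x3000. The scan reports both 0x1000 and 0x3000 as roots, so the object at 0x3000 is kept alive even though nothing actually references it. This false retention is the price of not needing precise pointer information.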

Incremental garbage collection

Starting with Unity 2019.1, the BDW is used in incremental mode by default. This means that the garbage collector distributes its workload across multiple frames instead of stopping the main CPU thread (stop-the-world garbage collection) to process all objects in the managed heap.

Thus, instead of one long interruption, Unity makes several shorter pauses while your application runs, and the garbage collector processes part of the managed heap during each of them. Incremental mode does not speed up garbage collection as a whole, but since it distributes the workload across multiple frames, performance spikes related to GC are reduced.

Incremental garbage collection can be problematic for your application. In this mode, the garbage collector divides its work, including the marking phase. The marking phase is the scanning of all managed objects by the garbage collector to determine which objects are still in use and which can be cleared.

Dividing the marking phase works well when most references between objects do not change between work fragments. However, too many changes can overload the incremental garbage collector and create a situation where the marking phase will never complete. In this case, the garbage collector will switch to performing a full, non-incremental collection. To inform the garbage collector of each reference change, Unity uses write barriers; this adds some overhead when changing references, which affects the performance of managed code.
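To see why reference changes matter, here is a minimal sketch of incremental marking with a write barrier. The `Obj` and `IncrementalMarker` types are hypothetical and this is not Unity's implementation; it only shows the general idea that a reference stored after its owner was scanned must be reported to the collector.

```csharp
using System.Collections.Generic;

public sealed class Obj
{
    public readonly List<Obj> Fields = new List<Obj>();
    public bool Marked;
}

// Toy incremental marker; not Unity's implementation.
public sealed class IncrementalMarker
{
    // Objects that were reached but whose fields were not scanned yet.
    private readonly Stack<Obj> _gray = new Stack<Obj>();

    public void Start(IEnumerable<Obj> roots)
    {
        foreach (Obj root in roots) Shade(root);
    }

    // Scans at most 'budget' objects; returns true when marking has finished.
    public bool Step(int budget)
    {
        while (budget-- > 0 && _gray.Count > 0)
        {
            Obj current = _gray.Pop();
            foreach (Obj child in current.Fields) Shade(child);
        }
        return _gray.Count == 0;
    }

    // Write barrier: every reference store goes through here, so an object
    // attached to an already-reached owner is still queued for marking.
    public void WriteReference(Obj owner, Obj target)
    {
        owner.Fields.Add(target);
        if (owner.Marked) Shade(target);
    }

    private void Shade(Obj obj)
    {
        if (obj.Marked) return;
        obj.Marked = true;
        _gray.Push(obj);
    }
}
```

Each call to `Step` models the slice of work done in one frame. Every `WriteReference` between slices can push more work onto the queue, which is how a program that rewires many references per frame can keep the marking phase from ever finishing. The barrier check itself is the overhead paid on each reference store.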

Let's compare:

| | Unity (Boehm-Demers-Weiser) | .NET GC |
|---|---|---|
| Algorithm | Conservative garbage collector | Generational garbage collector |
| Runtime Environment | Mono or IL2CPP | .NET Core, .NET Framework, .NET 5+ |
| Root Scanning | Less precise root scanning | Precise root scanning |
| Object Tracing | Yes | Yes |
| Reference Counting | No | No |
| Memory Compaction | No | Yes (except for large objects) |
| Generations | No | Yes (0, 1, and 2) |
| Speed | Slower due to overhead and lack of compaction | Faster due to generational approach and precise root scanning |
| Stop-the-world | Yes (pauses are spread across frames in incremental mode) | Yes (but with less impact on performance due to background garbage collection) |
| Fragmentation Handling | Prone to fragmentation (due to lack of object compaction) | Reduced fragmentation (due to object compaction) |

Thus, without the CLR GC, Unity relies on a mechanism that is prone to heap fragmentation and that performs slow, cumbersome collections over all objects at once, since they are not divided into generations. Let's consider the consequences that should be taken into account when developing games in Unity.

Avoiding Unity GC limitation pitfalls

Due to Unity Incremental GC limitations, the following pain points can be identified:

- GC calls are more expensive than in .NET (an entire object graph has to be processed, not just its subset).

- Frequent object creation and deletion leads to memory fragmentation; spaces between objects can only be filled with new objects of equal or smaller size.

- Frequent changes in object relationships make incremental GC mode difficult to work with (GC cycles take more frames and reduce FPS).

- Too frequent changes in object relationships (in each frame) result in GC switching to non-incremental mode; instead of spreading GC launches over multiple frames, we get one big world stop until garbage collection is complete.

To avoid these pain points, be particularly careful with unnecessary memory allocations in the heap, which can cause garbage collection spikes:

- Boxing: avoid passing value-type variables where a reference type (such as `object`) is expected. The value is then wrapped in a temporary heap object, which becomes garbage as soon as it is no longer needed. A simple example:

      int day = 15;
      int month = 10;
      int year = 2023;
      Debug.Log(string.Format("Date: {0}/{1}/{2}", day, month, year));

  It may seem that since `day`, `month`, and `year` are value types and defined inside the function, there will be no allocations or garbage. But if we look into the implementation of `string.Format()`, we will see that the arguments are of the `object` type, which means that boxing of value types will occur and they will be placed in the heap:

      /// Replaces the format items in a specified string with the string
      /// representation of three specified objects.
      public static string Format(string format, object arg0, object arg1, object arg2);

- Strings: in the C# language, strings are reference types, not value types. Try to minimize the creation and manipulation of strings. Use the `StringBuilder` class for working with strings during runtime.

- Coroutines: although the `yield` operator itself does not create garbage, creating a new `WaitForSeconds` object does:

      private IEnumerator BadExample()
      {
          while (true)
          {
              // Creating a new WaitForSeconds object here causes garbage generation.
              yield return new WaitForSeconds(1f);
          }
      }

      private IEnumerator GoodExample()
      {
          // Cache the WaitForSeconds object to avoid garbage generation.
          WaitForSeconds waitForOneSecond = new WaitForSeconds(1f);
          while (true)
          {
              // Reuse the cached WaitForSeconds object.
              yield return waitForOneSecond;
          }
      }

- Closures and anonymous methods: in general, try to avoid using closures in C# if possible. Minimize the use of anonymous methods and method references in performance-related code, especially if the code runs on every frame. Method references (delegates) in C# are reference types and they are located on the heap. This means that when you pass a method reference as an argument, temporary memory allocations may occur. This can happen regardless of whether an anonymous method is passed or a method is already defined. Moreover, when an anonymous method becomes a closure, more memory has to be allocated, because the captured variables are stored in an additional heap object.

- LINQ and regular expressions: both of these tools generate garbage behind the scenes, for example through hidden boxing and temporary objects. If performance is critical, avoid using LINQ and regular expressions.

- Unity functions: please note that some functions allocate memory on the heap. Cache references to arrays instead of allocating them inside a loop. Also, use functions that don't generate garbage. For example, prefer using `GameObject.CompareTag` instead of comparing strings with `GameObject.tag` (as returning a new string creates garbage). Use non-allocating alternatives to Unity API methods, such as `Physics.RaycastNonAlloc`.
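The point about closures can be checked without a profiler. In the sketch below, `DelegateAllocations` is a hypothetical helper: a lambda that captures a variable produces a new delegate (and closure object) on every call, while a delegate stored in a static field is created once and reused.

```csharp
using System;

public static class DelegateAllocations
{
    // Captures 'factor', so each call allocates a closure object and a delegate.
    public static Func<int, int> Capturing(int factor)
    {
        return x => x * factor;
    }

    // Created once and reused; calling Cached() allocates nothing.
    private static readonly Func<int, int> DoubleIt = x => x * 2;

    public static Func<int, int> Cached()
    {
        return DoubleIt;
    }
}
```

`object.ReferenceEquals(DelegateAllocations.Capturing(2), DelegateAllocations.Capturing(2))` is `false`, since two separate delegates were allocated, while the same comparison for `Cached()` is `true`. In per-frame code, the cached form is the one that keeps the heap quiet.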

If you know that at a certain point in the game the garbage collection process will not affect the gaming experience (for example, on a loading screen), you can initiate garbage collection by calling System.GC.Collect. However, the best practice to strive for is zero-allocation. This means that you reserve all the memory you need at the start of the game or when loading a specific game scene, and then reuse that memory throughout the entire game cycle. In combination with object pools for reuse and the use of structures instead of classes, these techniques solve most memory management issues in the game.
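As a rough illustration of the pooling idea, here is a minimal object pool in plain C#. `SimplePool<T>` is a hypothetical class written for this article, it is not thread-safe, and it leaves resetting an object's state to the caller.

```csharp
using System;
using System.Collections.Generic;

// Minimal pool sketch; not thread-safe.
public sealed class SimplePool<T> where T : class
{
    private readonly Stack<T> _items;
    private readonly Func<T> _factory;

    // Creates 'prewarm' instances up front (for example, on a loading screen).
    public SimplePool(Func<T> factory, int prewarm)
    {
        _factory = factory ?? throw new ArgumentNullException(nameof(factory));
        _items = new Stack<T>(prewarm);
        for (int i = 0; i < prewarm; i++) _items.Push(_factory());
    }

    public int Available => _items.Count;

    // Hands out a pooled instance; allocates only if the pool is empty.
    public T Rent() => _items.Count > 0 ? _items.Pop() : _factory();

    // Returns an instance to the pool. The caller resets its state first.
    public void Return(T item) => _items.Push(item);
}
```

A pool created with `new SimplePool<List<int>>(() => new List<int>(), 2)` allocates its two lists once. After that, a `Rent()` followed by `Return(item)` hands the same instance back on the next `Rent()`, so steady-state gameplay creates no new garbage as long as the pool is large enough.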
