TL;DR —

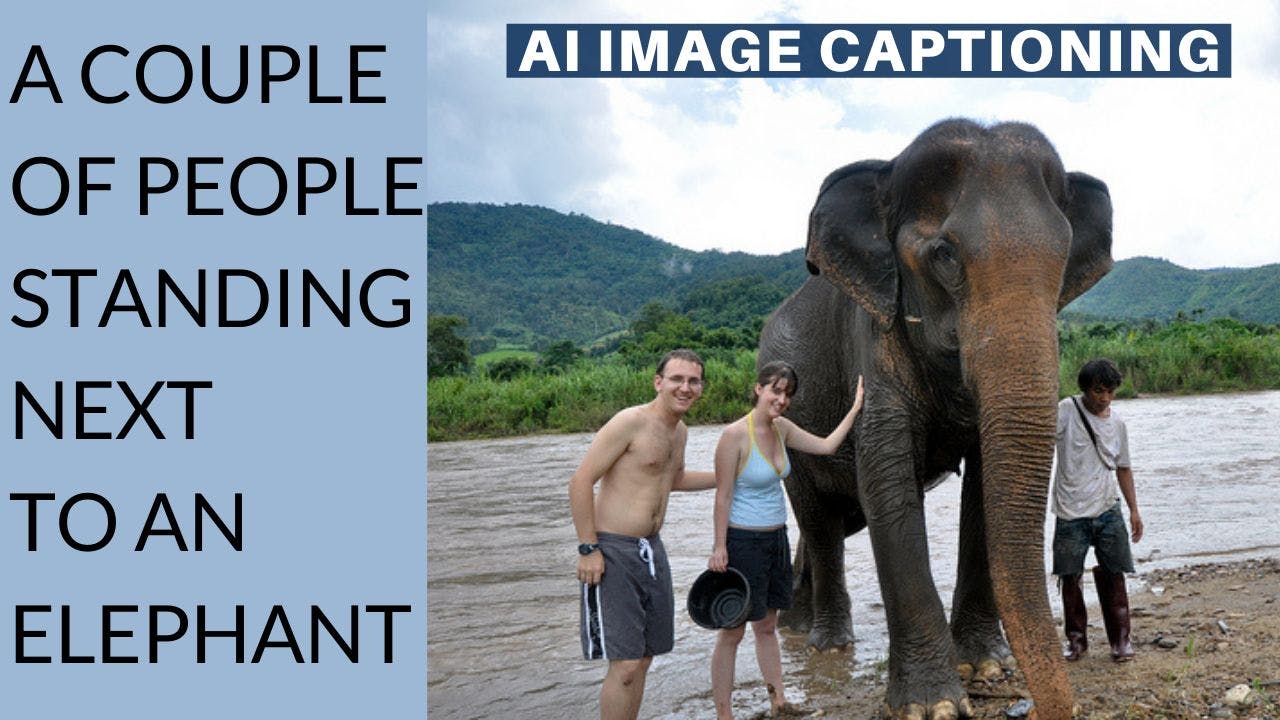

DALL-E beat all previous attempts to generate images from text input, using CLIP, a model that links images with text, as a guide. A very similar task called image captioning may sound really simple but is, in fact, just as complex: it is the ability of a machine to generate a natural description of an image. It's easy to simply tag the objects you see in the image, but it is quite another challenge to understand what's happening in a single two-dimensional picture, and this new model, ClipCap, does it extremely well!

We’ve seen AI generate images from other images using GANs. Then, there were models able to generate questionable images using text. In early 2021, DALL-E was published, beating all previous attempts to generate images from text input, using CLIP, a model that links images with text, as a guide. A very similar task called image captioning may sound really simple but is, in fact, just as complex: it is the ability of a machine to generate a natural description of an image.

It’s easy to simply tag the objects you see in the image, but it is quite another challenge to understand what’s happening in a single two-dimensional picture, and this new model does it extremely well!

Watch the video

References

►Read the full article: https://www.louisbouchard.ai/clipcap/

►Paper: Mokady, R., Hertz, A. and Bermano, A.H., 2021. ClipCap: CLIP Prefix for Image Captioning. https://arxiv.org/abs/2111.09734

►Code: https://github.com/rmokady/CLIP_prefix_caption

►Colab Demo: https://colab.research.google.com/drive/1tuoAC5F4sC7qid56Z0ap-stR3rwdk0ZV?usp=sharing

►My Newsletter (A new AI application explained weekly to your emails!): https://www.louisbouchard.ai/newsletter/

Video Transcript

00:00

We've seen AI generate images from other images using GANs. Then, there were models able to generate questionable images using text. In early 2021, DALL-E was published, beating all previous attempts to generate images from text input, using CLIP, a model that links images with text, as a guide. A very similar task called image captioning may sound really simple but is, in fact, just as complex. It's the ability of a machine to generate a natural description of an image.

00:25

Indeed, it's almost as difficult, as the machine needs to understand both the image and the text it generates, just like in text-to-image synthesis. It's easy to simply tag the objects you see in the image; this can be done using a regular classification model. But it's quite another challenge to understand what's happening in a single two-dimensional picture. Humans can do it quite easily since we can interpolate from our past experience, and we can even put ourselves in the place of the person in the picture and quickly get what's going on.

00:55

This is a whole other challenge for a machine that only sees pixels, yet these researchers published an amazing new model that does this extremely well.

01:01

In order to publish such a great paper about image captioning, the researchers needed to run many, many experiments. Plus, their code is fully available on GitHub, which means it is reproducible. These are two of the strong points of this episode's sponsor, Weights & Biases. If you want to publish papers in big conferences or journals and do not want to be part of the 75% of researchers who do not share their code, I'd strongly suggest using Weights & Biases. It changed my life as a researcher and my work in my company. Weights & Biases will automatically track each run: the hyperparameters, the GitHub version, hardware and OSes, the Python version, installed packages, and the training script. That's everything you need for your code to be reproducible, without you even trying. It just needs a line of code to tell it what to track, once, and that's it. Please don't be like most researchers who keep their code a secret, I assume mostly because it is hardly reproducible, and try out Weights & Biases with the first link below.

01:58

As the researchers explicitly said, image captioning is a fundamental task in vision-language understanding, and I entirely agree. The results are fantastic, but what's even cooler is how it works.

02:09

So let's dive into the model and its inner workings a little. Before doing so, let's quickly review what image captioning is. Image captioning is where an algorithm predicts a textual description of a scene inside an image.

02:22

Here, it will be done by a machine, and in this case, by a machine learning algorithm. This algorithm will only have access to the image as input and will need to output a textual description of what is happening in the image. In this case, the researchers used CLIP to achieve this task. If you are not familiar with how CLIP works or why it's so amazing, I'd strongly invite you to watch one of the many videos I made covering it.

02:47

In short, CLIP links images to text by encoding both types of data into one similar representation where they can be compared. This is just like comparing movies with books using a short summary of each piece. Given only such a summary, you can tell what it's about and compare both, but you have no idea whether it's a movie or a book. In this case, the movies are images and the books are text descriptions. CLIP creates its own summary to allow simple comparisons between both pieces, using a distance calculation between their encodings.
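
To make the "compare in a shared space" idea concrete, here is a minimal sketch in Python. It assumes the image and the candidate captions have already been encoded into vectors of the same size and scores them with cosine similarity, the measure CLIP uses to compare image and text embeddings. The 4-dimensional vectors and the `rank_captions` helper are hypothetical stand-ins, not real CLIP outputs or part of any CLIP library.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_captions(image_embedding, caption_embeddings):
    """Return caption texts sorted from most to least similar to the image."""
    scores = {text: cosine_similarity(image_embedding, emb)
              for text, emb in caption_embeddings.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical toy embeddings standing in for CLIP's image and text encoders
image_emb = [0.9, 0.1, 0.0, 0.2]
captions = {
    "a dog playing in the park": [0.8, 0.2, 0.1, 0.1],
    "a plate of pasta": [0.0, 0.1, 0.9, 0.3],
}
print(rank_captions(image_emb, captions))
# ['a dog playing in the park', 'a plate of pasta']
```

Because each dot product is divided by the vectors' lengths, only their direction matters, which is what lets an image "summary" and a text "summary" be compared directly.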

03:19

You can already see how CLIP seems perfect for this task, but it requires a bit more work to fit our needs. Here, CLIP will simply be used as a tool to compare text inputs with image inputs, so we still need to generate a text that could potentially describe the image.

03:34

Instead of comparing the text to images using CLIP's encoding, they will simply encode the image using CLIP's network and use the generated encoded information as a way to guide a future text generation process using another model. Such a task can be performed by any language model like GPT-3, which could improve their results, but the researchers opted for its predecessor, GPT-2, a smaller and more intuitive version of the powerful OpenAI model.

04:00

They are basically conditioning the text generation from GPT-2 using CLIP's encoding. So CLIP's model is already trained, and they also used a pre-trained version of GPT-2 that they will further train using CLIP's encoding as a guide to orient the text generation. It's not that simple, since they still need to adapt CLIP's encoding to a representation that GPT-2 can understand.
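
Adapting CLIP's encoding into something GPT-2 can read can be sketched as a small mapping network that turns one CLIP embedding into a few vectors shaped like word embeddings, which are then placed in front of the caption's own token embeddings. This is a toy, plain-Python sketch under stated assumptions: the two-layer tanh MLP, the tiny sizes (a 4-d CLIP vector, 3-d word embeddings, a prefix of 2 vectors) and all names are hypothetical; the weights are random rather than trained, and no real CLIP or GPT-2 model is involved. For scale, CLIP ViT-B/32 produces 512-d embeddings and GPT-2 small uses 768-d token embeddings.

```python
import math
import random


def linear(x, weights, bias):
    """Compute W @ x + b with W stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def init_layer(n_in, n_out, rng):
    """Random weights in [-0.5, 0.5] and zero biases (untrained, illustration only)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class MappingNetwork:
    """Hypothetical toy MLP: one CLIP embedding -> prefix_length word-sized vectors."""

    def __init__(self, clip_dim, word_dim, prefix_length, seed=0):
        rng = random.Random(seed)
        hidden = max(1, (word_dim * prefix_length) // 2)
        self.word_dim = word_dim
        self.prefix_length = prefix_length
        self.layer1 = init_layer(clip_dim, hidden, rng)
        self.layer2 = init_layer(hidden, word_dim * prefix_length, rng)

    def __call__(self, clip_embedding):
        hidden = [math.tanh(v) for v in linear(clip_embedding, *self.layer1)]
        flat = linear(hidden, *self.layer2)
        # Cut the flat output into prefix_length chunks of word_dim values each
        return [flat[i * self.word_dim:(i + 1) * self.word_dim]
                for i in range(self.prefix_length)]


def build_decoder_input(prefix, caption_token_embeddings):
    """Place the prefix vectors in front of the caption's token embeddings."""
    return prefix + caption_token_embeddings


mapper = MappingNetwork(clip_dim=4, word_dim=3, prefix_length=2)
clip_embedding = [0.9, 0.1, 0.0, 0.2]                    # hypothetical CLIP image embedding
caption_embeddings = [[0.1, 0.2, 0.3], [0.0, 0.5, 0.1]]  # hypothetical token embeddings
sequence = build_decoder_input(mapper(clip_embedding), caption_embeddings)
print(len(sequence), len(sequence[0]))  # 4 3
```

In a real setup, the mapping's weights would be learned during training so that the language model, reading the prefix first, goes on to produce a fitting caption; this sketch only shows the shapes involved.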

04:23

But it isn't that complicated either: it will simply learn to transfer CLIP's encoding into multiple vectors with the same dimensions as a typical word embedding. This step of learning how to match CLIP's outputs to GPT-2's inputs is the step that will be taught during training, as both GPT-2 and CLIP are already trained and are powerful models for their respective tasks. So you can see this as a third model, called a mapping network, with the sole responsibility of translating one's language into the other, which is still a challenging task. If you are curious about the actual architecture of such a mapping network, they tried both a simple multi-layer perceptron, or MLP, and a transformer architecture, confirming that the latter is more powerful at learning a meticulous set of embeddings that will be more appropriate for the task when using powerful pre-trained language models. If you are not familiar with transformers, you should take five minutes to watch the video I made covering them, as you will only stumble upon this type of network more often in the near future.

05:21

The model is very simple and extremely powerful. Just imagine having CLIP merged with GPT-3 in such a way. We could use such a model to describe movies automatically or create better applications for blind and visually impaired people. That's extremely exciting for real-world applications!

05:38

Of course, this was just an overview of this new model, and you can find more details about the implementation in the paper linked in the description below. I hope you enjoyed the video, and if so, please take a second to share it with a friend who could find this interesting. It will mean a lot and help this channel grow. Thank you for watching, and stay tuned for my next video, the last one of the year and quite an exciting one!

06:06

[Music]

Written by

@whatsai

I explain Artificial Intelligence terms and news to non-experts.

Topics and tags

artificial-intelligence|ai|sota|machine-learning|computer-vision|data-science|youtubers|youtube-transcripts|web-monetization

This story on HackerNoon has a decentralized backup on Sia.

Transaction ID: lcbyD-6DbB4ucb-F-VBM3lXUeD8xxCKusGub4-ik5vM