This is a simplified guide to an AI model called PaddleOCR-VL-1.5 maintained by PaddlePaddle.

Model overview

PaddleOCR-VL-1.5 is a compact vision-language model designed for document understanding. Built by PaddlePaddle, this 0.9B parameter model handles optical character recognition and document parsing across multiple languages. Compared with its predecessor, PaddleOCR-VL, version 1.5 improves robustness on real-world documents. The model combines vision and language understanding in a single, lightweight architecture suitable for deployment on resource-constrained devices.

Model inputs and outputs

The model accepts document images as visual input and processes them through a vision-language framework to extract and understand their text content. It returns structured text recognition results, including spatial information about where text appears in the document. The architecture trades off model size against performance, which makes it practical for production environments where computational resources are limited.

Inputs

- Document images in standard formats (JPEG, PNG) containing text or structured document layouts

- Images ranging from low to high resolution, which are scaled automatically

- Multi-language documents with text in various writing systems and scripts
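As a rough illustration of preparing inputs, the sketch below gathers files in the formats listed above from a folder before they are handed to the model. The function name `collect_document_images` and the extension set are assumptions made for this example; they are not part of PaddleOCR.

```python
from pathlib import Path

# Assumed extension set for the "standard formats" named above (JPEG, PNG).
SUPPORTED_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def collect_document_images(folder):
    """Return sorted paths of JPEG/PNG files found directly inside `folder`."""
    root = Path(folder)
    return sorted(
        path
        for path in root.iterdir()
        # Compare suffixes case-insensitively so "SCAN.JPG" is also accepted.
        if path.is_file() and path.suffix.lower() in SUPPORTED_EXTENSIONS
    )
```

The helper only looks at file extensions and does not open the images, so a corrupt or mislabeled file would still need to be caught when it is loaded.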

Outputs

- Extracted text with character-level accuracy and word boundaries

- Bounding box coordinates indicating text location within images

- Confidence scores for recognition results

- Layout understanding identifying document structure and text regions
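To make these outputs concrete, here is a minimal sketch of how downstream code might hold and post-process them. The `TextRegion` record, its field names, the box layout, and the 0.5 threshold are all hypothetical choices for this example; the model's actual output schema may differ.

```python
from dataclasses import dataclass


@dataclass
class TextRegion:
    """Hypothetical record for one recognized region (not the library's schema)."""
    text: str
    box: tuple         # assumed layout: (x_min, y_min, x_max, y_max) in pixels
    confidence: float  # assumed to lie in [0, 1]


def filter_and_order(regions, min_confidence=0.5):
    """Drop low-confidence regions, then sort top-to-bottom, left-to-right."""
    kept = [r for r in regions if r.confidence >= min_confidence]
    return sorted(kept, key=lambda r: (r.box[1], r.box[0]))


# Made-up sample data, for illustration only.
regions = [
    TextRegion("Total: 42.00", (40, 300, 220, 330), 0.97),
    TextRegion("INVOICE", (40, 20, 200, 60), 0.99),
    TextRegion("~~smudge~~", (300, 150, 360, 170), 0.21),
]
for region in filter_and_order(regions):
    print(region.text, region.box, region.confidence)
```

Sorting by the top-left corner is a naive reading order that works for single-column pages; multi-column layouts would need the layout information instead.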

Capabilities

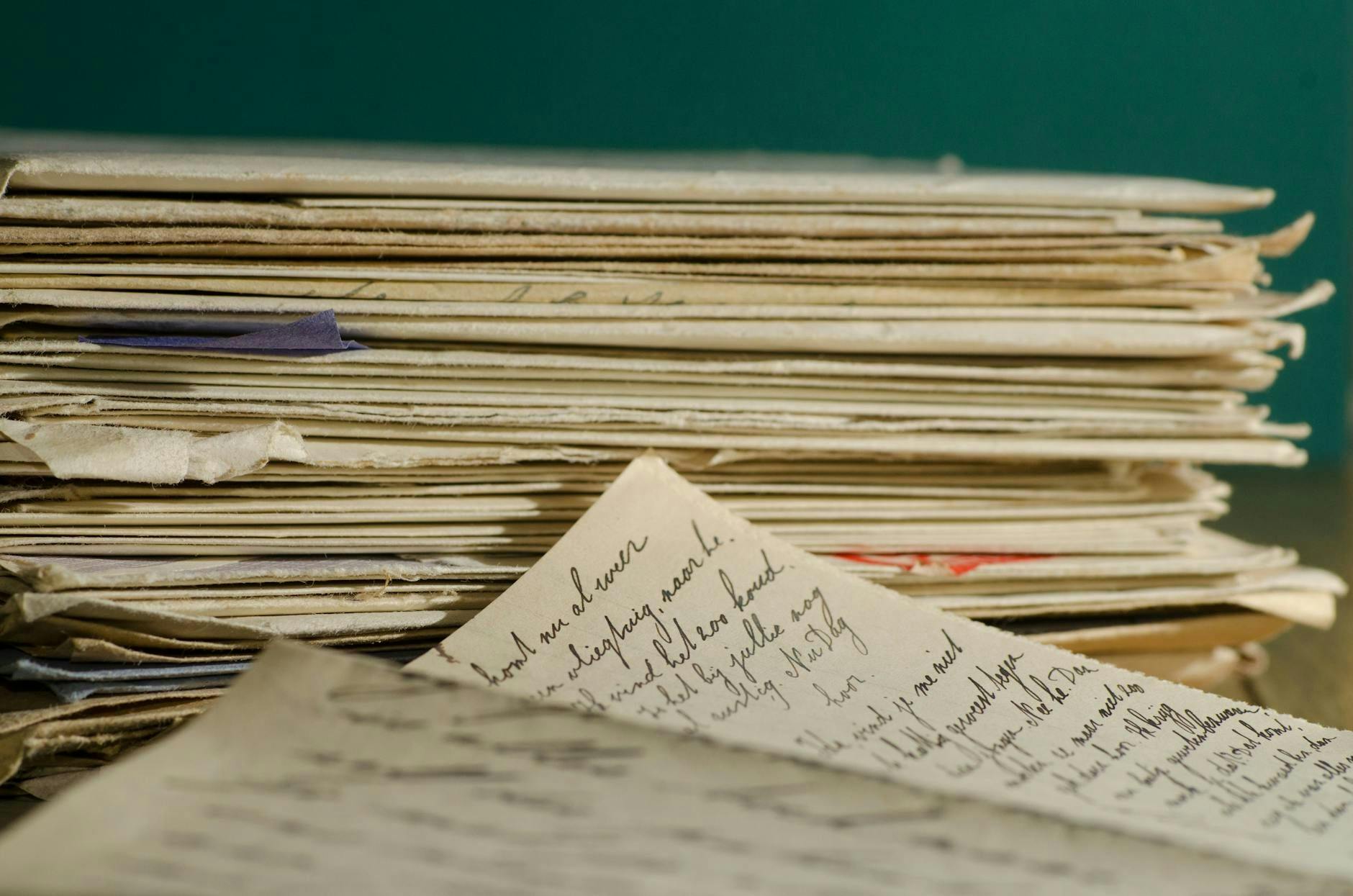

The model extracts text from documents photographed under varied lighting conditions, angles, and quality levels. It handles forms, invoices, receipts, and handwritten documents. Multi-language support lets it process documents that mix text in several languages on the same page. It recognizes both printed and stylized text, which makes it suitable for diverse real-world document types.

What can I use it for?

Organizations can deploy this model in document digitization pipelines to automate data extraction from paper records without manual transcription. Financial institutions can use it for invoice and receipt processing at scale. Educational platforms can use it to convert scanned textbooks and other teaching materials into searchable digital formats. E-commerce companies can apply it to order processing and shipping label reading. The lightweight design also suits mobile applications and edge devices where server-based processing is impractical.

Things to try

Experiment with severely degraded documents to find the limits of its robustness: old photocopies, faxes, or images with heavy shadows. Test documents that combine multiple languages to see how the model handles code-switching and mixed scripts. Try non-standard inputs such as menu boards, street signs, or product packaging to explore how well it generalizes. Process documents at various angles and rotations to understand how perspective changes affect accuracy. Run batch jobs on large document collections to evaluate throughput and resource consumption in your deployment environment.
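One way to put numbers on these experiments is to compare recognized text against a known reference and time each call. The sketch below computes a character error rate (CER) from Levenshtein edit distance and reports images per second. The `recognize` callable is a placeholder for whatever function wraps the model in your setup, and the sample data is invented for illustration.

```python
import time


def edit_distance(a, b):
    """Levenshtein distance between two strings (each edit costs 1)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete char_a
                current[j - 1] + 1,      # insert char_b
                previous[j - 1] + cost,  # substitute or match
            ))
        previous = current
    return previous[-1]


def character_error_rate(predicted, reference):
    """Edit distance divided by reference length; 0.0 is a perfect match."""
    if not reference:
        # Convention chosen for this sketch: any output on an empty page is an error.
        return 0.0 if not predicted else 1.0
    return edit_distance(predicted, reference) / len(reference)


def evaluate(recognize, samples):
    """Run `recognize` on (image, reference_text) pairs.

    Returns (mean CER, images per second); only the recognize calls are timed.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    rates = []
    inference_seconds = 0.0
    for image, reference in samples:
        start = time.perf_counter()
        predicted = recognize(image)
        inference_seconds += time.perf_counter() - start
        rates.append(character_error_rate(predicted, reference))
    mean_cer = sum(rates) / len(rates)
    throughput = len(samples) / inference_seconds if inference_seconds > 0 else float("inf")
    return mean_cer, throughput


# Stand-in recognizer for demonstration only; replace with a real model call.
fake_outputs = {"scan_0deg.png": "Invoice 1024", "scan_15deg.png": "Invo1ce 1O24"}
samples = [(name, "Invoice 1024") for name in fake_outputs]
mean_cer, images_per_second = evaluate(fake_outputs.get, samples)
print(f"mean CER: {mean_cer:.3f}, throughput: {images_per_second:.1f} images/s")
```

Running the same reference set through rotated, shadowed, or re-photocopied versions of each page and comparing the mean CER per condition gives a simple picture of where accuracy starts to fall off.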