Cheng Lou's new library isn't just a performance trick. It's a philosophical, logistical, and (dare I say) spiritual challenge to how browsers have handled text layout for 30 years.

On March 28, 2026, Cheng Lou (formerly of Meta's React core team and creator of the beloved react-motion animation library, now building frontend infrastructure at Midjourney) dropped Pretext on GitHub, accompanied by a viral X thread and a set of polished browser demos that make the DOM look ancient. This isn't just another frontend utility; it's a high-stakes bet that the browser's layout engine has become a real bottleneck for the next generation of spatial and agentic UIs.

Pretext is a pure JavaScript/TypeScript library for multiline text measurement and layout. It sidesteps the need for DOM measurements like getBoundingClientRect and offsetHeight, which trigger layout reflow — one of the most expensive operations in the browser. Instead, it implements its own text measurement logic using the browser's font engine as ground truth.

The API is two-phase: prepare() does the one-time work of normalizing whitespace, segmenting text, applying line-breaking rules, and measuring segments via canvas, returning an opaque handle. Then layout() is the cheap hot path: pure arithmetic over cached widths, with no DOM involvement whatsoever (per the project's GitHub README).
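To make the split concrete, here is a minimal sketch of the same two-phase shape. It is not Pretext's code or API. The names prepareSketch, layoutSketch, and the injected measure callback are hypothetical. Words are split only on spaces, and a fake fixed-width font stands in for canvas measurement so the sketch runs outside a browser.

```typescript
// Hypothetical measurement callback. In a browser this could wrap
// CanvasRenderingContext2D.measureText; injecting it keeps the sketch runnable anywhere.
type Measure = (segment: string) => number;

// The handle produced by the expensive phase: words plus their cached widths.
interface PreparedText {
  readonly words: readonly string[];
  readonly wordWidths: readonly number[];
  readonly spaceWidth: number;
}

// Phase 1 (once per text/font): collapse whitespace, split on spaces,
// and measure every word exactly once.
function prepareSketch(text: string, measure: Measure): PreparedText {
  const words = text.trim().split(/\s+/).filter((w) => w.length > 0);
  return { words, wordWidths: words.map((w) => measure(w)), spaceWidth: measure(" ") };
}

// Phase 2 (every resize): greedy line breaking over cached numbers only.
// No measurement calls, no DOM. Over-long words simply overflow their line.
function layoutSketch(
  prepared: PreparedText,
  maxWidth: number,
  lineHeight: number,
): { lineCount: number; height: number } {
  let lineCount = 0;
  let lineWidth = 0;
  for (const w of prepared.wordWidths) {
    if (lineCount === 0 || lineWidth + prepared.spaceWidth + w > maxWidth) {
      lineCount++; // start a new line with this word
      lineWidth = w;
    } else {
      lineWidth += prepared.spaceWidth + w; // word fits after a space
    }
  }
  return { lineCount, height: lineCount * lineHeight };
}

const mono: Measure = (s) => s.length * 10; // fake 10px-per-character font
const prepared = prepareSketch("the quick  brown fox", mono); // measured once
const narrow = layoutSketch(prepared, 100, 20); // { lineCount: 2, height: 40 }
const wide = layoutSketch(prepared, 200, 20); // { lineCount: 1, height: 20 }
```

The design point is that a resize only re-runs layoutSketch, which touches nothing but the cached numbers.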

On current benchmarks published in the GitHub repo, prepare() runs in about 19ms for a shared 500-text batch, while layout() runs in about 0.09ms for that same batch. That's the difference between blocking your main thread and not.
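The per-text costs can be worked out from only those two batch figures, assuming the 500 texts cost roughly the same each. They can then be compared with the roughly 16.7ms budget of a 60fps frame:

$$
\frac{19\,\text{ms}}{500} = 38\,\mu\text{s per text (prepare)}, \qquad \frac{0.09\,\text{ms}}{500} = 0.18\,\mu\text{s per text (layout)}
$$

$$
\frac{19\,\text{ms}}{0.09\,\text{ms}} \approx 211, \qquad \frac{0.09\,\text{ms}}{16.7\,\text{ms}} \approx 0.5\%
$$

Re-running layout() for the whole batch on a resize costs about half a percent of a frame. Running prepare() for the same batch exceeds a full frame budget on its own, which is why it is meant to run once and be cached.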

The repo hit 7,100 GitHub stars within days of launch. Simon Willison called it "exciting." The demos are genuinely impressive.

Now let's look at what's actually going on.

What's Actually New Here

The problem Pretext solves is real and old. If you've ever built a virtualized list — a chat interface with 50,000 messages, a feed with variable-height cards, a masonry layout — you've run into this wall. You need to know how tall a block of text will be before you render it. The browser can only tell you that height after it's already been rendered and measured. The DOM measurement forces a synchronous reflow. Do this for 500 items, and you've just handed the browser a 30ms+ frame tax.

When UI components measure text heights independently (for example, a virtual scrolling list sizing 500 comments), each getBoundingClientRect() or offsetHeight call made after a DOM write forces a synchronous layout reflow. At 30ms+ per frame, that is nearly twice the roughly 16.7ms budget of a 60fps frame, effectively murdering your 60fps dreams. In a world obsessed with LCP and Core Web Vitals, triggering a synchronous reflow for 500 items isn't just bad DX; it's architectural malpractice.

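The classic workarounds are to estimate (cache some rough average) or to batch DOM reads after writes. Neither is clean. Estimates are inaccurate and fall apart on internationalized text. Batched reads are exact, but they still pay for a reflow, and only after the content is already in the DOM.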
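The gap between measuring per component and batching is easiest to see in a toy model of layout invalidation. This is a deliberate simplification, not real browser code. The ToyLayout class is hypothetical: a write marks layout dirty, and a geometry read on dirty layout forces one synchronous reflow.

```typescript
// Toy model of a browser's layout cache (not real browser code): a DOM write
// marks layout dirty, and a geometry read on dirty layout forces a
// synchronous reflow before it can return.
class ToyLayout {
  reflows = 0;
  private dirty = false;

  // Stands in for a write such as el.textContent = ... or el.style.width = ...
  write(): void {
    this.dirty = true;
  }

  // Stands in for a read such as el.offsetHeight or el.getBoundingClientRect().
  read(): void {
    if (this.dirty) {
      this.reflows++;
      this.dirty = false;
    }
  }
}

// Each component writes and then immediately measures: one reflow per item.
function interleaved(layout: ToyLayout, items: number): number {
  for (let i = 0; i < items; i++) {
    layout.write();
    layout.read();
  }
  return layout.reflows;
}

// All writes first, then all reads: only the first read pays for a reflow.
function batched(layout: ToyLayout, items: number): number {
  for (let i = 0; i < items; i++) layout.write();
  for (let i = 0; i < items; i++) layout.read();
  return layout.reflows;
}

console.log(interleaved(new ToyLayout(), 500)); // 500
console.log(batched(new ToyLayout(), 500)); // 1
```

Batching collapses 500 reflows into one. That one reflow still has to lay out every block, and it can only happen once the text is in the DOM.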

Pretext's actual innovation is the architecture of the split. As Simon Willison explains it: "The prepare() function splits the input text into segments (effectively words, but it can take things like soft hyphens and non-latin character sequences and emoji into account as well) and measures those using an off-screen canvas, then caches the results. This is comparatively expensive but only runs once. The layout() function can then emulate the word-wrapping logic in browsers to figure out how many wrapped lines the text will occupy at a specified width and measure the overall height."

The off-screen canvas approach isn't new — canvas measureText() has been around for years. What's new is the complete, production-hardened system built on top of it: internationalization across CJK scripts, Arabic bidirectional text, emoji width correction per-browser, proper Unicode segmentation via Intl.Segmenter, and — this is the part that almost nobody does — a rigorous accuracy validation harness.
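For a sense of what Intl.Segmenter contributes, here is a minimal sketch using the standard built-in API. It is not Pretext's internal code, and wordSegments is a hypothetical helper name. It shows how text, including scripts written without spaces, comes back as word-level segments, one plausible starting point for finding candidate break positions.

```typescript
interface WordSegment {
  text: string;
  isWordLike: boolean;
}

// Split text with the built-in Intl.Segmenter (Node 16+ and modern browsers).
// Whitespace and punctuation come back as their own non-word-like segments.
function wordSegments(text: string, locale: string): WordSegment[] {
  const segmenter = new Intl.Segmenter(locale, { granularity: "word" });
  return Array.from(segmenter.segment(text), (s) => ({
    text: s.segment,
    isWordLike: s.isWordLike ?? false,
  }));
}

// "hello", " ", "world", with only the two words marked word-like.
console.log(wordSegments("hello world", "en"));
// Chinese has no spaces; the exact split depends on the runtime's ICU data.
console.log(wordSegments("春天到了", "zh").map((s) => s.text));
```

Segments always concatenate back to the original string, so a wrapper can measure each piece once and reassemble lines from cached widths.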

Willison again, on the testing methodology: "The earlier tests rendered a full copy of the Great Gatsby in multiple browsers to confirm that the estimated measurements were correct against a large volume of text. This was later joined by the corpora/ folder using the same technique against lengthy public domain documents in Thai, Chinese, Korean, Japanese, Arabic, and more."

That's not typical open source rigor. That's the obsessive kind.

There's also something genuinely novel buried in the walkLineRanges() API. CSS only knows fit-content, which is the width of the widest line after wrapping. If a paragraph wraps to 3 lines and the last line is short, CSS still sizes the container to the longest line. There's no CSS property to say "find the narrowest width that still produces exactly 3 lines." That requires measuring text at multiple widths and comparing line counts — which is exactly what Pretext's walkLineRanges() does, without DOM text measurement in the resize path.

Chat bubble shrinkwrap is the killer demo for this. Every messaging app you've ever used has dead space in its bubbles because CSS fit-content can't do better. Pretext can binary-search the optimal width. CSS alone has no way to express that, and until now getting it meant repeated DOM measurement.
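Here is a minimal sketch of the binary-search idea behind shrinkwrap. It is not Pretext's walkLineRanges() API; countLines and shrinkwrapWidth are hypothetical helpers working over word widths that are assumed to be already cached. The search relies on greedy wrapping never needing more lines at a larger width.

```typescript
// Greedy line count over cached word widths (hypothetical helper; same
// rule as a simple word wrapper, over-long words overflow their line).
function countLines(widths: readonly number[], spaceWidth: number, maxWidth: number): number {
  let lines = 0;
  let lineWidth = 0;
  for (const w of widths) {
    if (lines === 0 || lineWidth + spaceWidth + w > maxWidth) {
      lines++;
      lineWidth = w;
    } else {
      lineWidth += spaceWidth + w;
    }
  }
  return lines;
}

// Narrowest whole-pixel width that keeps the line count you get at maxWidth.
// Binary search is valid because the line count is non-increasing in width.
function shrinkwrapWidth(widths: readonly number[], spaceWidth: number, maxWidth: number): number {
  const target = countLines(widths, spaceWidth, maxWidth);
  let lo = 0;
  let hi = Math.ceil(maxWidth); // always satisfies countLines(...) <= target
  while (lo < hi) {
    const mid = Math.floor((lo + hi) / 2);
    if (countLines(widths, spaceWidth, mid) <= target) hi = mid;
    else lo = mid + 1;
  }
  return hi;
}

// "aaaa bbbb c" in a fake 10px-per-character font, inside a 100px column.
const bubbleWidths = [40, 40, 10];
const lines = countLines(bubbleWidths, 10, 100); // 2 ("aaaa bbbb" / "c")
const bubble = shrinkwrapWidth(bubbleWidths, 10, 100); // 60 ("aaaa" / "bbbb c")
```

Both widths give two lines, but fit-content would size this bubble to its widest line (90px), while the search finds 60px.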

Why This Matters for Developers and Builders

If you're building any of the following, Pretext is directly relevant:

Virtualized lists with dynamic content. TanStack Virtual (formerly React Virtual) and friends all require height estimation for variable-sized items. Right now, most implementations either measure on mount (expensive) or guess (inaccurate). We've spent a decade "guessing" row heights and praying the scrollbar doesn't jump. Pretext finally treats text layout as a deterministic math problem rather than a DOM-dependent mystery. It provides a path to accurate pre-render height calculation without DOM reflow or the "jank" of late-discovered measurements.
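Once heights are known before render, windowing a list reduces to prefix sums plus a binary search. The following is a generic sketch of that technique, not TanStack Virtual's internals. buildOffsets and visibleRange are hypothetical helpers, and the heights are plain numbers standing in for whatever a pre-render measurement such as layout() would return.

```typescript
// Prefix sums: item i occupies [offsets[i], offsets[i + 1]).
function buildOffsets(heights: readonly number[]): number[] {
  const offsets = [0];
  for (const h of heights) offsets.push(offsets[offsets.length - 1] + h);
  return offsets;
}

// Inclusive [start, end] indices of items intersecting the viewport,
// or null for an empty list. Finding start is O(log n).
function visibleRange(
  offsets: readonly number[],
  scrollTop: number,
  viewportHeight: number,
): [number, number] | null {
  const count = offsets.length - 1;
  if (count <= 0) return null;
  // Binary search for the first item whose bottom edge is below scrollTop.
  let lo = 0;
  let hi = count - 1;
  while (lo < hi) {
    const mid = (lo + hi) >> 1;
    if (offsets[mid + 1] <= scrollTop) lo = mid + 1;
    else hi = mid;
  }
  // Extend while the next item starts above the viewport's bottom edge.
  const bottom = scrollTop + viewportHeight;
  let end = lo;
  while (end + 1 < count && offsets[end + 1] < bottom) end++;
  return [lo, end];
}

const offsets = buildOffsets([20, 40, 20, 60]); // [0, 20, 60, 80, 140]
const range = visibleRange(offsets, 30, 40); // [1, 2]
```

Because no item has to be mounted to learn its height, the scrollbar size (offsets[offsets.length - 1]) is right from the first frame.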

Chat and messaging UIs. Messaging apps that virtualize thousands of message bubbles must calculate their heights in advance (a point cloudmagazin also makes). Pretext handles this natively and opens the door to pixel-perfect bubble sizing that no CSS-only solution can match.

Canvas and WebGL text rendering. Figma, Miro, Excalidraw, and any tool rendering to canvas rather than the DOM currently has to either approximate text dimensions or round-trip through the DOM. Pretext gives those tools a clean path to accurate text measurement without touching the DOM at all.

Server-side layout. Pretext explicitly mentions server-side rendering as an eventual target. If that lands, it could enable pre-computing text layouts before they hit the browser entirely — meaningful for tools like print-to-PDF pipelines or layout engines that run in Node.

AI-assisted development. Lou mentions this himself in the README: "development time verification (especially now with AI) that labels on e.g. buttons don't overflow to the next line, browser-free." As AI agents generate and test UI code, having accurate browser-free text measurement closes a feedback loop that currently requires a real browser.
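A browser-free label check of the kind the README describes could look roughly like this. overflowingLabels and the MeasureWidth callback are hypothetical, and the fixed-width fake font is an assumption for the example. Under white-space: normal, a label stays on one line exactly when its unbroken width fits, so one measurement per label is enough.

```typescript
// Hypothetical width function for the button's font; in practice this might
// be backed by canvas measureText or a library such as Pretext.
type MeasureWidth = (text: string) => number;

// Returns the locales whose label would not fit on one line inside the button.
function overflowingLabels(
  labels: Record<string, string>,
  measure: MeasureWidth,
  innerWidth: number,
): string[] {
  return Object.entries(labels)
    .filter(([, text]) => measure(text) > innerWidth)
    .map(([locale]) => locale);
}

const fakeFont: MeasureWidth = (s) => s.length * 8; // fake 8px-per-character font
// "Speichern unter" is German for "Save as": 15 characters, 120px > 80px.
const failing = overflowingLabels({ en: "Save", de: "Speichern unter" }, fakeFont, 80); // ["de"]
```

A check like this can run in a unit test or an agent's verification loop without launching a browser.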

The layoutNextLine() API is worth calling out specifically — it lets you route text one row at a time when the available width changes per-line. That's how magazine-style text wraps around a floated image. CSS handles this natively in flow layout, but the moment you leave flow — Canvas, WebGL, custom layout engines — you lose it. Pretext gives it back.
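Here is a rough sketch of line-at-a-time wrapping with a width that changes per row, the idea behind wrapping text around a floated image. It is not Pretext's layoutNextLine() signature. wrapAround and the widthForLine callback are hypothetical, and words are measured with a fake fixed-width font.

```typescript
// Hypothetical per-line width callback, e.g. narrower rows beside a floated image.
type WidthForLine = (lineIndex: number) => number;

// Greedy wrapping where each row asks for its own available width.
function wrapAround(
  words: readonly string[],
  measure: (s: string) => number,
  widthForLine: WidthForLine,
): string[] {
  const lines: string[] = [];
  const spaceWidth = measure(" ");
  let current: string[] = [];
  let currentWidth = 0;
  for (const word of words) {
    const w = measure(word);
    const limit = widthForLine(lines.length); // width of the row being filled
    if (current.length > 0 && currentWidth + spaceWidth + w > limit) {
      lines.push(current.join(" ")); // row is full: emit it
      current = [word];
      currentWidth = w;
    } else {
      currentWidth += (current.length > 0 ? spaceWidth : 0) + w;
      current.push(word);
    }
  }
  if (current.length > 0) lines.push(current.join(" "));
  return lines;
}

// First two rows sit beside a 30px-wide image in an 80px column.
const rows = wrapAround(
  "aa bb cc dd ee ff gg".split(" "),
  (s) => s.length * 10,
  (i) => (i < 2 ? 50 : 80),
);
// rows: ["aa bb", "cc dd", "ee ff gg"]
```

Since each row's width is only asked for when that row is being filled, the same loop works for any shape a Canvas or WebGL renderer can describe.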

What's Questionable or Unclear

Let's not get carried away.

The 500x benchmark comes with the developer's own caveat. Cheng Lou himself called the comparison "unfair" on X: the benchmark compares a cached computation path (hot path) against a full initial measurement (cold path). Let's be real: 500x speedups usually live in the land of marketing fiction, and while the math here is solid, comparing a hot cache to a cold DOM feels like bringing a calculator to an abacus fight. 500x is a ceiling figure against worst-case DOM usage, not a real-world differential. The number you actually care about is properly batched DOM reads (the best-practice approach) versus Pretext's hot path, and that comparison is nowhere in the docs.

The demo page had problems at launch. Several developers reported rendering issues: text was being cut off by overflow: hidden, making it unreadable on desktop. On mobile, one Hacker News commenter described the page as "fully and completely broken." It's ironic (though not damning) for a text layout library's homepage to have text layout issues, but it does signal that this is early-stage software.

Server-side rendering isn't there yet. The README frames it as "soon" and "eventually." For anyone thinking about using Pretext in SSR-heavy Next.js or Remix apps, that's a blocker: much of the value of pre-computing layout evaporates for those apps if you can't do it on the server.

system-ui is explicitly unsafe. The caveats in the README note that "system-ui is unsafe for layout() accuracy on macOS. Use a named font." That's a significant gotcha: a huge percentage of apps in the wild use system-ui as their base font. A layout engine that can't trust the macOS system font is a massive "Enter at Your Own Risk" sign for anyone not building in a strictly controlled design system. If you drop Pretext into an existing app without thinking about this, you'll get subtly wrong measurements on Mac screens and probably never figure out why.

The scope is intentionally narrow. Pretext targets a specific CSS text setup: white-space: normal, word-break: normal, overflow-wrap: break-word. Anything outside that box — and there's a lot outside that box in complex real-world apps — is out of scope. The README says it plainly: "Pretext doesn't try to be a full font rendering engine (yet?)"

The Bigger Picture

Pretext is interesting for reasons beyond its own feature set.

It's a bet that the browser's layout engine, as a black box, has become a bottleneck. That's not a new complaint — React itself is partly a workaround for how expensive DOM manipulation is. But Pretext goes further: it says the browser's measurement primitives are insufficient, not just slow. The problem isn't that getBoundingClientRect() is slow in isolation; it's that the browser forces you to render before you can measure, and render-before-measure is the wrong order of operations for a class of modern UIs.

Lou described his development process candidly in a thread on X/Twitter: "Waiting on the AI verifier loop against ground truth browsers at various widths. Arabic took a while. Got like 2 pounds lighter at the end of the month while making Pretext." Which, if nothing else, tells you this wasn't a weekend hack. The accuracy work for internationalization alone is the kind of thing that usually doesn't get done in open source projects.

The development methodology itself is worth noting. Lou used Claude Code and Codex in a tight verification loop, using actual browser behavior as ground truth for each iteration. This is the dawn of "vibe-coded" infrastructure: using LLMs to grind edge cases into dust until a pure JS library can match C++ browser internals that have existed since the Netscape era, and outrun them on the hot path. It's a preview of how performance-critical libraries will increasingly get built: not by humans painstakingly reading Unicode specs, but by AI-assisted iteration against empirical browser output. The humans set the bar; the machines chase the pixels.

Whether Pretext itself becomes a permanent fixture in the ecosystem or gets absorbed into a future framework primitive is an open question. The CSS Text Level 4 spec has been inching toward better intrinsic sizing primitives for years. If browsers ever natively expose something like "find the minimum width for this text at N lines," Pretext's core use case shrinks. But that spec work moves at geological pace, and real apps need solutions now.

For the specific class of problems it targets — virtualized text, canvas rendering, pre-render height prediction, multiline shrinkwrap — Pretext is the most complete, most honestly validated tool in the field. The hype around "every website is broken" is overblown. But the underlying technical argument is sound: for complex text UIs, you've been forced to measure what you can't yet see, and Pretext finally changes that.

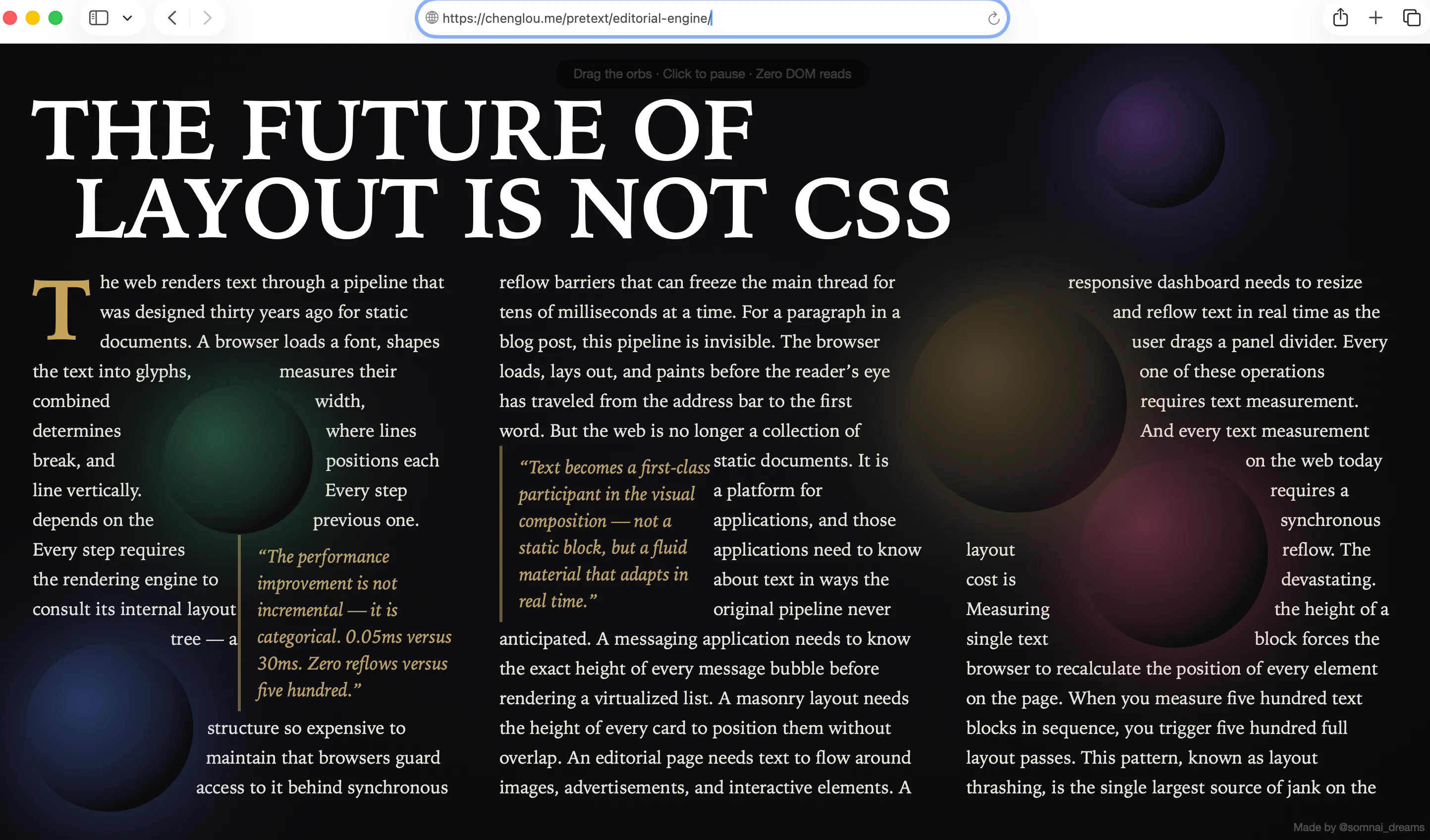

Install it: npm install @chenglou/pretext. Demos: chenglou.me/pretext. Source: github.com/chenglou/pretext. Featured image credit, https://chenglou.me/pretext/editorial-engine/