TL;DR:

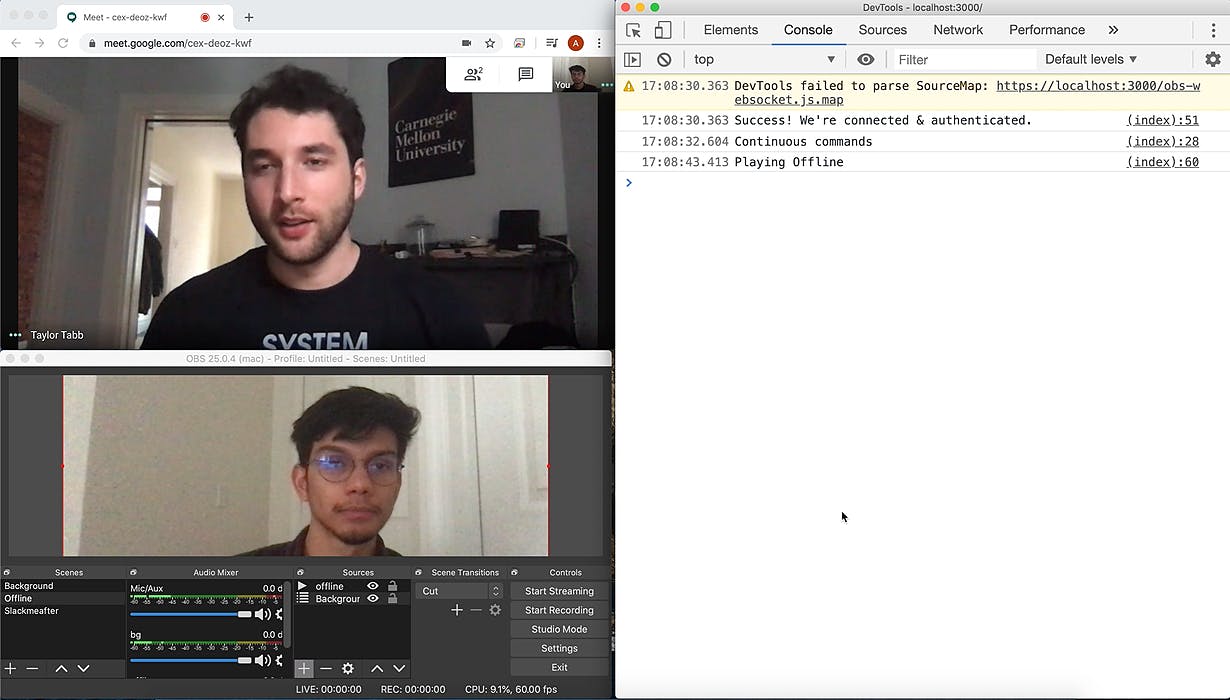

This morning I stumbled onto a Gizmodo article about a guy, Matt Reed, who made a digital AI twin that replaced him on a Zoom call and took part in the conversation. After doing some research and digging into Matt's code, I made a version of my own. I detect keywords and time the playback using the same Artyom library, but instead of images, I use prerecorded clips. I added all the clips I recorded above into OBS, then created a simple webpage that listens for audio from Google Hangouts or Zoom and tells OBS which scene to play based on the questions I'm asked.
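To make the keyword-to-clip idea concrete, here is a minimal sketch of the matching step. It is not the code from the project: the `rules` table, the scene names, and the `pickScene` and `createResponder` helpers are all hypothetical, and `switchScene` is a stand-in for whatever call actually tells OBS to change scenes. In the real setup the transcript would come from Artyom's speech recognition rather than a hard-coded string.

```javascript
// Hypothetical keyword-to-scene table. The scene names are invented for
// illustration and would have to match the scenes set up in OBS.
const rules = [
  { keywords: ["how are you", "how's it going"], scene: "DoingWell" },
  { keywords: ["what do you think", "your thoughts"], scene: "Thinking" },
  { keywords: ["can you hear"], scene: "YesICanHear" },
];

// Return the scene of the first rule whose keyword appears in the transcript,
// or null when nothing matches. Matching is case-insensitive.
function pickScene(transcript, ruleList) {
  const text = transcript.toLowerCase();
  for (const rule of ruleList) {
    if (rule.keywords.some((keyword) => text.includes(keyword))) {
      return rule.scene;
    }
  }
  return null;
}

// Build a handler that switches scenes only when a keyword matches.
// `switchScene` is injected (assumption): it represents the OBS call,
// so the matching logic stays independent of how OBS is controlled.
function createResponder(ruleList, switchScene) {
  return function onTranscript(transcript) {
    const scene = pickScene(transcript, ruleList);
    if (scene !== null) {
      switchScene(scene);
    }
    return scene;
  };
}

// Example wiring with a logging stub in place of OBS.
const respond = createResponder(rules, (scene) => {
  console.log(`would switch OBS to scene: ${scene}`);
});
respond("Hey, how are you today?");
```

Keeping the OBS call behind an injected function means the matcher can be tried out in Node or a browser console before any clips or scenes exist.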


Written by @adnanaga, Technical Writer on HackerNoon.

Tags: quarantinetime, zoom, hangouts, javascript, nodejs, zoombot, ai, artificial-intelligence
