If you are still building Retrieval-Augmented Generation (RAG) systems like it is 2022, you are already behind. Back then, the formula was simple: take some text, chop it into chunks, turn the chunks into vectors, and hand the closest matches to an LLM.

That pipeline works perfectly in a demo or a slide deck. But the moment your RAG system meets real users, messy data, and complex edge cases, it falls apart. Your users ask about trends, relationships, or specific numbers, and your AI responds with a confident hallucination.

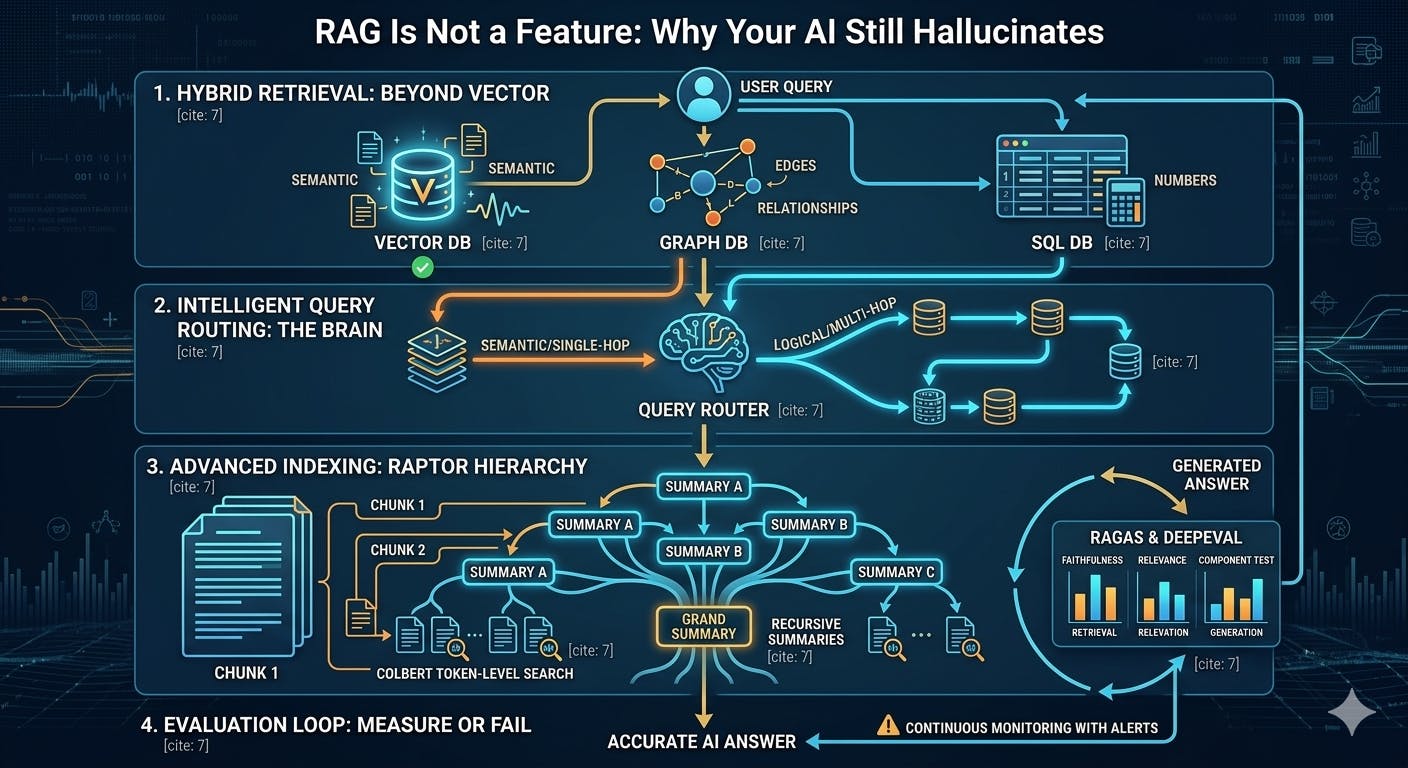

The hard truth is that in 2026, RAG is no longer a simple feature you "plug and play" into an app. It is a sophisticated engineering system. If you want to bridge the gap between a toy and a production-grade tool, you need to implement these four critical layers.

1. Retrieval Is More Than Just Vector Search

The biggest mistake teams make is assuming that every question is semantic. Vector search is great for finding "meaning," but it is a poor fit for questions about explicit relationships or structured data.

To build a robust system, you need a hybrid approach.

- Graph Databases: These are the right tool for "relationship" questions. If a user asks how Project A connects to Department B, a vector search might fail. A graph database stores entities as nodes and relationships as edges, so it can walk the path between the two.

- SQL Databases: When it comes to numbers, dates, and structured tables, vectors are the wrong tool. Similarity search cannot filter or aggregate exactly, so you need your system to query actual rows and columns for precision.

- Vector Search: Save this for when the user is looking for a concept or a general vibe.
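To make the split concrete, here is a minimal sketch of the two non-vector stores answering the kinds of questions described above. Everything in it is an assumption for illustration: the toy graph, the `invoices` table, and the helper names `find_path` and `total_for_department` are hypothetical, and a plain dictionary plus an in-memory SQLite database stand in for a real graph database and a real warehouse.

```python
import sqlite3
from collections import deque

# Hypothetical relationship data: entities are nodes, links are edges.
EDGES = {
    "Project A": ["Alice"],
    "Alice": ["Project A", "Team X"],
    "Team X": ["Alice", "Department B"],
    "Department B": ["Team X"],
}

def find_path(start, goal):
    """Breadth-first search: returns the shortest chain of nodes, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in EDGES.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None

# Hypothetical structured data: exact numbers and dates live in rows.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE invoices (id INTEGER PRIMARY KEY, department TEXT, amount REAL, issued TEXT)"
)
conn.executemany(
    "INSERT INTO invoices (department, amount, issued) VALUES (?, ?, ?)",
    [
        ("Finance", 1200.0, "2025-01-15"),
        ("Finance", 800.0, "2025-02-03"),
        ("Research", 450.0, "2025-02-20"),
    ],
)

def total_for_department(department, date_prefix):
    """Exact sum for one department; date_prefix is e.g. '2025-02' or '2025'."""
    row = conn.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM invoices "
        "WHERE department = ? AND issued LIKE ?",
        (department, date_prefix + "%"),
    ).fetchone()
    return row[0]

print(find_path("Project A", "Department B"))
# ['Project A', 'Alice', 'Team X', 'Department B']
print(total_for_department("Finance", "2025-02"))
# 800.0
```

Neither answer depends on how "similar" a chunk of text is to the question. The path and the sum are either right or wrong, which is exactly why these questions should not go to an embedding index.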

2. Intelligent Query Routing: The Hidden Superpower

Most RAG systems are "dumb." They take the user query and immediately try to find a matching chunk of text. A production-grade system has a brain before it has a hand.

Before retrieving anything, an Intelligent Query Router must decide which path the query should take. It asks:

- Is this a structured question (SQL), a relationship question (graph), or a semantic one (vector)?

- Is this a simple "single-hop" query or a complex "multi-hop" problem that needs multiple data sources?

- Which data source should I hit first to get the most context?

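This decision layer alone can remove a large share of bad answers, because many failures come from searching the wrong store rather than from the model itself. It stops the system from looking for a needle in the wrong haystack.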
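The routing questions above can be sketched in a few lines. This is a deliberately simple, rule-based version: the keyword lists, the `Route` dataclass, and the `route` function are all hypothetical, and a real router would more likely use an LLM call or a trained classifier instead of regular expressions.

```python
import re
from dataclasses import dataclass

@dataclass
class Route:
    backend: str     # "graph", "sql", or "vector"
    multi_hop: bool  # True if the query probably needs more than one lookup

# Hypothetical cue words, chosen only for illustration.
GRAPH_CUES = re.compile(
    r"\b(connect(s|ed)?|related|relationship|reports to|depends on|linked)\b",
    re.IGNORECASE,
)
SQL_CUES = re.compile(
    r"\b(how many|total|average|sum|count|between|before|after)\b|\d",
    re.IGNORECASE,
)
MULTI_HOP_CUES = re.compile(
    r"\b(and then|compare|versus|as well as)\b",
    re.IGNORECASE,
)

def route(query: str) -> Route:
    """Pick a backend before any retrieval happens."""
    if GRAPH_CUES.search(query):
        backend = "graph"
    elif SQL_CUES.search(query):
        backend = "sql"
    else:
        backend = "vector"  # default: treat the question as semantic
    return Route(backend=backend, multi_hop=bool(MULTI_HOP_CUES.search(query)))

for question in [
    "How does Project A connect to Department B?",
    "What was the total spend in March?",
    "Explain our onboarding philosophy",
    "Compare total revenue for 2024 versus 2025",
]:
    print(question, "->", route(question))
```

The point of the sketch is the shape, not the rules: the router returns a decision object first, and only then does any retriever run. Swapping the regular expressions for a model call does not change the rest of the pipeline.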

3. Advanced Indexing: Moving Beyond Naive Chunking

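If your indexing strategy is just "split every 500 tokens," you have already lost. Naive chunking cuts across sentences and sections, so a fact often ends up separated from the context needed to interpret it. The result is low recall and missing context.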
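A small sketch shows the problem. It assumes whitespace-separated words as a stand-in for tokens and a tiny budget of 8 words instead of 500; the function names `fixed_size_chunks` and `sentence_chunks` and the sample text are made up for illustration.

```python
import re

def fixed_size_chunks(words, size):
    """Naive chunking: cut every `size` words, wherever that lands."""
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def sentence_chunks(text, max_words):
    """Pack whole sentences into chunks of at most `max_words` words.

    A single sentence longer than the budget still gets its own chunk.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current, count = [], [], 0
    for sentence in sentences:
        n = len(sentence.split())
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks

text = (
    "Revenue rose 12 percent in Q3. "
    "The increase came from the new enterprise tier. "
    "Churn stayed flat."
)

naive = fixed_size_chunks(text.split(), 8)
aware = sentence_chunks(text, 8)

print(naive[0])  # Revenue rose 12 percent in Q3. The increase
print(aware[0])  # Revenue rose 12 percent in Q3.
```

The naive split leaves "The increase" dangling at the end of the first chunk and pushes its explanation into the second. Sentence-aware packing is only the first step up from that, but it already keeps each fact in one piece.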

Modern systems use much smarter representations of the same data:

- RAPTOR (Recursive Abstractive Processing for Tree-Organized Retrieval): This builds a hierarchy of summaries by recursively clustering chunks and summarizing each cluster. Instead of just searching raw text, the AI can search through high-level summaries to find the right neighborhood first.

- ColBERT: This keeps one embedding per token rather than a single embedding for the entire chunk. At search time, each query token is matched against its best-matching passage token ("late interaction"), which allows much finer granularity.

- Multi-View Indexing: You should index the same data in different ways (summaries, keywords, and raw text) to ensure the AI can "see" the information from multiple angles.
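To show what token-level scoring means in practice, here is a minimal sketch of the late-interaction (MaxSim) score that ColBERT is known for: for each query token, take the best dot product against any passage token, then sum those maxima. The two-dimensional vectors below are hand-written toy values, not real embeddings, and `maxsim_score` is a hypothetical helper name. A real system would get normalized token embeddings from a trained encoder.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def maxsim_score(query_vecs, passage_vecs):
    """Late interaction: sum, over query tokens, of the best match in the passage."""
    return sum(max(dot(q, p) for p in passage_vecs) for q in query_vecs)

# Toy "token embeddings" (assumed values, for illustration only).
query = [[1.0, 0.0], [0.0, 1.0]]                   # two query tokens

passage_a = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]   # contains an exact match for each token
passage_b = [[0.7, 0.7], [0.6, 0.6]]               # only "in between" tokens

score_a = maxsim_score(query, passage_a)
score_b = maxsim_score(query, passage_b)

print(score_a)  # 2.0
print(score_a > score_b)  # True
```

Passage A wins because every query token finds a strong individual match. A single averaged vector per chunk would blur that signal, which is the granularity argument for token-level retrieval.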

4. The Evaluation Loop: Measure or Fail

If you cannot measure the quality of your RAG system, you cannot fix it. Most teams build a demo, see that it works once, and ship it. This is how silent hallucinations enter your product.

A professional system treats the Evaluation Loop as non-negotiable. You need:

- End-to-end evaluation: Tools like Ragas help measure the "faithfulness" of the final answer (is it supported by the retrieved context?) and its "relevance" (does it actually address the question?).

- Component testing: Tools like DeepEval let you test the retrieval and generation steps separately. If retrieval returns the right context but the final answer is still wrong, you know the bug is in generation, not in search.

- Continuous Monitoring: Production data changes. You need to monitor how your RAG performs against new data types in real time, not just during a one-off demo.
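The idea behind component testing can be sketched without any framework. The code below is hand-rolled and is not the Ragas or DeepEval API: the function names, document IDs, and the substring-based answer check are all assumptions for illustration. It scores retrieval and generation separately so a failure points at one stage.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Share of the relevant documents that appear in the top k results."""
    relevant = set(relevant_ids)
    if not relevant:
        return 0.0
    return len(set(retrieved_ids[:k]) & relevant) / len(relevant)

def precision_at_k(retrieved_ids, relevant_ids, k):
    """Share of the top k results that are actually relevant."""
    top_k = retrieved_ids[:k]
    if not top_k:
        return 0.0
    return len(set(top_k) & set(relevant_ids)) / len(top_k)

def answer_contains(answer, expected_facts):
    """Crude generation check: every expected fact appears in the answer text."""
    lowered = answer.lower()
    return all(fact.lower() in lowered for fact in expected_facts)

# Hypothetical test case: labels written by a human reviewer.
case = {
    "retrieved": ["d3", "d1", "d7"],       # what the retriever returned, in rank order
    "relevant": ["d1", "d2"],              # what it should have found
    "answer": "Revenue rose 12 percent in Q3.",
    "expected_facts": ["12 percent", "Q3"],
}

retrieval_recall = recall_at_k(case["retrieved"], case["relevant"], k=3)
retrieval_precision = precision_at_k(case["retrieved"], case["relevant"], k=3)
generation_ok = answer_contains(case["answer"], case["expected_facts"])

print(retrieval_recall)  # 0.5
print(generation_ok)     # True
```

Here the answer passes but retrieval only found half of the relevant documents, so the next fix belongs in search, not in the prompt. Dedicated tools replace the crude substring check with model-based judgments, but the separation of the two scores is the part worth keeping.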

Build Systems, Not Toys

The era of "one-click AI" is over. Users in 2026 expect their AI tools to be accurate, reliable, and grounded in reality. The difference between a team that builds a "neat bot" and a team that builds a "mission-critical tool" is the willingness to treat RAG as an engineering discipline.

Stop treating your AI like a magic black box and start building the layers that make it work.