Table of Links

PROBLEM STATEMENT

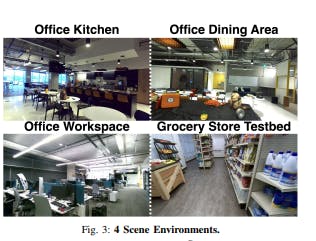

We consider a large indoor environment, specifically defined as a room encompassing at least 750 sq ft. The objective is to 3D reconstruct the a-priori unknown and unstructured environment and localize objects prompted by open-vocabulary and long-tail natural-language queries. We make the following assumptions:

-

The environment and all objects within it are static.

-

Queried objects are seen at least from one of the cameras.

-

A mobile robot with 3 orthogonal cameras.

For the grocery store environment, the TTT robot with a single pair of stereo cameras is used [15]. For each trial, the system is prompted by a natural-language query, and outputs the heatmap and localized 3D coordinate of the most semantically relevant location in the scene. The trial is deemed successful if this point falls within a manually annotated bounding box for that object. The objective is to efficiently build a 3D representation that maximizes this success rate for large-scale scenes.

METHODS

We use a Fetch mobile robot equipped with an RGB-D Realsense D455 camera and two side facing ZED 2 stereo cameras mounted with known relative poses. We build a map of the scene with a set of 3D Gaussians in an online fashion, registering new images as the robot drives around the environment. The overall pipeline for LEGS is outlined in Figure 2.

There are three key components of the system:

-

Multi-Camera Reconstruction: To improve the effective field-of-view of the robotic system, we use multiple cameras pointing in different directions to provide more viewpoints of the environment.

-

Incremental 3DGS Construction: A significant challenge of large scene mapping is localization error due to its accumulative nature. To mitigate this error, we perform global Bundle Adjustment (BA) with DROIDSLAM to improve pose accuracy for all previously recorded poses in the scene. Global BA can be executed multiple times in a given traversal, and after each BA, the prior image-pose estimates are updated with the corresponding pose.

-

Language-Embedded Gaussian Splatting: We implement a language-aligned feature field inspired by the method from LERF [1] that samples from gaussian primitives instead of from a density field.

A. Multi-Camera Reconstruction

Online image registration enables Gaussian Splatting on a mobile base with a comprehensive sensor suite including multiple camera views (left, right, center). During testing of vanilla Gaussian Splatting on our multi-camera setup, we found that offline Structure from Motion (SfM) pipelines frequently failed to find correspondences between images from different cameras, largely due to the lack of scene overlap from offset camera views. Because we peform online image registration with a visual SLAM algorithm for one camera and we know the corresponding extrinsic transforms to the other cameras, we can compute the corresponding pose estimate for each camera. Due to limited GPU memory onboard the Fetch robot, the image data is then streamed over network to a desktop computer for processing and training.

B. Incremental 3DGS Construction + Bundle Adjustment

Standard methods for radiance field optimization require image-pose pairs as input, and Gaussian Splatting greatly benefits from having a pointcloud as a geometric prior for initialization. Poses and pointclouds are typically provided through offline Structure from Motion (SfM) techniques like COLMAP [51] which require all training images to be collected ahead of time. We build off of Nerfstudio’s [52] Splatfacto implementation of Gaussian Splatting and modify it to operate on a stream of images and poses, and incorporate updates from global bundle adjustment (BA) to further optimize poses by building a keyframe graph to minimize the Mahalanobis distance between the reprojected points and the corresponding revised optical flow points [53].

- Online Optimization: For online pose estimation we use DROID-SLAM [53], a monocular SLAM method that takes in monocular, stereo, or RGB-D ordered images and outputs per-keyframe pose estimates and disparity maps. During operation, we feed DROID-SLAM input frames from one of the side-facing Zed cameras, and extrapolate the poses of other cameras using the camera mount CAD model. Registered RGBD keyframes from DROID-SLAM are incrementally added to Splatfacto’s training set. We initialize new Gaussian means per-image by sampling 500 pixels from each depth image and deproject them into 3D using the corresponding metric depth measurement. We use a learned stereo depth model trained on synthetic images [54], which can predict thin features and transparent objects with high accuracy.

- Global Bundle Adjustment: Incorporating images with pose drift from SLAM systems results in artifacts in 3DGS models like duplicated or fused objects, fuzzy geometry, and ghosting (Fig. 4). Though prior work has demonstrated that pose optimization inside a 3DGS can track camera pose [44], tracking iterations for the method with reported results takes up to a second to perform, making it difficult for online usage. Instead, to mitigate drift, we incorporate updates from global BA and update training camera poses in the 3DGS accordingly. This allows tracking of new camera frames at 30fps in tandem with continual 3DGS optimization for faster model convergence.

C. Language Embedded Gaussian Splats

In Language Embedded Radiance Fields (LERF) [1] the language field is optimized by volumetrically rendering and supervising CLIP embeddings along rays during training. In contrast, 3DGS provides direct access to explicit gaussian means, allowing us to implement a multi-scale language

embedding function, Flang(⃗x,s) ∈ R D. This function takes an input position ⃗x and physical scale s, outputting a Ddimensional language embedding. Flang is implemented by passing a sampled ⃗x through a multi-resolution hash encoding [19], which produces the input z to the MLP mθ (z,s), with the MLP evaluated last resulting in feature output y ∈ R D. These features can then be projected and rasterized into feature images using Nerfstudio’s [52] tile-based rasterizer implementation, with loss gradients backpropagated through the MLP. Hash encoding employs a hash table to store feature vectors corresponding to grid cells in a multi-resolution fashion. This hash grid encoding scheme effectively reduces the number of floating-point operations and memory accesses needed during training and inference, compared to directly feeding position features into an MLP.

Language-aligned features are obtained from the training images using multi-scale crops passed through the CLIP encoder, a technique shown in LERF to be crucial for semantic understanding in large scenes where object sizes may vary drastically. This is in contrast to prior work which averages CLIP embeddings as a tradeoff between speed and accuracy [17]. LEGS facilitates inference at approximately 50 Hz 1080p, and its hybrid explicit-implicit representation allows faster scene querying without volumetric rendering. Given a natural language query, we query the language field to obtain a relevancy map similar to LERF. To localize the query in the world frame, we find the relevancy over CLIP features and take the argmax relevancy over 3D gaussian means. This method offers a significant speed increase over feature field methods distilled in NeRFs, where the volumetric representation must first render a dense pointcloud.

Authors:

- Justin Yu

- Kush Hari

- Kishore Srinivas

- Karim El-Refai

- Adam Rashid

- Chung Min Kim

- Justin Kerr

- Richard Cheng

- Muhammad Zubair Irshad

- Ashwin Balakrishna

- Thomas Kollar Ken Goldberg

This paper is