Recently a member of my family spent two hours in a hospital emergency room for a painful but not life-threatening stomach issue. The bill that arrived, $12,000, was so shocking that I thought I’d need urgent medical care. After our health insurance kicked in, we were on the hook for $4,000, a far cry from the ironically named “good faith estimate” of $941 the hospital had provided.

There is no such thing as good faith in the American healthcare system.

According to the Agency for Healthcare Research and Quality,

A new class of AI health assistants might provide some help, however imperfectly. Amazon, Microsoft, OpenAI, and Perplexity have all launched AI health apps designed to help people manage personal health needs. Some might ask why anyone would trust AI with their health. The better question is: given what the system already costs us, why wouldn’t we use every tool available to avoid the dystopian experience of navigating American healthcare?

A New Kind of First Opinion

In January 2026, OpenAI launched ChatGPT Health, a dedicated health AI product that lets users connect their medical records, lab results, and fitness data to get personalized health guidance. Microsoft followed with Copilot Health in March. Amazon expanded its Health AI assistant to its main platform the same week. Perplexity launched Perplexity Health shortly after. Each product is different in emphasis and design, but they share a common premise: that people deserve a knowledgeable, always-available resource to help them understand what is happening with their bodies before they make decisions about where to seek care.

This is not AI playing doctor. None of these products diagnose conditions or prescribe treatment. What they do, and what matters enormously for the ER problem, is help people think more clearly about a question that currently gets answered by panic and guesswork: how serious is this, really, and where should I go?

Meet the New Health AI Apps

The four consumer-facing products leading this wave each bring a distinct personality to the space.

ChatGPT Health, “The Friendly Family Doctor,” is the most mature and most explicitly consumer-designed of the group. It is built to connect your medical records, lab results, fitness data, and insurance information into a single personalized health conversation. OpenAI says hundreds of millions of people were already asking ChatGPT health questions every week, so the company built ChatGPT Health to serve that need directly.

Perplexity Health, “The Medical Librarian,” is the most research-native of the four. Every response links to clinical guidelines or peer-reviewed journals, making it less a health companion and more a rigorous research partner that shows its work. For users who distrust AI-generated health information without a source they can verify, that citation-first approach is a meaningful differentiator.

Microsoft Copilot Health, “The Prep Coach,” is built explicitly around helping individuals make sense of their own health data and arrive at doctor visits better prepared. Microsoft has framed its ambition more modestly than its rivals: less “understand your health” and more “make the time you have with your doctor count more.”

Amazon Health AI, “The Pharmacy Counter,” is the most transactionally useful of the group, reflecting Amazon’s retail DNA. Where the others are primarily informational, Amazon’s product is oriented around getting something done, including prescription renewal management, which addresses a category of avoidable ER visits: people who end up in emergency rooms simply because they’ve run out of medication and can’t reach their doctor.

The 2:00 A.M. Problem

Here is what the current system looks like for a lot of Americans. You wake up at 2:00 a.m. with chest tightness and a racing heart. You Google your symptoms, and the results tell you it could be a heart attack, anxiety, or too much coffee. Nothing in that search helps you decide whether to call 911, drive to urgent care in the morning, or take some deep breaths and go back to sleep. So you go to the ER, wait three hours, pay thousands of dollars, and are told it was anxiety.

An AI health assistant changes the nature of that 2:00 a.m. conversation. Instead of a list of possibilities ranked by terrifying probability, you get a tool that knows your medical history, your recent lab results, your medications, and your baseline vitals from your wearable if you have one. It can tell you that your resting heart rate has been elevated for three days, or that this pattern is consistent with something you experienced six months ago that resolved on its own, or that one of the symptoms you’re describing is a warning sign that genuinely warrants urgent attention. That is a different kind of guidance: not a diagnosis, but context that helps you make a better decision.

What the Numbers Say

People are already using AI for health guidance whether or not the healthcare establishment approves. The apps covered here suggest the tools are catching up.

The Untapped Opportunity: Your Financial Advocate

OpenAI reports that between 1.6 and 1.9 million ChatGPT queries arrive every week specifically about health insurance: questions on plan comparisons, billing, claims, enrollment, and cost-sharing. Americans are not just confused about their health. They are drowning in the financial machinery that surrounds it, and they are already turning to AI for relief.

After my own ER bill arrived, I tried to dispute it, without success. The hospital insisted the charges were reasonable. But reasonable compared to what? So I put ChatGPT to work to find out. It broke down the medical billing codes, flagged where the hospital had likely overcoded the visit and overcharged for services, translated technical CPT and revenue codes into plain language, and pointed me to the strongest grounds for a dispute. ChatGPT gave me context for what “reasonable” should be. As a result, the hospital cut the bill in half.

A serious financial advocacy capability, built into the same platform where you track your symptoms, review your labs, and prepare for your appointments, could do something none of the existing health products currently offers: help you navigate the cost side of care with the same intelligence it brings to the clinical side.

Before deciding whether to go to the ER at all, you (or a trusted person in a position to help you) could ask, say, ChatGPT Health to help you think through whether your situation warrants emergency care or whether an urgent care clinic would handle it just as well for a fraction of the cost.

After the visit, you could upload your itemized bill and ask the AI to review every line for accuracy, checking whether the procedure codes match what was actually done, whether any charges appear duplicated, and whether anything looks inconsistent with standard billing for your diagnosis. When a dispute gets denied, you could ask AI to draft an appeal letter grounded in your plan’s own language and the relevant clinical guidelines.

General ChatGPT already does versions of all of this for people who know to ask. Billing codes are public, as my experience attests. Insurance policy documents can be uploaded. AI can dig into an itemized hospital bill in seconds and flag charges that deserve a closer look. The knowledge and the capability exist. What doesn’t exist yet is a dedicated health AI product that packages this as a first-class feature rather than something a resourceful patient figures out on their own.

At a time when a single ER visit can generate a bill capable of derailing a family’s finances, a health AI assistant that doubles as a financial advocate (flagging errors, building appeals, comparing costs before care rather than absorbing shocks after it) would be something this country has never had: not only a smarter way to understand your body, but a smarter way to survive the system built around it.

A Caveat

These products are promising, but they are not proven. No large-scale clinical study has yet established that AI health assistants reduce unnecessary ER visits or improve patient outcomes. The patient safety organization ECRI named the misuse of AI chatbots in

The specific risk that matters most in the ER context is false reassurance. An AI that tells someone their symptoms are probably nothing, when they are in fact something, doesn’t save them money. It costs them time they may not have. The companies building these products are aware of this risk and have built in safeguards (high-risk query redirection, clinical advisory panels, grounding in verified medical literature), but none of those safeguards is foolproof, and none has been validated at the scale at which these products now operate.

The populations most likely to use these tools as alternatives to expensive care are also the populations most likely to have complex, undertreated conditions that are harder to assess accurately. The benefit flows most cleanly to people who are already reasonably healthy and well-connected to the healthcare system. For everyone else, the tools are useful but the stakes of a wrong answer are higher.

AI Instead of Nothing

The right way to think about these products is not “AI instead of a doctor.” It is “AI instead of nothing.” For the person sitting alone trying to decide whether that chest tightness is an emergency, the alternative to an AI health assistant is not a physician. It’s an online search, fear, and a coin flip. On that comparison, a well-designed AI health assistant that knows your history, surfaces relevant context, and tells you clearly when something warrants urgent care is progress.

The ER is not always avoidable. But for the millions of Americans who end up in emergency rooms every year because they had no better way to answer a simple question (is this serious?), a knowledgeable, always-available first opinion may be the most tangible benefit AI has delivered to ordinary people so far.

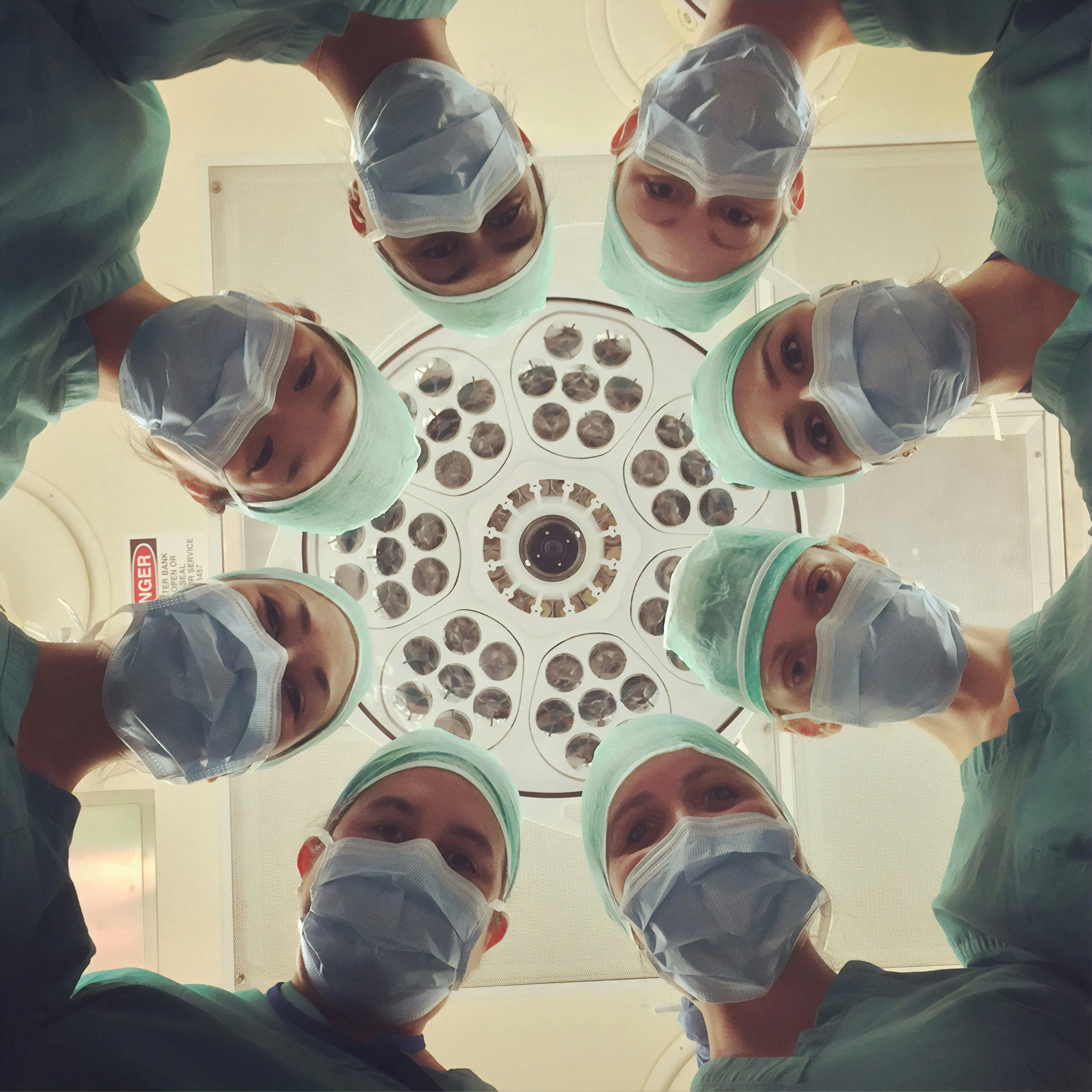

Photo source: National Cancer Institute on Unsplash.
