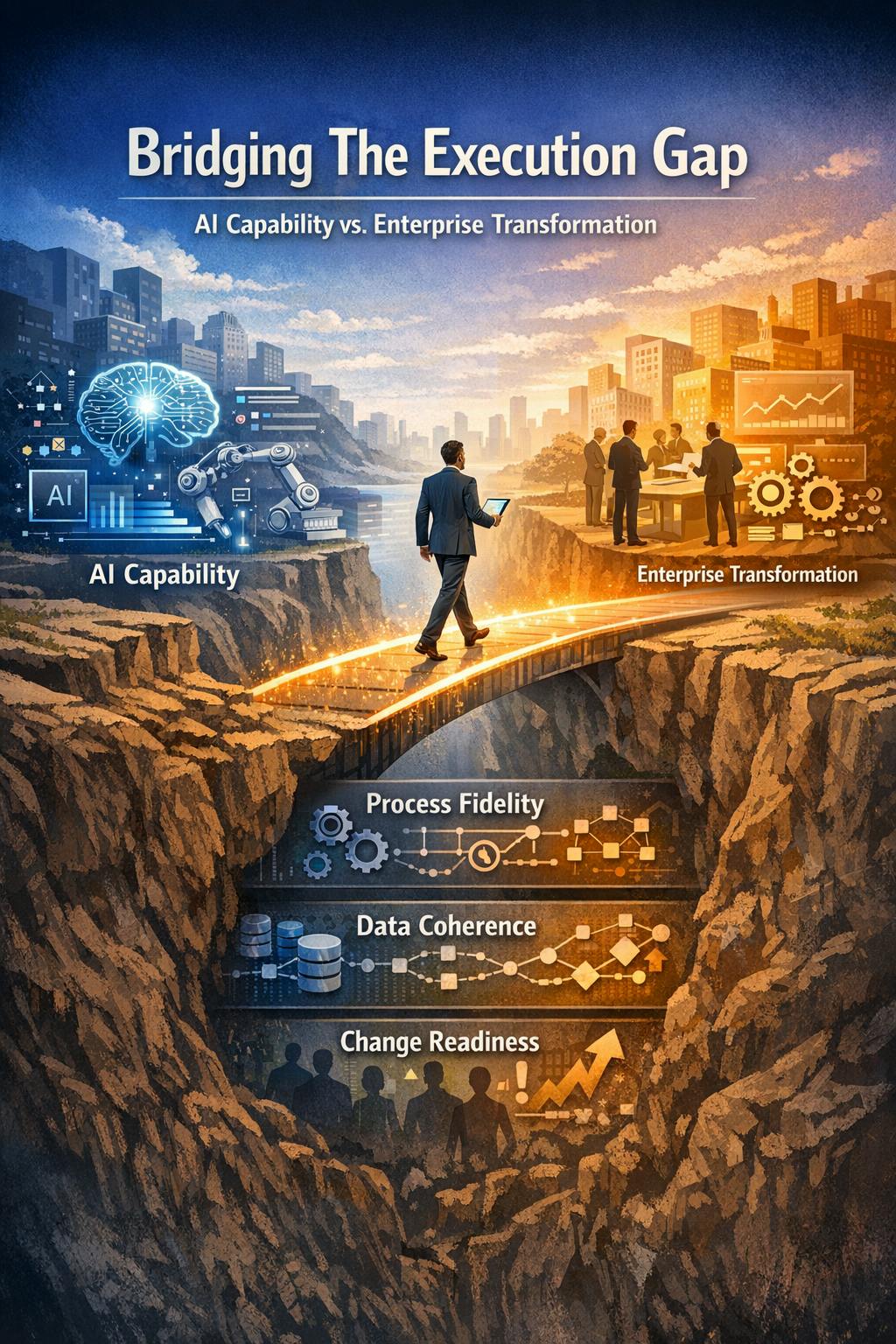

There is a widening chasm in enterprise technology that is quietly undermining billions in AI investments. On one side, AI capabilities are advancing faster than most organizations can track. On the other, most enterprises are still struggling to show meaningful returns from those investments. Between these two realities sits what I call the execution gap: the structural disconnect between the AI capability demonstrated at the model layer and the measurable transformation outcomes realized at the workflow and decision layer.

After 14 years leading AI transformation programs across financial services, healthcare, and M&A execution, I have observed this pattern repeatedly: impressive AI capabilities fail to translate into sustained business value because of systemic disconnects between how AI systems are built and how enterprises actually make decisions, absorb change, and measure value.

Why Capability Does Not Equal Outcome

Modern foundation models can summarize legal documents, generate production-ready code, and reason through complex supply chain scenarios with remarkable accuracy. Yet most enterprises that deploy these capabilities see adoption rates below 30% within the first year. The technology works. The transformation does not.

The core problem is that AI capabilities are delivered at the model layer, but value is realized at the workflow layer. A language model that can draft contract clauses is impressive in a demo. But if the legal team's approval process runs through a legacy document management system, if the business glossary is inconsistent across divisions, and if there is no mechanism to validate AI outputs before they enter a compliance pipeline, the model's capability becomes irrelevant.

I witnessed this firsthand while leading AI-driven decision systems for M&A execution. We deployed autonomous AI agents that could reduce prospect research time by 95%, an extraordinary technical achievement. But the real breakthrough came when we architected the complete decision system: integrating explainable AI with governance controls, real-time analytics, and existing stakeholder workflows. That holistic approach accelerated our M&A execution timeline by 70%, precisely because we addressed the execution gap rather than just the model capability.

The Three Layers of the Execution Gap

After analyzing dozens of AI deployments across Fortune 500 companies, I have found that the execution gap operates across three layers simultaneously.

Layer 1: Process Fidelity

Enterprises run on processes that evolved over years, carrying institutional knowledge, regulatory constraints, and exception-handling logic that exists nowhere in any documentation. When an AI system is inserted into such a process without rigorous mapping, it creates brittle points of failure.

While implementing analytics platforms across 32+ healthcare systems, I discovered that “patient readmission” was defined nine different ways across facilities, each reflecting legitimate but undocumented clinical judgment calls. No AI model could deliver value until we addressed this process archaeology challenge first.

Product leaders need to lead process archaeology efforts before any model deployment: shadowing end users to understand actual workflows, mapping exception handling, identifying regulatory checkpoints, and documenting institutional knowledge that exists only in practitioners' heads.

Layer 2: Data Coherence

Most enterprise AI projects underestimate the extent to which their training and inference pipelines depend on data that is inconsistently labeled, siloed across departments, or governed by shifting policies.

I have seen $4 million AI projects stall for eight months because two business units used different definitions for the same customer attribute. In one financial services implementation, what the risk team called “exposure” was fundamentally different from what the trading desk meant by the same term. No machine learning model could reconcile this; it required organizational negotiation.

Product leaders must treat data coherence as a product problem, not an infrastructure problem. Solving it requires cross-functional data workshops, lightweight governance focused on high-impact attributes first, and automated data quality dashboards that make incoherence visible to stakeholders. In platforms generating $60M in annualized savings through workforce analytics, data coherence consumed 40% of the product effort.
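One way to make incoherence visible is a small check that groups glossary entries by attribute and flags any attribute carrying more than one definition. The sketch below is illustrative only: the `GLOSSARY` entries, the definitions in them, and the function names are invented placeholders, not the data or tooling from the projects described above.

```python
from collections import defaultdict

# Hypothetical glossary entries: (business_unit, attribute, definition).
# The definitions are invented placeholders for illustration.
GLOSSARY = [
    ("risk", "exposure", "potential loss on open positions at default"),
    ("trading", "exposure", "net market value of open positions"),
    ("risk", "customer_id", "unique identifier assigned at onboarding"),
    ("trading", "customer_id", "Unique identifier  assigned at onboarding"),
]


def normalize(definition: str) -> str:
    """Lowercase and collapse whitespace so cosmetic differences are ignored."""
    return " ".join(definition.lower().split())


def find_conflicts(entries):
    """Return attributes that have more than one distinct definition.

    The result maps attribute -> {definition: [business units using it]}.
    """
    by_attribute = defaultdict(lambda: defaultdict(list))
    for unit, attribute, definition in entries:
        by_attribute[attribute][normalize(definition)].append(unit)
    return {
        attribute: {d: sorted(units) for d, units in defs.items()}
        for attribute, defs in by_attribute.items()
        if len(defs) > 1
    }


conflicts = find_conflicts(GLOSSARY)
for attribute, defs in conflicts.items():
    print(f"{attribute}: {len(defs)} competing definitions")
    for definition, units in defs.items():
        print(f"  {', '.join(units)}: {definition}")
```

A check like this only surfaces the disagreement. Deciding which definition wins is still the organizational negotiation described above.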

Layer 3: Change Readiness

Deploying an AI feature to end users who do not trust it, do not understand it, or whose performance metrics do not reward using it correctly is a recipe for shelf-ware.

We built machine learning models for synergy value prediction that were technically sophisticated and demonstrably accurate. But adoption was tepid until we implemented “explainability layers” that showed deal teams exactly which historical comparables influenced each prediction and allowed them to adjust assumptions. Once users felt they understood and controlled the AI, adoption accelerated and realization timelines decreased by 10%.

Change readiness requires transparent AI decision-making with clear explanations, user override capabilities that preserve expert judgment and build trust, aligned incentive structures, and progressive rollout. Managing cross-functional teams of 30+ stakeholders taught me that change readiness is not a training problem; it is a product design problem.

What Bridging the Gap Actually Looks Like

Product leaders who consistently close the execution gap share several practices.

Start with Outcomes, Not Capabilities

Instead of starting with ‘how do we use LLMs in our claims processing workflow,’ these leaders start with ‘what does a 15% reduction in claims cycle time require, and where in that chain does AI have the highest leverage?’

When I conduct discovery for new AI product initiatives, I spend the first 30 days mapping outcome metrics and only then identify where AI capabilities can move those metrics.

Architect for Human-AI Collaboration

The most successful enterprise AI deployments augment expert judgment rather than substituting for it. A fraud analyst who uses an AI co-pilot and can override its recommendations generates far more useful signals than a fully automated system that never surfaces its uncertainty. The override decisions become training data. The edge cases become product improvements. The user becomes an advocate rather than a resistor.
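The loop from override to training data can be sketched roughly as follows. This is a minimal illustration under assumed names: the `Review` record, the 0.8 confidence threshold, and the sample cases are all hypothetical, and a real system would need its own schema and review policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Review:
    """One AI recommendation plus the analyst's decision, if any (hypothetical schema)."""
    case_id: str
    ai_label: str
    ai_confidence: float
    analyst_label: Optional[str] = None

    @property
    def final_label(self) -> str:
        # The analyst's judgment takes precedence whenever it is present.
        return self.analyst_label or self.ai_label

    @property
    def overridden(self) -> bool:
        return self.analyst_label is not None and self.analyst_label != self.ai_label


def needs_review(review: Review, threshold: float = 0.8) -> bool:
    """Surface uncertainty: route low-confidence cases to a human (threshold is an assumption)."""
    return review.ai_confidence < threshold


def training_examples(reviews):
    """Collect overridden cases as (case_id, corrected_label) pairs for later retraining."""
    return [(r.case_id, r.final_label) for r in reviews if r.overridden]


REVIEWS = [
    Review("c1", "fraud", 0.95),
    Review("c2", "legit", 0.55, analyst_label="fraud"),  # analyst overrides the AI
    Review("c3", "fraud", 0.60, analyst_label="fraud"),  # analyst confirms the AI
]

print([r.case_id for r in REVIEWS if needs_review(r)])
print(training_examples(REVIEWS))
```

The design choice worth noting is that the analyst's label always wins, and only genuine disagreements are kept as corrective examples.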

Instrument Everything

Product leaders who close the gap build measurement infrastructure from day one, tracking not just model accuracy metrics but workflow throughput, user override rates, time-to-decision, downstream error rates, and adoption velocity. Through systematic A/B testing, often conducting 10+ concurrent experiments, I have learned that instrumentation is about understanding where the execution gap persists and iterating rapidly to close it.
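As a rough illustration of workflow-level instrumentation, the sketch below computes three of the metrics named above from a log of decision events. The `DecisionEvent` fields, the sample events, and the definition of adoption as "share of eligible users with at least one logged decision" are assumptions made for the example, not a prescribed standard.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class DecisionEvent:
    """One logged decision made with AI assistance (hypothetical schema)."""
    user: str
    ai_recommendation: str
    final_decision: str
    seconds_to_decision: float


def override_rate(events) -> float:
    """Fraction of decisions where the user departed from the AI recommendation."""
    if not events:
        return 0.0
    overridden = sum(1 for e in events if e.final_decision != e.ai_recommendation)
    return overridden / len(events)


def median_time_to_decision(events) -> float:
    """Median seconds from recommendation to final decision (requires at least one event)."""
    return median(e.seconds_to_decision for e in events)


def adoption_rate(events, eligible_users) -> float:
    """Share of eligible users who logged at least one decision (assumed definition)."""
    if not eligible_users:
        return 0.0
    active = {e.user for e in events} & set(eligible_users)
    return len(active) / len(set(eligible_users))


EVENTS = [
    DecisionEvent("ana", "approve", "approve", 40.0),
    DecisionEvent("ana", "flag", "approve", 95.0),
    DecisionEvent("ben", "flag", "flag", 60.0),
    DecisionEvent("ben", "approve", "approve", 30.0),
]
ELIGIBLE = ["ana", "ben", "cho", "dev"]

print(f"override rate: {override_rate(EVENTS):.2f}")
print(f"median time-to-decision: {median_time_to_decision(EVENTS):.1f}s")
print(f"adoption rate: {adoption_rate(EVENTS, ELIGIBLE):.2f}")
```

Tracked over time and split by team or workflow step, numbers like these point to where the execution gap persists even when model accuracy looks healthy.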

A Case Study

Consider a recent implementation where these principles came together. A Fortune 100 financial services firm needed to accelerate M&A deal execution while maintaining rigorous due diligence standards.

Execution gap-aware approach: We mapped the actual decision workflow across 8 stakeholder groups, identifying 23 critical decision points where AI could augment expert judgment. We built cross-functional agreement on 12 core metrics that needed consistent definitions. We designed explainable AI governance frameworks where deal teams could see exactly why the system flagged risks or identified synergies, with full override capability.

Result: 70% reduction in execution timeline. The AI agents reduced prospect research time by 95% and increased outreach by 5x. But the transformation outcome came from architecting the complete decision system, integrating those capabilities into trusted, governed, explainable workflows that executives would actually use for billion-dollar decisions.

A Forward-Looking Perspective

The enterprises that will win the AI era are not necessarily the ones with the most advanced models. They are the ones with product leadership capable of translating capability into durable operational change.

As agentic AI systems grow more capable and autonomous, the execution gap will paradoxically widen for organizations that have not built the foundational discipline described above. Autonomous agents make decisions faster than organizations can validate. Multimodal AI integrates data types that existing governance frameworks never anticipated. Organizations that have not solved process fidelity, data coherence, and change readiness at human speed will find themselves completely unable to operate at AI speed.

The product leader's role in this environment is part strategist, part organizational anthropologist, and part systems engineer. It requires the ability to hold a long-term transformation vision while making precise, high-frequency decisions about where to deploy limited organizational attention.

Closing the execution gap is not a one-time achievement. It is a continuous discipline, and the organizations that treat it as such are the ones building sustainable competitive advantage through AI.