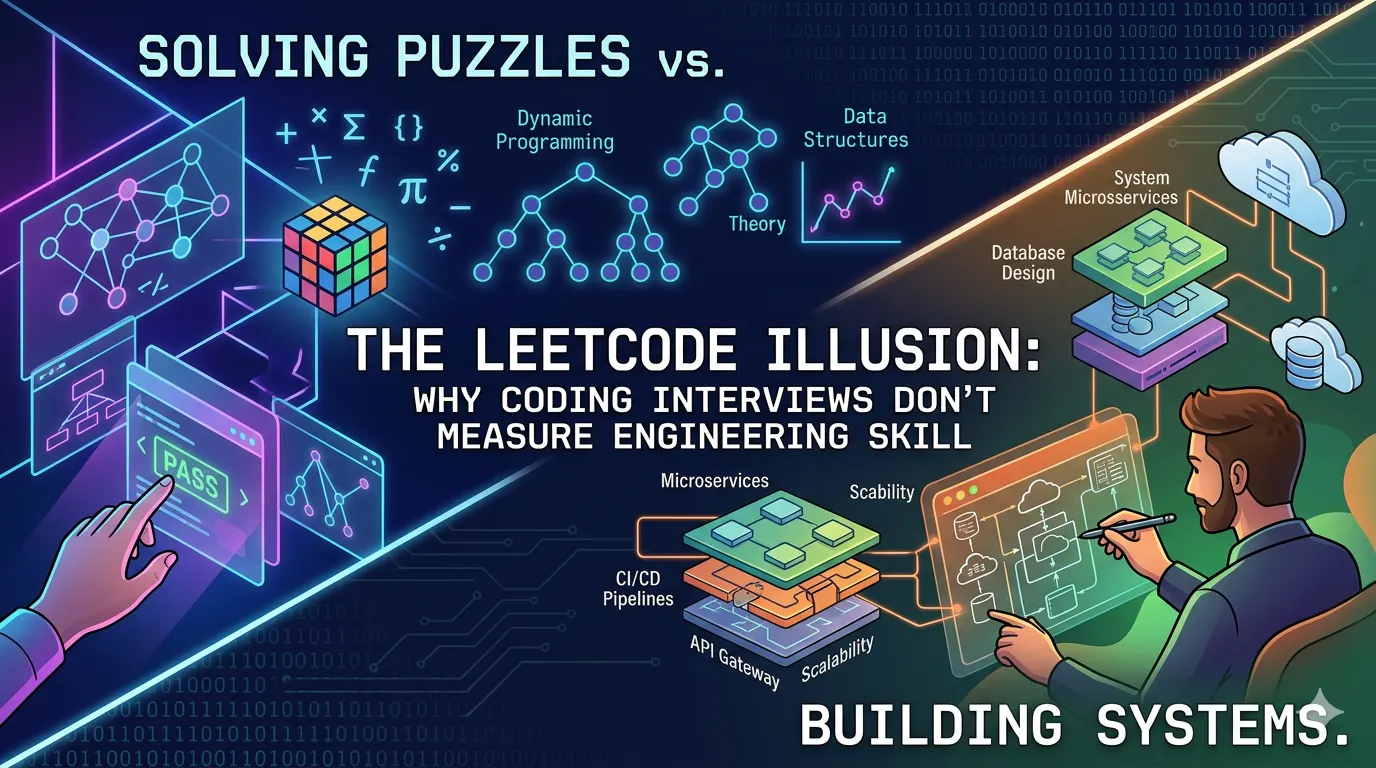

For years, we've treated coding interviews as the gateway to software engineering.

Solve a few algorithm problems under pressure, and you're "in." Struggle with them, and suddenly your ability as an engineer is questioned.

But here's the uncomfortable truth: LeetCode-style interviews have never been a reliable measure of engineering skill.

At best, they're a hit-or-miss signal.

Sometimes you're in the zone. Sometimes the pressure gets to you. Sometimes you've seen a similar problem before. Sometimes you blank out completely.

And that's exactly why companies run multiple coding rounds. Not because each one is deeply meaningful, but because collectively they hope to average out the randomness.

Flunk one, ace the others. That's often enough.

That alone should tell us something.

The Signal We Think We're Measuring

Coding interviews are designed to test problem-solving ability, data structures and algorithms, and your ability to write clean code under time pressure.

These are useful skills. But let's be honest: they're only a small slice of what software engineering actually is.

Because real-world engineering looks nothing like: "Reverse this linked list in 20 minutes while someone watches you."

What Engineering Actually Looks Like

I've worked on distributed backend systems, event-driven pipelines, and platform infrastructure across Amazon, Microsoft, and Salesforce. The work was rarely about pulling the right algorithm out of my head on command.

It was about working through ambiguity. Reading messy systems. Debugging under uncertainty. Making trade-offs. Shipping something that'll still behave at 2:22 a.m. when the dashboard turns red.

None of this is captured well by solving isolated algorithm problems on a whiteboard or shared editor.

That gap has always been there. Now AI is making it impossible to ignore.

Even More Broken in the Age of AI

If coding interviews were a weak signal before, they're even weaker now.

We're entering a world where tools like Claude can generate working code in seconds. Which raises an uncomfortable question: If AI can write the code, what exactly are we testing?

Knowing syntax? Memorizing patterns? Implementing algorithms from memory?

That's no longer the bottleneck.

Today, an engineer's value is shifting toward problem framing, system design, making trade-offs, and validating and adapting AI-generated code.

You don't need to "know Ruby on Rails" the way we used to. If the tools can help you write it, the real skill is: Can you understand, adapt, and integrate it into a system?

So What Should Interviews Measure Instead?

If we agree that coding interviews are incomplete, what's the alternative?

Here's what I think a better interview process should look like.

1. Debugging Over Implementation

Give candidates a broken system. Ask them to identify the issue, reason through the problem, and fix it.

This tests real-world thinking: reading code, tracing behavior, and understanding side effects. Because most engineering work isn't writing new code. It's fixing existing code.
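
To make this concrete, here is a minimal sketch of what a small debugging prompt could look like. It is an illustration only: the function names `add_tag_buggy` and `add_tag_fixed` are hypothetical, and a real exercise would probably sit inside a larger codebase. The candidate gets the first function plus a report that "tags from one request leak into the next," and is asked to explain why before fixing it.

```python
from typing import List, Optional


def add_tag_buggy(tag: str, tags: List[str] = []) -> List[str]:
    # Bug: the default list is created once, when the function is defined,
    # so every call that omits `tags` appends to the same shared list.
    tags.append(tag)
    return tags


def add_tag_fixed(tag: str, tags: Optional[List[str]] = None) -> List[str]:
    # Fix: build a fresh list on every call and leave the caller's list alone.
    result = list(tags) if tags is not None else []
    result.append(tag)
    return result


# Reproducing the report: two unrelated calls end up sharing state.
first = add_tag_buggy("urgent")
second = add_tag_buggy("billing")
```

After those two calls, `first` and `second` are the same list and both contain both tags. The fix takes two lines. The signal comes from whether the candidate can reproduce the problem, name the side effect, and explain why the fixed version also avoids mutating its input.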

2. Realistic Codebase Tasks

Instead of isolated problems, give candidates a small, messy codebase with a feature request or a bug to fix.

Yes, this is harder to design. Yes, questions can get leaked. Yes, it requires more effort.

But it's also far closer to the actual job.

3. System Thinking (Even for Juniors)

We underestimate how early this matters.

Even junior engineers should be evaluated on how they break down problems, how they think about edge cases, and how they structure solutions. Not just whether they can implement a known pattern.

4. Collaboration Signals

Engineering is a team sport.

Interviews should test how candidates communicate, how they ask questions, and how they respond to hints. Not just silent problem-solving.

5. AI-Aware Evaluation

This is the big shift.

Instead of banning AI, embrace it. Ask: Can the candidate use AI tools effectively? Can they validate the output? Can they spot incorrect or suboptimal solutions?

Because that's the real skill now.
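
Here is a rough sketch of what "validate the output" can mean in practice. Everything in it is hypothetical: `median_suggested` stands in for a plausible-looking answer an assistant might produce (it is not the output of any real tool), and `CASES` is a small set of checks a candidate might write before trusting it.

```python
def median_suggested(values):
    # Hypothetical assistant answer: reads fine at a glance, but it never
    # sorts the input and ignores the even-length case.
    return values[len(values) // 2]


def median_reviewed(values):
    # Version after review: handles empty, unsorted, and even-length input.
    if not values:
        raise ValueError("median of an empty sequence is undefined")
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2


# Checks chosen to probe the assumptions the suggestion silently makes.
CASES = [
    ([1, 2, 3], 2),       # sorted, odd length: the easy case
    ([3, 1, 2], 2),       # unsorted input
    ([1, 2, 3, 4], 2.5),  # even length
]


def failing_cases(fn):
    # Return every (input, expected) pair that fn gets wrong.
    return [(inp, exp) for inp, exp in CASES if fn(list(inp)) != exp]
```

Running `failing_cases(median_suggested)` flags the unsorted and even-length inputs, while `failing_cases(median_reviewed)` comes back empty. The interesting part of the interview is watching which cases the candidate thinks to write.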

The Honest Objection

The industry's hesitation to change is understandable. LeetCode isn't going away anytime soon.

It's scalable. It's standardized. It's easy to administer.

And in a world of thousands of applicants, companies optimize for efficiency, not perfection.

But as engineers, we should recognize it for what it is: a filtering mechanism. Not a measure of true ability.

Final Thought

We're overdue for a hiring methodology that respects both the complexity of the craft and the reality of how the craft is practiced today.

The question has never been whether engineers can solve a problem on a whiteboard. The question is whether they can solve the problems that actually show up (messy, ambiguous, half-specified) in a world where the tools keep changing faster than any interview rubric.

That's the engineer worth hiring. We just need interviews worth giving them.
