I’ve spent more than two decades designing enterprise systems. I’ve lived through SOA, cloud, big data, microservices, DevOps — each promising transformation.

Where those initiatives fell short, it was rarely because the technology was immature. It was because the architecture underneath wasn’t fully thought through.

We are at a similar moment with Agentic AI.

Right now, much of the focus is on models, prompts, orchestration frameworks, and tool calling. Those are important. But they are not the core challenge.

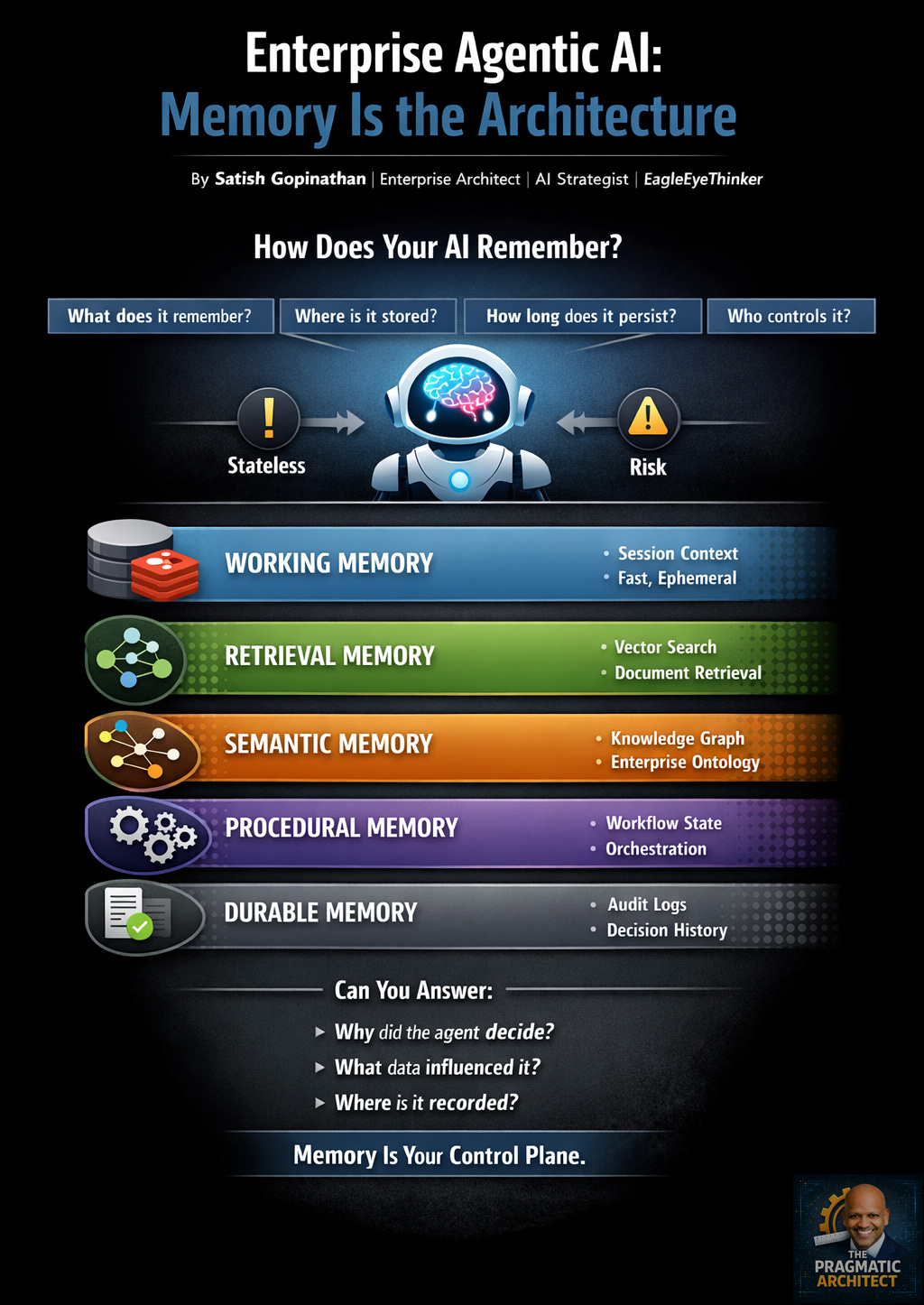

The real challenge is memory.

If you haven’t designed how an agent remembers, you haven’t designed the system.

LLMs do not remember. They process the context you provide. Without deliberate external memory layers, agents forget prior interactions, misapply policies, lose workflow state, and behave inconsistently across sessions. That may be tolerable in a prototype. It is unacceptable in an enterprise environment.

In production systems, memory is not a single capability. It is layered — and each layer serves a distinct purpose.

There are five memory areas that matter:

- Working Memory: Short-lived, session-bound context optimized for speed and low latency. Often implemented using in-memory systems such as Redis. This ensures conversational continuity, not long-term intelligence.

- Retrieval Memory: Vector-based knowledge retrieval that allows agents to fetch relevant enterprise content at runtime. This reduces hallucination but does not, by itself, create understanding.

- Semantic Memory: Structured representation of enterprise relationships, such as org hierarchies, product taxonomies, and compliance mappings. This layer gives the agent contextual awareness of how things connect.

- Procedural Memory: Workflow and execution state. This is how the agent remembers how to act, not just what to say. It includes orchestration logic, tool coordination, and multi-agent flows.

- Durable Memory: Persistent audit trails, event logs, and decision history. This layer enables explainability, compliance, traceability, and continuous improvement.
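
To make the five layers concrete, here is a minimal Python sketch with one small class per layer. Everything in it is an assumption made for illustration: the class and method names are hypothetical, a plain dict stands in for Redis, word-count cosine similarity stands in for an embedding-based vector store, and an in-process list stands in for a durable event log.

```python
import math
import time
from collections import defaultdict


class WorkingMemory:
    """Session-bound context with expiry (a dict standing in for Redis)."""

    def __init__(self, ttl_seconds=900.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._sessions = {}  # session_id -> (expires_at, turns)

    def recall(self, session_id):
        expires_at, turns = self._sessions.get(session_id, (0.0, []))
        if self._clock() >= expires_at:  # expired or never seen
            self._sessions.pop(session_id, None)
            return []
        return list(turns)

    def append(self, session_id, turn):
        turns = self.recall(session_id) + [turn]
        self._sessions[session_id] = (self._clock() + self._ttl, turns)


class RetrievalMemory:
    """Similarity search; word counts stand in for real embeddings."""

    def __init__(self):
        self._docs = {}  # doc_id -> term counts

    @staticmethod
    def _vectorize(text):
        counts = defaultdict(int)
        for token in text.lower().split():
            counts[token.strip(".,?!")] += 1
        return counts

    def add(self, doc_id, text):
        self._docs[doc_id] = self._vectorize(text)

    def search(self, query, k=1):
        q = self._vectorize(query)

        def cosine(d):
            dot = sum(q[t] * d.get(t, 0) for t in q)
            norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(
                sum(v * v for v in d.values())
            )
            return dot / norm if norm else 0.0

        ranked = sorted(self._docs, key=lambda i: cosine(self._docs[i]), reverse=True)
        return ranked[:k]


class SemanticMemory:
    """Relationships stored as (subject, relation) -> objects."""

    def __init__(self):
        self._edges = defaultdict(set)

    def relate(self, subject, relation, obj):
        self._edges[(subject, relation)].add(obj)

    def objects(self, subject, relation):
        return set(self._edges.get((subject, relation), set()))


class ProceduralMemory:
    """Tracks which step of a fixed workflow each instance has reached."""

    def __init__(self, steps):
        self._steps = list(steps)
        self._position = {}  # workflow_id -> index of the next step

    def next_step(self, workflow_id):
        i = self._position.get(workflow_id, 0)
        return self._steps[i] if i < len(self._steps) else None

    def complete_step(self, workflow_id):
        self._position[workflow_id] = self._position.get(workflow_id, 0) + 1


class DurableMemory:
    """Append-only event history (a list standing in for a durable log)."""

    def __init__(self):
        self._events = []

    def record(self, event):
        self._events.append(dict(event))

    def history(self):
        return tuple(dict(e) for e in self._events)
```

Each class exposes only the operations its layer needs, so the backing store behind any one of them could be swapped without touching the others.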

Most teams collapse these into one store — a vector database, a cache, or a collection of documents. That’s not architecture. That’s convenience.

Mature systems separate concerns because speed, grounding, structure, execution, and governance have different performance and risk requirements.

When agents begin influencing business outcomes — approving claims, escalating incidents, generating recommendations — memory stops being a technical implementation detail. It becomes governance infrastructure.

At that point, leadership must be able to answer three critical questions:

- Why did the agent make this decision?

- What data and prior state influenced it?

- Where is that recorded and auditable?
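
One way to make those three questions answerable is to write a structured record for every decision. The sketch below is an illustration under stated assumptions, not a prescribed design: `DecisionRecord` and `AuditLog` are hypothetical names, the log lives in process memory rather than in durable storage, and SHA-256 hash chaining is one possible tamper-evidence technique among several.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    decision: str    # what the agent did
    rationale: str   # why the agent made this decision
    inputs: tuple    # identifiers of the data and prior state that influenced it
    prev_hash: str   # digest of the previous record, forming a chain

    def digest(self):
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each record commits to the one before it."""

    GENESIS = "0" * 64  # placeholder hash for the first record

    def __init__(self):
        self._records = []

    def append(self, decision, rationale, inputs):
        prev = self._records[-1].digest() if self._records else self.GENESIS
        record = DecisionRecord(decision, rationale, tuple(inputs), prev)
        self._records.append(record)
        return record

    def verify(self):
        """Return False if any record no longer matches its successor's link.

        Changes to the newest record are only detectable if its digest is
        also stored somewhere outside this log.
        """
        expected = self.GENESIS
        for record in self._records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return True
```

The `rationale` field answers the first question, `inputs` the second, and the log itself, with its verifiable chain, the third.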

If those answers are unclear, you don’t have enterprise AI. You have unmanaged automation.

The organizations that will lead in Agentic AI will not necessarily have the largest models. They will have the most disciplined memory architectures — tiered, observable, governed, and aligned to enterprise risk frameworks.

The conversation needs to shift.

Not: “Which model are we using?” But: “How does our AI remember — and how do we control that memory?”

That’s where real architecture begins.

Practical Reference — Enterprise AI Memory Architecture

For those who want to see this model in action, I’ve published a GitHub reference implementation demonstrating the core memory layers used in production-grade Agentic AI systems:

- Working memory (session context)

- Retrieval memory (vector search)

- Semantic memory (knowledge graph)

- Procedural memory (workflow orchestration)

- Durable memory (audit trail)

The goal is not to showcase a framework. It is to demonstrate how tiered memory architecture translates from concept to implementation using open-source tools.

Memory is not just storage. It is how agents maintain continuity, grounding, structure, execution state, and accountability.

Example Use Case Implemented

Enterprise HR Policy Assistant

- Retrieves policy documents

- Understands organizational relationships

- Executes workflow logic

- Maintains session continuity

- Logs decisions for audit and compliance
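
As a standalone illustration of how one request can touch all five layers, here is a toy Python sketch. It is not taken from the repository: the policy snippets, the org data, and every function name are hypothetical, and plain dicts and lists stand in for the real stores.

```python
from datetime import datetime, timezone

# Retrieval memory: hypothetical policy snippets.
POLICIES = {
    "parental-leave": {
        "text": "Parental leave requests are approved by the manager.",
        "approver_relation": "manager",
    },
    "remote-work": {
        "text": "Remote work requests are approved by the department head.",
        "approver_relation": "department_head",
    },
}
# Semantic memory: hypothetical organizational relationships.
ORG = {"alice": {"manager": "bob", "department_head": "carol"}}
SESSIONS = {}   # working memory: session_id -> list of turns
AUDIT_LOG = []  # durable memory: append-only decision history


def retrieve_policy(question):
    """Pick the policy sharing the most words with the question (toy retrieval)."""
    words = set(question.lower().replace("?", "").split())

    def overlap(policy_id):
        text = POLICIES[policy_id]["text"].lower().rstrip(".")
        return len(words & set(text.split()))

    return max(POLICIES, key=overlap)


def handle(session_id, employee, question):
    completed = []  # procedural memory: which workflow steps have run

    SESSIONS.setdefault(session_id, []).append(question)
    completed.append("remember_turn")

    policy_id = retrieve_policy(question)
    completed.append("retrieve_policy")

    relation = POLICIES[policy_id]["approver_relation"]
    approver = ORG.get(employee, {}).get(relation, "unknown")
    completed.append("resolve_approver")

    answer = f"Per '{policy_id}', your request goes to {approver}."
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "session": session_id,
        "employee": employee,
        "policy": policy_id,
        "approver": approver,
        "steps": completed,
        "answer": answer,
    })
    return answer
```

Calling `handle("s1", "alice", "Who approves my parental leave?")` stores the turn, selects a policy, resolves the approver from the org data, and appends an audit entry before returning the answer.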

Explore the implementation: https://github.com/eagleeyethinker/enterprise-agentic-ai-memory-lab