Whether the software is a monolith, a set of microservices, or a distributed system, every deployment has a window in which a newly released version and an older version still in use coexist. When they communicate, the same data may be interpreted with different semantics by each version.

For example, the variable "length" might mean meters in the old version and quietly change to centimeters in the new one. Every calculation runs without error, yet the semantics are completely broken. To address this, teams often build an intermediary layer that recognizes and "translates" the data, ensuring semantic consistency across service versions. This solution is effective and increases the system's flexibility and adaptability.
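
To make the failure mode concrete, here is a minimal sketch of such a translation layer. The `schema_version` field, the `normalize_length` helper, and the version numbers are hypothetical names chosen for illustration; they do not come from Theus or any other framework.

```python
# Hypothetical payloads: version 1 stores length in meters, version 2 in centimeters.
CM_PER_M = 100


def normalize_length(payload: dict) -> float:
    """Return the length in meters, whichever version wrote the payload."""
    version = payload["schema_version"]
    if version == 1:
        return float(payload["length"])
    if version == 2:
        return payload["length"] / CM_PER_M
    raise ValueError(f"unknown schema_version: {version}")


old_msg = {"schema_version": 1, "length": 2}    # 2 meters
new_msg = {"schema_version": 2, "length": 200}  # 200 centimeters

# Without translation the sum is a valid number with no valid meaning.
naive_total = old_msg["length"] + new_msg["length"]

# With translation both values are expressed in meters before being combined.
safe_total = normalize_length(old_msg) + normalize_length(new_msg)
```

Note that nothing fails in the naive case, which is exactly why this kind of drift is hard to spot. Every new version adds another branch to the helper, which is where the maintenance burden comes from.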

However, under business pressure, this middleware layer grows more bloated with every version and quietly becomes a maintenance burden. On top of that comes the challenge of capturing and managing the semantics of data stored in the database, because the semantics of the same data object can differ from one version to the next.

These are common problems in distributed systems or client-server systems. The Theus Framework was never designed for such systems; it focuses on on-premises systems.

Ultimately, "monolith" or "microservice" is a classification that reflects how software is packaged rather than how it executes. In terms of functionality, a purely monolithic desktop application also has individual functions and services that communicate with each other and with the external environment. So what Theus manages can probably also be effective in distributed and microservice systems. This is especially true when projects involve AI agents as part of the system's logical process.

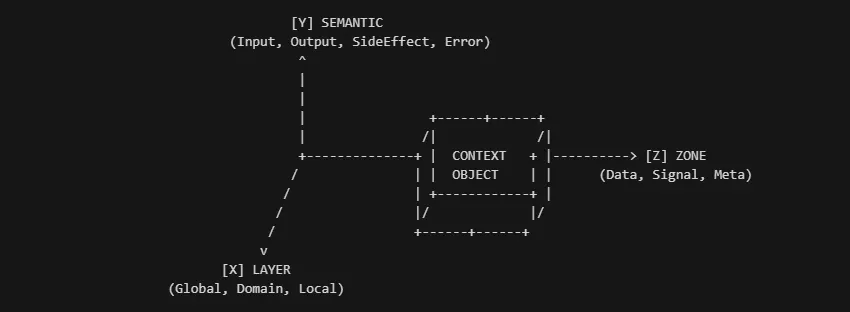

Theus's philosophy initially was "code is a liability, data is an asset." It always prioritized data management. The source code had to be managed and controlled to ensure the accuracy and consistency of the data. This applies not only to the final data stored in the database, but also to all data being read, calculated, and modified during the program's runtime. Initially, to address this, Theus managed data using a three-axis model similar to the Oxyz coordinate system.

Data in Theus is always associated with 3 types of attributes:

1. Layer: defines the location and lifecycle of a data object in the program

- global: exists immutably throughout the execution process

- domain: data exists within a full business process cycle

- local: exists only within a function/process

2. Semantic: defines the capabilities and meaning of a data object

- Input: read-only

- Output: mutable

- Side-effect: interacts with the external environment.

3. Zone: defines the policy applied to a data object

The rules applied to a data object are determined by its name prefix:

Variables:

- No prefix, defaults to domain, belongs to normal business logic, can read and write, and must be guaranteed by a transaction (either true - success or false - failure, no half-state).

- Prefix: SIG_variable, Meta_log_variable: Data used for logs and control signals. Cannot be used as input for an @process function.

- Prefix: Heavy_variable: Heavy data types such as media (images, music, videos), NumPy arrays, and very large matrices. This object defaults to no transactions to ensure maximum read and write performance.

However, while developing and using Theus, I ran into a security vulnerability related to permissions on Python objects. As every Python programmer knows, a list always exposes mutating methods such as .append() and .extend(). The Theus security layer (the Supervisor Proxy) does check function permissions: a function that has not declared the list as an output cannot write to it. But for `main.py`, or for any function not managed by Theus, the Supervisor Proxy still hands Python a direct reference to that list, and the caller can easily modify the data through the list's own methods.

One way to counter this is to have Theus block all of these methods on the Python object from initialization. But that would create significant friction for developers during programming. And how many methods would have to be blocked, and re-checked, every time Python changes or updates?

This reveals a break in Theus's three-axis model, between the semantic axis and the zone axis. The structural solution is not simply to block list methods, but to establish a link and a constraint between the semantics and the zone of a data object. It must also be flexible enough that when the schema declaration changes, the zone's policy on the data object changes accordingly.
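
The leak is easy to reproduce outside Theus. The following is a toy sketch, not the real Supervisor Proxy: `NaiveReadGuard` is a hypothetical class that blocks assignment but, like the behavior described above, returns the underlying object by reference.

```python
class NaiveReadGuard:
    """Toy guard (hypothetical): blocks re-assignment but hands out raw references."""

    def __init__(self, data: dict):
        # Bypass our own __setattr__ so the guard can store its backing dict.
        object.__setattr__(self, "_data", data)

    def __getattr__(self, name):
        # Only called for names not found normally; returns the real object.
        return self._data[name]

    def __setattr__(self, name, value):
        raise PermissionError(f"write to '{name}' was not declared as output")


state = {"orders": [1, 2]}
ctx = NaiveReadGuard(state)

# Direct assignment is caught by the guard...
try:
    ctx.orders = []
    assignment_blocked = False
except PermissionError:
    assignment_blocked = True

# ...but the list's own methods mutate the shared state unnoticed.
ctx.orders.append(3)
```

After this runs, `state["orders"]` contains the appended element even though the guard rejected the assignment. The check sits on the container, while the capability to mutate travels with the reference.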

Setting physical rules for data

If an object is declared as belonging to the DATA Zone, its ceiling allows full read and write access. However, that access is not granted automatically. Every data object is wrapped in a glass enclosure: viewable, but untouchable. Only when your @process declares output = data_context will Theus grant it valid write access.

If an object belongs to the SIGNAL Zone, by default it can only be added, not deleted.

If it's META, it can be updated, but not deleted or added.

If an object belongs to the Heavy Zone, by default it exists in SHM - shared memory, no shadow copy, no transactional access.

| Zone | Prefixes | Physics Ceiling | Philosophy (The "Why") |
|---|---|---|---|
| DATA | (None) | READ, APPEND, UPDATE, DELETE | Mutable State. Business logic requires full freedom to mold the state. |
| SIGNAL | sig_, cmd_ | READ, APPEND | The River. Events flow forward. You cannot "undo" (Delete) or "change" (Update) an emitted event. |
| LOG | log_, audit_ | READ, APPEND | The History. The past is immutable. You can only append new entries. |
| META | meta_ | READ, UPDATE | The Config. You can tune settings (Update) but structural deletion is forbidden. |
| HEAVY | heavy_ | READ, UPDATE (Ref) | The Boulder. Shared Memory pointers. You can swap the pointer, but deep mutation is un-audited. |
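
The table can be read as two lookups: prefix to zone, then zone to ceiling. Here is a minimal sketch of that reading. The helper names `zone_of` and `allows` are hypothetical, and the case-insensitive prefix match is an assumption; the real resolution happens inside Theus and may differ.

```python
# Prefix -> zone, copied from the table. Unprefixed names fall back to DATA.
ZONE_PREFIXES = {
    "sig_": "SIGNAL",
    "cmd_": "SIGNAL",
    "log_": "LOG",
    "audit_": "LOG",
    "meta_": "META",
    "heavy_": "HEAVY",
}

# Zone -> physics ceiling, also copied from the table.
ZONE_CEILING = {
    "DATA": frozenset({"READ", "APPEND", "UPDATE", "DELETE"}),
    "SIGNAL": frozenset({"READ", "APPEND"}),
    "LOG": frozenset({"READ", "APPEND"}),
    "META": frozenset({"READ", "UPDATE"}),
    "HEAVY": frozenset({"READ", "UPDATE"}),
}


def zone_of(field_name: str) -> str:
    """Resolve a field name to its zone by prefix (case-insensitive: an assumption)."""
    lowered = field_name.lower()
    for prefix, zone in ZONE_PREFIXES.items():
        if lowered.startswith(prefix):
            return zone
    return "DATA"


def allows(field_name: str, operation: str) -> bool:
    """True if the zone ceiling for this field permits the operation."""
    return operation in ZONE_CEILING[zone_of(field_name)]
```

For example, `allows("log_events", "APPEND")` is true while `allows("log_events", "DELETE")` is false, which matches the "past is immutable" rule for the LOG zone.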

Function Lens

The `list` vulnerability came from the way Theus used to reason: if a function only declares inputs and no outputs, it is fine to hand it a direct reference to the Python object for faster reading. The function could then freely call methods such as `.append()` and `.extend()`, and Theus would know nothing about it.

Switching to the CBS security model: instead of asking what the function will do, Theus now gives the function a lens. From the start, that lens determines what the function can see and do, based on the input/output contract of its @process.

If the data object is fully declared with input and output in the function's @process, and it belongs to Data, then the function can read and write validly. But if it's not declared, it's denied the ability to change the state of the data object.

If the object belongs not to the DATA Zone but to the LOG Zone, the lens grants at most append permission, never delete or replace.

The final capability of a Lens is calculated as: FinalLens = (ProcessRequest) INTERSECT (ZoneCeiling)
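
With capabilities encoded as bit flags, this intersection is a single bitwise AND. The sketch below assumes one bit per capability; the flag values and the `final_lens` name are illustrative and are not taken from the Theus source.

```python
from enum import IntFlag


class Cap(IntFlag):
    """One bit per capability; the four flags fit comfortably in one byte."""
    READ = 1
    APPEND = 2
    UPDATE = 4
    DELETE = 8


def final_lens(process_request: Cap, zone_ceiling: Cap) -> Cap:
    """FinalLens = ProcessRequest INTERSECT ZoneCeiling, as a bitwise AND."""
    return process_request & zone_ceiling


FULL = Cap.READ | Cap.APPEND | Cap.UPDATE | Cap.DELETE
LOG_CEILING = Cap.READ | Cap.APPEND

# A process asking for everything on a LOG object is capped at the zone ceiling.
log_lens = final_lens(FULL, LOG_CEILING)

# A process asking only to read a DATA object gets nothing beyond its request.
data_lens = final_lens(Cap.READ, FULL)
```

Neither side can widen the result: the request cannot exceed the zone ceiling, and the ceiling grants nothing the process did not ask for.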

How does Theus know which Policy to apply?

The "View-Policy" Model: Policy is not stored on the Data Object. It is stored on the ContextGuard (The Lens).

- Instantiation: When a Process starts, Python creates a `ContextGuard` specific to that Process ID.

- Injection: The `outputs` list is injected into this Guard.

- Overlay: When accessing `ctx.domain.logs`, the Guard calculates the intersection of the `logs` Zone Physics and the injected `outputs` License.

- Enforcement: It returns a `SupervisorProxy` configured with the specific `u8` capability bitmask for that interaction.

This allows Process A to see logs as AppendOnly while Process B (an Admin process) sees it as Mutable, all while accessing the same underlying memory in the Rust core of Theus.
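
The idea that policy lives on the view rather than on the data can be sketched in pure Python. This is a toy model under stated assumptions, not the Theus implementation: the class bodies, the `get` method, and the integer flags are hypothetical, and the Admin case is not modelled.

```python
READ, APPEND, UPDATE, DELETE = 1, 2, 4, 8  # bit flags, small enough for one byte

# Hypothetical ceiling table: the 'logs' field lives in the LOG zone.
ZONE_CEILING = {"logs": READ | APPEND}


class ListProxy:
    """Exposes only the operations allowed by its capability bitmask."""

    def __init__(self, target: list, caps: int):
        self._target = target
        self._caps = caps

    def _require(self, cap: int, operation: str) -> None:
        if not self._caps & cap:
            raise PermissionError(f"{operation} denied by lens")

    def __getitem__(self, index):
        self._require(READ, "read")
        return self._target[index]

    def append(self, item) -> None:
        self._require(APPEND, "append")
        self._target.append(item)

    def clear(self) -> None:
        self._require(DELETE, "delete")
        self._target.clear()


class ContextGuard:
    """Per-process view: holds the declared outputs, never the policy of the data."""

    def __init__(self, store: dict, outputs: dict):
        self._store = store
        self._outputs = outputs  # field name -> requested capabilities

    def get(self, field: str) -> ListProxy:
        requested = READ | self._outputs.get(field, 0)
        return ListProxy(self._store[field], requested & ZONE_CEILING[field])


store = {"logs": ["boot"]}
writer = ContextGuard(store, outputs={"logs": APPEND | DELETE})
reader = ContextGuard(store, outputs={})

writer.get("logs").append("started")  # requested and within the ceiling
```

Both guards wrap the same list in `store`, yet `reader.get("logs").append(...)` raises `PermissionError`, and so does `writer.get("logs").clear()`, because DELETE sits above the LOG ceiling. Unlike blocking individual list methods, the proxy only exposes what it explicitly allows.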

Data Correctness

After addressing the issue of data access leaks within a process or function, the next step is to control correctness and prevent semantic drift.

Writing numerous functions to check values and conditions, scattered throughout the system, is painful and a maintenance hazard. To solve this, Theus borrows an idea from industrial production management systems: the audit trail. The feature centers on a single `spec.yaml` configuration file containing all the criteria for the data objects that each @process function works on.

Each time the @process function is launched, it triggers the audit mechanism.

It first checks that every input variable is valid. If any is not, the audit fails and refuses execution.

After the calculation completes, the audit checks whether the output value is valid. If it is not, the new value is rejected and never committed. If it is, the value is committed through a transaction.
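
The check, run, check, commit sequence can be sketched as follows. This is a simplified illustration: `run_audited`, `AuditError`, and the rule dictionaries are hypothetical names, and the shallow working copy stands in for whatever transaction mechanism Theus actually uses.

```python
class AuditError(Exception):
    """Raised when an input or output violates its rule."""


def run_audited(func, state: dict, input_rules: dict, output_rules: dict) -> None:
    """Validate inputs, run func on a working copy, validate outputs, then commit."""
    for field, is_valid in input_rules.items():
        if not is_valid(state[field]):
            raise AuditError(f"input '{field}' rejected; process not executed")

    draft = dict(state)  # shallow working copy; the real state is untouched so far
    func(draft)

    for field, is_valid in output_rules.items():
        if not is_valid(draft[field]):
            raise AuditError(f"output '{field}' rejected; nothing committed")

    state.update(draft)  # commit all changes together


def add_product(s: dict) -> None:
    s["total_value"] += s["price"]


state = {"price": 50, "total_value": 100}
input_rules = {"price": lambda v: v >= 0}
output_rules = {"total_value": lambda v: v <= 1_000_000_000}

run_audited(add_product, state, input_rules, output_rules)
```

If `price` were negative, the function would never run; if the new `total_value` exceeded the limit, the draft would be discarded and `state` would keep its previous value.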

Audits are not just TRUE/FALSE. They have multiple trigger thresholds and multiple levels of response.

Reaction Levels

C: Alert and logging

B: Block, rejects the execution of that single process, but the entire process continues (business classification)

A: Abort, stops the entire process.

S: Stop, urgently stops the entire process. (equivalent to A but with business classification).

Trigger Thresholds

Minimum threshold: the number of violations tolerated before the audit reacts at all. Below it, the audit trail does not throw any errors.

Maximum threshold: the number of violations at which the highest configured response (A or B) is triggered.

Between these two thresholds, the audit only logs without throwing errors (level C).

Flaky reset_on_success: false

The audit trail lets you enable or disable error accumulation in order to proactively detect "flickering" errors. With accumulation enabled (reset_on_success: false), violations keep adding up until they reach the maximum trigger threshold. With it disabled (reset_on_success: true), the counter resets the next time the data satisfies spec.yaml.
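
The interaction between the two thresholds and reset_on_success is easiest to see on a flaky sequence. The counter below is a sketch under assumptions: `ViolationCounter`, `threshold_min`, and the "PASS" and "IGNORED" return values are hypothetical, and treating a count equal to a threshold as having reached it is my reading of the rules above.

```python
class ViolationCounter:
    """Counts violations for one rule and maps the count to a reaction."""

    def __init__(self, threshold_min: int, threshold_max: int, level: str,
                 reset_on_success: bool = True):
        self.threshold_min = threshold_min
        self.threshold_max = threshold_max
        self.level = level  # reaction at the maximum threshold, e.g. "B"
        self.reset_on_success = reset_on_success
        self.count = 0

    def record(self, ok: bool) -> str:
        if ok:
            if self.reset_on_success:
                self.count = 0
            return "PASS"
        self.count += 1
        if self.count >= self.threshold_max:
            return self.level  # highest configured response
        if self.count >= self.threshold_min:
            return "C"         # between the thresholds: log only
        return "IGNORED"       # below the minimum threshold


# A flickering check: fails every other run.
flaky = [False, True, False, True, False]

resetting = ViolationCounter(1, 3, "B", reset_on_success=True)
accumulating = ViolationCounter(1, 3, "B", reset_on_success=False)

with_reset = [resetting.record(ok) for ok in flaky]
without_reset = [accumulating.record(ok) for ok in flaky]
```

With the reset on, the flaky rule never gets past level C because each success clears the counter. With the reset off, the third failure reaches the maximum threshold and returns "B".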

spec.yaml will look like this:

```yaml
audit:
  threshold_max: 3          # Global Default: Block after 3 violations
  reset_on_success: true    # Reset counter on successful execution

process_recipes:
  add_product:
    inputs:
      - field: "price"
        min: 0
        level: "B"          # Block if violated
        max_threshold: 1    # Override: Block after 1 fail
    outputs:
      - field: "domain.total_value"
        max: 1000000000
        level: "S"          # Safety Stop
        message: "Danger! Overflow."
```

All control is centralized in a single spec.yaml file, reducing the burden on developers, eliminating the need for scattered manual validation logic and manual updates in multiple places.

In summary

Together, these mechanisms indirectly reduce semantic drift between versions running simultaneously. Instead of demanding compatibility across versions, and leaving behind huge technical debt and an inconsistent database along the entire timeline of a data object, Theus detects drift early and alerts developers so they can make corrections.

https://github.com/dohuyhoang93/theus

https://pypi.org/project/theus