TL;DR —

This study introduces a novel approach that uses unimodal training to enhance multimodal meme sentiment classifiers, significantly improving performance and efficiency in meme sentiment analysis.

Authors:

(1) Muzhaffar Hazman, University of Galway, Ireland;

(2) Susan McKeever, Technological University Dublin, Ireland;

(3) Josephine Griffith, University of Galway, Ireland.

Table of Links

Conclusion, Acknowledgments, and References

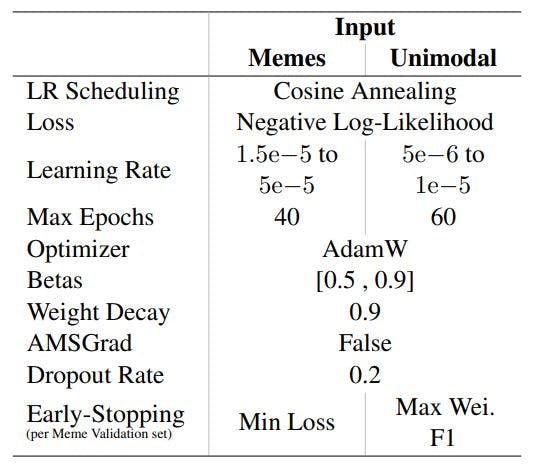

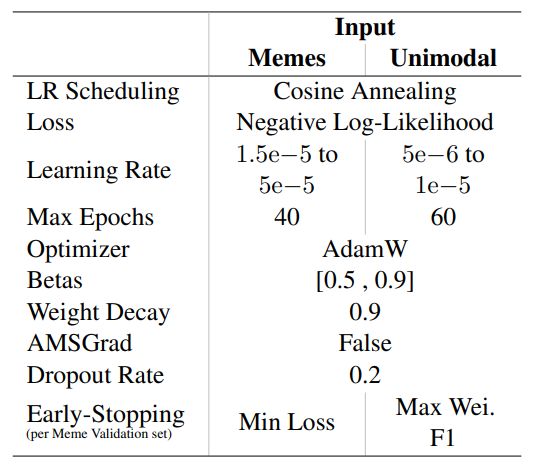

A Hyperparameters and Settings

E Contingency Table: Baseline vs. Text-STILT


This paper is available on arXiv under a CC 4.0 license.


