Table of Links

3 Model and 3.1 Associative memories

6 Empirical Results and 6.1 Empirical evaluation of the radius

6.3 Training Vanilla Transformers

7 Conclusion and Acknowledgments

Appendix B. Some Properties of the Energy Functions

Appendix C. Deferred Proofs from Section 5

Appendix D. Transformer Details: Using GPT-2 as an Example

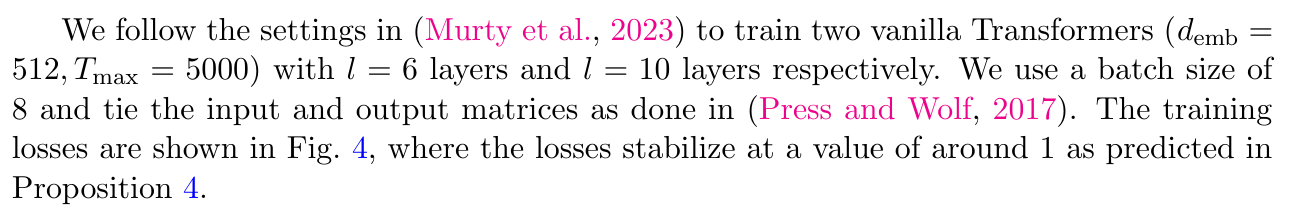

6.3 Training Vanilla Transformers

We next train vanilla transformer models using a small amount of high-quality data. The Question-Formation dataset, proposed by McCoy et al. (2020), consists of pairs of English sentences: a declarative sentence and its corresponding question form. The dataset contains D = 2M tokens. The sentences are context-free with a vocabulary size of 68 words, and the task is to convert declarative sentences into questions.
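To make the input/output format of the task concrete, the following is a minimal sketch of a declarative-to-question pair. The example sentence, the `AUXILIARIES` set, and the `form_question` helper are illustrative assumptions and are not taken from the dataset or from the paper's code. The naive rule used here (front the first auxiliary) only handles simple sentences in which the first auxiliary belongs to the main clause.

```python
# Hypothetical auxiliary set, for illustration only; not taken from the dataset.
AUXILIARIES = {"does", "do", "doesn't", "don't"}


def form_question(declarative: str) -> str:
    """Turn a simple declarative sentence into a question by fronting
    the first auxiliary. This is a toy rule, not the paper's model."""
    # Drop the trailing period and split on whitespace.
    tokens = declarative.rstrip(" .").split()
    for i, tok in enumerate(tokens):
        if tok in AUXILIARIES:
            # Move the auxiliary to the front and keep the remaining word order.
            rest = tokens[:i] + tokens[i + 1:]
            return " ".join([tok] + rest) + " ?"
    raise ValueError("no auxiliary found in sentence")


# One (declarative, question) pair in the spirit of the task.
source = "the newt does giggle ."
target = form_question(source)
print(source, "->", target)
```

A transformer trained on this task sees the declarative sentence as input and is asked to produce the question as output; the helper above only illustrates what such a pair looks like.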

Authors:

(1) Xueyan Niu, Theory Laboratory, Central Research Institute, 2012 Laboratories, Huawei Technologies Co., Ltd.;

(2) Bo Bai (baibo8@huawei.com);

(3) Lei Deng (deng.lei2@huawei.com);

(4) Wei Han (harvey.hanwei@huawei.com).

This paper is
