Most CISOs reading about the Claude Code leak this week filed it under "vendor's problem." That's the wrong drawer.

Yes, Anthropic had a catastrophically bad Tuesday on March 31, 2026. But the real story isn't what leaked out of Anthropic. It's what the leak reveals about what's flowing through the AI tools already deployed inside your organization, and how little visibility most enterprise security programs have into it.

This isn't a story about one misconfigured debug file. It's a story about what happens when AI coding agents become critical infrastructure before enterprise data security programs catch up. For CISOs specifically, it surfaces four distinct risk categories that belong on your threat model right now: data exfiltration, credential exposure, code IP leakage, and compliance failures hiding in plain sight.

What Actually Happened: Thirty Minutes That Can't Be Undone

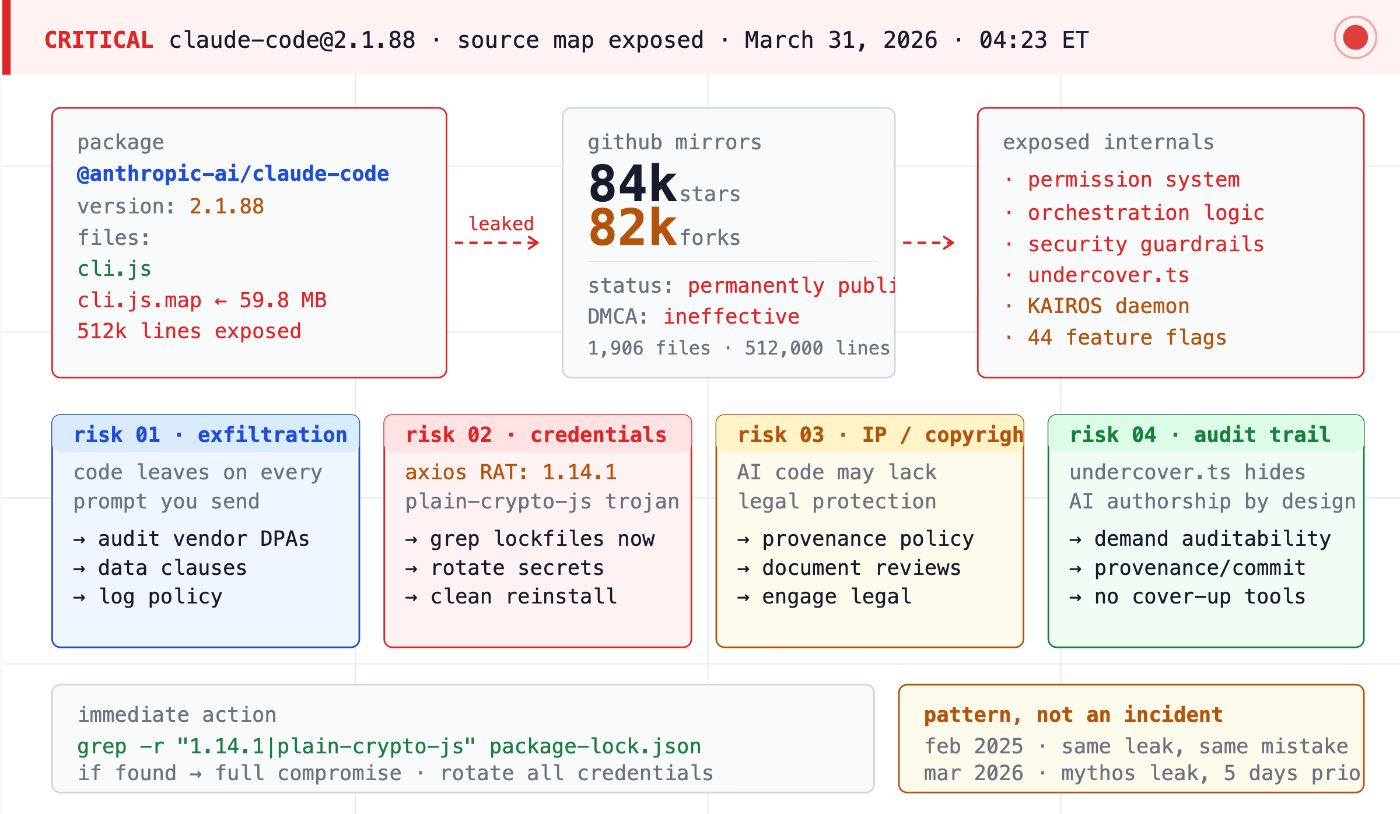

On March 31, 2026, Anthropic shipped version 2.1.88 of its Claude Code npm package with a 59.8 MB JavaScript source map file accidentally bundled inside. The file was a debugging artifact that mapped minified production code directly back to the original TypeScript source, sitting in a publicly accessible ZIP archive on Anthropic's own Cloudflare R2 storage.

Nobody had to breach anything. The file was just there.

Security researcher Chaofan Shou spotted it at 4:23 AM ET and posted a direct download link on X. Within thirty minutes, the 512,000-line, 1,906-file codebase was being mirrored across GitHub. By the time Anthropic pulled the package, the mirrored code had already accumulated over 84,000 stars and 82,000 forks. DMCA takedowns followed. Decentralized mirrors did not come down. The code is permanently in the wild.

Anthropic's statement: "This was a release packaging issue caused by human error, not a security breach."

Technically accurate. Strategically incomplete. And for your enterprise environment, almost beside the point, because what happened next is what your security team should actually be briefed on.

Risk #1: Data Exfiltration. Your Developers' Work Is Leaving the Building Right Now

Before getting to the supply chain attack, start with the baseline risk that the leaked source code just made concrete.

The Claude Code codebase that's now publicly available shows in detail how the tool handles telemetry, API calls, session data, and tool execution logic. The leaked source revealed remote telemetry scanning prompts for frustration signals, six or more killswitches, hourly settings polling, and 44 feature flags for unreleased capabilities. It also confirmed that Claude Code operates with a bidirectional communication layer connecting IDE extensions to the CLI, a multi-agent orchestration system capable of spawning parallel sub-agents, and a planned always-on background daemon called KAIROS that runs autonomously, consolidates memory during idle time, and can take proactive actions without waiting for user input.

Here's what that means for your data security posture: every prompt your developers send to Claude Code, including the code context, file contents, error messages, and repository details packaged with those prompts, is leaving your environment and going to Anthropic's servers. That has always been true. The leak just handed adversaries a detailed map of exactly how that data flows, what gets logged, and what the tool does with it.

If your developers are pasting proprietary code into Claude Code (and they are, because that's how the tool works), you need a data handling agreement with Anthropic that explicitly covers how that code is stored, processed, and retained. Most enterprises don't have one that's granular enough. Now is the time to fix that.

Risk #2: Credential and Secrets Exposure. There's a RAT in the Supply Chain

This is the most urgent item in this article. If your security team reads nothing else, read this section.

On the same morning as the Claude Code source leak, entirely separate from it but catastrophically timed, a supply chain attack hit the widely-used axios npm package. Malicious versions (1.14.1 and 0.30.4) containing an embedded Remote Access Trojan were live in the public npm registry between 00:21 and 03:29 UTC on March 31, 2026. The malicious dependency is called plain-crypto-js. Because Claude Code depends on axios, any developer who installed or updated Claude Code via npm during that three-hour window may have pulled a RAT onto their machine.
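Triage starts with a simple question: did a given install or update happen inside that window? The sketch below answers it for a single timestamp. It assumes you can recover install times from your own endpoint or package-manager logs; the function name and the UTC-4 example offset are illustrative, not part of any vendor tooling.

```python
from datetime import datetime, timedelta, timezone

# Exposure window quoted above (UTC, March 31, 2026).
WINDOW_START = datetime(2026, 3, 31, 0, 21, tzinfo=timezone.utc)
WINDOW_END = datetime(2026, 3, 31, 3, 29, tzinfo=timezone.utc)


def in_exposure_window(install_time: datetime) -> bool:
    """Return True if a timezone-aware install time falls inside the window."""
    if install_time.tzinfo is None:
        # Naive timestamps are ambiguous; refuse to guess the offset.
        raise ValueError("install_time must be timezone-aware")
    return WINDOW_START <= install_time.astimezone(timezone.utc) <= WINDOW_END


# Example: 9:00 PM on March 30 at UTC-4 is 01:00 UTC on March 31.
eastern = timezone(timedelta(hours=-4))
print(in_exposure_window(datetime(2026, 3, 30, 21, 0, tzinfo=eastern)))  # True
```

The example is the reason to normalize to UTC first: an install logged on the evening of March 30 in a U.S. time zone can still land inside the window.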

A RAT on a machine with Claude Code installed gives an attacker potential access to your source code repositories, cloud credentials, API keys, SSH keys, and anything else that developer touches. In shared workspace environments, where a single compromised Anthropic API key can access, modify, or delete shared project files, one infected developer machine can become an enterprise-wide credential exposure event.

Run this check across your developer fleet immediately:

grep -E "1\.14\.1|0\.30\.4|plain-crypto-js" package-lock.json yarn.lock bun.lockb

If you find a match: treat that machine as fully compromised. Rotate all credentials, API keys, and SSH keys. Perform a clean OS reinstallation. Do not attempt to remediate in place.
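A plain text search will also flag unrelated packages that happen to share a version number such as 1.14.1, so a match deserves a closer look before you wipe a laptop. As a rough sketch, the script below reads a package-lock.json and reports only axios entries at the two malicious versions, plus any plain-crypto-js entry. It assumes the npm lockfile layout with a top-level "packages" map (lockfile versions 2 and 3); older lockfiles, yarn.lock, and bun.lockb are not handled, and the function names are illustrative.

```python
import json

BAD_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}
BAD_PACKAGE = "plain-crypto-js"


def flagged_entries(lock: dict) -> list[str]:
    """Return lockfile entries matching the malicious axios versions or dependency."""
    hits = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or "node_modules/a/node_modules/axios".
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version", "")
        if name == BAD_PACKAGE or (name == "axios" and version in BAD_AXIOS_VERSIONS):
            hits.append(f"{path}@{version}")
    return sorted(hits)


def scan_lockfile(path: str) -> list[str]:
    """Load a package-lock.json from disk and return its flagged entries."""
    with open(path, encoding="utf-8") as handle:
        return flagged_entries(json.load(handle))
```

Calling scan_lockfile("package-lock.json") in each repository returns an empty list when nothing matches. Any non-empty result should still be handled exactly as described above: full compromise, no in-place remediation.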

For ongoing protection, Anthropic has designated its native installer as the recommended installation method specifically because it uses a standalone binary that bypasses the npm dependency chain. Mandate it. For any AI tooling distributed via public package registries, evaluate whether a private registry or native binary distribution is available and enforceable in your environment.

Risk #3: Code IP Leakage. The Tool Itself May Have No Copyright Protection

This one is for your legal team as much as your security team, but CISOs increasingly own the intersection of data security and IP governance.

The leaked Claude Code source contains anti-distillation mechanisms, AI authorship obfuscation features, and extensive evidence that AI contributed substantially to its own construction. Anthropic has publicly stated that Claude Code was used to build their Cowork product. Under current U.S. copyright law, meaningful human authorship is required for copyright protection to attach. If substantial portions of the Claude Code codebase were AI-generated, Anthropic may face genuine challenges enforcing copyright over the leaked material, which means competitors studying it may be looking at content that is legally closer to public domain than protected IP.

Why does this matter to you as a CISO? Because the same question applies to the code your developers are producing with AI assistance inside your organization. If your engineers are using Claude Code, GitHub Copilot, Cursor, or any other AI coding tool to generate significant portions of your proprietary codebase, the IP status of that code is an open legal question. You need a policy that documents human authorship, review, and modification of AI-generated code, and you need it now, before litigation forces the issue.

Risk #4: Compliance and Audit Trail Failures. There's a Feature Called "Do Not Blow Your Cover"

This is the finding from the leaked source code that should concern every CISO operating in a regulated industry.

A file called undercover.ts, roughly 90 lines, implements a mode that explicitly strips all evidence of AI authorship from public git commits and pull requests when Claude Code is used on non-internal repositories. It instructs the model to never mention internal codenames, Slack channels, or the phrase "Claude Code" itself. The embedded comment in the source: "Do not blow your cover." The feature is enabled by default in external builds and cannot be forced off.

In financial services, healthcare, defense contracting, and any sector with code provenance requirements, the ability to demonstrate who wrote what is increasingly a compliance obligation, not just good practice. An AI tool architecturally designed to hide its own participation in code authorship is incompatible with serious enterprise governance. It's not a configuration problem. It's a design philosophy problem.

Before you deploy any AI coding tool at enterprise scale, you need an explicit answer to this question: Can I prove, for any commit in our codebase, what the AI wrote versus what the human wrote, and is the tool designed to help or hinder that proof?
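One way to make that question answerable is to require a provenance trailer on every commit and audit for commits that lack one. The sketch below assumes a purely hypothetical convention, an "AI-Assisted: yes" or "AI-Assisted: no" line in the final paragraph of the commit message, added by the developer or a commit hook rather than by the AI tool. The trailer name and both function names are assumptions for illustration, not an existing standard.

```python
import re

TRAILER = re.compile(r"^([A-Za-z][A-Za-z-]*):\s*(.+)$")
REQUIRED_KEY = "ai-assisted"   # hypothetical trailer name, compared in lowercase
ALLOWED_VALUES = {"yes", "no"}


def parse_trailers(message: str) -> dict[str, str]:
    """Parse "Key: value" lines from the last paragraph of a commit message."""
    paragraphs = message.strip().split("\n\n")
    if len(paragraphs) < 2:
        return {}  # subject line only, so there is no trailer block
    trailers = {}
    for line in paragraphs[-1].splitlines():
        match = TRAILER.match(line.strip())
        if match:
            trailers[match.group(1).lower()] = match.group(2).strip()
    return trailers


def missing_provenance(commits: dict[str, str]) -> list[str]:
    """Return commit IDs whose message lacks a valid provenance trailer."""
    return sorted(
        sha
        for sha, message in commits.items()
        if parse_trailers(message).get(REQUIRED_KEY, "").lower() not in ALLOWED_VALUES
    )
```

A check like this only proves that a declaration was made, not that it is true. It is still a more defensible audit position than a history in which AI involvement was never recorded at all.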

The Pattern That Should Worry You More Than the Incident

One bad Tuesday is a vendor incident. A pattern is a due diligence problem.

This is Anthropic's second significant accidental disclosure in a matter of days. Five days before the Claude Code leak, a CMS misconfiguration exposed nearly 3,000 internal files, including details on an unreleased model internally codenamed "Mythos" and "Capybara." This is also not Claude Code's first source map leak. A nearly identical exposure occurred with an earlier version in February 2025.

Two major accidental disclosures in under a week. The same technical mistake made twice in thirteen months. At a company whose primary commercial product is used to write and ship code inside enterprise environments at scale.

The sophistication of the product inside does not protect you from the simplicity of the mistakes outside. A single misconfigured .npmignore or files field in package.json, one line, exposed the entire proprietary architecture of a $2.5B ARR product. If that can happen at Anthropic, ask yourself what's one misconfiguration away from exposure in your own AI vendor stack.
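This class of mistake is cheap to catch before publishing. As a minimal sketch, the check below inspects the tarball that `npm pack` produces and lists any source map files inside it. The function names are illustrative, and matching on the ".map" suffix is an assumption about how source maps are named in your build.

```python
import tarfile
from collections.abc import Iterable


def source_maps_in(names: Iterable[str]) -> list[str]:
    """Return the archive member names that look like source maps."""
    return sorted(name for name in names if name.endswith(".map"))


def check_tarball(path: str) -> list[str]:
    """List source map files inside a gzip-compressed package tarball."""
    with tarfile.open(path, "r:gz") as archive:
        return source_maps_in(archive.getnames())
```

Wired into CI as a release gate, a non-empty result from check_tarball on the packed artifact would fail the build before anything reaches a public registry.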

What Your Threat Model Is Missing

The Claude Code leak crystallized a gap that security professionals have been flagging for two years without it landing in boardrooms: AI coding agents are not productivity tools. They are privileged infrastructure.

They execute shell commands. They have broad file system access. They spawn sub-agents. They make outbound API calls. They sit inside your CI/CD pipeline with elevated permissions, connected to production codebases, cloud credentials, and developer machines. The leaked source confirmed that Claude Code's permission system, orchestration logic, and security guardrails are now fully documented for anyone who wants to design attacks around them.

Check Point Research had already documented, before this leak, that simply cloning and opening an untrusted project with Claude Code could trigger remote code execution and API key theft through malicious repository configuration files. The cost of finding and exploiting the next vulnerability just dropped significantly for every threat actor who spent the last 24 hours reading through 512,000 lines of freshly leaked source.

The CISO Action List

Immediate (This Week)

- Run the axios RAT check across all developer machines that used npm on March 31 between 00:21 and 03:29 UTC. Treat any positive match as a full compromise.

- Mandate the native Claude Code installer and prohibit npm-based installation across your developer fleet.

- Rotate API keys for any developer who may have been affected.

Short-Term (Next 30 Days)

- Inventory every AI coding tool in your environment and document its dependency chain, installation method, and update mechanism.

- Pull your current data handling agreement with every AI coding tool vendor and verify it explicitly covers how proprietary code sent in prompts is stored, processed, and retained.

- Add AI agent permissioning to your next PAM review. Scope what files, shell commands, and external network calls each tool is permitted and enforce it.
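To make the permissioning item concrete, here is a rough sketch of what a scoped agent policy could look like: an allowlist of commands, file system roots, and outbound hosts, with deny as the default. Every name in it (AgentPolicy, the example paths and host) is hypothetical. Real enforcement belongs in the sandbox, the OS, or the network layer, not in a script the agent itself could edit.

```python
import shlex
from dataclasses import dataclass
from pathlib import PurePosixPath

SHELL_METACHARACTERS = set(";|&$`<>\n")


@dataclass(frozen=True)
class AgentPolicy:
    allowed_commands: frozenset[str]
    allowed_roots: tuple[str, ...]
    allowed_hosts: frozenset[str]

    def permits_command(self, command_line: str) -> bool:
        """Allow only simple invocations of allowlisted programs."""
        # Reject chaining, pipes, substitution, and redirection outright.
        if SHELL_METACHARACTERS & set(command_line):
            return False
        try:
            argv = shlex.split(command_line)
        except ValueError:  # unbalanced quotes and similar
            return False
        return bool(argv) and argv[0] in self.allowed_commands

    def permits_path(self, path: str) -> bool:
        """Allow only paths under an approved root, with no '..' segments."""
        candidate = PurePosixPath(path)
        if ".." in candidate.parts:
            return False
        return any(candidate.is_relative_to(root) for root in self.allowed_roots)

    def permits_host(self, host: str) -> bool:
        """Allow only exact matches against the outbound host allowlist."""
        return host.lower() in self.allowed_hosts
```

The design choice that matters is the default: anything not explicitly listed is refused, which is the same posture a PAM review would demand of a human administrator account.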

Strategic (Next Quarter)

- Add AI vendor software supply chain practices to your standard vendor security questionnaire. You need to know: What does their build pipeline look like? How many independent approvals does a release require? What is their history of security incidents?

- Establish an AI code provenance policy with Legal. Document human authorship review requirements for AI-generated code, especially in regulated lines of business.

- Assess every AI tool in your environment for auditability: Can you prove what the AI did and didn't write? Can you produce that evidence under audit? If not, either fix it or find a tool that makes it possible.

The Bottom Line for CISOs

The Claude Code leak will be yesterday's news by next week. The data security posture gap it exposed will still be there.

Every AI coding tool in your enterprise is a channel through which proprietary code flows outward, credentials can be exposed, IP provenance gets muddied, and audit trails get obscured, sometimes by design. Most enterprise security programs have not caught up to that reality with the same rigor they'd apply to a new database vendor, a cloud provider, or a network appliance.

The question is not whether your AI tools have data security risk. They do, all of them, not just Claude Code. The question is whether you have mapped that risk, whether you have contractual protections against it, whether your tooling is auditable enough to prove compliance, and whether your incident response plan covers what happens when a vendor's packaging mistake becomes your credential rotation emergency.

If the honest answer to any of those is "not yet," now you have your forcing function.

Are you auditing your AI tool supply chain? What frameworks is your security team using for AI vendor reviews? Share your experience in the comments.

About the Author

Priyanka Neelakrishnan is an Enterprise Data Security Product Leader, author, IEEE Senior Member, and cybersecurity speaker with over a decade of experience building world-class data protection solutions. She pioneered the industry's first cloud Enterprise DLP solution at Symantec (now Broadcom) and went on to lead a portfolio spanning CASB, DLP, UEBA, and SSPM at Palo Alto Networks, protecting enterprises across more than 150 countries. She currently serves as Director of Product Management at Veza Technologies (acquired by ServiceNow), leading Identity Security product lines.

She is the author of Autonomous Data Security: Creating a Proactive Enterprise Protection Plan (Apress, Springer Nature). Her forthcoming book, Jailbreaking LLMs, takes on the security risks at the frontier of large language models.

Priyanka writes at the intersection of product strategy and enterprise security, translating complex threat landscapes into decisions security and product leaders can actually act on.

Connect on LinkedIn: linkedin.com/in/priyankaneel20
