Web applications that offer URL shortening services represent a class of latency-sensitive systems frequently used as benchmarks for evaluating backend performance. While micro-benchmarking with trivial applications such as Hello World is common, such approaches lack the complexity of real-world use cases involving routing logic, request parsing, data validation, and interaction with persistent storage. To address this limitation, this article evaluates the performance of a minimal yet realistic URL shortener service implemented in two widely used backend frameworks: Node.js with Express and Java with Spring Boot. The popular notion is that Java is faster than Node.js or, put another way, that Node.js, being interpreted, is slower than a compiled Java application. This article runs a real-world application to test that notion.

Node.js operates on a single-threaded event loop model, limiting its default execution to a single CPU core unless explicitly configured for clustering. In contrast, Spring Boot leverages the Java Virtual Machine (JVM), which natively supports multithreading and is capable of utilizing all available CPU cores. This fundamental architectural difference has led to a general perception that Java-based applications outperform JavaScript-based ones in multi-core environments.
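To make the single-threaded model concrete, here is a minimal sketch (not taken from the benchmarked applications) showing that a timer callback cannot run while synchronous, CPU-bound code occupies the one JavaScript thread. The 50 ms busy loop is an arbitrary stand-in for CPU-heavy work.

```javascript
// Record the order in which things happen on the single JavaScript thread.
const events = [];

// Ask the event loop to run this callback as soon as possible.
setTimeout(() => events.push("timer callback"), 0);

// Simulate CPU-bound work that keeps the thread busy for about 50 ms.
const start = Date.now();
while (Date.now() - start < 50) {
  // busy wait
}
events.push("cpu work done");

// The timer callback has not run yet: it has to wait until the
// current synchronous code has finished.
console.log(events); // [ 'cpu work done' ]
```

In a server, the same effect means one slow synchronous handler delays every other request handled by that process, which is why spreading work across several processes matters on a multi-core machine.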

The primary objective of this study is to assess whether this perception holds true for a real-world application. The experiment proceeds in two phases. In the first phase, a single-instance Express application is compared with a standard Spring Boot application. In the second phase, the Express application is clustered across four processes to utilize multiple cores, and its performance is again compared to that of the Spring Boot application. All implementations perform identical operations, including generating short URLs, resolving them, and performing basic validation and I/O. The tests are conducted under controlled conditions with consistent workloads and measured using throughput and latency as primary metrics.

This article aims to provide a comparative analysis based on empirical data to guide developers and system architects in selecting appropriate backend technologies for high-performance web services. Let’s get started.

Test setup

All benchmarks were conducted on a MacBook Pro with an Apple M2 chip, featuring 12 CPU cores and 16 GB of RAM.

To ensure high-quality load generation, the widely recognized Bombardier tool was used. The tool was customized to send a random and unique URL in the request body, formatted as a JSON string.

Bombardier was configured to simulate a constant load of 100 concurrent connections, completing a total of 1M requests per test.
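The customized load generator itself is not shown in this article. As a rough illustration only, a request body carrying a random and unique URL could be built as below. The field name `srcUrl`, the `example.com` host, and the helper name `makeRequestBody` are assumptions made for this sketch, not details of the actual tool.

```javascript
// Build a JSON request body containing a URL that is unique per request.
// The sequence number guarantees uniqueness; the random token adds variety.
function makeRequestBody(seq) {
  const token = Math.random().toString(36).slice(2, 10);
  // "srcUrl" is a hypothetical field name used only in this sketch.
  return JSON.stringify({ srcUrl: `https://example.com/page/${seq}-${token}` });
}

console.log(makeRequestBody(1));
console.log(makeRequestBody(2));
```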

The following software versions were used, all up to date at the time of testing:

- Node.js: v24.4.1, Express: v5

- Java: v21.0.8, Spring Boot: v3.5.3

All tests interacted with a PostgreSQL database, a widely adopted open-source object-relational database system with over 35 years of development. PostgreSQL is well-known for its reliability, extensive feature set, and strong performance.

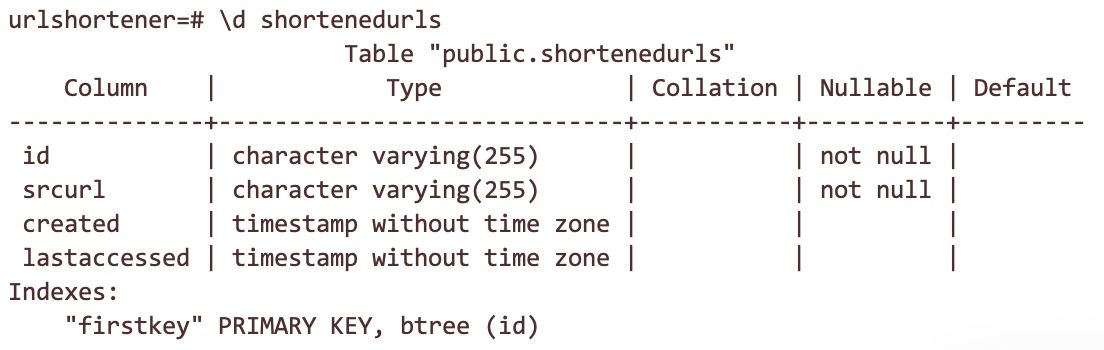

A single table named shortenedurls was used for all database operations. This table stores both the original and shortened URLs. Its structure is as follows:

Before each test, the table is truncated so that a growing index does not slow down the applications that run later in the test cycle.

Applications

Each application uses a distinct web framework, resulting in slight differences in application implementation. However, the core functionality remains consistent. A URL shortener application has been chosen for benchmarking, as it represents a real-world use case while remaining simple enough for controlled testing.

The application evaluates performance across the following operations:

- JSON parsing from the incoming request

- Request validation

- Database read via an ORM

- JSON response construction
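The four steps above can be sketched as a single function. This is a simplified illustration, not the code from the gists below: an in-memory `Map` stands in for the ORM and PostgreSQL, and the names `handleShorten`, `srcUrl`, and `shortenedUrl` are assumptions made for this example.

```javascript
// In-memory stand-in for the shortenedurls table (assumption: the real
// applications go through an ORM to PostgreSQL instead).
const store = new Map();

function handleShorten(rawBody, baseUrl = "http://localhost:3000") {
  // 1. JSON parsing from the incoming request
  let payload;
  try {
    payload = JSON.parse(rawBody);
  } catch {
    return { status: 400, body: JSON.stringify({ error: "invalid JSON" }) };
  }

  // 2. Request validation: the body must carry an absolute http(s) URL
  let parsed;
  try {
    parsed = new URL(payload?.srcUrl);
  } catch {
    return { status: 400, body: JSON.stringify({ error: "invalid URL" }) };
  }
  if (parsed.protocol !== "http:" && parsed.protocol !== "https:") {
    return { status: 400, body: JSON.stringify({ error: "unsupported protocol" }) };
  }

  // 3. Storage access (a random ID is good enough for a sketch; a real
  //    service would also have to handle ID collisions)
  const id = Math.random().toString(36).slice(2, 10);
  store.set(id, parsed.href);

  // 4. JSON response construction
  const body = JSON.stringify({ srcUrl: parsed.href, shortenedUrl: `${baseUrl}/${id}` });
  return { status: 200, body };
}

console.log(handleShorten('{"srcUrl":"https://example.com/a"}').status); // 200
console.log(handleShorten("not json").status); // 400
```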

The implementation for each application is detailed below:

Node.js

https://gist.github.com/mayankchoubey1/e299cd8dbd2147a9cb7fe401381d91ea?embedable=true

Spring Boot

https://gist.github.com/mayankchoubey1/256d27253be534181432e75cf0476ccc?embedable=true

Readings

Each test is conducted with a total of 1 million requests. The following performance metrics are recorded for each test run:

- Total time to complete 1 million requests

- Requests per second (RPS)

- Mean latency

- Median latency

- Maximum latency

- CPU usage

- Memory usage
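Bombardier reports the throughput and latency figures itself. For readers who want to see how such figures relate to raw data, the sketch below derives them from a list of per-request latencies. The function name `summarize` and the sample values are made up for illustration and are not measurements from this benchmark.

```javascript
// Derive throughput and latency statistics from per-request latencies.
function summarize(latenciesMs, totalSeconds) {
  const sorted = [...latenciesMs].sort((a, b) => a - b);
  const n = sorted.length;
  const mean = sorted.reduce((sum, v) => sum + v, 0) / n;
  const mid = Math.floor(n / 2);
  // Median: middle value, or the average of the two middle values.
  const median = n % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
  return {
    rps: n / totalSeconds,
    meanMs: mean,
    medianMs: median,
    maxMs: sorted[n - 1],
  };
}

// Illustrative input: five requests completed in half a second.
console.log(summarize([4, 8, 6, 10, 12], 0.5));
// { rps: 10, meanMs: 8, medianMs: 8, maxMs: 12 }
```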

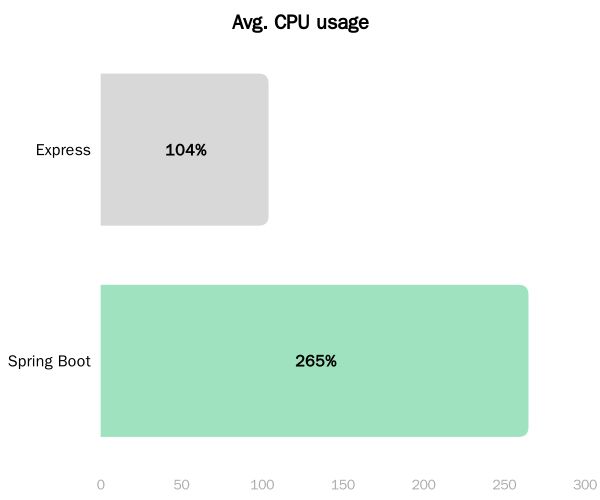

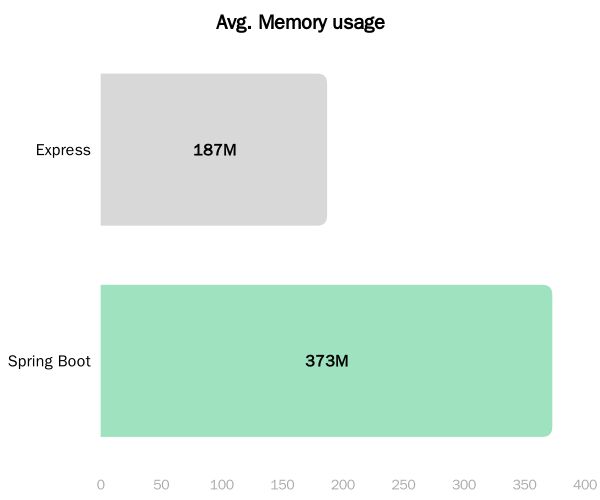

It is important to note that resource consumption—specifically CPU and memory usage—is as critical as raw performance. A faster runtime that incurs significantly higher resource costs may not provide practical value in real-world scenarios.

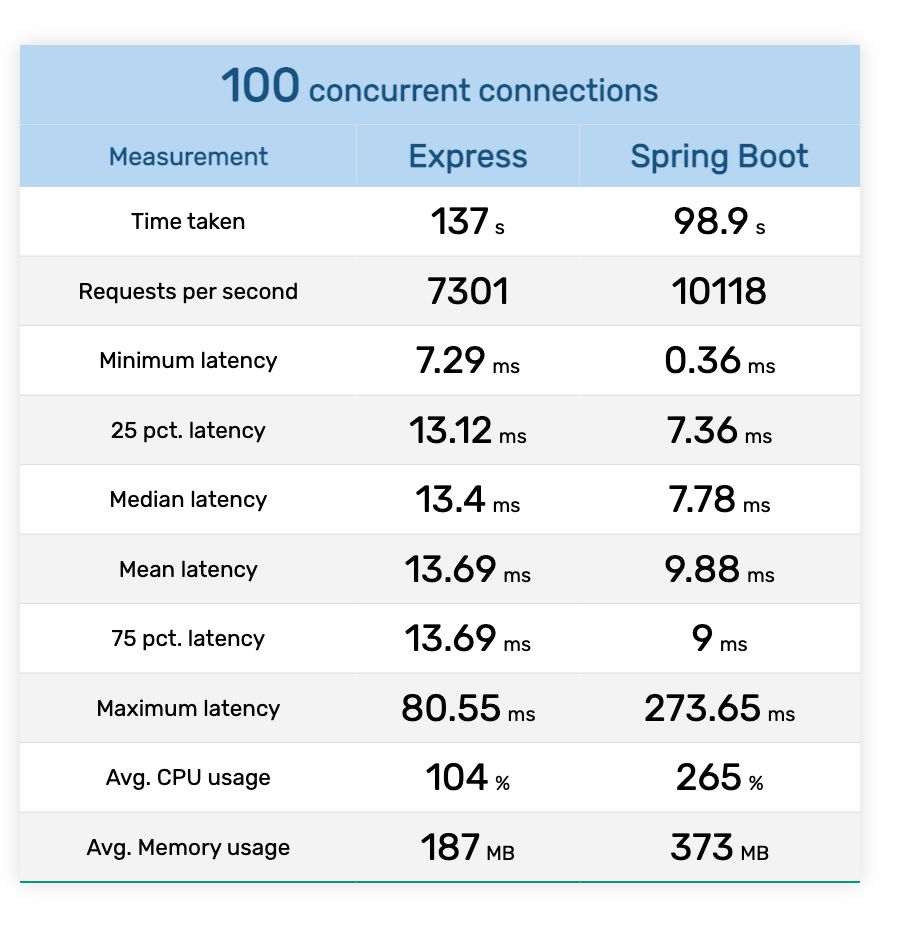

Test phase 1 - Single Application Testing

In the first phase, the benchmark is run against a single instance of the Express app. Spring Boot already uses all available cores, so the Spring Boot application itself is the same in both phases.

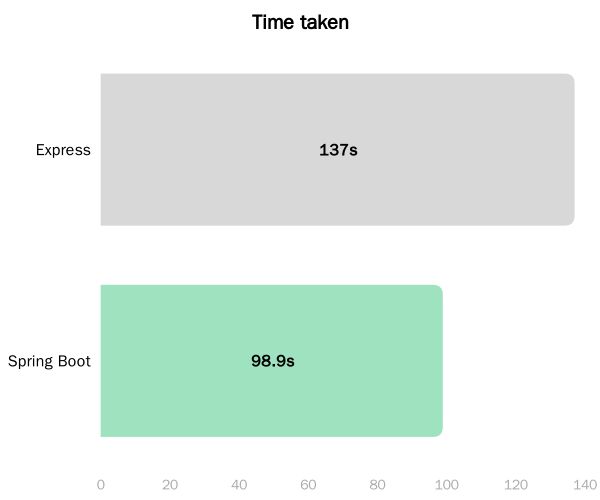

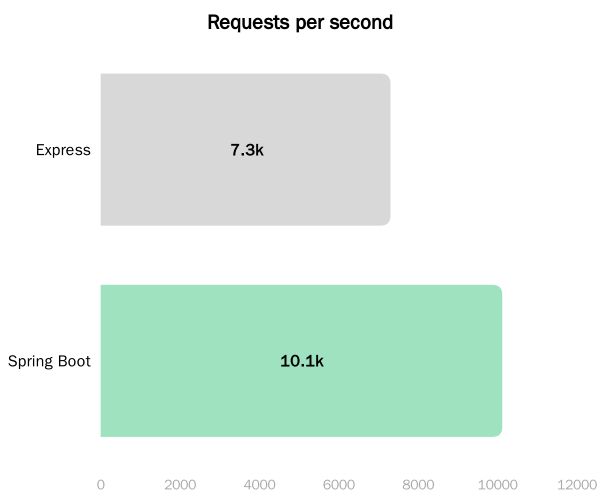

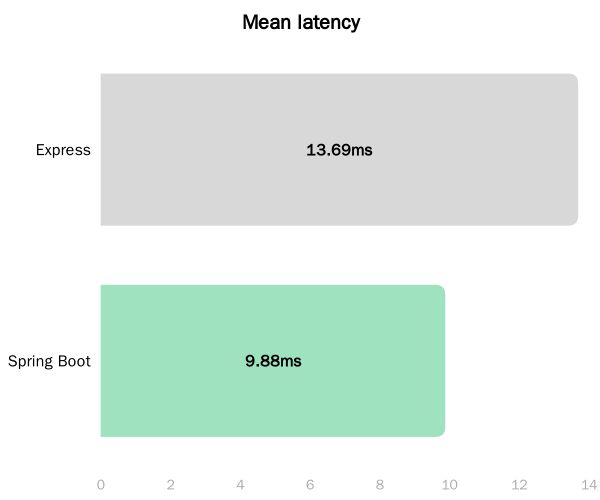

The results in chart form are as follows:

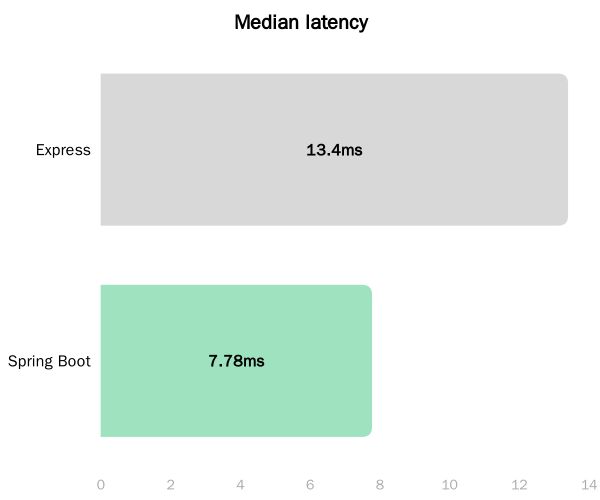

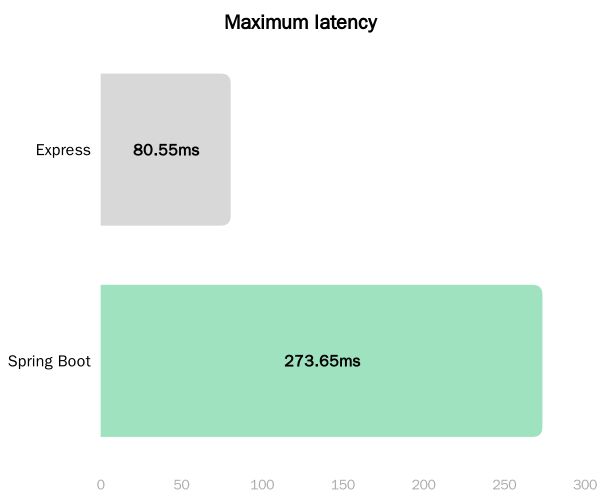

In the single-instance configuration, the Spring Boot application demonstrated higher throughput than its Express counterpart. Specifically, Spring Boot achieved approximately 10K RPS, while Express sustained around 7.3K RPS. Although the absolute difference is moderate, it is non-negligible in performance-critical environments. It is also observed that the Spring Boot application incurs higher resource consumption, exhibiting increased CPU utilization and memory footprint relative to the Express implementation.
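To put the gap in relative terms, the approximate figures quoted above can be plugged into a small helper. The helper name `percentHigher` is just for this sketch, and the inputs are the rounded values from the text, so the result is approximate as well.

```javascript
// How much higher (in percent) is throughput a than throughput b?
function percentHigher(a, b) {
  return ((a - b) / b) * 100;
}

// Spring Boot (~10K RPS) versus single-instance Express (~7.3K RPS)
console.log(percentHigher(10000, 7300).toFixed(1) + "%"); // 37.0%
```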

It is important to note that the Express application, by default, operates on a single CPU core due to Node.js's single-threaded event loop architecture. Consequently, the comparison lacks parity in CPU resource allocation. This limitation is addressed in the subsequent phase through multi-process clustering of the Express application.

Test phase 2 - Clustered Application Testing

In the second phase, the benchmark is run against a clustered Express app. As mentioned earlier, Spring Boot already uses all cores, so the Spring Boot application itself is the same in both phases.

However, there is a subtle configuration difference. The database pool size for the Spring Boot application is 10, while the clustered Express application uses four times that pool size (40 connections in total). This would again make the test unfair, so the Spring Boot pool size is increased to 40.

Modified application.properties:

    spring.datasource.hikari.maximum-pool-size=40
    spring.datasource.hikari.minimum-idle=40

The pool size for the Express application is kept at 10 per process. In cluster mode, the total rises to 40, as the cluster module forks four workers. The Express application remains the same, except for the main module:

    import cluster from "node:cluster";
    import express from "express";
    import { handleRequest } from "./expressController.mjs";

    // Number of worker processes to fork
    const numClusterWorkers = 4;

    if (cluster.isPrimary) {
      // Primary process: fork the workers and log any worker that exits
      for (let i = 0; i < numClusterWorkers; i++) {
        cluster.fork();
      }
      cluster.on("exit", (worker, code, signal) =>
        console.log(`worker ${worker.process.pid} died`),
      );
    } else {
      // Worker process: run the same Express app; the workers share port 3000
      const app = express();
      app.use(express.json());
      app.post("/shorten", handleRequest);
      app.listen(3000);
    }

One final point: the resource usage of the clustered Express application is cumulative across all the workers. In other words, the reported CPU usage is the total of all four workers, and the reported memory usage is also the total of all four workers.
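As a sketch of what "cumulative" means here, per-worker samples can be summed as follows. The sample values and the helper name `aggregateUsage` are hypothetical and serve only to show the arithmetic. They are not measurements from this benchmark.

```javascript
// Hypothetical per-worker samples (for example, read from a process monitor).
const workerSamples = [
  { pid: 101, cpuPercent: 90, memoryMb: 150 },
  { pid: 102, cpuPercent: 85, memoryMb: 160 },
  { pid: 103, cpuPercent: 95, memoryMb: 155 },
  { pid: 104, cpuPercent: 80, memoryMb: 145 },
];

// Sum CPU and memory across all workers to get the cluster-wide usage.
function aggregateUsage(samples) {
  return samples.reduce(
    (total, s) => ({
      cpuPercent: total.cpuPercent + s.cpuPercent,
      memoryMb: total.memoryMb + s.memoryMb,
    }),
    { cpuPercent: 0, memoryMb: 0 },
  );
}

console.log(aggregateUsage(workerSamples)); // { cpuPercent: 350, memoryMb: 610 }
```

Because each worker can use up to one full core, the summed CPU figure of a four-worker cluster can approach 400%.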

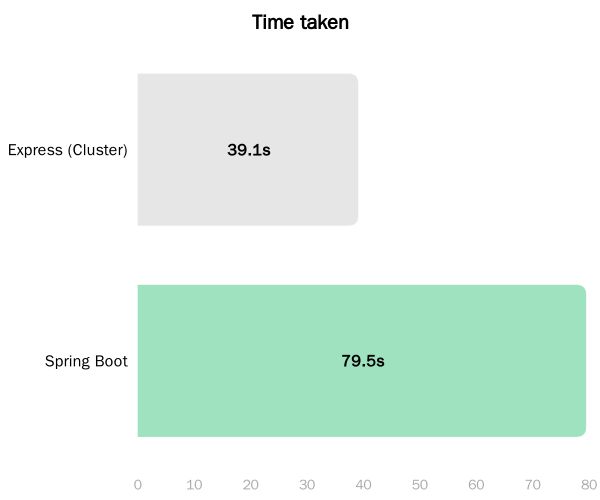

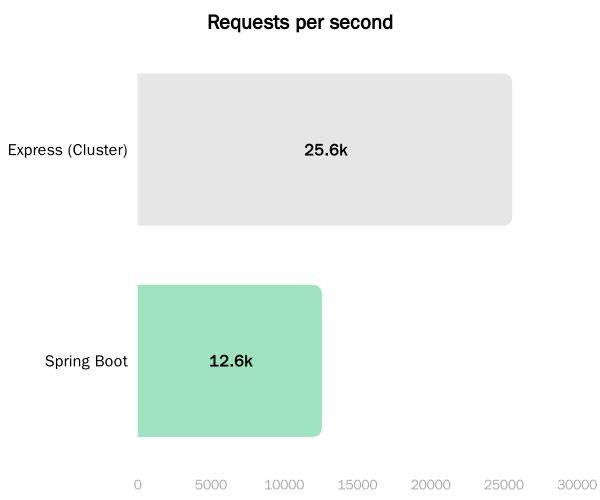

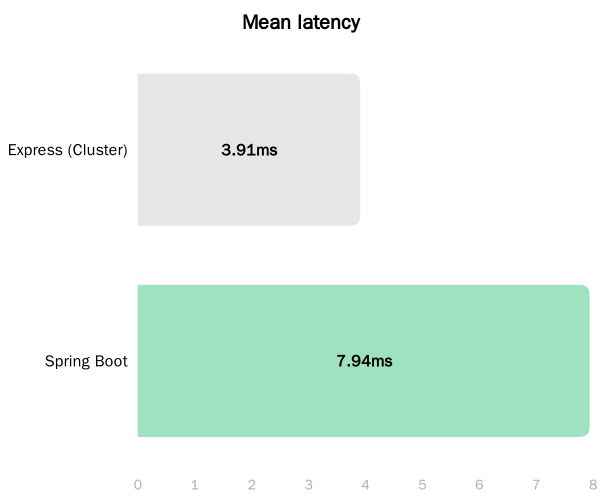

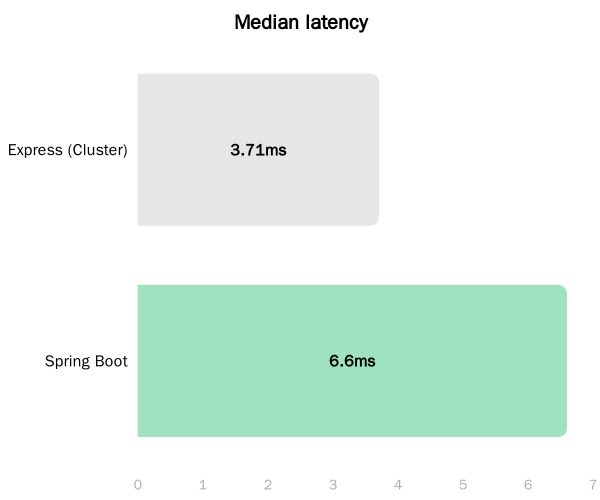

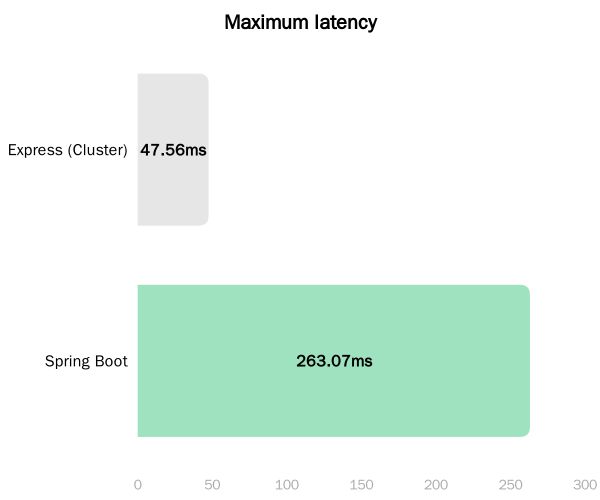

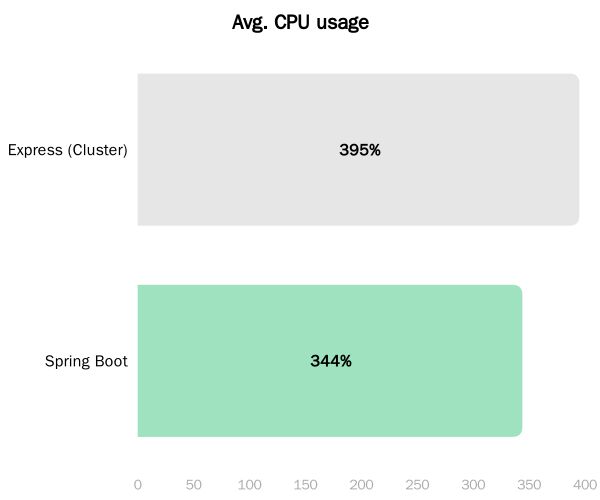

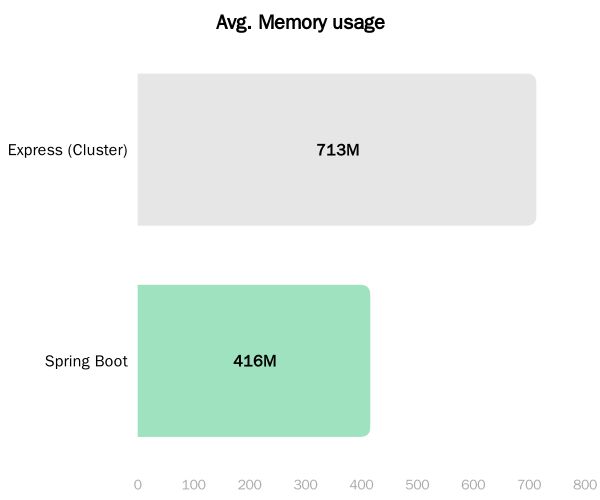

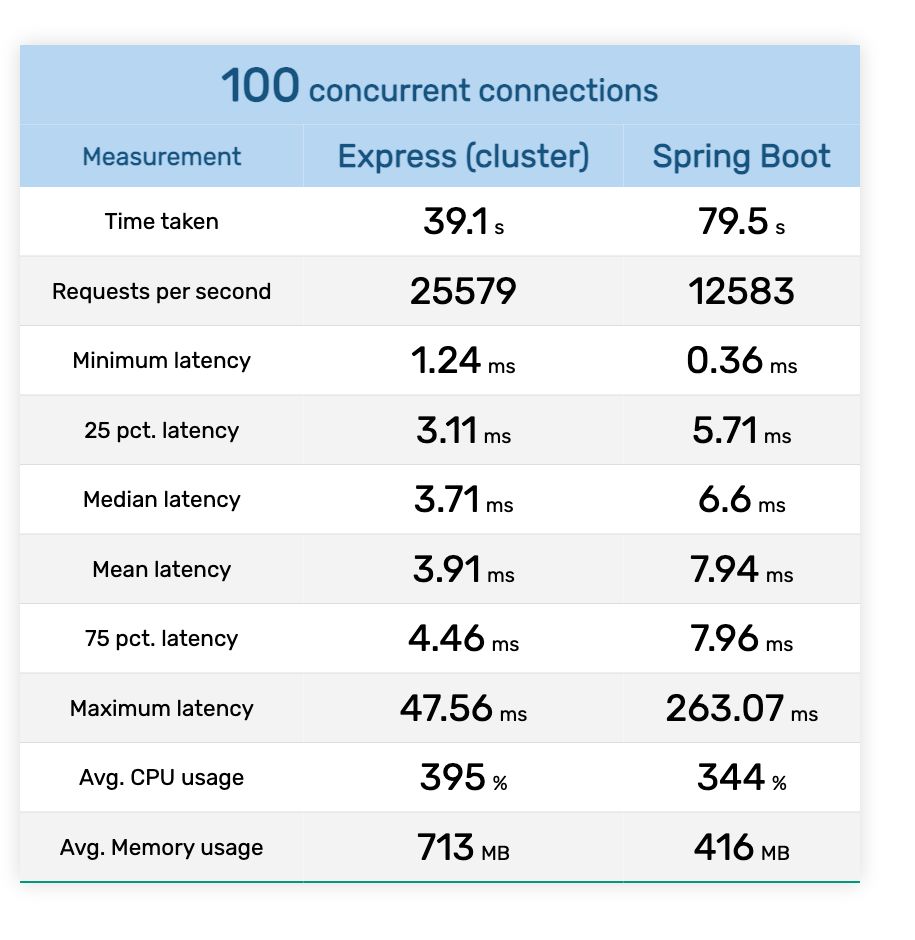

The results are as follows:

Now the results are interesting. The clustered Express application outperforms Spring Boot by a factor of about 2. In other words, the Express application delivers roughly twice the RPS (25K) of Spring Boot (12K).

However, the increase in performance didn’t come free. The CPU usage of the Express cluster is ~400%, while its memory usage is ~700 MB.

In cluster mode, Express offers much better performance but uses a significant amount of resources.
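One way to read these numbers is to ask how well Express scaled from one process to four. The sketch below uses the rounded figures quoted in this article (about 7.3K RPS for a single instance and about 25K RPS for the cluster), so the result is an approximation. The helper name `scaling` is an assumption for this example.

```javascript
// Speedup of the clustered run over the single-process run, and the
// fraction of ideal linear scaling achieved per worker.
function scaling(singleRps, clusteredRps, workers) {
  const speedup = clusteredRps / singleRps;
  return { speedup, efficiency: speedup / workers };
}

const { speedup, efficiency } = scaling(7300, 25000, 4);
console.log(speedup.toFixed(2), efficiency.toFixed(2)); // 3.42 0.86
```

A speedup of about 3.4x across four workers is fairly close to linear, which is in line with the ~400% CPU usage noted above.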

Summary

A tabular summary of the runs is as follows:

Single process run

Clustered run

Thanks for reading!
