What is Edge Computing?

Before we can dive into edge computing, we need to understand how we got here. The history of computing has been marked by a steady shift from centralized systems to more distributed architectures. We’ve gone from mainframes to desktops to laptops; in each iteration, computing power was brought closer to the end user. As the internet became more robust, computing was decentralized even further, enabling networked communication and data sharing at a global scale.

A major milestone was the emergence and dominance of cloud computing, which offers scalable, on-demand resources virtually anywhere in the world. Cloud computing has revolutionized how individuals and businesses consume computing power, storage, and managed services. But as the number of connected devices grows, driven largely by the Internet of Things (IoT), new challenges arise that traditional cloud computing can’t address on its own.

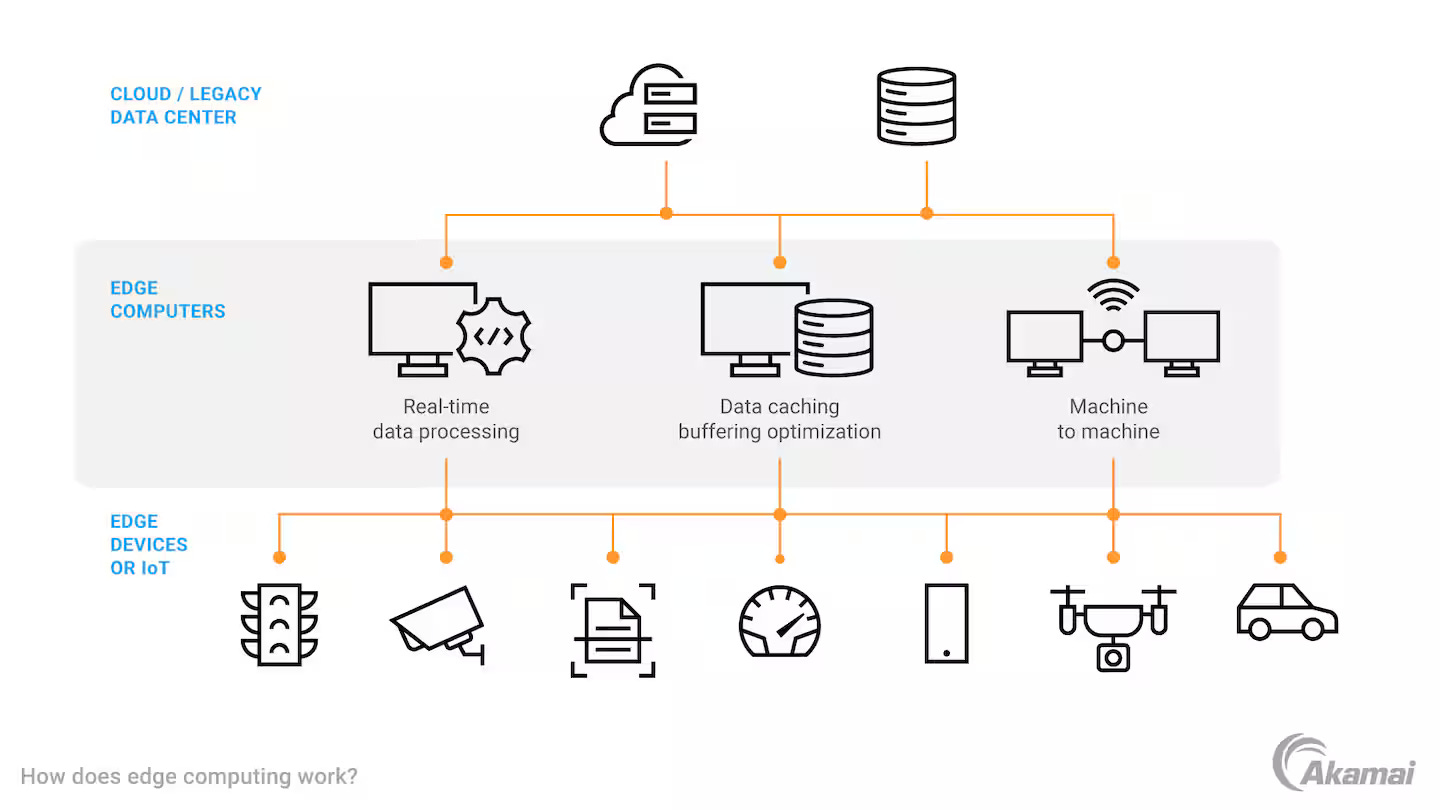

This is where edge computing comes into play. Edge computing is a computing paradigm that brings computation and data storage closer to the source of data. Instead of sending all data to a central location for processing (think cloud computing and storing everything in the “cloud”), edge computing processes data at or near the point of generation. By doing so, we’re able to reduce latency, conserve network bandwidth, increase responsiveness, and improve the user experience for real-time applications.

Why is Edge Computing Important?

As mentioned above, edge computing provides benefits in areas such as latency, bandwidth, and responsiveness. In addition to those, edge computing can enhance the security posture of a system, increase reliability, and, in some cases, even increase the scalability of a system. We’ll take a look at some of these below:

Reduced Latency

One of the biggest draws of edge computing is reduced latency. By processing data closer to where it is generated, applications avoid the round trip to a distant data center and can respond much faster.

The increased responsiveness is critical for use cases like autonomous vehicles, industrial automation, and healthcare.

- Autonomous Vehicles: processing sensor data immediately is essential in guaranteeing safety.

- Industrial Automation: real-time control systems rely on instant feedback, so quick processing is necessary.

- Healthcare: critical monitoring devices need to be able to process and analyze patient data rapidly.

Network Bandwidth Optimization

In the typical centralized computing model, data must be transferred to a central server for processing. For large volumes of data, this can strain the network and increase costs. With edge computing, it’s possible to filter data so that only what is required is sent, and to aggregate it to reduce redundancy, which can ultimately lower operational expenses.
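To make the filter-and-aggregate idea concrete, here is a minimal sketch of what an edge device might do before sending anything upstream. The function name `summarize_window`, the threshold, and the sample readings are all hypothetical and chosen only for illustration; a real deployment would pick its own summary fields and alerting rules.

```python
from statistics import mean


def summarize_window(readings, threshold):
    """Reduce a window of raw sensor readings to one compact payload."""
    if not readings:
        return None
    # Filter: keep only the readings that exceed the alert threshold.
    alerts = [r for r in readings if r > threshold]
    # Aggregate: replace the raw samples with summary statistics.
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
        "alerts": alerts,
    }


# Hypothetical temperature samples collected on the device.
window = [21.0, 21.1, 20.9, 35.2, 21.0, 21.2]
payload = summarize_window(window, threshold=30.0)
print(payload)
```

Instead of six raw samples, the device sends one small summary plus the single reading that actually needs attention.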

Increased Security

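Processing data locally can improve the security of a system because sensitive data stays on or near the device instead of traveling across the network, which reduces its exposure in transit. Keeping data local can also make regulatory compliance easier to achieve.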

Scalability

As the number of connected devices continues to grow, centralized computing becomes less and less optimal. Edge computing, on the other hand, offloads processing tasks from the central servers and spreads them across multiple edge nodes, yielding a distributed, load-balanced processing system.
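As a rough illustration of load balancing across edge nodes, the sketch below assigns each incoming task to whichever node currently has the fewest active tasks. The `EdgeNode` class, the node names, and the least-loaded policy are assumptions made for this example; real platforms use their own schedulers and may weigh factors like proximity and hardware capability.

```python
from dataclasses import dataclass


@dataclass
class EdgeNode:
    name: str
    active_tasks: int = 0


def pick_node(nodes):
    """Return the node with the fewest active tasks (least-loaded policy)."""
    return min(nodes, key=lambda n: n.active_tasks)


def dispatch(nodes, task_ids):
    """Assign each task to the currently least-loaded node."""
    assignments = {}
    for task_id in task_ids:
        node = pick_node(nodes)
        node.active_tasks += 1
        assignments[task_id] = node.name
    return assignments


# Three hypothetical edge nodes sharing six tasks.
nodes = [EdgeNode("edge-a"), EdgeNode("edge-b"), EdgeNode("edge-c")]
assignments = dispatch(nodes, range(6))
print(assignments)
```

With equal starting load, the six tasks end up spread evenly, two per node, rather than piling onto a single central server.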

Edge Computing Today

Edge computing is being adopted very rapidly across various industries like manufacturing, retail, healthcare, energy, and utilities.

- Manufacturing: for predictive maintenance and real-time quality control.

- Retail: enhancing customer experience by leveraging real-time analytics.

- Healthcare: in medical devices and for telemedicine applications.

- Energy and Utilities: monitoring and controlling distributed resources.

All of these use cases are aided by the various edge computing platforms that exist today. Offerings such as AWS IoT Greengrass, Microsoft Azure’s IoT Edge, and Google’s Cloud IoT Edge have pushed the envelope in bringing cloud services to the edge.

- AWS IoT Greengrass: extends the AWS service portfolio to edge devices.

- Microsoft Azure’s IoT Edge: deploys cloud workloads to run on edge devices.

- Google’s Cloud IoT Edge: extends Google’s data processing and machine learning capabilities to edge devices.

In addition to edge computing platforms, there is a range of edge hardware and tooling out there, including, but not limited to, NVIDIA’s Jetson devices (I’ve personally used these, and they’re fricking awesome) and Intel’s OpenVINO toolkit for optimizing and deploying inference workloads.

With a dedicated platform and the hardware to match, it’s possible to get edge applications up and running faster than ever. Having this number of devices does come with some drawbacks:

- Security: as the number of edge devices increases, so does the overall attack surface. This means more robust security measures are required.

- Deployment Complexity: deploying and managing a number of distributed devices is non-trivial.

- Interoperability: heterogeneous hardware and software platforms can lead to compatibility issues.

All in all, the edge computing space currently looks really good. It has an established set of hardware and software platforms, support from major companies, and is expected to keep improving as the need for edge processing increases. Plus, it doesn’t hurt that the global edge computing market was valued at $16.45 billion in 2023 and is expected to grow at a compound annual growth rate (CAGR) of 36.9% through 2030.
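To get a feel for what that growth rate implies, here is a quick back-of-the-envelope calculation. It assumes the 36.9% rate compounds once per year for the seven years from 2023 to 2030; the original forecast may define its base year or forecast window differently, so treat the result as a rough illustration rather than a quoted figure.

```python
def project_market(base_value, cagr, years):
    """Compound a starting value at a fixed annual growth rate."""
    return base_value * (1 + cagr) ** years


# Assumption: seven compounding periods, 2023 through 2030.
projected = project_market(16.45, 0.369, 2030 - 2023)
print(f"Implied 2030 market size: ${projected:.1f}B")
```

Under that assumption, the market would be roughly nine times its 2023 size by 2030, or on the order of $148 billion.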

The Future?

In the last two years, artificial intelligence and machine learning (AI/ML) have taken center stage, as highlighted by the fact that every major company is either incorporating or releasing Generative AI (GenAI) products. With this being the case, Edge Compute for AI (Edge AI) will enable devices to perform tasks like image recognition, classification, natural language processing, and predictive analytics without having to rely on cloud-based models.

- On-Device AI: smartphones and IoT devices will begin to run AI/ML models locally (this can be observed in the new iOS updates with Apple Intelligence).

- Edge AI Processors: specialized processors geared towards AI workloads will start to show up (NVIDIA is already doing this).

Edge computing will prove to be very useful in areas like autonomous vehicles, smart cities, and augmented/virtual reality (AR/VR) by reducing latency and allowing for quick data processing. It will do the same for real-time systems like the ones present in industrial solutions.

While the upside to edge computing is considerable, there are real downsides, namely security and privacy, interoperability, and complexity. As the market matures, these challenges should begin to subside. This new computing paradigm is poised to create new business and employment opportunities.

That’s all I have for you today. Hopefully you enjoyed the read. If you liked the content and would like to keep receiving it, please consider subscribing; it goes a long way.

Lastly, please comment and share. I’d love to hear everyone’s thoughts.

Thanks,

Ali